0. Deploying the lab environment

# Download the code and move into the working directory

$ cd aews/2w

# Pull the new 2w material (only the 2w directory is added to the existing clone)

aews git:(main*) $ git pull origin main

remote: Enumerating objects: 8, done.

remote: Counting objects: 100% (8/8), done.

remote: Compressing objects: 100% (7/7), done.

remote: Total 7 (delta 0), reused 7 (delta 0), pack-reused 0 (from 0)

Unpacking objects: 100% (7/7), 3.51 KiB | 359.00 KiB/s, done.

From https://github.com/gasida/aews

* branch main -> FETCH_HEAD

c95a0bb..1ad820c main -> origin/main

Updating c95a0bb..1ad820c

Fast-forward

2w/eks.tf | 169 ++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++

2w/outputs.tf | 4 +++

2w/var.tf | 72 ++++++++++++++++++++++++++++++++++++++

2w/vpc.tf | 50 +++++++++++++++++++++++++++

4 files changed, 295 insertions(+)

create mode 100644 2w/eks.tf

create mode 100644 2w/outputs.tf

create mode 100644 2w/var.tf

create mode 100644 2w/vpc.tf

# Set variables

aews git:(main*) $ export TF_VAR_KeyName=test-key

aews git:(main*) $ export TF_VAR_ssh_access_cidr=$(curl -s ipinfo.io/ip)/32

aews git:(main*) $ echo $TF_VAR_KeyName $TF_VAR_ssh_access_cidr

test-key 1x.x.x.x/32
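If `curl -s ipinfo.io/ip` fails (for example, because of a network problem), `TF_VAR_ssh_access_cidr` silently becomes just `/32` and the security group rule will not match your IP. Below is a minimal sketch of an optional guard before `terraform apply`, assuming a POSIX shell; `is_host_cidr` is a hypothetical helper, not part of Terraform.

```shell
# Hypothetical helper: succeed only for a single-host IPv4 CIDR such as 203.0.113.10/32
is_host_cidr() {
  case $1 in
    */32) addr=${1%/32} ;;
    *) return 1 ;;
  esac
  # Split the address on dots; expect exactly four octets
  old_ifs=$IFS
  IFS=.
  set -- $addr
  IFS=$old_ifs
  [ $# -eq 4 ] || return 1
  for octet in "$@"; do
    case $octet in
      ''|*[!0-9]*) return 1 ;;   # empty or non-numeric octet
    esac
    [ "$octet" -le 255 ] || return 1
  done
  return 0
}

# Example:
# is_host_cidr "$TF_VAR_ssh_access_cidr" || echo "check TF_VAR_ssh_access_cidr: $TF_VAR_ssh_access_cidr" >&2
```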

# Deploy: takes about 12 minutes

2w git:(main*) $ terraform init

Initializing the backend...

Initializing modules...

Downloading registry.terraform.io/terraform-aws-modules/eks/aws 21.15.1 for eks...

- eks in .terraform/modules/eks

- eks.eks_managed_node_group in .terraform/modules/eks/modules/eks-managed-node-group

- eks.eks_managed_node_group.user_data in .terraform/modules/eks/modules/_user_data

- eks.fargate_profile in .terraform/modules/eks/modules/fargate-profile

Downloading registry.terraform.io/terraform-aws-modules/kms/aws 4.0.0 for eks.kms...

- eks.kms in .terraform/modules/eks.kms

- eks.self_managed_node_group in .terraform/modules/eks/modules/self-managed-node-group

- eks.self_managed_node_group.user_data in .terraform/modules/eks/modules/_user_data

Downloading registry.terraform.io/terraform-aws-modules/vpc/aws 6.6.0 for vpc...

- vpc in .terraform/modules/vpc

Initializing provider plugins...

- Finding hashicorp/aws versions matching ">= 6.0.0, >= 6.28.0"...

- Finding hashicorp/tls versions matching ">= 4.0.0"...

- Finding hashicorp/time versions matching ">= 0.9.0"...

- Finding hashicorp/cloudinit versions matching ">= 2.0.0"...

- Finding hashicorp/null versions matching ">= 3.0.0"...

- Installing hashicorp/aws v6.37.0...

- Installed hashicorp/aws v6.37.0 (signed by HashiCorp)

- Installing hashicorp/tls v4.2.1...

- Installed hashicorp/tls v4.2.1 (signed by HashiCorp)

- Installing hashicorp/time v0.13.1...

- Installed hashicorp/time v0.13.1 (signed by HashiCorp)

- Installing hashicorp/cloudinit v2.3.7...

- Installed hashicorp/cloudinit v2.3.7 (signed by HashiCorp)

- Installing hashicorp/null v3.2.4...

- Installed hashicorp/null v3.2.4 (signed by HashiCorp)

Terraform has created a lock file .terraform.lock.hcl to record the provider

selections it made above. Include this file in your version control repository

so that Terraform can guarantee to make the same selections by default when

you run "terraform init" in the future.

Terraform has been successfully initialized!

You may now begin working with Terraform. Try running "terraform plan" to see

any changes that are required for your infrastructure. All Terraform commands

should now work.

If you ever set or change modules or backend configuration for Terraform,

rerun this command to reinitialize your working directory. If you forget, other

commands will detect it and remind you to do so if necessary.

2w git:(main*) $ nohup sh -c "terraform apply -auto-approve" > create.log 2>&1 &

[1] 13492

2w git:(main*) $ tail -f create.log

module.eks.aws_eks_addon.this["coredns"]: Still creating... [00m10s elapsed]

module.eks.aws_eks_addon.this["coredns"]: Creation complete after 15s [id=myeks:coredns]

module.eks.aws_eks_addon.this["kube-proxy"]: Still creating... [00m20s elapsed]

module.eks.aws_eks_addon.this["kube-proxy"]: Creation complete after 25s [id=myeks:kube-proxy]

Apply complete! Resources: 59 added, 0 changed, 0 destroyed.

Outputs:

configure_kubectl = "aws eks --region ap-northeast-2 update-kubeconfig --name myeks"
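Instead of watching `tail -f create.log` by hand, you could poll the log written by the background `nohup` job. This is a sketch that assumes Terraform writes `Apply complete!` on success (as in the output above) and a line containing `Error:` on failure; `wait_for_apply` is a hypothetical helper, and the 10-second poll interval and 20-minute default timeout are arbitrary choices.

```shell
# Hypothetical helper: poll a Terraform log until it reports success or failure,
# or until the timeout (seconds) elapses. Returns 0 = success, 1 = error, 2 = timeout.
wait_for_apply() {
  log=$1
  timeout=${2:-1200}   # default 20 minutes; the lab apply takes about 12
  waited=0
  while [ "$waited" -lt "$timeout" ]; do
    if grep -q 'Apply complete!' "$log" 2>/dev/null; then
      echo "apply finished"
      return 0
    fi
    if grep -q 'Error:' "$log" 2>/dev/null; then
      echo "apply failed; see $log" >&2
      return 1
    fi
    sleep 10
    waited=$((waited + 10))
  done
  echo "timed out after ${timeout}s" >&2
  return 2
}

# Usage: wait_for_apply create.log && terraform output -raw configure_kubectl
```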

# Configure credentials (kubeconfig)

2w git:(main*) $ terraform output -raw configure_kubectl

aws eks --region ap-northeast-2 update-kubeconfig --name myeks

2w git:(main*) $ aws eks --region ap-northeast-2 update-kubeconfig --name myeks

Added new context arn:aws:eks:ap-northeast-2:123123123:cluster/myeks to /Users/.kube/config

2w git:(main*) $ kubectl config rename-context $(kubectl config current-context) myeks

Context "arn:aws:eks:ap-northeast-2:123123123:cluster/myeks" renamed to "myeks".

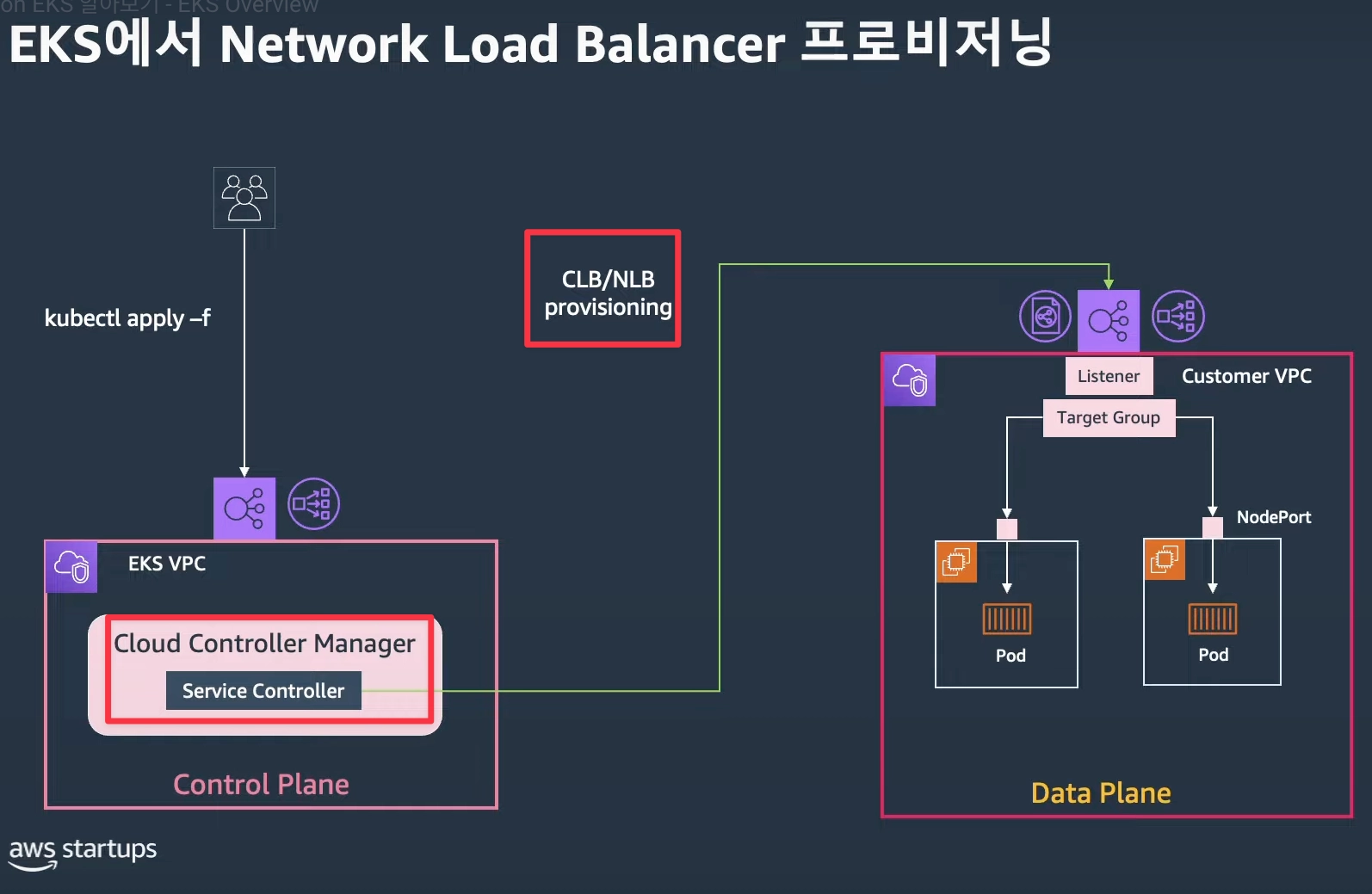

Checking basic information after deployment

Checking the EKS management console

- Overview: basic information such as the API server endpoint and the OpenID Connect (OIDC) provider URL

- Compute: click Node groups → check the details ⇒ Kubernetes label tier = primary

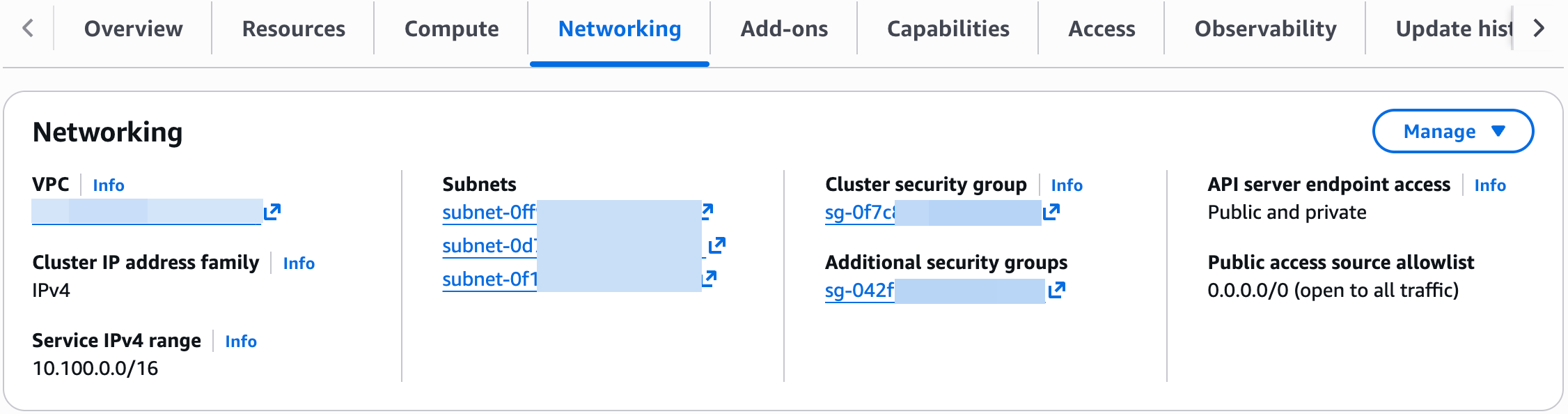

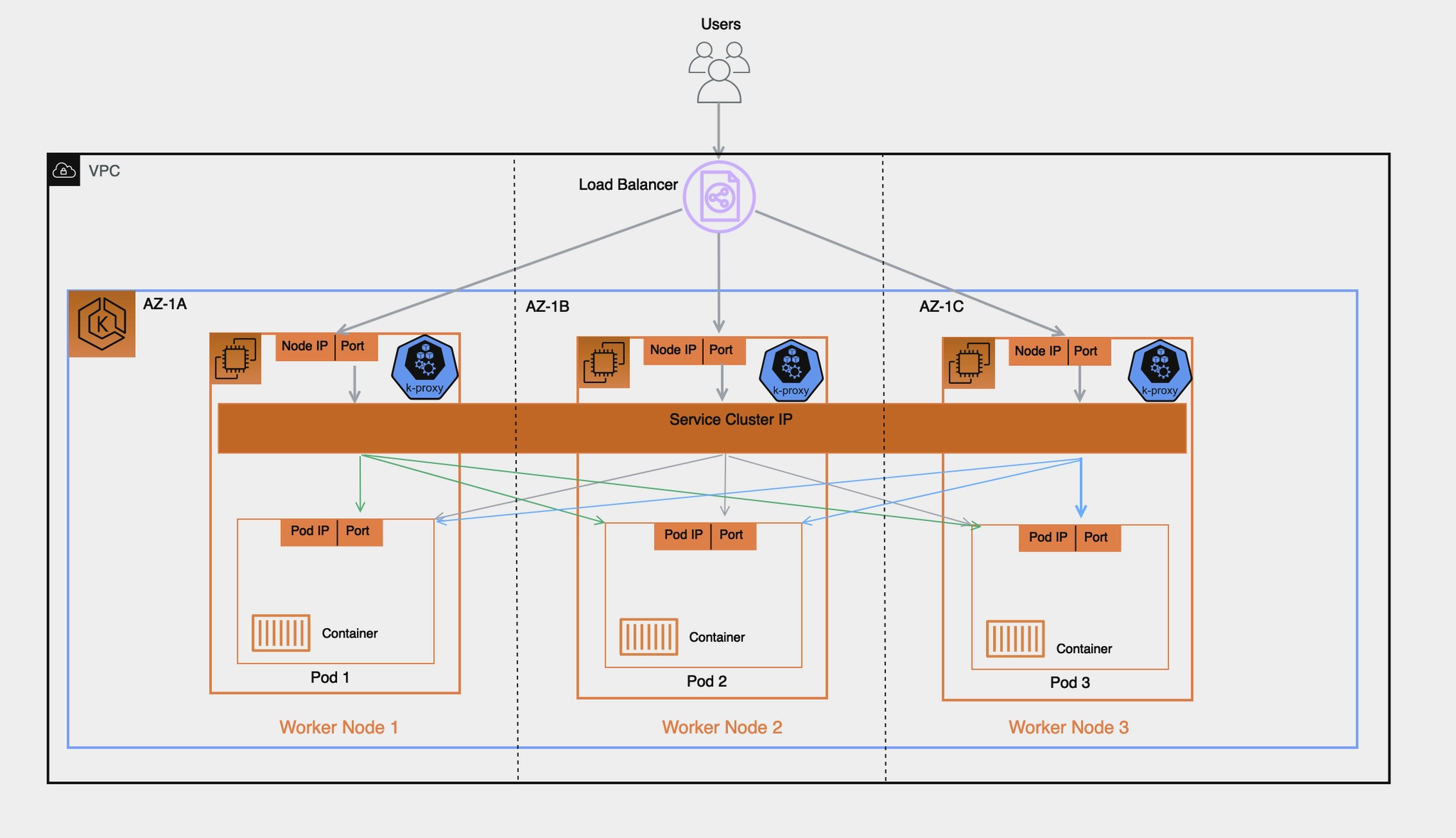

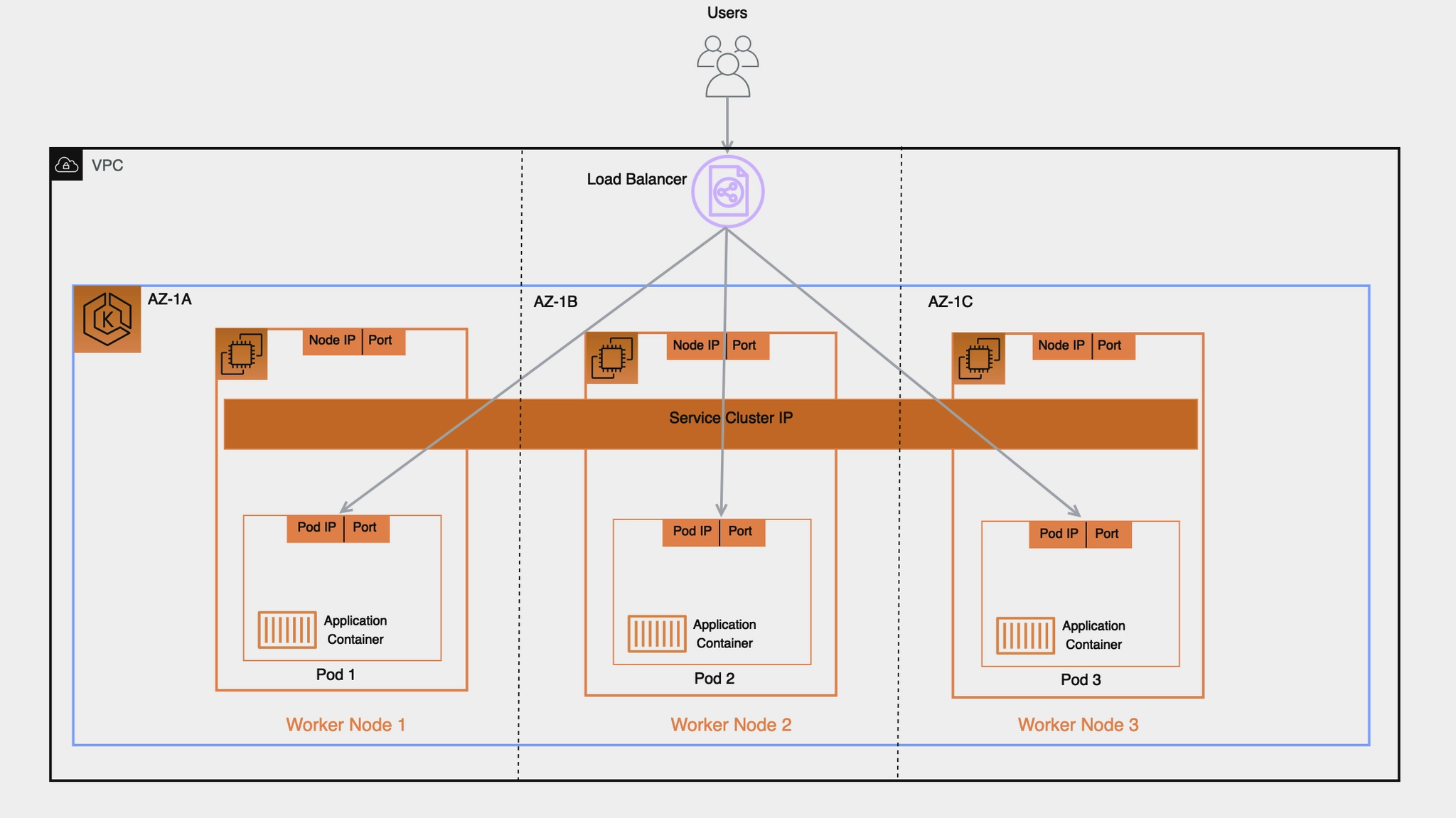

- Networking 네트워킹 : 서비스 IPv4 범위(10.100.0.0/16), 서브넷, access(public and private)..

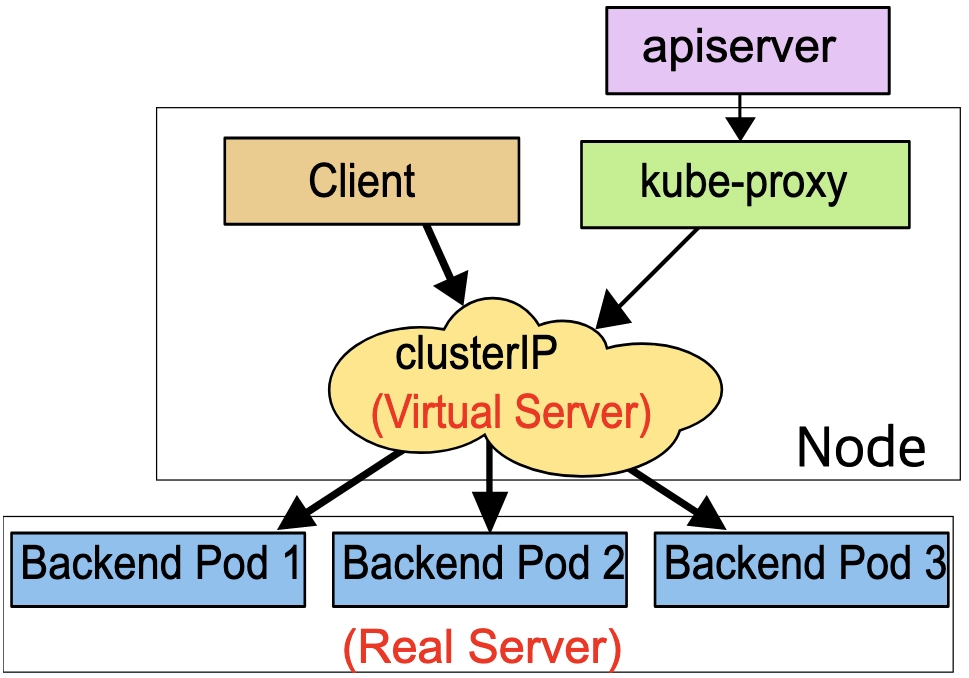

- Service IPv4 range : Kubernetes Service에 할당되는 “가상 IP 대역 (ClusterIP 대역)"

- Service란? Pod들을 묶어서 고정된 접속 주소를 제공

- Public access source allowlist : EKS API 서버에 접근할 IP

( API server endpoint access가 public으로 되어 있어서 API 서버로 외부 접근 가능)

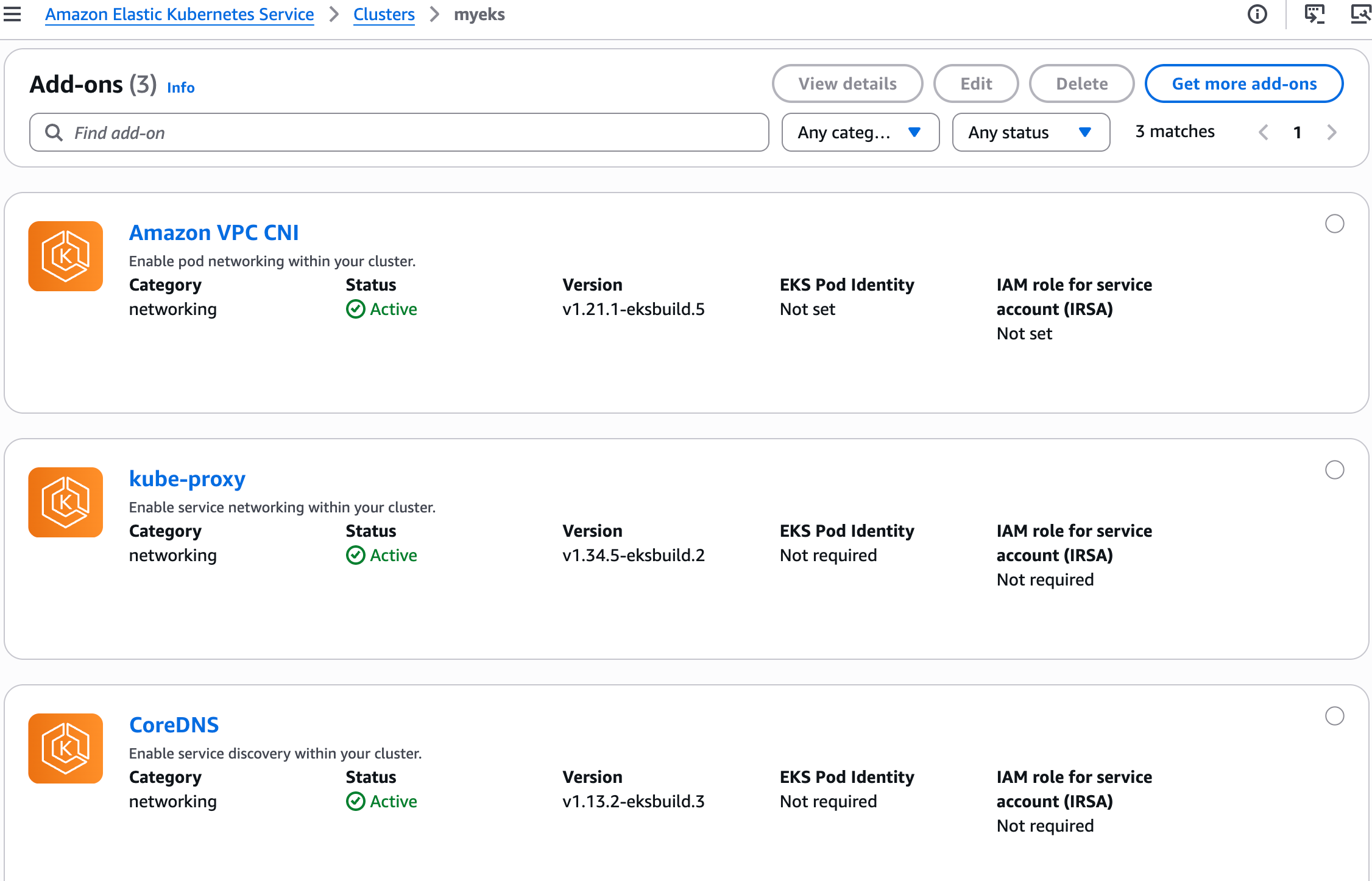

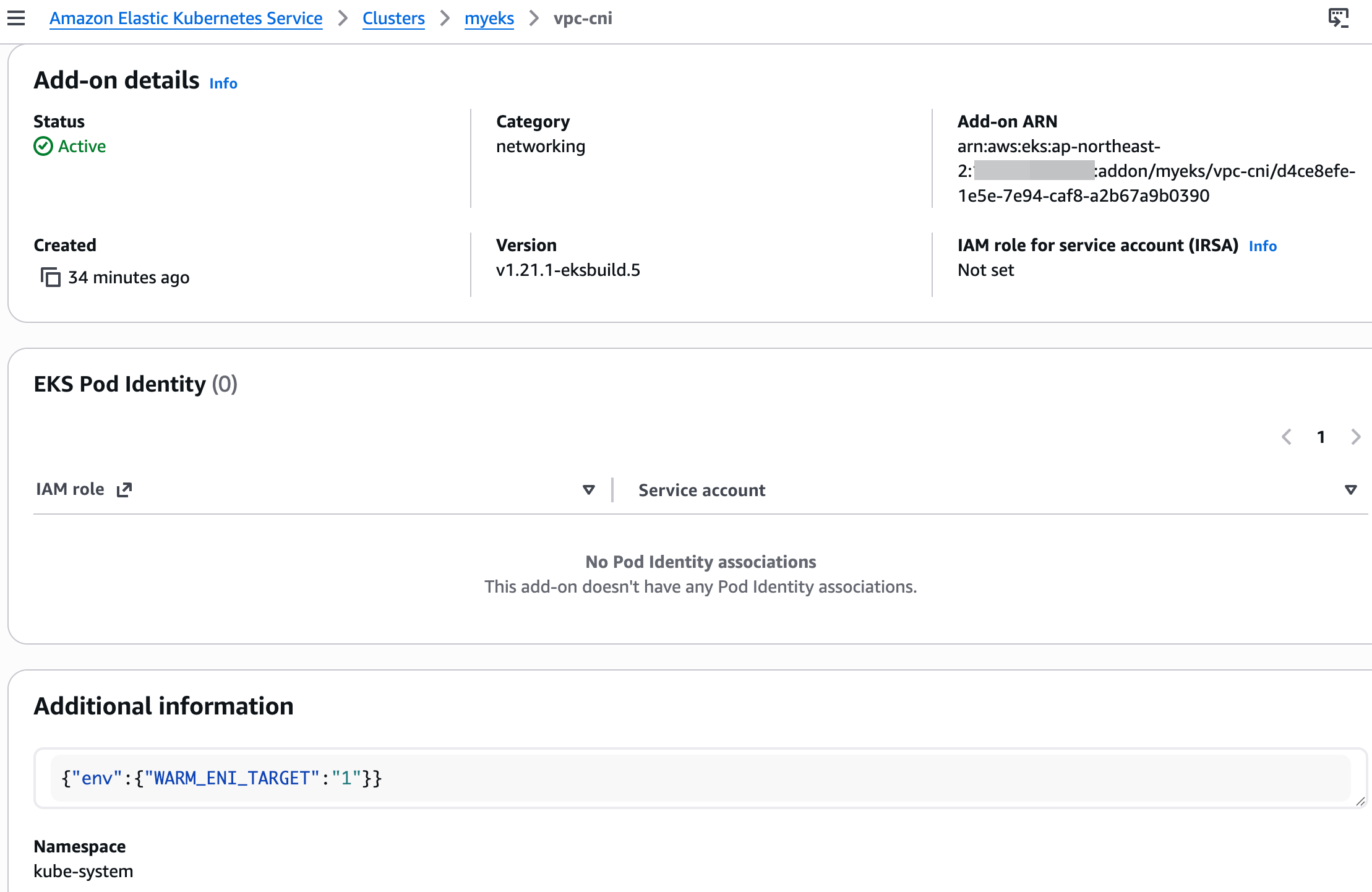

- Add-ons: click VPC CNI to check additional information

- Access: IAM access entries (check the username of the credentials used for the installation)
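The Service IPv4 range noted above (10.100.0.0/16, i.e. 65,536 addresses) is where ClusterIPs come from, while Pods and nodes use VPC addresses. As a quick offline check, here is a minimal POSIX shell sketch that tests whether an address falls inside a CIDR; `ip_to_int` and `ip_in_cidr` are hypothetical helpers, not part of any AWS or Kubernetes tool.

```shell
# Hypothetical helper: dotted-quad IPv4 -> 32-bit integer
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Succeed if IP ($1) is inside CIDR ($2), e.g. ip_in_cidr 10.100.0.10 10.100.0.0/16
ip_in_cidr() {
  net=${2%/*}
  bits=${2#*/}
  ip_int=$(ip_to_int "$1")
  net_int=$(ip_to_int "$net")
  # Netmask with the top $bits bits set, truncated to 32 bits
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( ip_int & mask )) -eq $(( net_int & mask )) ]
}
```

For example, a node IP such as 192.168.1.210 is not in the Service range, while 10.100.0.10 is.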

Checking basic EKS information

# Check node labels

2w git:(main*) $ kubectl get node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

ip-192-168-1-210.ap-northeast-2.compute.internal Ready <none> 39m v1.34.4-eks-f69f56f beta.kubernetes.io/arch=amd64,beta.kubernetes.io/instance-type=t3.medium,beta.kubernetes.io/os=linux,eks.amazonaws.com/capacityType=ON_DEMAND,eks.amazonaws.com/nodegroup-image=ami-0041be04b53631868,eks.amazonaws.com/nodegroup=myeks-1nd-node-group,eks.amazonaws.com/sourceLaunchTemplateId=lt-08e395f933cfc84e6,eks.amazonaws.com/sourceLaunchTemplateVersion=1,failure-domain.beta.kubernetes.io/region=ap-northeast-2,failure-domain.beta.kubernetes.io/zone=ap-northeast-2a,k8s.io/cloud-provider-aws=5553ae84a0d29114870f67bbabd07d44,kubernetes.io/arch=amd64,kubernetes.io/hostname=ip-192-168-1-210.ap-northeast-2.compute.internal,kubernetes.io/os=linux,node.kubernetes.io/instance-type=t3.medium,tier=primary,topology.k8s.aws/zone-id=apne2-az1,topology.kubernetes.io/region=ap-northeast-2,topology.kubernetes.io/zone=ap-northeast-2a

ip-192-168-11-16.ap-northeast-2.compute.internal Ready <none> 39m v1.34.4-eks-f69f56f beta.kubernetes.io/arch=amd64,beta.kubernetes.io/instance-type=t3.medium,beta.kubernetes.io/os=linux,eks.amazonaws.com/capacityType=ON_DEMAND,eks.amazonaws.com/nodegroup-image=ami-0041be04b53631868,eks.amazonaws.com/nodegroup=myeks-1nd-node-group,eks.amazonaws.com/sourceLaunchTemplateId=lt-08e395f933cfc84e6,eks.amazonaws.com/sourceLaunchTemplateVersion=1,failure-domain.beta.kubernetes.io/region=ap-northeast-2,failure-domain.beta.kubernetes.io/zone=ap-northeast-2c,k8s.io/cloud-provider-aws=5553ae84a0d29114870f67bbabd07d44,kubernetes.io/arch=amd64,kubernetes.io/hostname=ip-192-168-11-16.ap-northeast-2.compute.internal,kubernetes.io/os=linux,node.kubernetes.io/instance-type=t3.medium,tier=primary,topology.k8s.aws/zone-id=apne2-az3,topology.kubernetes.io/region=ap-northeast-2,topology.kubernetes.io/zone=ap-northeast-2c

ip-192-168-6-183.ap-northeast-2.compute.internal Ready <none> 39m v1.34.4-eks-f69f56f beta.kubernetes.io/arch=amd64,beta.kubernetes.io/instance-type=t3.medium,beta.kubernetes.io/os=linux,eks.amazonaws.com/capacityType=ON_DEMAND,eks.amazonaws.com/nodegroup-image=ami-0041be04b53631868,eks.amazonaws.com/nodegroup=myeks-1nd-node-group,eks.amazonaws.com/sourceLaunchTemplateId=lt-08e395f933cfc84e6,eks.amazonaws.com/sourceLaunchTemplateVersion=1,failure-domain.beta.kubernetes.io/region=ap-northeast-2,failure-domain.beta.kubernetes.io/zone=ap-northeast-2b,k8s.io/cloud-provider-aws=5553ae84a0d29114870f67bbabd07d44,kubernetes.io/arch=amd64,kubernetes.io/hostname=ip-192-168-6-183.ap-northeast-2.compute.internal,kubernetes.io/os=linux,node.kubernetes.io/instance-type=t3.medium,tier=primary,topology.k8s.aws/zone-id=apne2-az2,topology.kubernetes.io/region=ap-northeast-2,topology.kubernetes.io/zone=ap-northeast-2b


2w git:(main*) $ kubectl get node -l tier=primary

NAME STATUS ROLES AGE VERSION

ip-192-168-1-210.ap-northeast-2.compute.internal Ready <none> 39m v1.34.4-eks-f69f56f

ip-192-168-11-16.ap-northeast-2.compute.internal Ready <none> 39m v1.34.4-eks-f69f56f

ip-192-168-6-183.ap-northeast-2.compute.internal Ready <none> 39m v1.34.4-eks-f69f56f

# Check Pod information

2w git:(main*) $ kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system aws-node-6922w 2/2 Running 0 41m

kube-system aws-node-6ttt6 2/2 Running 0 41m

kube-system aws-node-r77xb 2/2 Running 0 41m

kube-system coredns-d487b6fcb-dkvpq 1/1 Running 0 40m

kube-system coredns-d487b6fcb-ftjqs 1/1 Running 0 40m

kube-system kube-proxy-54b6l 1/1 Running 0 40m

kube-system kube-proxy-7kgjr 1/1 Running 0 40m

kube-system kube-proxy-w2gzn 1/1 Running 0 40m

2w git:(main*) $ kubectl get pdb -n kube-system

NAME MIN AVAILABLE MAX UNAVAILABLE ALLOWED DISRUPTIONS AGE

coredns N/A 1 1 40m

# Check the managed node group

2w git:(main*) $ aws eks describe-nodegroup --cluster-name myeks --nodegroup-name myeks-1nd-node-group | jq

{

"nodegroup": {

"nodegroupName": "myeks-1nd-node-group",

"nodegroupArn": "arn:aws:eks:ap-northeast-2:143649248460:nodegroup/myeks/myeks-1nd-node-group/dcce8efe-6362-fde5-2cf2-4c2c4ee74afa",

"clusterName": "myeks",

"version": "1.34",

"releaseVersion": "1.34.4-20260317",

"createdAt": "2026-03-24T12:56:41.571000+09:00",

"modifiedAt": "2026-03-24T13:38:04.663000+09:00",

"status": "ACTIVE",

"capacityType": "ON_DEMAND",

"scalingConfig": {

"minSize": 2,

"maxSize": 5,

"desiredSize": 3

},

"instanceTypes": [

"t3.medium"

],

"subnets": [

"subnet-0ff9ed04cb8b082ec",

"subnet-0d7752b137e08028c",

"subnet-0f16f6746d3a7c4be"

],

"amiType": "AL2023_x86_64_STANDARD",

"nodeRole": "arn:aws:iam::143649248460:role/myeks-1nd-node-group-eks-node-group-20260324034658874100000006",

"labels": {

"tier": "primary"

},

"resources": {

"autoScalingGroups": [

{

"name": "eks-myeks-1nd-node-group-dcce8efe-6362-fde5-2cf2-4c2c4ee74afa"

}

]

},

"health": {

"issues": []

},

"updateConfig": {

"maxUnavailablePercentage": 33

},

"launchTemplate": {

"name": "primary-20260324035632468600000009",

"version": "1",

"id": "lt-08e395f933cfc84e6"

},

"tags": {

"Terraform": "true",

"Environment": "cloudneta-lab",

"Name": "myeks-1nd-node-group"

}

}

}

# Check EKS add-ons

1. Introduction to the AWS VPC CNI

- Kubernetes CNI: the Container Network Interface configures the Kubernetes network environment, and many CNI plugins are available.

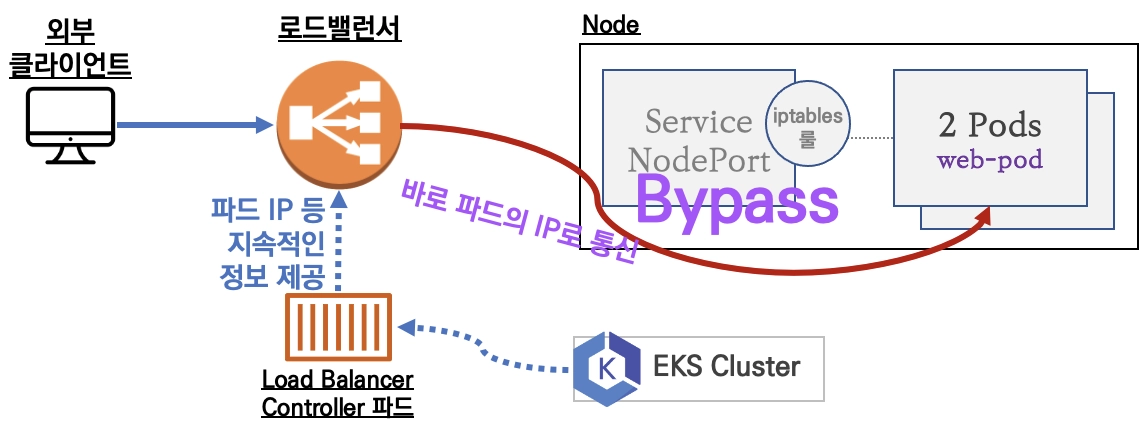

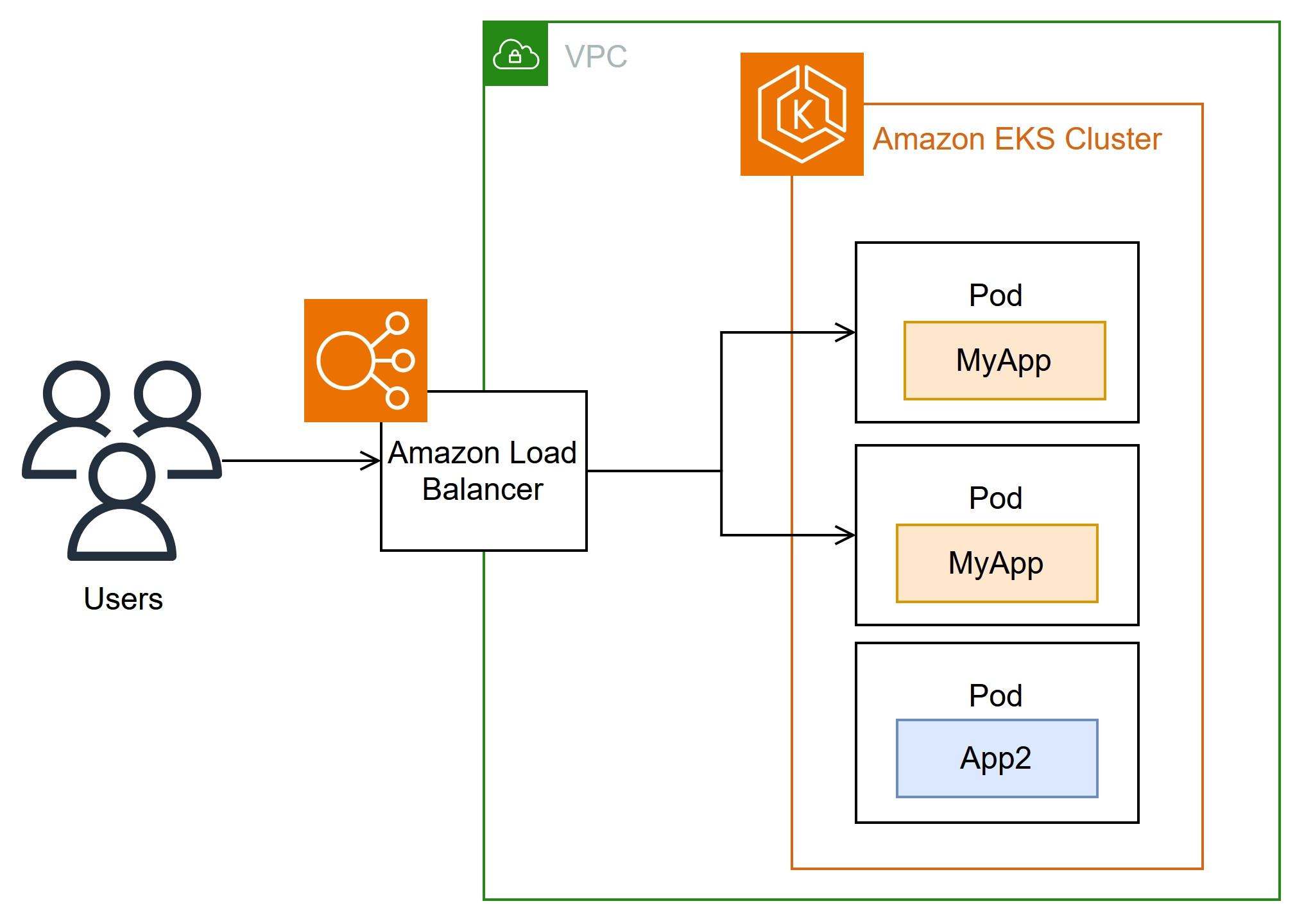

- AWS VPC CNI: assigns Pod IPs. Because Pods and (worker) nodes share the same IP network range, they can communicate directly (Docs, GitHub, Proposal).

- Amazon Virtual Private Cloud (VPC) CNI add-on

- The VPC CNI provided by AWS is the default networking add-on for EKS clusters and is installed by default when an EKS cluster is provisioned. The VPC CNI runs on Kubernetes worker nodes. The add-on consists of the CNI binary and the IP address management (ipamd) plugin. The CNI assigns IP addresses from the VPC network to Pods. ipamd manages AWS Elastic Network Interfaces (ENIs) for each Kubernetes node and maintains a warm pool of IPs. The VPC CNI provides configuration options for pre-allocating ENIs and IP addresses for fast Pod startup. For recommended plugin management best practices, see Amazon VPC CNI.

- Integration with the VPC: VPC Flow Logs, VPC routing policies, and security groups can all be used.

- For Amazon EKS, it is recommended to specify subnets in at least two Availability Zones when creating a cluster. The Amazon VPC CNI assigns Pods IP addresses from the node subnets, so check how many IP addresses are available in those subnets. Review the VPC and subnet recommendations before deploying an EKS cluster.

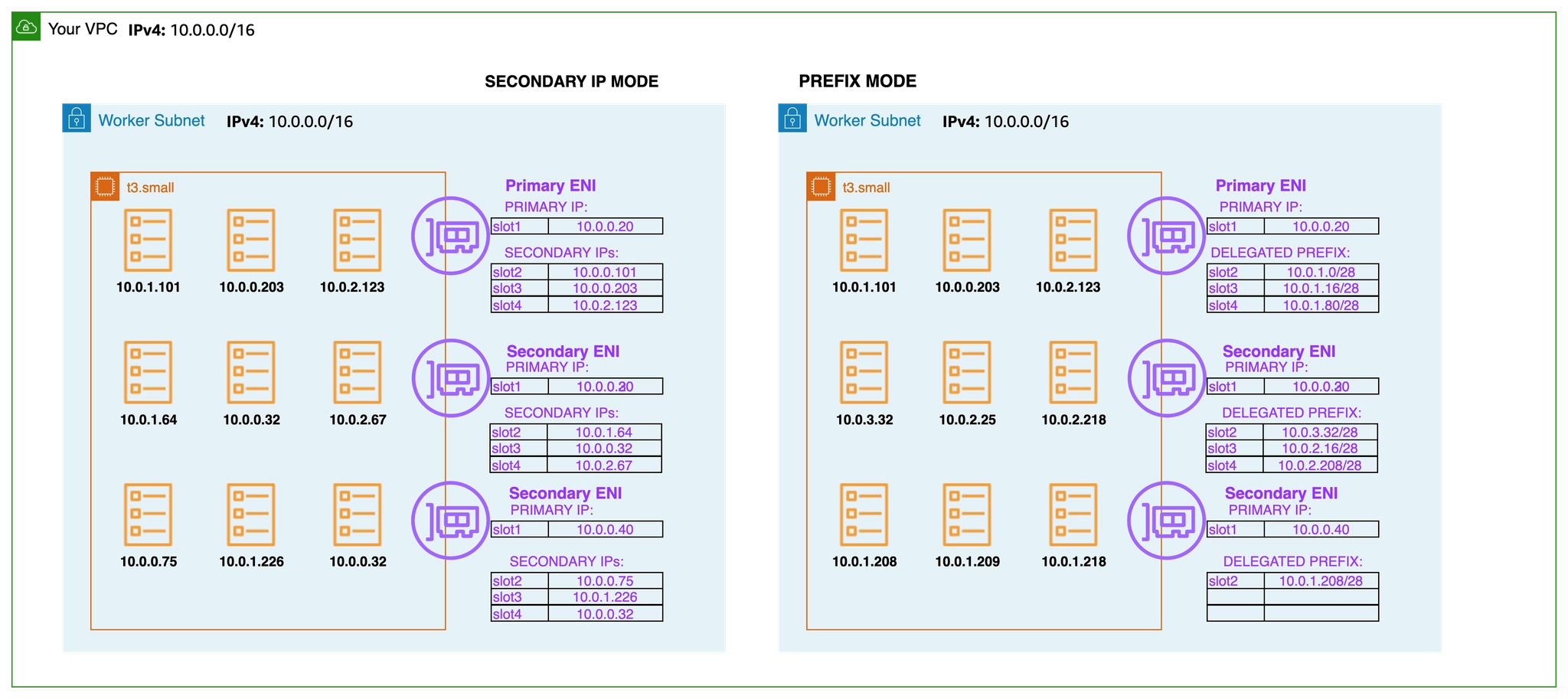

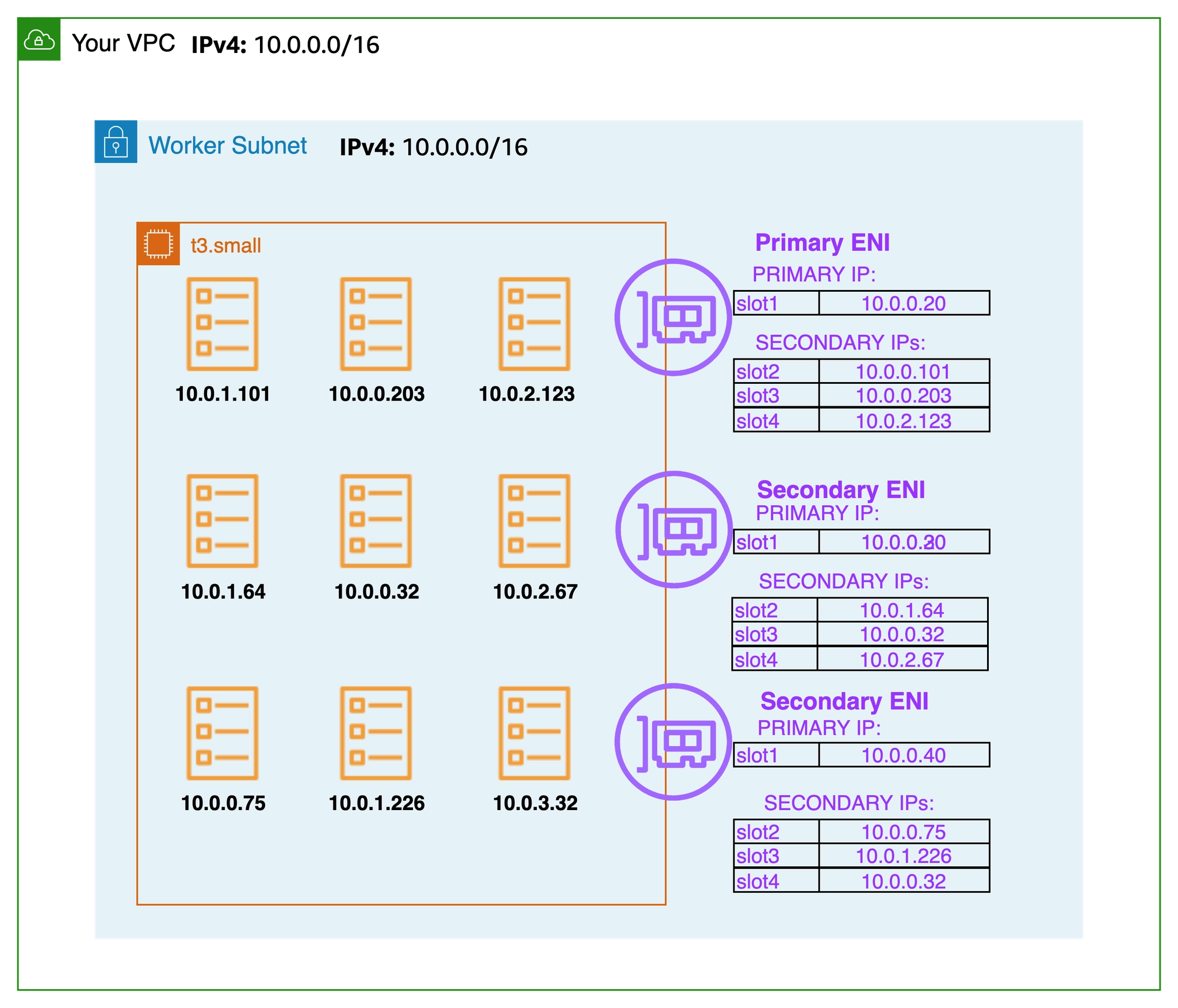
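Because Pod IPs come straight out of the node subnets, subnet size directly limits how many nodes and Pods a subnet can hold. A sketch of the arithmetic, assuming the standard AWS rule that 5 addresses in every subnet are reserved (the first four and the last one); `subnet_usable_ips` is a hypothetical name.

```shell
# Usable IPv4 addresses in an AWS subnet with the given prefix length (/16 to /28).
# AWS reserves 5 addresses per subnet: network, VPC router, DNS, future use, broadcast.
subnet_usable_ips() {
  prefix=$1
  total=$(( 1 << (32 - prefix) ))
  echo $(( total - 5 ))
}

# Example: subnet_usable_ips 24   # 251 addresses shared by nodes and Pods
```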

- 보조 IP 모드(기본값) secondary IP mode : Amazon VPC CNI는 노드의 기본 ENIs에 연결된 서브넷에서 ENI 및 보조 IP 주소의 웜 풀을 할당합니다. VPC CNI의이 모드를 보조 IP 모드라고 합니다. IP 주소 수와 따라서 **포드 수(Pod 밀도)**는 인스턴스 유형에 의해 정의된 ENIs 수와 ENI당 IP 주소(한도)로 정의됩니다. 보조 모드는 기본값이며 인스턴스 유형이 작은 소규모 클러스터에 적합합니다.

- 접두사 모드 (포드 밀도 필요 시) prefix mode : 포드 밀도 문제가 발생하는 경우 ENIs 접두사 모드를 사용하는 것이 좋습니다.

- 파드 보안 그룹 security groups for Pods : Amazon VPC CNI는 기본적으로 AWS VPC와 통합되며 사용자는 Kubernetes 클러스터 구축을 위한 기존 AWS VPC 네트워킹 및 보안 모범 사례를 적용할 수 있습니다. 여기에는 네트워크 트래픽 격리를 위해 VPC 흐름 로그, VPC 라우팅 정책 및 보안 그룹을 사용하는 기능이 포함됩니다. 기본적으로 Amazon VPC CNI는 노드의 기본 ENI와 연결된 보안 그룹을 포드에 적용합니다. 포드에 다른 네트워크 규칙을 할당하려는 경우 포드에 대한 보안 그룹을 활성화하는 것이 좋습니다.

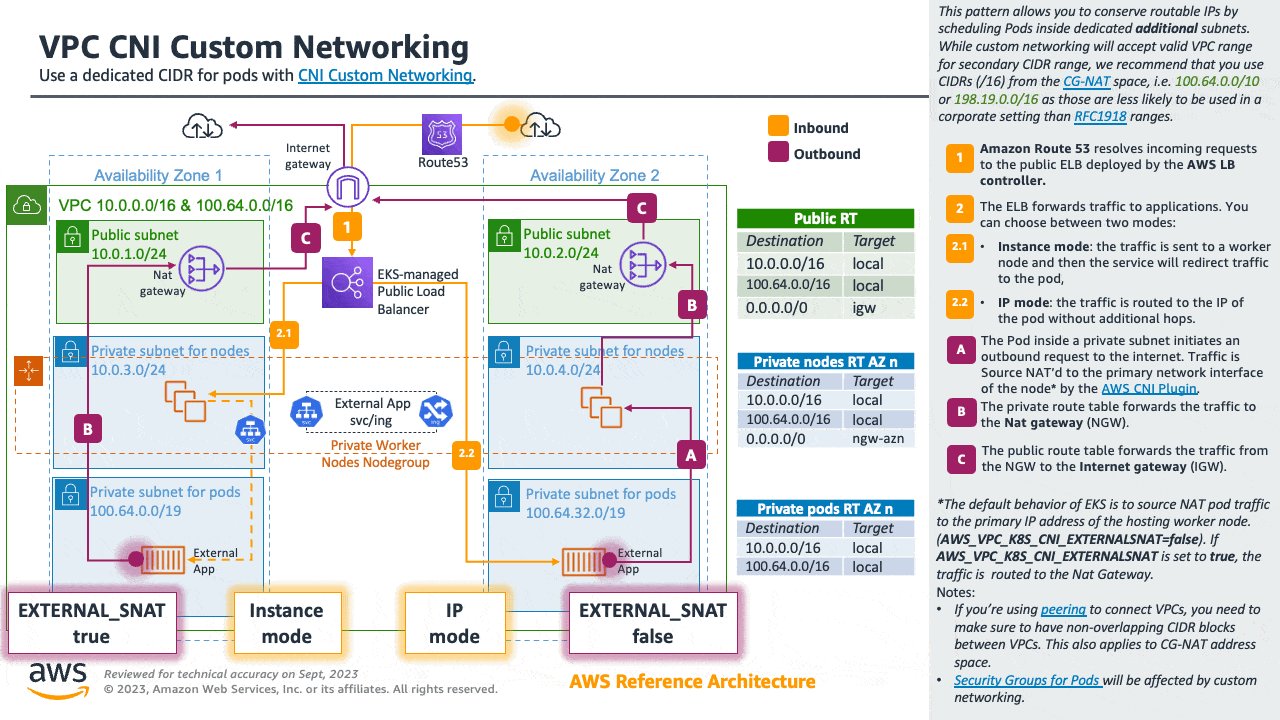

- 사용자 지정 네트워킹(보조 CIDR 할당 사용) custom networking : 기본적으로 VPC CNI는 노드의 기본 ENI에 할당된 서브넷의 포드에 IP 주소를 할당합니다. 수천 개의 워크로드가 있는 대규모 클러스터를 실행할 때 IPv4 주소가 부족한 것이 일반적입니다. AWS VPC를 사용하면 IPv4 CIDRs 블록의 고갈을 해결할 보조 CIDR을 할당하여 사용 가능한 IPs를 확장할 수 있습니다. AWS VPC CNI를 사용하면 포드에 대해 다른 서브넷 CIDR 범위를 사용할 수 있습니다. VPC CNI의이 기능을 사용자 지정 네트워킹이라고 합니다. 사용자 지정 네트워킹을 사용하여 EKS에서 100.64.0.0/10 및 198.19.0.0/16 CIDRs(CG-NAT)을 사용하는 것이 좋습니다. 이렇게 하면 포드가 더 이상 VPC의 RFC1918 IP 주소를 사용하지 않는 환경을 효과적으로 생성할 수 있습니다.
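To make Pod density in secondary IP mode versus prefix mode concrete, here is a sketch of the commonly cited formulas: secondary IP mode allows ENIs × (IPv4 addresses per ENI − 1) + 2 Pods, and prefix mode places a /28 prefix (16 addresses) in each secondary IP slot. The instance limits used in the test (t3.medium: 3 ENIs, 6 IPv4 addresses per ENI; m5.large: 3 ENIs, 10 per ENI) are published EC2 values; the function names are hypothetical. In practice EKS guidance caps max Pods (for example at 110 on smaller instance types) instead of using the raw prefix-mode number.

```shell
# Secondary IP mode: each ENI's primary IP is not handed to Pods;
# +2 accounts for host-network Pods (e.g. aws-node, kube-proxy) that use the node IP.
max_pods_secondary() {
  enis=$1
  ips_per_eni=$2
  echo $(( enis * (ips_per_eni - 1) + 2 ))
}

# Prefix mode: every secondary IP slot holds a /28 prefix (16 addresses) instead of one IP.
max_pods_prefix() {
  enis=$1
  ips_per_eni=$2
  echo $(( enis * (ips_per_eni - 1) * 16 + 2 ))
}

# Example: max_pods_secondary 3 6   # t3.medium in secondary IP mode
```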

Amazon VPC CNI 플러그인 - Docs , Kor

소개

- Amazon EKS는 VPC CNI라고도 하는 Amazon VPC 컨테이너 네트워크 인터페이스 플러그인을 통해 클러스터 네트워킹을 구현합니다.

- CNI 플러그인을 사용하면 Kubernetes 포드가 VPC 네트워크에서와 동일한 IP 주소를 가질 수 있습니다.

- 특히 포드 내의 모든 컨테이너는 네트워크 네임스페이스를 공유하며 로컬 포트를 사용하여 서로 통신할 수 있습니다.

- (참고) 파드간 통신 시 일반적으로 K8S CNI는 오버레이(VXLAN, IP-IP 등) 통신을 하고, AWS VPC CNI는 동일 대역으로 직접 통신을 한다.

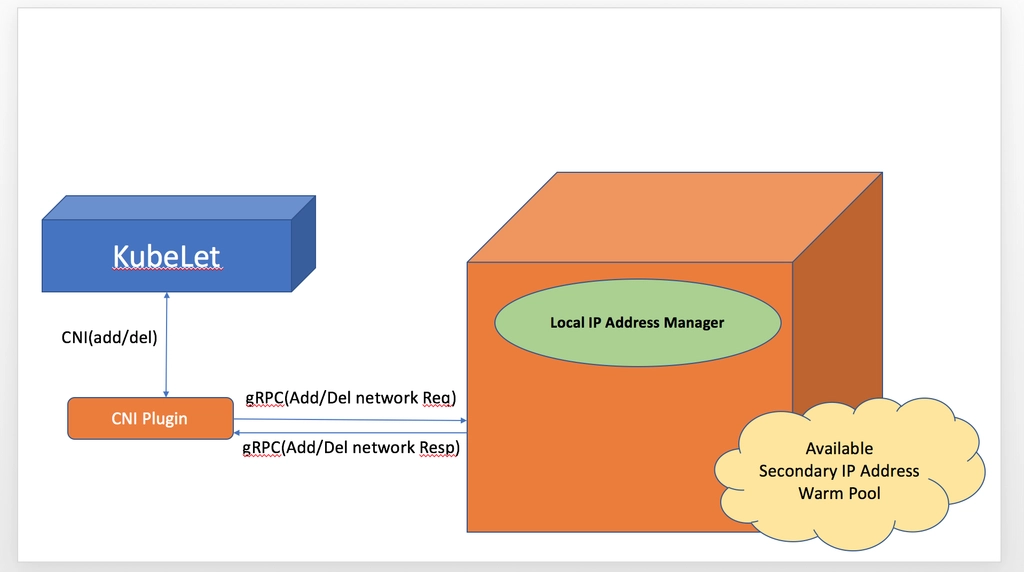

- Amazon VPC CNI에는 두 가지 구성 요소가 있습니다.

- 포드 간 통신을 활성화Pod-to-Pod 네트워크를 설정하는 CNI 바이너리입니다. CNI 바이너리는 노드 루트 파일 시스템에서 실행되며 새 포드가에 추가되거나 노드에서 기존 포드가 제거될 때 kubelet에 의해 호출됩니다.

- long-running node-local IP Address Management 장기 실행 노드-로컬 IP 주소 관리(IPAM) 데몬인 ipamd는 다음을 담당합니다.

- 노드에서 ENIs 관리 managing ENIs on a node

- 사용 가능한 IP 주소 또는 접두사의 웜 풀 유지 관리 maintaining a warm-pool of available IP addresses or prefix

- VPC ENI 에 미리 할당된 IP(=Local-IPAM Warm IP Pool)를 파드에서 사용할 수 있음 ← 파드의 빠른 시작을 위해서

- L-IPAM 소개 - 링크

Secondary IP mode Overview

- 보조 IP 모드 Secondary IP mode 는 VPC CNI의 기본 모드입니다. 이 가이드에서는 보조 IP 모드가 활성화된 경우 VPC CNI 동작에 대한 일반적인 개요를 제공합니다. ipamd(IP 주소 할당)의 기능은 , Linux용 접두사 모드및 포드당 보안 그룹와 같은 VPC CNI의 구성 설정에 따라 달라질 수 있습니다사용자 지정 네트워킹.

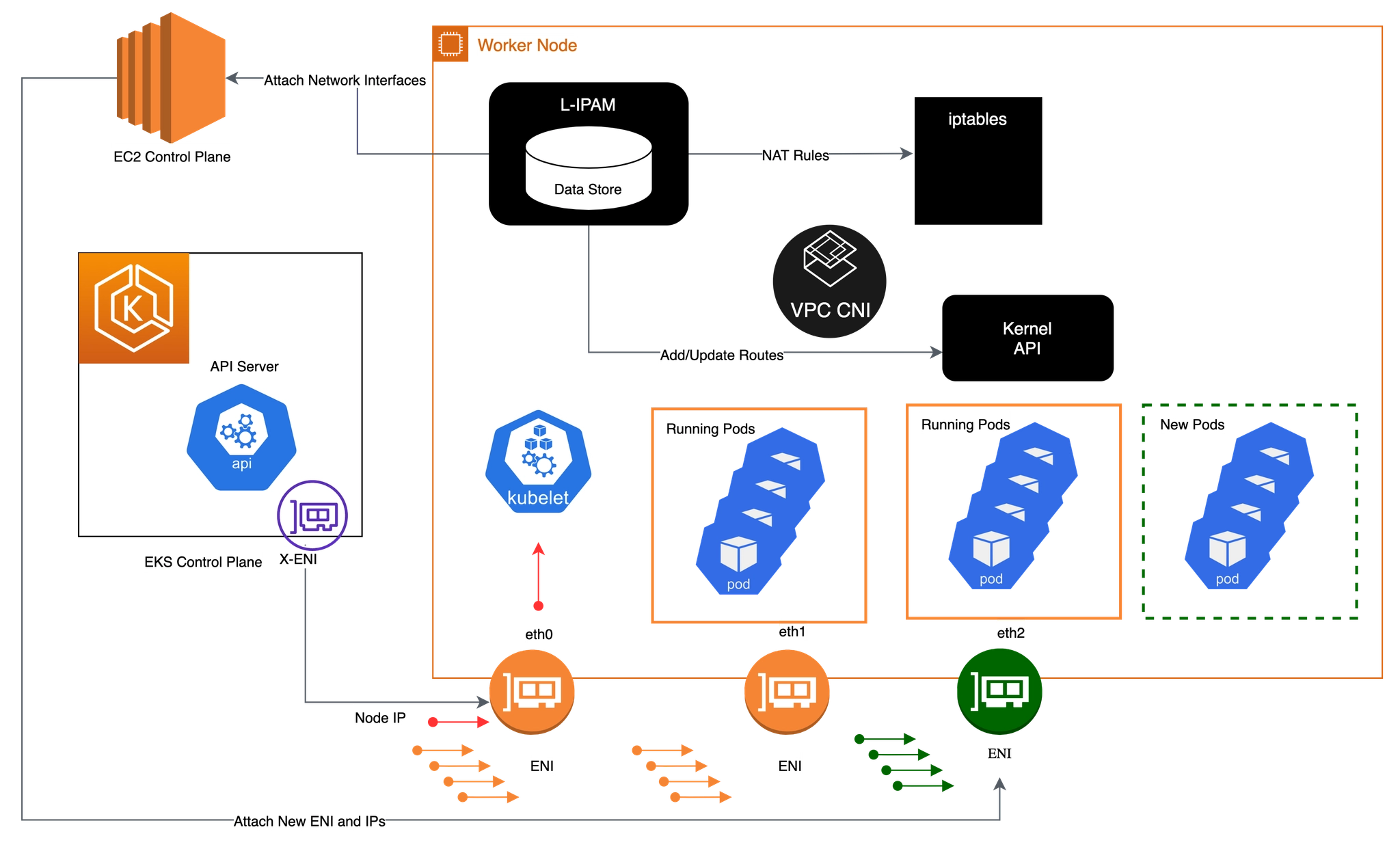

- Amazon VPC CNI는 작업자 노드에 aws-node라는 Kubernetes Daemonset으로 배포됩니다. 작업자 노드가 프로비저닝되면 기본 ENI라고 하는 기본 ENI가 노드에 연결됩니다. CNI는 노드의 기본 ENIs에 연결된 서브넷에서 ENI 및 보조 IP 주소의 웜 풀을 할당합니다. 기본적으로 ipamd는 노드에 추가 ENI를 할당하려고 시도합니다. IPAMD는 단일 포드가 예약되고 기본 ENI의 보조 IP 주소가 할당될 때 추가 ENI를 할당합니다. 이 "웜" ENI를 사용하면 더 빠른 포드 네트워킹이 가능합니다. 보조 IP 주소 풀이 부족해지면 CNI는 다른 ENI를 추가하여 더 할당합니다.

- 풀의 ENIs 및 IP 주소 수는 WARM_ENI_TARGET, WARM_IP_TARGET, MINIMUM_IP_TARGET이라는 환경 변수를 통해 구성됩니다. aws-node Daemonset은 충분한 수의 ENIs가 연결되어 있는지 주기적으로 확인합니다. WARM_ENI_TARGET 또는 WARM_IP_TARGET 및 MINIMUM_IP_TARGET 조건이 모두 충족되면 충분한 수의 ENIs가 연결됩니다. ENIs가 충분하지 않으면 CNI는 MAX_ENI 한도에 도달할 때까지 EC2에 API를 호출하여 더 많이 연결합니다.

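- WARM_ENI_TARGET: number of ENIs to attach in advance

- e.g., WARM_ENI_TARGET = 1 ⇒ always keep 1 unused ENI

- Definition: the number of free (available) ENIs to keep in addition to the ENIs currently in use.

- Behavior: if this value is 1, the VPC CNI allocates one more ENI in advance for upcoming Pods even if the current ENI is not yet full.

- Characteristics: because whole ENIs are acquired, IPs become available fastest, but many IPs may be wasted.

- WARM_IP_TARGET: number of spare IPs to keep

- e.g., WARM_IP_TARGET = 10 ⇒ always keep 10 spare IPs

- Definition: the number of spare IP addresses to keep in addition to the IPs currently in use.

- Behavior: if this value is 5 and 10 Pods are running, the node always tries to hold 15 IPs.

- Characteristics: manages IPs at a finer granularity than WARM_ENI_TARGET, so it is preferred in VPCs that are short on IP space.

- MINIMUM_IP_TARGET: minimum total number of IPs to secure

- e.g., MINIMUM_IP_TARGET = 30 ⇒ secure at least 30 IPs when the node starts

- Definition: the minimum total number of IPs the node must hold once it is running.

- Behavior: if this value is 20, the node secures 20 IPs even when no Pods are running.

- Characteristics: prevents IP allocation delays when Pods increase sharply (scale-out), for example during an initial traffic surge. It is usually used together with WARM_IP_TARGET.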
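A simplified model of how these targets translate into the number of IPs or ENIs ipamd tries to hold. This is a sketch of the rules as described above, not ipamd's actual code (which also handles prefixes, the cooldown cache, and per-ENI limits); `desired_ips` and `desired_enis` are hypothetical names. The test reuses the examples from the text: 10 Pods with WARM_IP_TARGET=5 gives 15 IPs, and MINIMUM_IP_TARGET=20 with no Pods gives 20 IPs.

```shell
# With WARM_IP_TARGET / MINIMUM_IP_TARGET: hold max(used + warm, minimum) IPs.
# Pass 0 for a target that is not set.
desired_ips() {
  used=$1
  warm_ip=$2
  minimum=$3
  want=$(( used + warm_ip ))
  [ "$want" -lt "$minimum" ] && want=$minimum
  echo "$want"
}

# With WARM_ENI_TARGET: ENIs in use + spare ENIs, capped at the instance's max ENIs.
desired_enis() {
  in_use=$1
  warm_eni=$2
  max_eni=$3
  want=$(( in_use + warm_eni ))
  [ "$want" -gt "$max_eni" ] && want=$max_eni
  echo "$want"
}
```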

- Check the related env in the aws-node DaemonSet

- WARM_ENI_TARGET=1 is set below, so one spare ENI is kept in addition to the ENIs in use

- [ENIs currently in use] + [1 spare ENI]

# Check the env of the aws-node DaemonSet

2w git:(main*) $ kubectl get ds aws-node -n kube-system -o json | jq '.spec.template.spec.containers[0].env'

[

{

"name": "ADDITIONAL_ENI_TAGS",

"value": "{}"

},

{

"name": "ANNOTATE_POD_IP",

"value": "false"

},

{

"name": "AWS_VPC_CNI_NODE_PORT_SUPPORT",

"value": "true"

},

{

"name": "AWS_VPC_ENI_MTU",

"value": "9001"

},

{

"name": "AWS_VPC_K8S_CNI_CUSTOM_NETWORK_CFG",

"value": "false"

},

{

"name": "AWS_VPC_K8S_CNI_EXTERNALSNAT",

"value": "false"

},

{

"name": "AWS_VPC_K8S_CNI_LOGLEVEL",

"value": "DEBUG"

},

{

"name": "AWS_VPC_K8S_CNI_LOG_FILE",

"value": "/host/var/log/aws-routed-eni/ipamd.log"

},

{

"name": "AWS_VPC_K8S_CNI_RANDOMIZESNAT",

"value": "prng"

},

{

"name": "AWS_VPC_K8S_CNI_VETHPREFIX",

"value": "eni"

},

{

"name": "AWS_VPC_K8S_PLUGIN_LOG_FILE",

"value": "/var/log/aws-routed-eni/plugin.log"

},

{

"name": "AWS_VPC_K8S_PLUGIN_LOG_LEVEL",

"value": "DEBUG"

},

{

"name": "CLUSTER_ENDPOINT",

"value": "https://ECAEBC91A81409A04556F202056B6FFE.gr7.ap-northeast-2.eks.amazonaws.com"

},

{

"name": "CLUSTER_NAME",

"value": "myeks"

},

{

"name": "DISABLE_INTROSPECTION",

"value": "false"

},

{

"name": "DISABLE_METRICS",

"value": "false"

},

{

"name": "DISABLE_NETWORK_RESOURCE_PROVISIONING",

"value": "false"

},

{

"name": "ENABLE_IMDS_ONLY_MODE",

"value": "false"

},

{

"name": "ENABLE_IPv4",

"value": "true"

},

{

"name": "ENABLE_IPv6",

"value": "false"

},

{

"name": "ENABLE_MULTI_NIC",

"value": "false"

},

{

"name": "ENABLE_POD_ENI",

"value": "false"

},

{

"name": "ENABLE_PREFIX_DELEGATION",

"value": "false"

},

{

"name": "ENABLE_SUBNET_DISCOVERY",

"value": "true"

},

{

"name": "NETWORK_POLICY_ENFORCING_MODE",

"value": "standard"

},

{

"name": "VPC_CNI_VERSION",

"value": "v1.21.1"

},

{

"name": "VPC_ID",

"value": "vpc-0a978c99d0f9f870a"

},

{

"name": "WARM_ENI_TARGET",

"value": "1"

},

{

"name": "WARM_PREFIX_TARGET",

"value": "1"

},

{

"name": "MY_NODE_NAME",

"valueFrom": {

"fieldRef": {

"apiVersion": "v1",

"fieldPath": "spec.nodeName"

}

}

},

{

"name": "MY_POD_NAME",

"valueFrom": {

"fieldRef": {

"apiVersion": "v1",

"fieldPath": "metadata.name"

}

}

}

]

2w git:(main*) $ kubectl describe ds aws-node -n kube-system | grep -E "WARM_ENI_TARGET|WARM_IP_TARGET|MINIMUM_IP_TARGET"

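WARM_ENI_TARGET: 1

# WARM_IP_TARGET and MINIMUM_IP_TARGET are not set in the env list above, so only WARM_ENI_TARGET matches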

2w git:(main*) $ kubectl get daemonset aws-node --show-managed-fields -n kube-system -o yaml

apiVersion: apps/v1

kind: DaemonSet

metadata:

annotations:

deprecated.daemonset.template.generation: "1"

creationTimestamp: "2026-03-24T11:32:41Z"

generation: 1

labels:

app.kubernetes.io/instance: aws-vpc-cni

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/name: aws-node

app.kubernetes.io/version: v1.21.1

helm.sh/chart: aws-vpc-cni-1.21.1

k8s-app: aws-node

managedFields:

- apiVersion: apps/v1

fieldsType: FieldsV1

fieldsV1:

f:metadata:

f:labels:

f:app.kubernetes.io/instance: {}

f:app.kubernetes.io/managed-by: {}

f:app.kubernetes.io/name: {}

f:app.kubernetes.io/version: {}

f:helm.sh/chart: {}

f:k8s-app: {}

f:spec:

f:selector: {}

f:template:

f:metadata:

f:labels:

f:app.kubernetes.io/instance: {}

f:app.kubernetes.io/name: {}

f:k8s-app: {}

f:spec:

f:affinity:

f:nodeAffinity:

f:requiredDuringSchedulingIgnoredDuringExecution: {}

f:containers:

k:{"name":"aws-eks-nodeagent"}:

.: {}

f:args: {}

f:env:

k:{"name":"MY_NODE_NAME"}:

.: {}

f:name: {}

f:valueFrom:

f:fieldRef: {}

f:image: {}

f:imagePullPolicy: {}

f:name: {}

f:ports:

k:{"containerPort":8162,"protocol":"TCP"}:

.: {}

f:containerPort: {}

f:name: {}

f:resources:

f:requests:

f:cpu: {}

f:securityContext:

f:capabilities:

f:add: {}

f:privileged: {}

f:volumeMounts:

k:{"mountPath":"/host/opt/cni/bin"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"mountPath":"/sys/fs/bpf"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"mountPath":"/var/log/aws-routed-eni"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"mountPath":"/var/run/aws-node"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"name":"aws-node"}:

.: {}

f:env:

k:{"name":"ADDITIONAL_ENI_TAGS"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ANNOTATE_POD_IP"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_CNI_NODE_PORT_SUPPORT"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_ENI_MTU"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_CNI_CUSTOM_NETWORK_CFG"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_CNI_EXTERNALSNAT"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_CNI_LOG_FILE"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_CNI_LOGLEVEL"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_CNI_RANDOMIZESNAT"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_CNI_VETHPREFIX"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_PLUGIN_LOG_FILE"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"AWS_VPC_K8S_PLUGIN_LOG_LEVEL"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"CLUSTER_ENDPOINT"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"CLUSTER_NAME"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"DISABLE_INTROSPECTION"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"DISABLE_METRICS"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"DISABLE_NETWORK_RESOURCE_PROVISIONING"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_IMDS_ONLY_MODE"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_IPv4"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_IPv6"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_MULTI_NIC"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_POD_ENI"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_PREFIX_DELEGATION"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_SUBNET_DISCOVERY"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"MY_NODE_NAME"}:

.: {}

f:name: {}

f:valueFrom:

f:fieldRef: {}

k:{"name":"MY_POD_NAME"}:

.: {}

f:name: {}

f:valueFrom:

f:fieldRef: {}

k:{"name":"NETWORK_POLICY_ENFORCING_MODE"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"VPC_CNI_VERSION"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"VPC_ID"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"WARM_ENI_TARGET"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"WARM_PREFIX_TARGET"}:

.: {}

f:name: {}

f:value: {}

f:image: {}

f:livenessProbe:

f:exec:

f:command: {}

f:initialDelaySeconds: {}

f:timeoutSeconds: {}

f:name: {}

f:ports:

k:{"containerPort":61678,"protocol":"TCP"}:

.: {}

f:containerPort: {}

f:name: {}

f:readinessProbe:

f:exec:

f:command: {}

f:initialDelaySeconds: {}

f:timeoutSeconds: {}

f:resources:

f:requests:

f:cpu: {}

f:securityContext:

f:capabilities:

f:add: {}

f:volumeMounts:

k:{"mountPath":"/host/etc/cni/net.d"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"mountPath":"/host/opt/cni/bin"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"mountPath":"/host/var/log/aws-routed-eni"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"mountPath":"/run/xtables.lock"}:

.: {}

f:mountPath: {}

f:name: {}

k:{"mountPath":"/var/run/aws-node"}:

.: {}

f:mountPath: {}

f:name: {}

f:hostNetwork: {}

f:initContainers:

k:{"name":"aws-vpc-cni-init"}:

.: {}

f:env:

k:{"name":"DISABLE_TCP_EARLY_DEMUX"}:

.: {}

f:name: {}

f:value: {}

k:{"name":"ENABLE_IPv6"}:

.: {}

f:name: {}

f:value: {}

f:image: {}

f:imagePullPolicy: {}

f:name: {}

f:resources:

f:requests:

f:cpu: {}

f:securityContext:

f:privileged: {}

f:volumeMounts:

k:{"mountPath":"/host/opt/cni/bin"}:

.: {}

f:mountPath: {}

f:name: {}

f:priorityClassName: {}

f:securityContext: {}

f:serviceAccountName: {}

f:terminationGracePeriodSeconds: {}

f:tolerations: {}

f:volumes:

k:{"name":"bpf-pin-path"}:

.: {}

f:hostPath:

f:path: {}

f:name: {}

k:{"name":"cni-bin-dir"}:

.: {}

f:hostPath:

f:path: {}

f:name: {}

k:{"name":"cni-net-dir"}:

.: {}

f:hostPath:

f:path: {}

f:name: {}

k:{"name":"log-dir"}:

.: {}

f:hostPath:

f:path: {}

f:type: {}

f:name: {}

k:{"name":"run-dir"}:

.: {}

f:hostPath:

f:path: {}

f:type: {}

f:name: {}

k:{"name":"xtables-lock"}:

.: {}

f:hostPath:

f:path: {}

f:type: {}

f:name: {}

f:updateStrategy:

f:rollingUpdate:

f:maxUnavailable: {}

f:type: {}

manager: eks

operation: Apply

time: "2026-03-24T11:32:41Z"

- apiVersion: apps/v1

fieldsType: FieldsV1

fieldsV1:

f:status:

f:currentNumberScheduled: {}

f:desiredNumberScheduled: {}

f:numberAvailable: {}

f:numberReady: {}

f:observedGeneration: {}

f:updatedNumberScheduled: {}

manager: kube-controller-manager

operation: Update

subresource: status

time: "2026-03-24T11:34:44Z"

name: aws-node

namespace: kube-system

resourceVersion: "1284"

uid: 74d414fc-b1fa-4059-b08f-c97bb86c4726

spec:

revisionHistoryLimit: 10

selector:

matchLabels:

k8s-app: aws-node

template:

metadata:

labels:

app.kubernetes.io/instance: aws-vpc-cni

app.kubernetes.io/name: aws-node

k8s-app: aws-node

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: kubernetes.io/os

operator: In

values:

- linux

- key: kubernetes.io/arch

operator: In

values:

- amd64

- arm64

- key: eks.amazonaws.com/compute-type

operator: NotIn

values:

- fargate

- hybrid

- auto

containers:

- env:

- name: ADDITIONAL_ENI_TAGS

value: '{}'

- name: ANNOTATE_POD_IP

value: "false"

- name: AWS_VPC_CNI_NODE_PORT_SUPPORT

value: "true"

- name: AWS_VPC_ENI_MTU

value: "9001"

- name: AWS_VPC_K8S_CNI_CUSTOM_NETWORK_CFG

value: "false"

- name: AWS_VPC_K8S_CNI_EXTERNALSNAT

value: "false"

- name: AWS_VPC_K8S_CNI_LOGLEVEL

value: DEBUG

- name: AWS_VPC_K8S_CNI_LOG_FILE

value: /host/var/log/aws-routed-eni/ipamd.log

- name: AWS_VPC_K8S_CNI_RANDOMIZESNAT

value: prng

- name: AWS_VPC_K8S_CNI_VETHPREFIX

value: eni

- name: AWS_VPC_K8S_PLUGIN_LOG_FILE

value: /var/log/aws-routed-eni/plugin.log

- name: AWS_VPC_K8S_PLUGIN_LOG_LEVEL

value: DEBUG

- name: CLUSTER_ENDPOINT

value: https://ECAEBC91A81409A04556F202056B6FFE.gr7.ap-northeast-2.eks.amazonaws.com

- name: CLUSTER_NAME

value: myeks

- name: DISABLE_INTROSPECTION

value: "false"

- name: DISABLE_METRICS

value: "false"

- name: DISABLE_NETWORK_RESOURCE_PROVISIONING

value: "false"

- name: ENABLE_IMDS_ONLY_MODE

value: "false"

- name: ENABLE_IPv4

value: "true"

- name: ENABLE_IPv6

value: "false"

- name: ENABLE_MULTI_NIC

value: "false"

- name: ENABLE_POD_ENI

value: "false"

- name: ENABLE_PREFIX_DELEGATION

value: "false"

- name: ENABLE_SUBNET_DISCOVERY

value: "true"

- name: NETWORK_POLICY_ENFORCING_MODE

value: standard

- name: VPC_CNI_VERSION

value: v1.21.1

- name: VPC_ID

value: vpc-0a978c99d0f9f870a

- name: WARM_ENI_TARGET

value: "1"

- name: WARM_PREFIX_TARGET

value: "1"

- name: MY_NODE_NAME

valueFrom:

fieldRef:

apiVersion: v1

fieldPath: spec.nodeName

- name: MY_POD_NAME

valueFrom:

fieldRef:

apiVersion: v1

fieldPath: metadata.name

image: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon-k8s-cni:v1.21.1-eksbuild.5

imagePullPolicy: IfNotPresent

livenessProbe:

exec:

command:

- /app/grpc-health-probe

- -addr=:50051

- -connect-timeout=5s

- -rpc-timeout=5s

failureThreshold: 3

initialDelaySeconds: 60

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

name: aws-node

ports:

- containerPort: 61678

name: metrics

protocol: TCP

readinessProbe:

exec:

command:

- /app/grpc-health-probe

- -addr=:50051

- -connect-timeout=5s

- -rpc-timeout=5s

failureThreshold: 3

initialDelaySeconds: 1

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 10

resources:

requests:

cpu: 25m

securityContext:

capabilities:

add:

- NET_ADMIN

- NET_RAW

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

volumeMounts:

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

- mountPath: /host/etc/cni/net.d

name: cni-net-dir

- mountPath: /host/var/log/aws-routed-eni

name: log-dir

- mountPath: /var/run/aws-node

name: run-dir

- mountPath: /run/xtables.lock

name: xtables-lock

- args:

- --enable-ipv6=false

- --enable-network-policy=false

- --enable-cloudwatch-logs=false

- --enable-policy-event-logs=false

- --log-file=/var/log/aws-routed-eni/network-policy-agent.log

- --metrics-bind-addr=:8162

- --health-probe-bind-addr=:8163

- --conntrack-cache-cleanup-period=300

- --log-level=debug

env:

- name: MY_NODE_NAME

valueFrom:

fieldRef:

apiVersion: v1

fieldPath: spec.nodeName

image: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon/aws-network-policy-agent:v1.3.1-eksbuild.1

imagePullPolicy: Always

name: aws-eks-nodeagent

ports:

- containerPort: 8162

name: agentmetrics

protocol: TCP

resources:

requests:

cpu: 25m

securityContext:

capabilities:

add:

- NET_ADMIN

privileged: true

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

volumeMounts:

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

- mountPath: /sys/fs/bpf

name: bpf-pin-path

- mountPath: /var/log/aws-routed-eni

name: log-dir

- mountPath: /var/run/aws-node

name: run-dir

dnsPolicy: ClusterFirst

hostNetwork: true

initContainers:

- env:

- name: DISABLE_TCP_EARLY_DEMUX

value: "false"

- name: ENABLE_IPv6

value: "false"

image: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon-k8s-cni-init:v1.21.1-eksbuild.5

imagePullPolicy: Always

name: aws-vpc-cni-init

resources:

requests:

cpu: 25m

securityContext:

privileged: true

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

volumeMounts:

- mountPath: /host/opt/cni/bin

name: cni-bin-dir

priorityClassName: system-node-critical

restartPolicy: Always

schedulerName: default-scheduler

securityContext: {}

serviceAccount: aws-node

serviceAccountName: aws-node

terminationGracePeriodSeconds: 10

tolerations:

- operator: Exists

volumes:

- hostPath:

path: /sys/fs/bpf

type: ""

name: bpf-pin-path

- hostPath:

path: /opt/cni/bin

type: ""

name: cni-bin-dir

- hostPath:

path: /etc/cni/net.d

type: ""

name: cni-net-dir

- hostPath:

path: /var/log/aws-routed-eni

type: DirectoryOrCreate

name: log-dir

- hostPath:

path: /var/run/aws-node

type: DirectoryOrCreate

name: run-dir

- hostPath:

path: /run/xtables.lock

type: FileOrCreate

name: xtables-lock

updateStrategy:

rollingUpdate:

maxSurge: 0

maxUnavailable: 10%

type: RollingUpdate

status:

currentNumberScheduled: 3

desiredNumberScheduled: 3

numberAvailable: 3

numberMisscheduled: 0

numberReady: 3

observedGeneration: 1

updatedNumberScheduled: 3

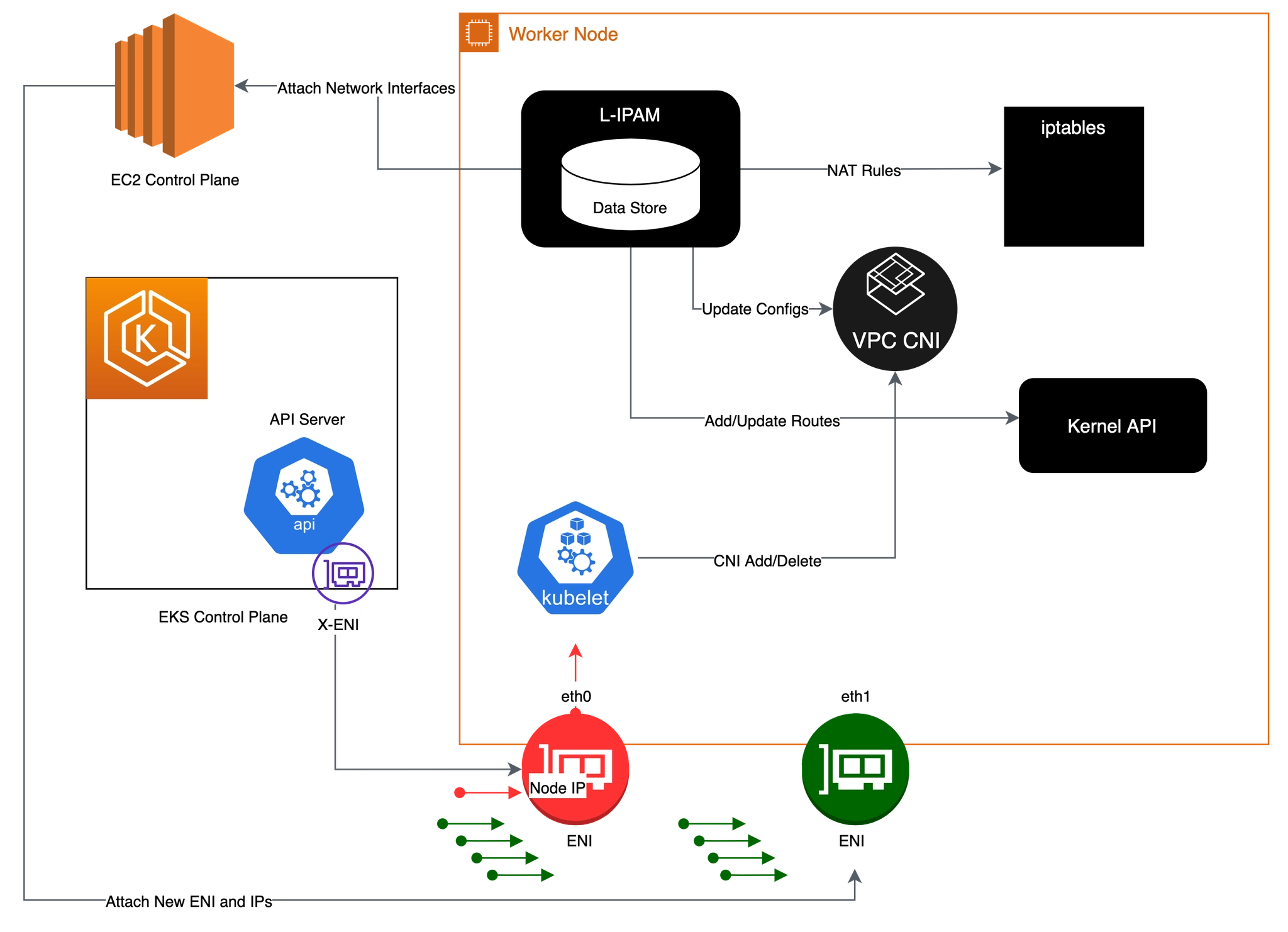

VPC CNI operation flow

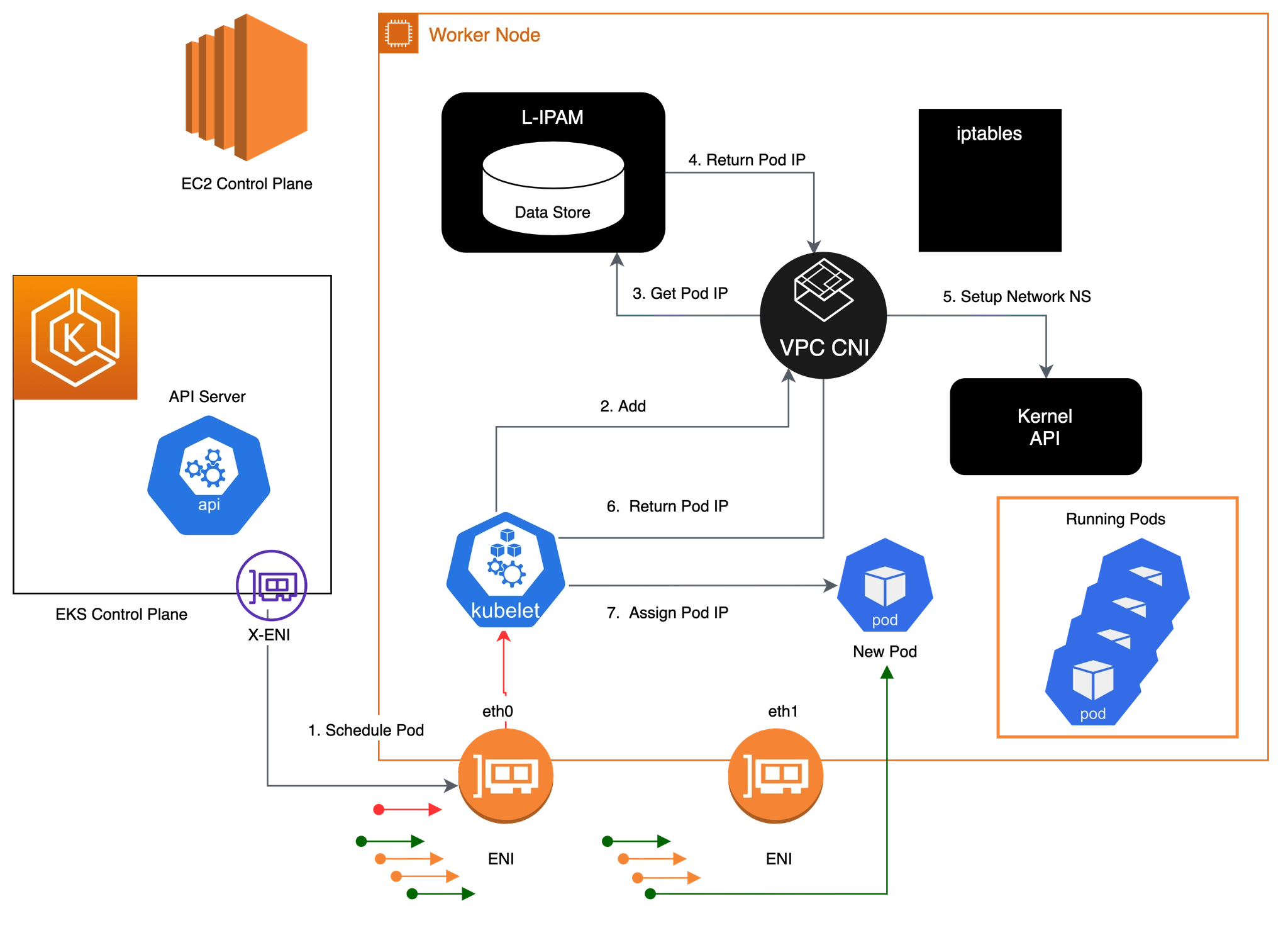

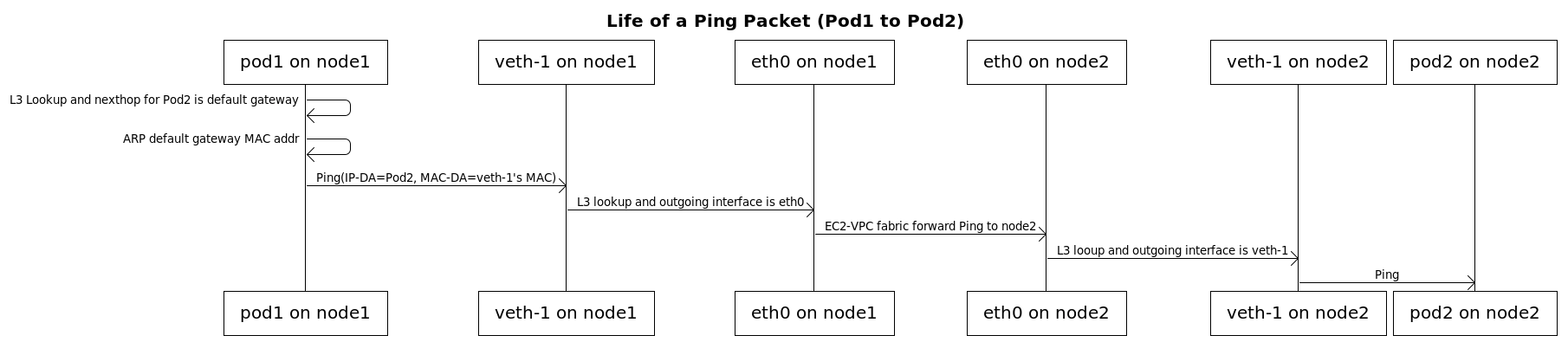

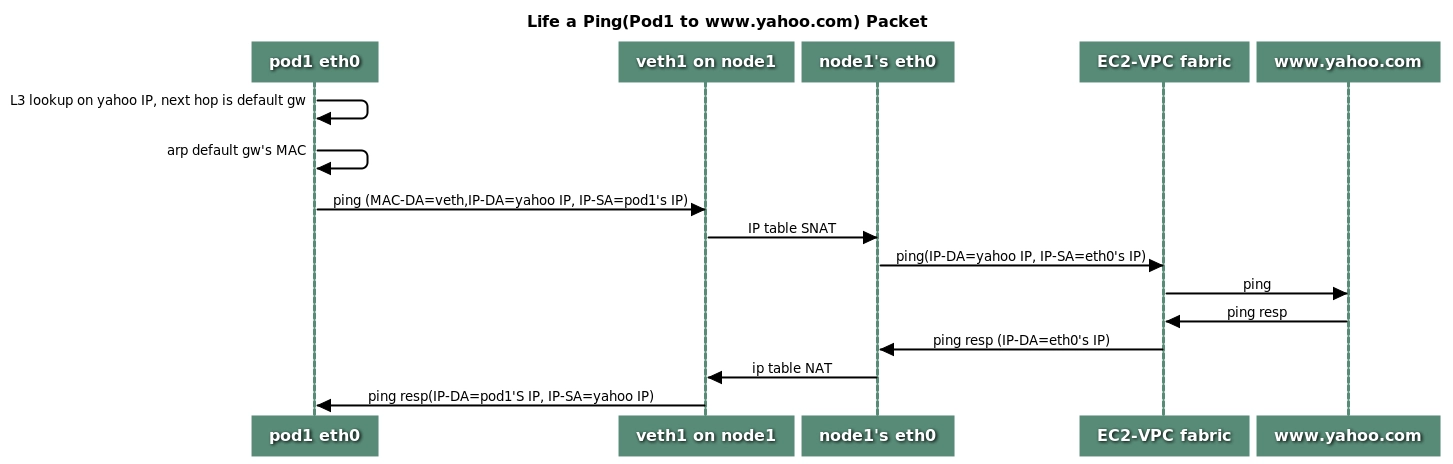

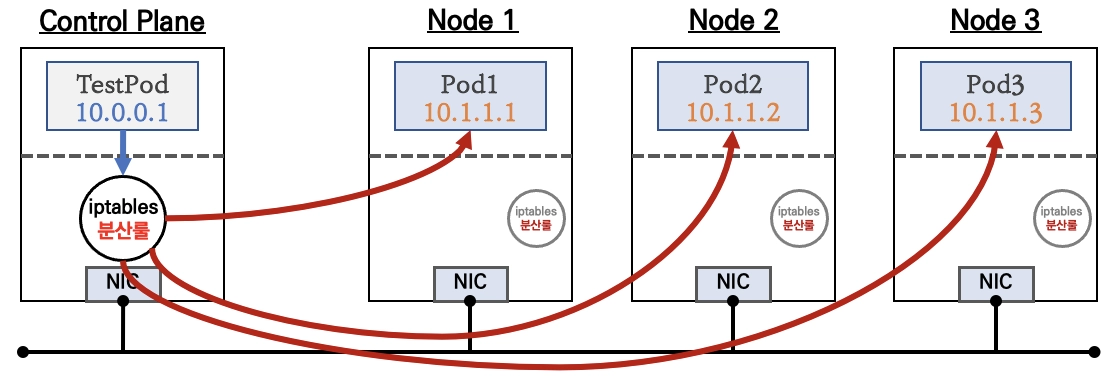

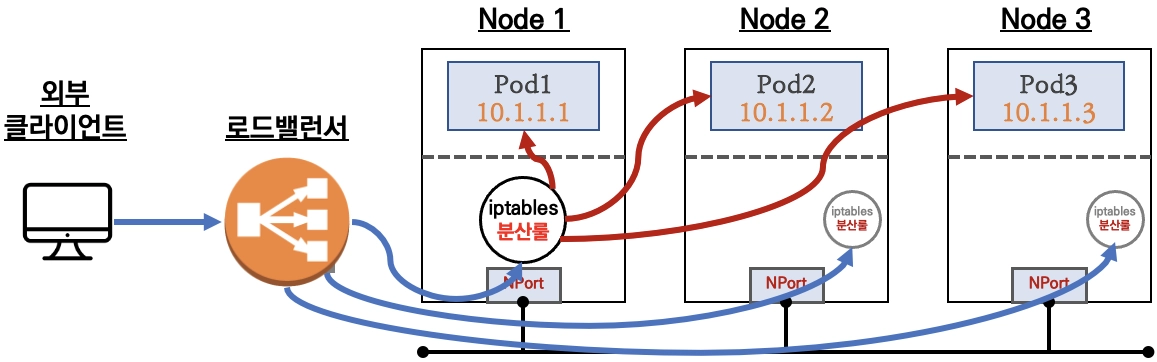

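1. Setting up iptables and routing for Pod communication: the kubelet sends CNI ADD/DEL requests to the CNI binary, which consults L-IPAM at this point.

2. Setting up the Pod network environment (Pod network namespace): kubelet → VPC CNI → L-IPAM → kernel API ⇒ kubelet → new Pod

- When the kubelet receives a request to add a Pod, the CNI binary queries ipamd for an available IP address, and ipamd provides it for the Pod. The CNI binary then connects the host and Pod networks.

- Pods deployed on a node are assigned to the same security groups as the primary ENI by default. Alternatively, Pods can be configured with different security groups.

- When the IP address pool is exhausted, the plugin automatically attaches another elastic network interface to the instance and assigns another set of secondary IP addresses to it. This continues until the node cannot support any more elastic network interfaces.

3. When a Pod is deleted, the VPC CNI places the Pod's IP address in a 30-second cool-down cache.

- IPs in the cool-down cache are not assigned to new Pods.

- When the cooling-off period ends, the VPC CNI moves the Pod IP back to the warm pool.

- The cooling-off period prevents Pod IP addresses from being recycled prematurely and lets kube-proxy on every cluster node finish updating its iptables rules.

- When the number of IPs or ENIs exceeds the warm pool settings, the ipamd plugin returns the excess IPs and ENIs to the VPC.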
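The assign → release → cool-down → warm pool cycle described above can be illustrated with a toy POSIX shell model. Everything here (the variable names, the two sample IPs, and passing timestamps in explicitly) is a hypothetical illustration of the 30-second cool-down, not how ipamd stores its state.

```shell
# Toy model (hypothetical, not ipamd's implementation) of the Pod IP lifecycle:
# WARM holds free IPs; COOLDOWN holds "ip:release_time" entries that may not be reused yet.
COOLDOWN_SECONDS=30
WARM="192.168.1.10 192.168.1.11"
COOLDOWN=""
ASSIGNED_IP=""

# CNI ADD: take the first IP from the warm pool (sets ASSIGNED_IP)
assign_ip() {
  set -- $WARM
  if [ $# -eq 0 ]; then
    return 1   # pool empty: real ipamd would try to attach another ENI
  fi
  ASSIGNED_IP=$1
  shift
  WARM="$*"
}

# CNI DEL: park the released IP ($1) in the cool-down cache with its release time ($2, epoch seconds)
release_ip() {
  COOLDOWN="$COOLDOWN $1:$2"
}

# Periodic reconcile at time $1: IPs whose cool-down has expired go back to the warm pool
reclaim() {
  now=$1
  still_cooling=""
  for entry in $COOLDOWN; do
    ip=${entry%%:*}
    released=${entry#*:}
    if [ $(( now - released )) -ge "$COOLDOWN_SECONDS" ]; then
      WARM="$WARM $ip"
    else
      still_cooling="$still_cooling $entry"
    fi
  done
  COOLDOWN=$still_cooling
}
```

A Pod IP released at t=100 is still cooling at t=110 and returns to the warm pool at t=130.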

2. Checking basic network information on the nodes

Connecting to the nodes and setting IP variables

# Check the EC2 instance IPs

2w git:(main*) $ aws ec2 describe-instances --query "Reservations[*].Instances[*].{PublicIPAdd:PublicIpAddress,PrivateIPAdd:PrivateIpAddress,InstanceName:Tags[?Key=='Name']|[0].Value,Status:State.Name}" --filters Name=instance-state-name,Values=running --output table

# Use the IPs from your own lab environment below

2w git:(main*) $ N1=13.125.90.155

N2=3.36.10.59

N3=52.79.83.80

# SSH into the worker nodes

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh -o StrictHostKeyChecking=no ec2-user@$i hostname; echo; done

>> node 13.125.90.155 <<

Warning: Permanently added '13.125.90.155' (ED25519) to the list of known hosts.

ip-192-168-3-7.ap-northeast-2.compute.internal

>> node 3.36.10.59 <<

Warning: Permanently added '3.36.10.59' (ED25519) to the list of known hosts.

ip-192-168-5-36.ap-northeast-2.compute.internal

>> node 52.79.83.80 <<

Warning: Permanently added '52.79.83.80' (ED25519) to the list of known hosts.

ip-192-168-11-144.ap-northeast-2.compute.internal
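Instead of copying public IPs into N1, N2, and N3 by hand, they can be derived from the JSON form of the same `aws ec2 describe-instances` call. A minimal sketch, assuming every running instance in the account and region is a lab worker node (the same assumption the table query makes); the function names are hypothetical.

```python
# Build N1/N2/N3 shell assignments from `aws ec2 describe-instances --output json`
# instead of copying public IPs by hand.
# Assumption: every running instance in the account/region is a lab worker node.
import json


def running_public_ips(describe_instances_json: str) -> list[str]:
    """Return public IPs of running instances, in API response order."""
    data = json.loads(describe_instances_json)
    ips = []
    for reservation in data.get("Reservations", []):
        for inst in reservation.get("Instances", []):
            running = inst.get("State", {}).get("Name") == "running"
            if running and inst.get("PublicIpAddress"):
                ips.append(inst["PublicIpAddress"])
    return ips


def as_shell_vars(ips: list[str]) -> str:
    """Render N1=..., N2=..., one per line, ready for `eval` in bash."""
    return "\n".join(f"N{i}={ip}" for i, ip in enumerate(ips, start=1))
```

The API does not promise a stable order, so N1 may map to a different node than in the table; check `hostname` over SSH as above if the mapping matters.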

Check basic network information

# Check aws-node DaemonSet details

2w git:(main*) $ kubectl get daemonset aws-node --namespace kube-system -owide

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE CONTAINERS IMAGES SELECTOR

aws-node 3 3 3 3 3 <none> 59m aws-node,aws-eks-nodeagent 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon-k8s-cni:v1.21.1-eksbuild.5,602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon/aws-network-policy-agent:v1.3.1-eksbuild.1 k8s-app=aws-node

2w git:(main*) $ kubectl describe daemonset aws-node --namespace kube-system

Name: aws-node

Namespace: kube-system

Selector: k8s-app=aws-node

Node-Selector: <none>

Labels: app.kubernetes.io/instance=aws-vpc-cni

app.kubernetes.io/managed-by=Helm

app.kubernetes.io/name=aws-node

app.kubernetes.io/version=v1.21.1

helm.sh/chart=aws-vpc-cni-1.21.1

k8s-app=aws-node

Annotations: deprecated.daemonset.template.generation: 1

Desired Number of Nodes Scheduled: 3

Current Number of Nodes Scheduled: 3

Number of Nodes Scheduled with Up-to-date Pods: 3

Number of Nodes Scheduled with Available Pods: 3

Number of Nodes Misscheduled: 0

Pods Status: 3 Running / 0 Waiting / 0 Succeeded / 0 Failed

Pod Template:

Labels: app.kubernetes.io/instance=aws-vpc-cni

app.kubernetes.io/name=aws-node

k8s-app=aws-node

Service Account: aws-node

Init Containers:

aws-vpc-cni-init:

Image: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon-k8s-cni-init:v1.21.1-eksbuild.5

Port: <none>

Host Port: <none>

Requests:

cpu: 25m

Environment:

DISABLE_TCP_EARLY_DEMUX: false

ENABLE_IPv6: false

Mounts:

/host/opt/cni/bin from cni-bin-dir (rw)

Containers:

aws-node:

Image: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon-k8s-cni:v1.21.1-eksbuild.5

Port: 61678/TCP (metrics)

Host Port: 0/TCP (metrics)

Requests:

cpu: 25m

Liveness: exec [/app/grpc-health-probe -addr=:50051 -connect-timeout=5s -rpc-timeout=5s] delay=60s timeout=10s period=10s #success=1 #failure=3

Readiness: exec [/app/grpc-health-probe -addr=:50051 -connect-timeout=5s -rpc-timeout=5s] delay=1s timeout=10s period=10s #success=1 #failure=3

Environment:

ADDITIONAL_ENI_TAGS: {}

ANNOTATE_POD_IP: false

AWS_VPC_CNI_NODE_PORT_SUPPORT: true

AWS_VPC_ENI_MTU: 9001

AWS_VPC_K8S_CNI_CUSTOM_NETWORK_CFG: false

AWS_VPC_K8S_CNI_EXTERNALSNAT: false

AWS_VPC_K8S_CNI_LOGLEVEL: DEBUG

AWS_VPC_K8S_CNI_LOG_FILE: /host/var/log/aws-routed-eni/ipamd.log

AWS_VPC_K8S_CNI_RANDOMIZESNAT: prng

AWS_VPC_K8S_CNI_VETHPREFIX: eni

AWS_VPC_K8S_PLUGIN_LOG_FILE: /var/log/aws-routed-eni/plugin.log

AWS_VPC_K8S_PLUGIN_LOG_LEVEL: DEBUG

CLUSTER_ENDPOINT: https://ECAEBC91A81409A04556F202056B6FFE.gr7.ap-northeast-2.eks.amazonaws.com

CLUSTER_NAME: myeks

DISABLE_INTROSPECTION: false

DISABLE_METRICS: false

DISABLE_NETWORK_RESOURCE_PROVISIONING: false

ENABLE_IMDS_ONLY_MODE: false

ENABLE_IPv4: true

ENABLE_IPv6: false

ENABLE_MULTI_NIC: false

ENABLE_POD_ENI: false

ENABLE_PREFIX_DELEGATION: false

ENABLE_SUBNET_DISCOVERY: true

NETWORK_POLICY_ENFORCING_MODE: standard

VPC_CNI_VERSION: v1.21.1

VPC_ID: vpc-0a978c99d0f9f870a

WARM_ENI_TARGET: 1

WARM_PREFIX_TARGET: 1

MY_NODE_NAME: (v1:spec.nodeName)

MY_POD_NAME: (v1:metadata.name)

Mounts:

/host/etc/cni/net.d from cni-net-dir (rw)

/host/opt/cni/bin from cni-bin-dir (rw)

/host/var/log/aws-routed-eni from log-dir (rw)

/run/xtables.lock from xtables-lock (rw)

/var/run/aws-node from run-dir (rw)

aws-eks-nodeagent:

Image: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon/aws-network-policy-agent:v1.3.1-eksbuild.1

Port: 8162/TCP (agentmetrics)

Host Port: 0/TCP (agentmetrics)

Args:

--enable-ipv6=false

--enable-network-policy=false

--enable-cloudwatch-logs=false

--enable-policy-event-logs=false

--log-file=/var/log/aws-routed-eni/network-policy-agent.log

--metrics-bind-addr=:8162

--health-probe-bind-addr=:8163

--conntrack-cache-cleanup-period=300

--log-level=debug

Requests:

cpu: 25m

Environment:

MY_NODE_NAME: (v1:spec.nodeName)

Mounts:

/host/opt/cni/bin from cni-bin-dir (rw)

/sys/fs/bpf from bpf-pin-path (rw)

/var/log/aws-routed-eni from log-dir (rw)

/var/run/aws-node from run-dir (rw)

Volumes:

bpf-pin-path:

Type: HostPath (bare host directory volume)

Path: /sys/fs/bpf

HostPathType:

cni-bin-dir:

Type: HostPath (bare host directory volume)

Path: /opt/cni/bin

HostPathType:

cni-net-dir:

Type: HostPath (bare host directory volume)

Path: /etc/cni/net.d

HostPathType:

log-dir:

Type: HostPath (bare host directory volume)

Path: /var/log/aws-routed-eni

HostPathType: DirectoryOrCreate

run-dir:

Type: HostPath (bare host directory volume)

Path: /var/run/aws-node

HostPathType: DirectoryOrCreate

xtables-lock:

Type: HostPath (bare host directory volume)

Path: /run/xtables.lock

HostPathType: FileOrCreate

Priority Class Name: system-node-critical

Node-Selectors: <none>

Tolerations: op=Exists

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal SuccessfulCreate 58m daemonset-controller Created pod: aws-node-kmscr

Normal SuccessfulCreate 58m daemonset-controller Created pod: aws-node-mlbgs

Normal SuccessfulCreate 58m daemonset-controller Created pod: aws-node-4skpv

# Check the aws-node DaemonSet env

2w git:(main*) $ kubectl get ds aws-node -n kube-system -o json | jq '.spec.template.spec.containers[0].env'

[

{

"name": "ADDITIONAL_ENI_TAGS",

"value": "{}"

},

{

"name": "ANNOTATE_POD_IP",

"value": "false"

},

{

"name": "AWS_VPC_CNI_NODE_PORT_SUPPORT",

"value": "true"

},

{

"name": "AWS_VPC_ENI_MTU",

"value": "9001"

},

{

"name": "AWS_VPC_K8S_CNI_CUSTOM_NETWORK_CFG",

"value": "false"

},

{

"name": "AWS_VPC_K8S_CNI_EXTERNALSNAT",

"value": "false"

},

{

"name": "AWS_VPC_K8S_CNI_LOGLEVEL",

"value": "DEBUG"

},

{

"name": "AWS_VPC_K8S_CNI_LOG_FILE",

"value": "/host/var/log/aws-routed-eni/ipamd.log"

},

{

"name": "AWS_VPC_K8S_CNI_RANDOMIZESNAT",

"value": "prng"

},

{

"name": "AWS_VPC_K8S_CNI_VETHPREFIX",

"value": "eni"

},

{

"name": "AWS_VPC_K8S_PLUGIN_LOG_FILE",

"value": "/var/log/aws-routed-eni/plugin.log"

},

{

"name": "AWS_VPC_K8S_PLUGIN_LOG_LEVEL",

"value": "DEBUG"

},

{

"name": "CLUSTER_ENDPOINT",

"value": "https://ECAEBC91A81409A04556F202056B6FFE.gr7.ap-northeast-2.eks.amazonaws.com"

},

{

"name": "CLUSTER_NAME",

"value": "myeks"

},

{

"name": "DISABLE_INTROSPECTION",

"value": "false"

},

{

"name": "DISABLE_METRICS",

"value": "false"

},

{

"name": "DISABLE_NETWORK_RESOURCE_PROVISIONING",

"value": "false"

},

{

"name": "ENABLE_IMDS_ONLY_MODE",

"value": "false"

},

{

"name": "ENABLE_IPv4",

"value": "true"

},

{

"name": "ENABLE_IPv6",

"value": "false"

},

{

"name": "ENABLE_MULTI_NIC",

"value": "false"

},

{

"name": "ENABLE_POD_ENI",

"value": "false"

},

{

"name": "ENABLE_PREFIX_DELEGATION",

"value": "false"

},

{

"name": "ENABLE_SUBNET_DISCOVERY",

"value": "true"

},

{

"name": "NETWORK_POLICY_ENFORCING_MODE",

"value": "standard"

},

{

"name": "VPC_CNI_VERSION",

"value": "v1.21.1"

},

{

"name": "VPC_ID",

"value": "vpc-0a978c99d0f9f870a"

},

{

"name": "WARM_ENI_TARGET",

"value": "1"

},

{

"name": "WARM_PREFIX_TARGET",

"value": "1"

},

{

"name": "MY_NODE_NAME",

"valueFrom": {

"fieldRef": {

"apiVersion": "v1",

"fieldPath": "spec.nodeName"

}

}

},

{

"name": "MY_POD_NAME",

"valueFrom": {

"fieldRef": {

"apiVersion": "v1",

"fieldPath": "metadata.name"

}

}

}

]
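The env list above is easier to compare across clusters as a flat name → value map. A minimal sketch that takes the parsed output of `kubectl get ds aws-node -n kube-system -o json`; it picks the container by name ("aws-node") rather than index 0, a choice of this sketch so the aws-eks-nodeagent sidecar cannot shift the index. The helper names are hypothetical.

```python
# Flatten the aws-node container env from `kubectl get ds aws-node -n kube-system -o json`
# into a name -> value dict, similar to the jq query above.


def env_to_dict(env: list[dict]) -> dict[str, str]:
    result = {}
    for var in env:
        if "valueFrom" in var:
            # Downward API entries (MY_NODE_NAME, MY_POD_NAME) have no literal value.
            path = var["valueFrom"].get("fieldRef", {}).get("fieldPath", "?")
            result[var["name"]] = f"(fieldRef: {path})"
        else:
            result[var["name"]] = var.get("value", "")
    return result


def aws_node_env(daemonset: dict) -> dict[str, str]:
    # Select the container by name so sidecar ordering does not matter.
    for c in daemonset["spec"]["template"]["spec"]["containers"]:
        if c["name"] == "aws-node":
            return env_to_dict(c.get("env", []))
    raise KeyError("aws-node container not found")


def warm_pool_settings(env: dict[str, str]) -> dict[str, str]:
    # VPC CNI knobs that size the warm pool; only the ones actually set are returned.
    keys = ("WARM_ENI_TARGET", "WARM_IP_TARGET", "MINIMUM_IP_TARGET",
            "WARM_PREFIX_TARGET", "ENABLE_PREFIX_DELEGATION")
    return {k: env[k] for k in keys if k in env}
```

In this lab only WARM_ENI_TARGET=1 is set (with prefix delegation off), which is why a whole spare ENI's worth of secondary IPs is kept warm on the nodes.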

Check network information on the nodes

# Check CNI logs

# When troubleshooting CNI issues, refer to ipamd.log

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh ec2-user@$i tree /var/log/aws-routed-eni ; echo; done

>> node 13.125.90.155 <<

/var/log/aws-routed-eni

├── ebpf-sdk.log

├── ipamd.log

└── network-policy-agent.log

0 directories, 3 files

>> node 3.36.10.59 <<

/var/log/aws-routed-eni

├── ebpf-sdk.log

├── egress-v6-plugin.log

├── ipamd.log

├── network-policy-agent.log

└── plugin.log

0 directories, 5 files

>> node 52.79.83.80 <<

/var/log/aws-routed-eni

├── ebpf-sdk.log

├── egress-v6-plugin.log

├── ipamd.log

├── network-policy-agent.log

└── plugin.log

0 directories, 5 files

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh ec2-user@$i sudo cat /var/log/aws-routed-eni/plugin.log | jq ; echo; done

>> node 13.125.90.155 <<

cat: /var/log/aws-routed-eni/plugin.log: No such file or directory

>> node 3.36.10.59 <<

{

"level": "info",

"ts": "2026-03-24T12:42:24.088Z",

"caller": "routed-eni-cni-plugin/cni.go:131",

"msg": "Constructed new logger instance"

}

{

"level": "info",

"ts": "2026-03-24T12:42:24.088Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Received CNI add request: ContainerID(9057ac941292277cf8bd3dc28f6c58bb90eced5232b75132d27679e64eac99dc) Netns(/var/run/netns/cni-b50f9442-d17b-8951-95c3-46862cb4df5d) IfName(eth0) Args(K8S_POD_UID=e0586ebd-ba17-42fc-afa1-195787394f7c;IgnoreUnknown=1;K8S_POD_NAMESPACE=kube-system;K8S_POD_NAME=coredns-cc56d5f8b-9nvgz;K8S_POD_INFRA_CONTAINER_ID=9057ac941292277cf8bd3dc28f6c58bb90eced5232b75132d27679e64eac99dc) Path(/opt/cni/bin) argsStdinData({\"capabilities\":{\"io.kubernetes.cri.pod-annotations\":true},\"cniVersion\":\"0.4.0\",\"mtu\":\"9001\",\"name\":\"aws-cni\",\"pluginLogFile\":\"/var/log/aws-routed-eni/plugin.log\",\"pluginLogLevel\":\"DEBUG\",\"podSGEnforcingMode\":\"strict\",\"runtimeConfig\":{\"io.kubernetes.cri.pod-annotations\":{\"kubernetes.io/config.seen\":\"2026-03-24T12:42:23.738464638Z\",\"kubernetes.io/config.source\":\"api\"}},\"type\":\"aws-cni\",\"vethPrefix\":\"eni\"})"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.088Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Prev Result: <nil>\n"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.088Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "MTU value set is 9001:"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.088Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "pod requires multi-nic attachment: false"

}

{

"level": "info",

"ts": "2026-03-24T12:42:24.094Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Received add network response from ipamd for container 9057ac941292277cf8bd3dc28f6c58bb90eced5232b75132d27679e64eac99dc interface eth0: Success:true IPAllocationMetadata:{IPv4Addr:\"192.168.5.76\" RouteTableId:254} VPCv4CIDRs:\"192.168.0.0/16\" NetworkPolicyMode:\"standard\""

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.094Z",

"caller": "routed-eni-cni-plugin/cni.go:279",

"msg": "SetupPodNetwork: hostVethName=eni481fe145bd1, contVethName=eth0, netnsPath=/var/run/netns/cni-b50f9442-d17b-8951-95c3-46862cb4df5d, ipAddr=192.168.5.76/32, routeTableNumber=254, mtu=9001"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.132Z",

"caller": "driver/driver.go:276",

"msg": "Successfully set IPv6 sysctls on hostVeth eni481fe145bd1"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.135Z",

"caller": "driver/driver.go:286",

"msg": "Successfully setup container route, containerAddr=192.168.5.76/32, hostVeth=eni481fe145bd1, rtTable=main"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.135Z",

"caller": "driver/driver.go:286",

"msg": "Successfully setup toContainer rule, containerAddr=192.168.5.76/32, rtTable=main"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.135Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Using dummy interface: {Name:dummy481fe145bd1 Mac:0 Mtu:0 Sandbox:0 SocketPath: PciID:}"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.141Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Network Policy agent for EnforceNpToPod returned Success : true"

}

>> node 52.79.83.80 <<

{

"level": "info",

"ts": "2026-03-24T12:42:24.117Z",

"caller": "routed-eni-cni-plugin/cni.go:131",

"msg": "Constructed new logger instance"

}

{

"level": "info",

"ts": "2026-03-24T12:42:24.117Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Received CNI add request: ContainerID(f4ed3b515de27c5ae54519c12dbc5c7eef96985c43951b5550e9d8d4dbe6a7a2) Netns(/var/run/netns/cni-4c8defb0-eb51-0e65-cc93-fee1aa750c32) IfName(eth0) Args(K8S_POD_NAME=coredns-cc56d5f8b-x7p4t;K8S_POD_INFRA_CONTAINER_ID=f4ed3b515de27c5ae54519c12dbc5c7eef96985c43951b5550e9d8d4dbe6a7a2;K8S_POD_UID=11e0e653-7cdb-4fe1-8e95-a36318ce3606;IgnoreUnknown=1;K8S_POD_NAMESPACE=kube-system) Path(/opt/cni/bin) argsStdinData({\"capabilities\":{\"io.kubernetes.cri.pod-annotations\":true},\"cniVersion\":\"0.4.0\",\"mtu\":\"9001\",\"name\":\"aws-cni\",\"pluginLogFile\":\"/var/log/aws-routed-eni/plugin.log\",\"pluginLogLevel\":\"DEBUG\",\"podSGEnforcingMode\":\"strict\",\"runtimeConfig\":{\"io.kubernetes.cri.pod-annotations\":{\"kubernetes.io/config.seen\":\"2026-03-24T12:42:23.784005411Z\",\"kubernetes.io/config.source\":\"api\"}},\"type\":\"aws-cni\",\"vethPrefix\":\"eni\"})"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.117Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Prev Result: <nil>\n"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.117Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "MTU value set is 9001:"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.117Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "pod requires multi-nic attachment: false"

}

{

"level": "info",

"ts": "2026-03-24T12:42:24.121Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Received add network response from ipamd for container f4ed3b515de27c5ae54519c12dbc5c7eef96985c43951b5550e9d8d4dbe6a7a2 interface eth0: Success:true IPAllocationMetadata:{IPv4Addr:\"192.168.10.183\" RouteTableId:254} VPCv4CIDRs:\"192.168.0.0/16\" NetworkPolicyMode:\"standard\""

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.121Z",

"caller": "routed-eni-cni-plugin/cni.go:279",

"msg": "SetupPodNetwork: hostVethName=eni6422ac782e4, contVethName=eth0, netnsPath=/var/run/netns/cni-4c8defb0-eb51-0e65-cc93-fee1aa750c32, ipAddr=192.168.10.183/32, routeTableNumber=254, mtu=9001"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.204Z",

"caller": "driver/driver.go:276",

"msg": "Successfully set IPv6 sysctls on hostVeth eni6422ac782e4"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.204Z",

"caller": "driver/driver.go:286",

"msg": "Successfully setup container route, containerAddr=192.168.10.183/32, hostVeth=eni6422ac782e4, rtTable=main"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.204Z",

"caller": "driver/driver.go:286",

"msg": "Successfully setup toContainer rule, containerAddr=192.168.10.183/32, rtTable=main"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.204Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Using dummy interface: {Name:dummy6422ac782e4 Mac:0 Mtu:0 Sandbox:0 SocketPath: PciID:}"

}

{

"level": "debug",

"ts": "2026-03-24T12:42:24.208Z",

"caller": "routed-eni-cni-plugin/cni.go:140",

"msg": "Network Policy agent for EnforceNpToPod returned Success : true"

}
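The plugin.log entries above trace one CNI ADD per pod, and the key line is "Received add network response from ipamd". A minimal sketch that pulls the container ID and assigned IPv4 address out of those lines, assuming the raw file holds one JSON object per line (jq only pretty-prints it) and that the message text keeps the format seen here; the regex and function name are hypothetical.

```python
# Extract pod IP assignments from aws-routed-eni/plugin.log lines.
# Assumption: one JSON object per line; the message format matches this lab's log.
import json
import re

ADD_RESPONSE = re.compile(
    r'for container (?P<cid>[0-9a-f]+) interface (?P<ifname>\S+): '
    r'Success:(?P<ok>\w+) IPAllocationMetadata:\{IPv4Addr:"(?P<ip>[\d.]+)"'
)


def pod_ip_assignments(lines) -> list[tuple[str, str, str]]:
    """Return (timestamp, short container ID, IPv4) for each successful ADD."""
    out = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip partial or non-JSON lines
        m = ADD_RESPONSE.search(entry.get("msg", ""))
        if m and m["ok"] == "true":
            out.append((entry.get("ts", ""), m["cid"][:12], m["ip"]))
    return out
```

Matching the short container ID against the ContainerID in the "Received CNI add request" line ties each IP back to its pod (here coredns-cc56d5f8b-9nvgz → 192.168.5.76).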

# Check network info: each eniY interface is the host end of a veth pair whose other end (eth0) is in the pod's network namespace

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh ec2-user@$i sudo ip -br -c addr; echo; done

>> node 13.125.90.155 <<

lo UNKNOWN 127.0.0.1/8 ::1/128

ens5 UP 192.168.3.7/22 metric 512 fe80::4d:80ff:feab:fb03/64

>> node 3.36.10.59 <<

lo UNKNOWN 127.0.0.1/8 ::1/128

ens5 UP 192.168.5.36/22 metric 512 fe80::409:ffff:fe29:eb23/64

eni481fe145bd1@if3 UP fe80::80b9:cff:fe9d:bd66/64

ens6 UP 192.168.4.106/22 fe80::459:b6ff:fefa:9319/64

>> node 52.79.83.80 <<

lo UNKNOWN 127.0.0.1/8 ::1/128

ens5 UP 192.168.11.144/22 metric 512 fe80::83c:3eff:fe51:1309/64

eni6422ac782e4@if3 UP fe80::1c59:e7ff:fe6d:e994/64

ens6 UP 192.168.9.236/22 fe80::899:8aff:fe8a:2813/64
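In the brief listing above, `eni481fe145bd1@if3` is the host end of a veth pair, and `@if3` is the interface index of its peer (eth0) inside the pod's network namespace. A minimal sketch that picks those interfaces out of `ip -br addr` output, assuming the output was captured without `-c` (no ANSI color codes) and using the "eni" prefix from AWS_VPC_K8S_CNI_VETHPREFIX; the function name is hypothetical.

```python
# Find host-side veth interfaces in `ip -br addr` output (captured without -c).
# "eni481fe145bd1@if3" = host veth name + "@if<N>", N being the peer's ifindex in the pod netns.


def host_veths(brief_addr: str, prefix: str = "eni") -> list[dict]:
    veths = []
    for line in brief_addr.splitlines():
        fields = line.split()
        if len(fields) < 2:
            continue
        name, _, peer = fields[0].partition("@")
        if not name.startswith(prefix):
            continue  # lo, ens5, ens6, ... are not pod veths
        veths.append({
            "host_veth": name,
            "peer_ifindex": int(peer[2:]) if peer.startswith("if") else None,
            "state": fields[1],
            "addrs": fields[2:],
        })
    return veths
```

The host-side veth carries only an IPv6 link-local address; the pod's IPv4 address lives on eth0 inside the pod netns, and the host reaches it through the /32 route shown in the routing table that follows.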

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh ec2-user@$i sudo ip -c addr; echo; done

>> node 13.125.90.155 <<

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host noprefixroute

valid_lft forever preferred_lft forever

2: ens5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9001 qdisc mq state UP group default qlen 1000

link/ether 02:4d:80:ab:fb:03 brd ff:ff:ff:ff:ff:ff

altname enp0s5

inet 192.168.3.7/22 metric 512 brd 192.168.3.255 scope global dynamic ens5

valid_lft 2778sec preferred_lft 2778sec

inet6 fe80::4d:80ff:feab:fb03/64 scope link proto kernel_ll

valid_lft forever preferred_lft forever

>> node 3.36.10.59 <<

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host noprefixroute

valid_lft forever preferred_lft forever

2: ens5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9001 qdisc mq state UP group default qlen 1000

link/ether 06:09:ff:29:eb:23 brd ff:ff:ff:ff:ff:ff

altname enp0s5

inet 192.168.5.36/22 metric 512 brd 192.168.7.255 scope global dynamic ens5

valid_lft 2775sec preferred_lft 2775sec

inet6 fe80::409:ffff:fe29:eb23/64 scope link proto kernel_ll

valid_lft forever preferred_lft forever

3: eni481fe145bd1@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9001 qdisc noqueue state UP group default

link/ether 82:b9:0c:9d:bd:66 brd ff:ff:ff:ff:ff:ff link-netns cni-b50f9442-d17b-8951-95c3-46862cb4df5d

inet6 fe80::80b9:cff:fe9d:bd66/64 scope link proto kernel_ll

valid_lft forever preferred_lft forever

4: ens6: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9001 qdisc mq state UP group default qlen 1000

link/ether 06:59:b6:fa:93:19 brd ff:ff:ff:ff:ff:ff

altname enp0s6

inet 192.168.4.106/22 brd 192.168.7.255 scope global ens6

valid_lft forever preferred_lft forever

inet6 fe80::459:b6ff:fefa:9319/64 scope link proto kernel_ll

valid_lft forever preferred_lft forever

>> node 52.79.83.80 <<

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host noprefixroute

valid_lft forever preferred_lft forever

2: ens5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9001 qdisc mq state UP group default qlen 1000

link/ether 0a:3c:3e:51:13:09 brd ff:ff:ff:ff:ff:ff

altname enp0s5

inet 192.168.11.144/22 metric 512 brd 192.168.11.255 scope global dynamic ens5

valid_lft 2776sec preferred_lft 2776sec

inet6 fe80::83c:3eff:fe51:1309/64 scope link proto kernel_ll

valid_lft forever preferred_lft forever

3: eni6422ac782e4@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9001 qdisc noqueue state UP group default

link/ether 1e:59:e7:6d:e9:94 brd ff:ff:ff:ff:ff:ff link-netns cni-4c8defb0-eb51-0e65-cc93-fee1aa750c32

inet6 fe80::1c59:e7ff:fe6d:e994/64 scope link proto kernel_ll

valid_lft forever preferred_lft forever

4: ens6: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9001 qdisc mq state UP group default qlen 1000

link/ether 0a:99:8a:8a:28:13 brd ff:ff:ff:ff:ff:ff

altname enp0s6

inet 192.168.9.236/22 brd 192.168.11.255 scope global ens6

valid_lft forever preferred_lft forever

inet6 fe80::899:8aff:fe8a:2813/64 scope link proto kernel_ll

valid_lft forever preferred_lft forever

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh ec2-user@$i sudo ip -c route; echo; done

>> node 13.125.90.155 <<

default via 192.168.0.1 dev ens5 proto dhcp src 192.168.3.7 metric 512

192.168.0.0/22 dev ens5 proto kernel scope link src 192.168.3.7 metric 512

192.168.0.1 dev ens5 proto dhcp scope link src 192.168.3.7 metric 512

192.168.0.2 dev ens5 proto dhcp scope link src 192.168.3.7 metric 512

>> node 3.36.10.59 <<

default via 192.168.4.1 dev ens5 proto dhcp src 192.168.5.36 metric 512

192.168.0.2 via 192.168.4.1 dev ens5 proto dhcp src 192.168.5.36 metric 512

192.168.4.0/22 dev ens5 proto kernel scope link src 192.168.5.36 metric 512

192.168.4.1 dev ens5 proto dhcp scope link src 192.168.5.36 metric 512

192.168.5.76 dev eni481fe145bd1 scope link

>> node 52.79.83.80 <<

default via 192.168.8.1 dev ens5 proto dhcp src 192.168.11.144 metric 512

192.168.0.2 via 192.168.8.1 dev ens5 proto dhcp src 192.168.11.144 metric 512

192.168.8.0/22 dev ens5 proto kernel scope link src 192.168.11.144 metric 512

192.168.8.1 dev ens5 proto dhcp scope link src 192.168.11.144 metric 512

192.168.10.183 dev eni6422ac782e4 scope link
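Each pod with its own network namespace gets a /32 `scope link` route that points at its host-side veth (for example `192.168.5.76 dev eni481fe145bd1 scope link`). A minimal sketch that builds the pod IP → veth map from `ip route` output and flags pod IPs without a route; the pod IP list is a hypothetical input you would take from `kubectl get pod -o wide` for that node.

```python
# Map pod IPs to host veths from `ip route` output and flag pods with no route.


def pod_routes(route_text: str, prefix: str = "eni") -> dict[str, str]:
    routes = {}
    for line in route_text.splitlines():
        f = line.split()
        # Pod routes look like: <pod-ip> dev <eniXXXX> scope link
        if len(f) >= 3 and f[1] == "dev" and f[2].startswith(prefix):
            routes[f[0]] = f[2]
    return routes


def pods_without_route(pod_ips: list[str], routes: dict[str, str]) -> list[str]:
    # pod_ips: IPs of non-hostNetwork pods scheduled on this node (hypothetical input)
    return [ip for ip in pod_ips if ip not in routes]
```

Node 1 has no such routes because no pod with its own network namespace is running there yet.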

2w git:(main*) $ ssh ec2-user@$N1 sudo iptables -t nat -S

-P PREROUTING ACCEPT

-P INPUT ACCEPT

-P OUTPUT ACCEPT

-P POSTROUTING ACCEPT

-N AWS-CONNMARK-CHAIN-0

-N AWS-SNAT-CHAIN-0

-N KUBE-KUBELET-CANARY

-N KUBE-MARK-MASQ

-N KUBE-NODEPORTS

-N KUBE-POSTROUTING

-N KUBE-PROXY-CANARY

-N KUBE-SEP-5UDGFAFYELDECNYA

-N KUBE-SEP-7ETZDPY2QTUGX22R

-N KUBE-SEP-BAHASVEYSP77KY2T

-N KUBE-SEP-BU7V3HIQWPVU7HYM

-N KUBE-SEP-G5V4KEWYO6B2RBGW

-N KUBE-SEP-PQBIC6FYNOGG3SED

-N KUBE-SEP-S5CQZPQZARHXYA6J

-N KUBE-SEP-XYDDOFWXZXQGZRSQ

-N KUBE-SEP-ZNIZZUBEGKJH5NYC

-N KUBE-SERVICES

-N KUBE-SVC-ERIFXISQEP7F7OF4

-N KUBE-SVC-I7SKRZYQ7PWYV5X7

-N KUBE-SVC-JD5MR3NA4I4DYORP

-N KUBE-SVC-NPX46M4PTMTKRN6Y

-N KUBE-SVC-TCOU7JCQXEZGVUNU

-A PREROUTING -m comment --comment "kubernetes service portals" -j KUBE-SERVICES

-A PREROUTING -i eni+ -m comment --comment "AWS, outbound connections" -j AWS-CONNMARK-CHAIN-0

-A PREROUTING -m comment --comment "AWS, CONNMARK" -j CONNMARK --restore-mark --nfmask 0x80 --ctmask 0x80

-A OUTPUT -m comment --comment "kubernetes service portals" -j KUBE-SERVICES

-A POSTROUTING -m comment --comment "kubernetes postrouting rules" -j KUBE-POSTROUTING

-A POSTROUTING -m comment --comment "AWS SNAT CHAIN" -j AWS-SNAT-CHAIN-0

-A AWS-CONNMARK-CHAIN-0 -d 192.168.0.0/16 -m comment --comment "AWS CONNMARK CHAIN, VPC CIDR" -j RETURN

-A AWS-CONNMARK-CHAIN-0 -m comment --comment "AWS, CONNMARK" -j CONNMARK --set-xmark 0x80/0x80

-A AWS-SNAT-CHAIN-0 -d 192.168.0.0/16 -m comment --comment "AWS SNAT CHAIN" -j RETURN

-A AWS-SNAT-CHAIN-0 ! -o vlan+ -m comment --comment "AWS, SNAT" -m addrtype ! --dst-type LOCAL -j SNAT --to-source 192.168.3.7 --random-fully

-A KUBE-MARK-MASQ -j MARK --set-xmark 0x4000/0x4000

-A KUBE-POSTROUTING -m mark ! --mark 0x4000/0x4000 -j RETURN

-A KUBE-POSTROUTING -j MARK --set-xmark 0x4000/0x0

-A KUBE-POSTROUTING -m comment --comment "kubernetes service traffic requiring SNAT" -j MASQUERADE --random-fully

-A KUBE-SEP-5UDGFAFYELDECNYA -s 192.168.10.183/32 -m comment --comment "kube-system/kube-dns:dns" -j KUBE-MARK-MASQ

-A KUBE-SEP-5UDGFAFYELDECNYA -p udp -m comment --comment "kube-system/kube-dns:dns" -m udp -j DNAT --to-destination 192.168.10.183:53

-A KUBE-SEP-7ETZDPY2QTUGX22R -s 192.168.10.183/32 -m comment --comment "kube-system/kube-dns:metrics" -j KUBE-MARK-MASQ

-A KUBE-SEP-7ETZDPY2QTUGX22R -p tcp -m comment --comment "kube-system/kube-dns:metrics" -m tcp -j DNAT --to-destination 192.168.10.183:9153

-A KUBE-SEP-BAHASVEYSP77KY2T -s 192.168.6.31/32 -m comment --comment "default/kubernetes:https" -j KUBE-MARK-MASQ

-A KUBE-SEP-BAHASVEYSP77KY2T -p tcp -m comment --comment "default/kubernetes:https" -m tcp -j DNAT --to-destination 192.168.6.31:443

-A KUBE-SEP-BU7V3HIQWPVU7HYM -s 192.168.10.183/32 -m comment --comment "kube-system/kube-dns:dns-tcp" -j KUBE-MARK-MASQ

-A KUBE-SEP-BU7V3HIQWPVU7HYM -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp" -m tcp -j DNAT --to-destination 192.168.10.183:53

-A KUBE-SEP-G5V4KEWYO6B2RBGW -s 192.168.0.98/32 -m comment --comment "default/kubernetes:https" -j KUBE-MARK-MASQ

-A KUBE-SEP-G5V4KEWYO6B2RBGW -p tcp -m comment --comment "default/kubernetes:https" -m tcp -j DNAT --to-destination 192.168.0.98:443

-A KUBE-SEP-PQBIC6FYNOGG3SED -s 192.168.5.76/32 -m comment --comment "kube-system/kube-dns:dns" -j KUBE-MARK-MASQ

-A KUBE-SEP-PQBIC6FYNOGG3SED -p udp -m comment --comment "kube-system/kube-dns:dns" -m udp -j DNAT --to-destination 192.168.5.76:53

-A KUBE-SEP-S5CQZPQZARHXYA6J -s 192.168.5.76/32 -m comment --comment "kube-system/kube-dns:metrics" -j KUBE-MARK-MASQ

-A KUBE-SEP-S5CQZPQZARHXYA6J -p tcp -m comment --comment "kube-system/kube-dns:metrics" -m tcp -j DNAT --to-destination 192.168.5.76:9153

-A KUBE-SEP-XYDDOFWXZXQGZRSQ -s 172.0.32.0/32 -m comment --comment "kube-system/eks-extension-metrics-api:metrics-api" -j KUBE-MARK-MASQ

-A KUBE-SEP-XYDDOFWXZXQGZRSQ -p tcp -m comment --comment "kube-system/eks-extension-metrics-api:metrics-api" -m tcp -j DNAT --to-destination 172.0.32.0:10443

-A KUBE-SEP-ZNIZZUBEGKJH5NYC -s 192.168.5.76/32 -m comment --comment "kube-system/kube-dns:dns-tcp" -j KUBE-MARK-MASQ

-A KUBE-SEP-ZNIZZUBEGKJH5NYC -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp" -m tcp -j DNAT --to-destination 192.168.5.76:53

-A KUBE-SERVICES -d 10.100.0.10/32 -p udp -m comment --comment "kube-system/kube-dns:dns cluster IP" -m udp --dport 53 -j KUBE-SVC-TCOU7JCQXEZGVUNU

-A KUBE-SERVICES -d 10.100.0.10/32 -p tcp -m comment --comment "kube-system/kube-dns:dns-tcp cluster IP" -m tcp --dport 53 -j KUBE-SVC-ERIFXISQEP7F7OF4

-A KUBE-SERVICES -d 10.100.0.10/32 -p tcp -m comment --comment "kube-system/kube-dns:metrics cluster IP" -m tcp --dport 9153 -j KUBE-SVC-JD5MR3NA4I4DYORP

-A KUBE-SERVICES -d 10.100.0.1/32 -p tcp -m comment --comment "default/kubernetes:https cluster IP" -m tcp --dport 443 -j KUBE-SVC-NPX46M4PTMTKRN6Y

-A KUBE-SERVICES -d 10.100.54.197/32 -p tcp -m comment --comment "kube-system/eks-extension-metrics-api:metrics-api cluster IP" -m tcp --dport 443 -j KUBE-SVC-I7SKRZYQ7PWYV5X7

-A KUBE-SERVICES -m comment --comment "kubernetes service nodeports; NOTE: this must be the last rule in this chain" -m addrtype --dst-type LOCAL -j KUBE-NODEPORTS

-A KUBE-SVC-ERIFXISQEP7F7OF4 -m comment --comment "kube-system/kube-dns:dns-tcp -> 192.168.10.183:53" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-BU7V3HIQWPVU7HYM

-A KUBE-SVC-ERIFXISQEP7F7OF4 -m comment --comment "kube-system/kube-dns:dns-tcp -> 192.168.5.76:53" -j KUBE-SEP-ZNIZZUBEGKJH5NYC

-A KUBE-SVC-I7SKRZYQ7PWYV5X7 -m comment --comment "kube-system/eks-extension-metrics-api:metrics-api -> 172.0.32.0:10443" -j KUBE-SEP-XYDDOFWXZXQGZRSQ

-A KUBE-SVC-JD5MR3NA4I4DYORP -m comment --comment "kube-system/kube-dns:metrics -> 192.168.10.183:9153" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-7ETZDPY2QTUGX22R

-A KUBE-SVC-JD5MR3NA4I4DYORP -m comment --comment "kube-system/kube-dns:metrics -> 192.168.5.76:9153" -j KUBE-SEP-S5CQZPQZARHXYA6J

-A KUBE-SVC-NPX46M4PTMTKRN6Y -m comment --comment "default/kubernetes:https -> 192.168.0.98:443" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-G5V4KEWYO6B2RBGW

-A KUBE-SVC-NPX46M4PTMTKRN6Y -m comment --comment "default/kubernetes:https -> 192.168.6.31:443" -j KUBE-SEP-BAHASVEYSP77KY2T

-A KUBE-SVC-TCOU7JCQXEZGVUNU -m comment --comment "kube-system/kube-dns:dns -> 192.168.10.183:53" -m statistic --mode random --probability 0.50000000000 -j KUBE-SEP-5UDGFAFYELDECNYA

-A KUBE-SVC-TCOU7JCQXEZGVUNU -m comment --comment "kube-system/kube-dns:dns -> 192.168.5.76:53" -j KUBE-SEP-PQBIC6FYNOGG3SED
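Two behaviors stand out in the NAT table above. First, AWS-SNAT-CHAIN-0 returns early for destinations inside the VPC CIDR (192.168.0.0/16) and otherwise SNATs to the node's primary IP. Second, each KUBE-SVC chain chooses an endpoint with `statistic --mode random` rules. A simplified sketch of both, assuming the `! -o vlan+` and `addrtype ! --dst-type LOCAL` conditions can be ignored; the function names are hypothetical.

```python
import ipaddress


def egress_source(pod_ip: str, dst_ip: str, node_ip: str,
                  vpc_cidr: str = "192.168.0.0/16", external_snat: bool = False) -> str:
    """Source address the destination sees for pod -> dst traffic (simplified)."""
    # dst_ip is the address after any Service DNAT (PREROUTING runs before POSTROUTING).
    if external_snat:
        # AWS_VPC_K8S_CNI_EXTERNALSNAT=true: the node installs no SNAT rule
        return pod_ip
    if ipaddress.ip_address(dst_ip) in ipaddress.ip_network(vpc_cidr):
        # "-d 192.168.0.0/16 ... -j RETURN": traffic inside the VPC keeps the pod IP
        return pod_ip
    # "-j SNAT --to-source <node primary IP>": everything else leaves as the node
    return node_ip


def kube_proxy_probabilities(n: int) -> list[float]:
    """--probability values on the first n-1 KUBE-SVC rules for n endpoints."""
    return [1 / (n - i) for i in range(n - 1)]


def effective_shares(n: int) -> list[float]:
    """Overall chance each endpoint is picked when the rules are tried in order."""
    shares, remaining = [], 1.0
    for p in kube_proxy_probabilities(n):
        shares.append(remaining * p)
        remaining *= 1 - p
    shares.append(remaining)  # the last rule matches unconditionally
    return shares
```

With two coredns endpoints this gives a single 0.5 rule followed by an unconditional jump, matching the KUBE-SVC chains above; the per-rule probabilities rise (1/n, 1/(n-1), ...) so that every endpoint ends up with an equal 1/n share.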

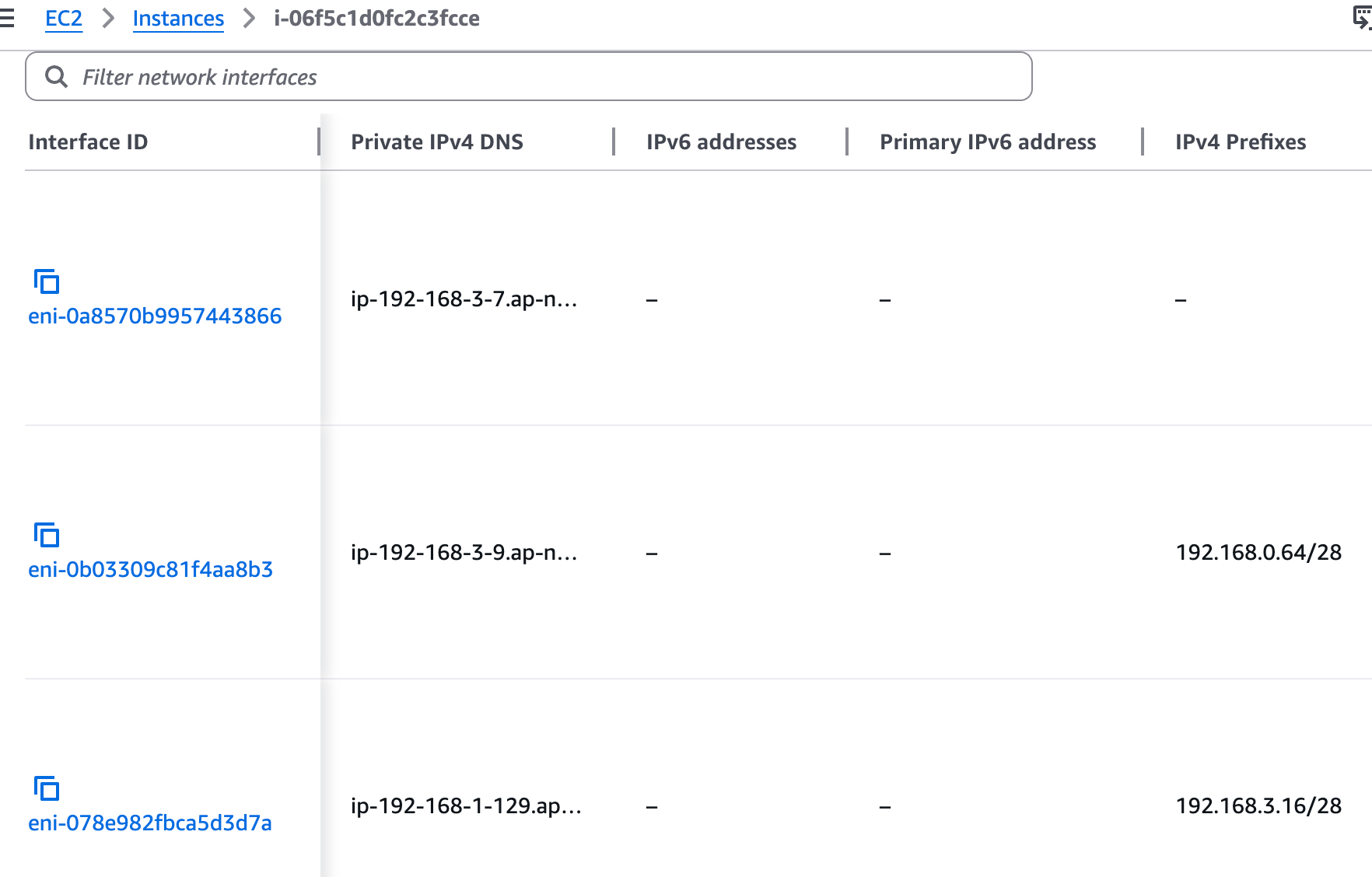

Worker node 1 basic network configuration

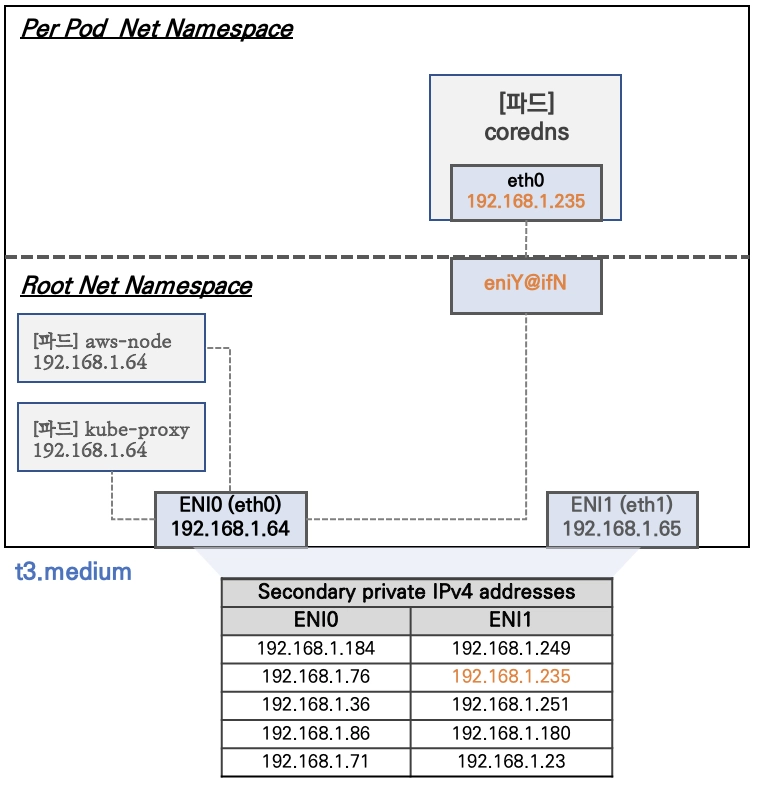

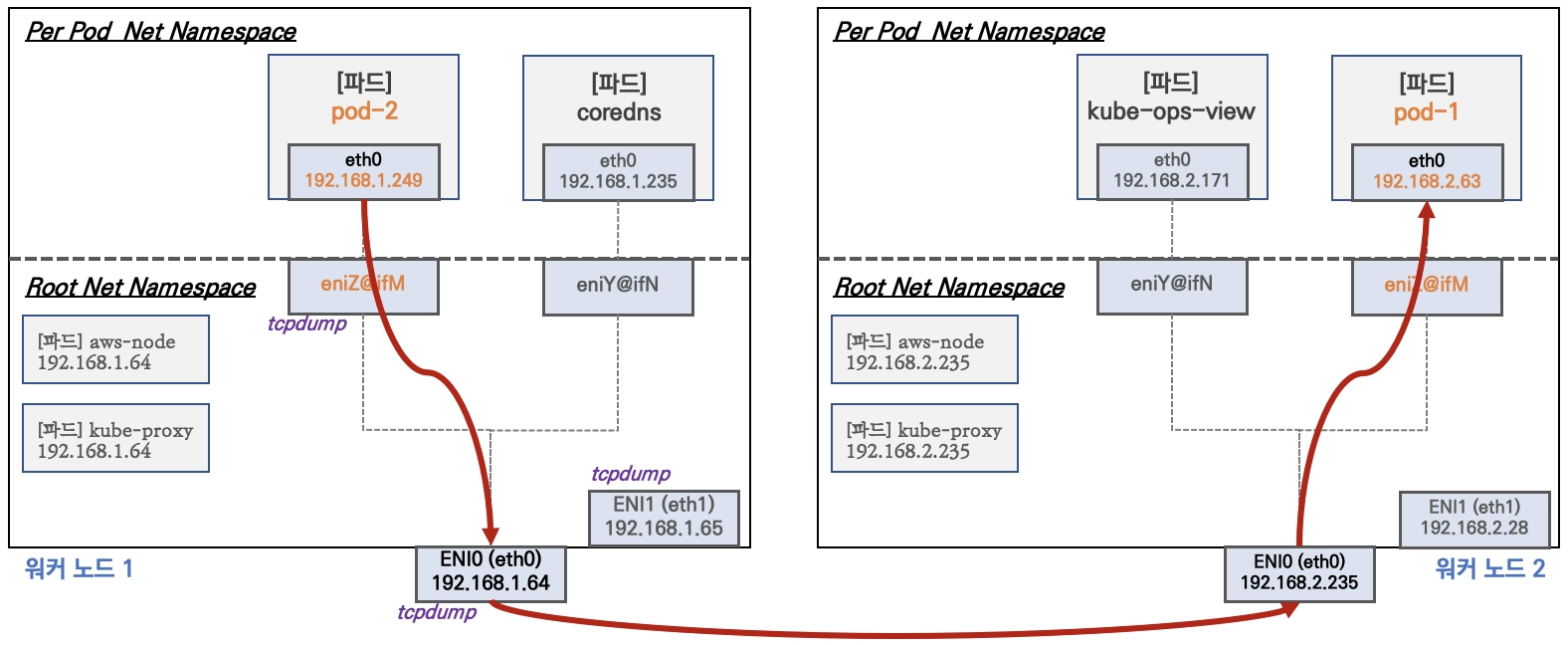

- Network namespaces are divided into the host (root) namespace and per-pod namespaces.

- Certain pods (kube-proxy, aws-node) use the host (root) IP as-is ⇒ the pod's hostNetwork option
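Which pods share the root namespace can be read from `spec.hostNetwork` in `kubectl get pods -A -o json`; such pods also report a `status.podIP` equal to the node's `status.hostIP`. A minimal sketch of the split (the function name is hypothetical):

```python
# Split pods by network namespace using the parsed output of `kubectl get pods -A -o json`.
# spec.hostNetwork=true  -> pod shares the host (root) netns (e.g. aws-node, kube-proxy)
# otherwise              -> pod gets its own per-pod netns and an IP from the VPC CNI


def split_by_netns(pod_list: dict) -> tuple[list[str], list[str]]:
    host_ns, per_pod_ns = [], []
    for pod in pod_list.get("items", []):
        meta = pod["metadata"]
        name = f'{meta["namespace"]}/{meta["name"]}'
        if pod.get("spec", {}).get("hostNetwork", False):
            host_ns.append(name)
        else:
            per_pod_ns.append(name)
    return host_ns, per_pod_ns
```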

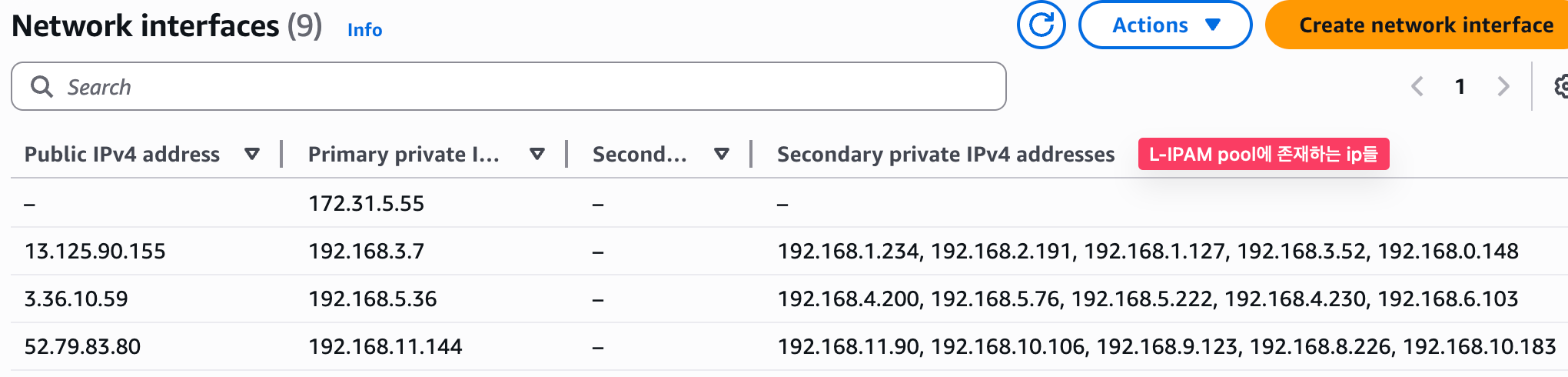

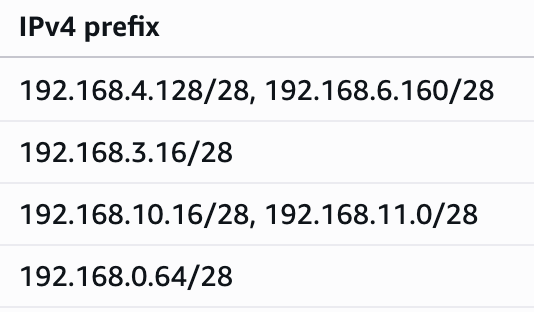

- A t3.medium can have up to 6 IPs per ENI.

- With two ENIs attached (ENI0 and ENI1), each ENI can hold 5 secondary private IPs in addition to its own primary IP.

- The coredns pod is connected by a veth pair: the eniY@ifN interface on the host and eth0 inside the pod.
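These per-ENI limits lead to the commonly cited max-pods formula for secondary-IP mode (prefix delegation off, as in this lab): ENIs × (IPv4 addresses per ENI − 1) + 2, where each ENI's primary IP is not given to pods and the +2 accounts for host-network pods such as aws-node and kube-proxy. A minimal sketch, assuming the t3.medium limits of 3 ENIs and 6 IPv4 addresses per ENI:

```python
# Max pods in secondary-IP mode:
#   max_pods = ENIs * (IPv4 addresses per ENI - 1) + 2
# Assumption: t3.medium limits are 3 ENIs and 6 IPv4 addresses per ENI.

INSTANCE_LIMITS = {"t3.medium": (3, 6)}  # instance type -> (max ENIs, IPv4 per ENI)


def secondary_ips(enis: int, ips_per_eni: int) -> int:
    """Secondary IPs available to pods with `enis` ENIs attached."""
    return enis * (ips_per_eni - 1)


def max_pods(instance_type: str) -> int:
    max_enis, ips_per_eni = INSTANCE_LIMITS[instance_type]
    return secondary_ips(max_enis, ips_per_eni) + 2
```

`secondary_ips(1, 6)` is 5 and `secondary_ips(2, 6)` is 10, which line up with the TotalIPs values ipamd reports for node 1 (one ENI) and for nodes 2 and 3 (two ENIs); `max_pods("t3.medium")` is 17.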

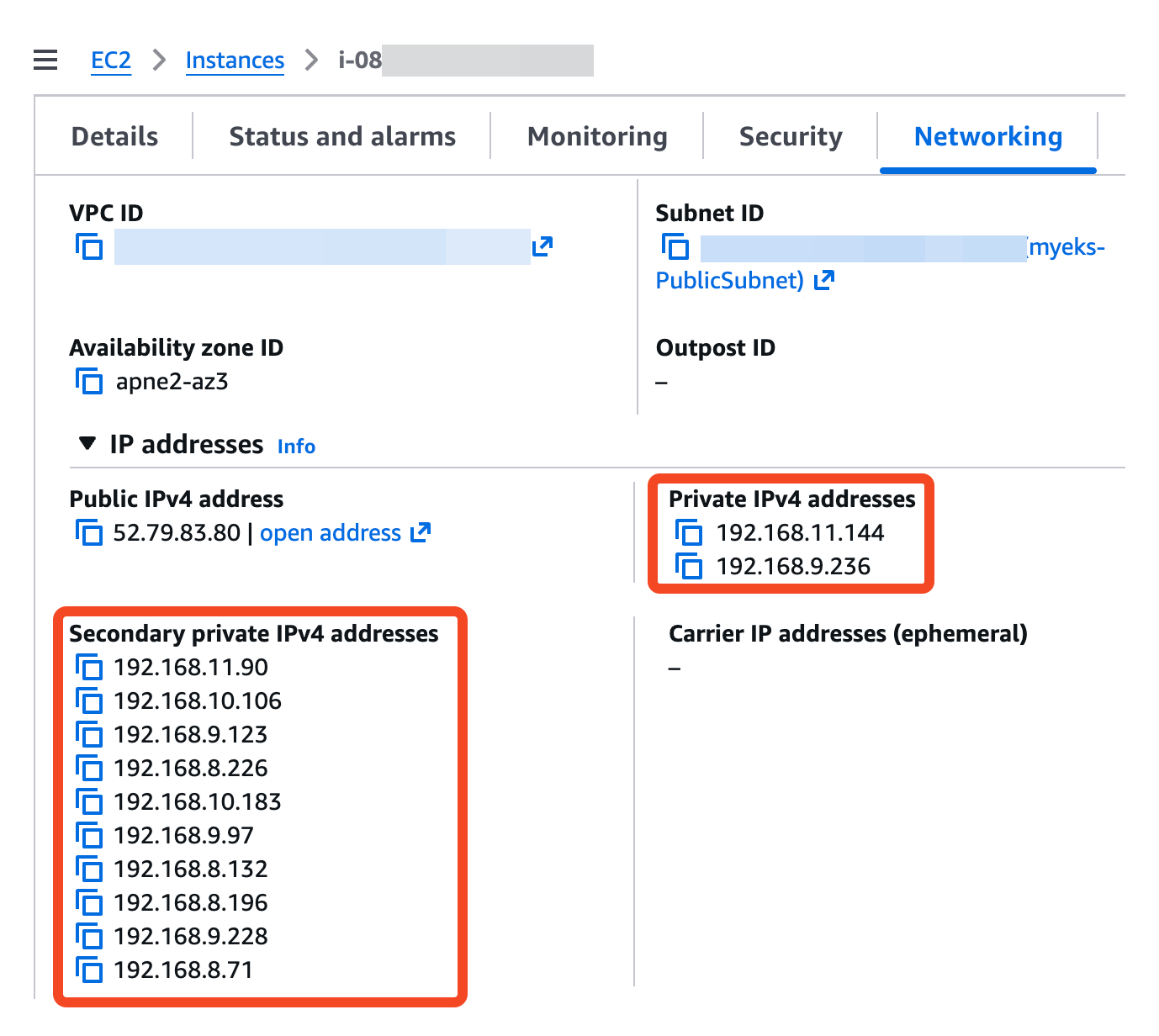

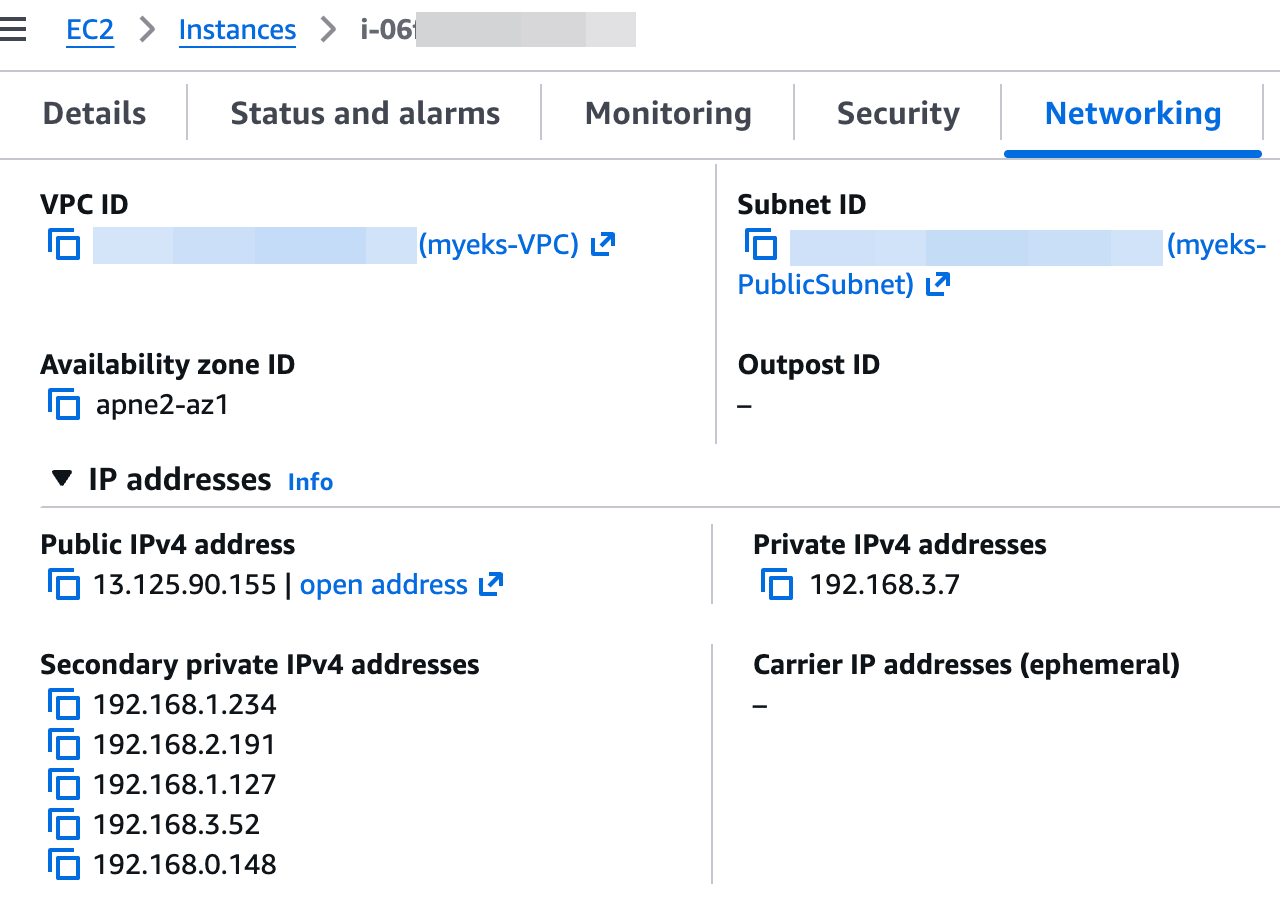

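Check the network information of the worker node 1 instance: private IP and secondary private IPs

Verify that the coredns pods use secondary IPv4 addresses ⇒ check the number of ENIs on the worker node where no coredns pod is scheduled!

# Check coredns pod IPs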

2w git:(main*) $ kubectl get pod -n kube-system -l k8s-app=kube-dns -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-cc56d5f8b-9nvgz 1/1 Running 0 22m 192.168.5.76 ip-192-168-5-36.ap-northeast-2.compute.internal <none> <none>

coredns-cc56d5f8b-x7p4t 1/1 Running 0 22m 192.168.10.183 ip-192-168-11-144.ap-northeast-2.compute.internal <none> <none>

# Check node routing info >> compare with the 'Secondary private IPv4 addresses' in the EC2 network details

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh ec2-user@$i sudo ip -c route; echo; done

>> node 13.125.90.155 <<

default via 192.168.0.1 dev ens5 proto dhcp src 192.168.3.7 metric 512

192.168.0.0/22 dev ens5 proto kernel scope link src 192.168.3.7 metric 512

192.168.0.1 dev ens5 proto dhcp scope link src 192.168.3.7 metric 512

192.168.0.2 dev ens5 proto dhcp scope link src 192.168.3.7 metric 512

>> node 3.36.10.59 <<

default via 192.168.4.1 dev ens5 proto dhcp src 192.168.5.36 metric 512

192.168.0.2 via 192.168.4.1 dev ens5 proto dhcp src 192.168.5.36 metric 512

192.168.4.0/22 dev ens5 proto kernel scope link src 192.168.5.36 metric 512

192.168.4.1 dev ens5 proto dhcp scope link src 192.168.5.36 metric 512

192.168.5.76 dev eni481fe145bd1 scope link

>> node 52.79.83.80 <<

default via 192.168.8.1 dev ens5 proto dhcp src 192.168.11.144 metric 512

192.168.0.2 via 192.168.8.1 dev ens5 proto dhcp src 192.168.11.144 metric 512

192.168.8.0/22 dev ens5 proto kernel scope link src 192.168.11.144 metric 512

192.168.8.1 dev ens5 proto dhcp scope link src 192.168.11.144 metric 512

192.168.10.183 dev eni6422ac782e4 scope link
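The ipamd introspection endpoint queried next (`/v1/enis` on port 61679) shows which secondary IP each pod received and on which ENI. A minimal sketch that condenses that JSON into one row per assigned IP plus a count of warm IPs, assuming the top-level keys ("0" in this lab) group ENIs per network card; the field names are taken from this lab's output rather than from a published schema, and the function names are hypothetical.

```python
# Summarize ipamd's /v1/enis output (curl http://localhost:61679/v1/enis).
# Assumption: field names match this lab's output; top-level keys group ENIs.


def assigned_pod_ips(doc: dict) -> list[tuple]:
    """(ENI ID, device number, IP, namespace/pod) for every IP assigned to a pod."""
    rows = []
    for group in doc.values():
        for eni_id, eni in group.get("ENIs", {}).items():
            for cidr in eni.get("AvailableIPv4Cidrs", {}).values():
                for ip, addr in (cidr.get("IPAddresses") or {}).items():
                    meta = addr.get("IPAMMetadata", {})
                    pod = f'{meta.get("k8sPodNamespace")}/{meta.get("k8sPodName")}'
                    rows.append((eni_id, eni.get("DeviceNumber"), ip, pod))
    return rows


def free_ips(doc: dict) -> dict[str, int]:
    """Warm (unassigned) IPs per top-level group: TotalIPs - AssignedIPs."""
    return {key: g.get("TotalIPs", 0) - g.get("AssignedIPs", 0) for key, g in doc.items()}
```

On node 2 this would report coredns-cc56d5f8b-9nvgz on 192.168.5.76 from the primary ENI (device 0), with 9 warm IPs remaining.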

# IpamD debugging commands

# https://github.com/aws/amazon-vpc-cni-k8s/blob/master/docs/troubleshooting.md

2w git:(main*) $ for i in $N1 $N2 $N3; do echo ">> node $i <<"; ssh ec2-user@$i curl -s http://localhost:61679/v1/enis | jq; echo; done

>> node 13.125.90.155 <<

{

"0": {

"TotalIPs": 5,

"AssignedIPs": 0,

"ENIs": {

"eni-0a8570b9957443866": {

"ID": "eni-0a8570b9957443866",

"IsPrimary": true,

"IsTrunk": false,

"IsEFA": false,

"DeviceNumber": 0,

"AvailableIPv4Cidrs": {

"192.168.0.148/32": {

"Cidr": {

"IP": "192.168.0.148",

"Mask": "/////w=="

},

"IPAddresses": {},

"IsPrefix": false,

"AddressFamily": ""

},

"192.168.1.127/32": {

"Cidr": {

"IP": "192.168.1.127",

"Mask": "/////w=="

},

"IPAddresses": {},

"IsPrefix": false,

"AddressFamily": ""

},

"192.168.1.234/32": {

"Cidr": {

"IP": "192.168.1.234",

"Mask": "/////w=="

},

"IPAddresses": {},

"IsPrefix": false,

"AddressFamily": ""

},

"192.168.2.191/32": {

"Cidr": {

"IP": "192.168.2.191",

"Mask": "/////w=="

},

"IPAddresses": {},

"IsPrefix": false,

"AddressFamily": ""

},

"192.168.3.52/32": {

"Cidr": {

"IP": "192.168.3.52",

"Mask": "/////w=="

},

"IPAddresses": {},

"IsPrefix": false,

"AddressFamily": ""

}

},

"IPv6Cidrs": {},

"RouteTableID": 254

}

}

}

}

>> node 3.36.10.59 <<

{
  "0": {
    "TotalIPs": 10,
    "AssignedIPs": 1,
    "ENIs": {
      "eni-04d4a0d9fd216b46b": {
        "ID": "eni-04d4a0d9fd216b46b",
        "IsPrimary": true,
        "IsTrunk": false,
        "IsEFA": false,
        "DeviceNumber": 0,
        "AvailableIPv4Cidrs": {
          "192.168.4.200/32": {
            "Cidr": {
              "IP": "192.168.4.200",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.4.230/32": {
            "Cidr": {
              "IP": "192.168.4.230",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.5.222/32": {
            "Cidr": {
              "IP": "192.168.5.222",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.5.76/32": {
            "Cidr": {
              "IP": "192.168.5.76",
              "Mask": "/////w=="
            },
            "IPAddresses": {
              "192.168.5.76": {
                "Address": "192.168.5.76",
                "IPAMKey": {
                  "networkName": "aws-cni",
                  "containerID": "9057ac941292277cf8bd3dc28f6c58bb90eced5232b75132d27679e64eac99dc",
                  "ifName": "eth0"
                },
                "IPAMMetadata": {
                  "k8sPodNamespace": "kube-system",
                  "k8sPodName": "coredns-cc56d5f8b-9nvgz",
                  "interfacesCount": 1
                },
                "AssignedTime": "2026-03-24T12:42:24.092004241Z",
                "UnassignedTime": "0001-01-01T00:00:00Z"
              }
            },
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.6.103/32": {
            "Cidr": {
              "IP": "192.168.6.103",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          }
        },
        "IPv6Cidrs": {},
        "RouteTableID": 254
      },
      "eni-0dc14ba34e34f6c18": {
        "ID": "eni-0dc14ba34e34f6c18",
        "IsPrimary": false,
        "IsTrunk": false,
        "IsEFA": false,
        "DeviceNumber": 1,
        "AvailableIPv4Cidrs": {
          "192.168.4.227/32": {
            "Cidr": {
              "IP": "192.168.4.227",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.4.49/32": {
            "Cidr": {
              "IP": "192.168.4.49",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.5.192/32": {
            "Cidr": {
              "IP": "192.168.5.192",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.7.144/32": {
            "Cidr": {
              "IP": "192.168.7.144",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.7.146/32": {
            "Cidr": {
              "IP": "192.168.7.146",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          }
        },
        "IPv6Cidrs": {},
        "RouteTableID": 2
      }
    }
  }
}

>> node 52.79.83.80 <<

{
  "0": {
    "TotalIPs": 10,
    "AssignedIPs": 1,
    "ENIs": {
      "eni-086e74c1e91f43cb2": {
        "ID": "eni-086e74c1e91f43cb2",
        "IsPrimary": false,
        "IsTrunk": false,
        "IsEFA": false,
        "DeviceNumber": 1,
        "AvailableIPv4Cidrs": {
          "192.168.8.132/32": {
            "Cidr": {
              "IP": "192.168.8.132",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.8.196/32": {
            "Cidr": {
              "IP": "192.168.8.196",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.8.71/32": {
            "Cidr": {
              "IP": "192.168.8.71",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.9.228/32": {
            "Cidr": {
              "IP": "192.168.9.228",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.9.97/32": {
            "Cidr": {
              "IP": "192.168.9.97",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          }
        },
        "IPv6Cidrs": {},
        "RouteTableID": 2
      },
      "eni-0eac3123a10629bc0": {
        "ID": "eni-0eac3123a10629bc0",
        "IsPrimary": true,
        "IsTrunk": false,
        "IsEFA": false,
        "DeviceNumber": 0,
        "AvailableIPv4Cidrs": {
          "192.168.10.106/32": {
            "Cidr": {
              "IP": "192.168.10.106",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.10.183/32": {
            "Cidr": {
              "IP": "192.168.10.183",
              "Mask": "/////w=="
            },
            "IPAddresses": {
              "192.168.10.183": {
                "Address": "192.168.10.183",
                "IPAMKey": {
                  "networkName": "aws-cni",
                  "containerID": "f4ed3b515de27c5ae54519c12dbc5c7eef96985c43951b5550e9d8d4dbe6a7a2",
                  "ifName": "eth0"
                },
                "IPAMMetadata": {
                  "k8sPodNamespace": "kube-system",
                  "k8sPodName": "coredns-cc56d5f8b-x7p4t",
                  "interfacesCount": 1
                },
                "AssignedTime": "2026-03-24T12:42:24.119716014Z",
                "UnassignedTime": "0001-01-01T00:00:00Z"
              }
            },
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.11.90/32": {
            "Cidr": {
              "IP": "192.168.11.90",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.8.226/32": {
            "Cidr": {
              "IP": "192.168.8.226",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          },
          "192.168.9.123/32": {
            "Cidr": {
              "IP": "192.168.9.123",
              "Mask": "/////w=="
            },
            "IPAddresses": {},
            "IsPrefix": false,
            "AddressFamily": ""
          }
        },
        "IPv6Cidrs": {},
        "RouteTableID": 254
      }
    }
  }
}
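Two fields in the ipamd output above are easy to misread. The `Mask` value `/////w==` is a base64-encoded 4-byte netmask (likely because ipamd is written in Go, and `encoding/json` serializes a byte slice such as `net.IPMask` as base64); it decodes to `ff ff ff ff`, i.e. a /32, which matches the `/32` keys. Also, the primary ENIs (`DeviceNumber` 0) report `RouteTableID` 254, the Linux main table, while the secondary ENIs (`DeviceNumber` 1) report table 2. The POSIX shell sketch below reproduces both readings. The helper names `mask_to_prefix` and `route_table_for_device` are hypothetical, and the "DeviceNumber + 1" rule is an assumption inferred from this output, not quoted from VPC CNI documentation.

```shell
#!/bin/sh
# Hypothetical helpers for reading the ipamd ENI dump above (POSIX sh + coreutils).

# Decode a base64-encoded netmask such as "/////w==" into a prefix length.
# Assumes a contiguous netmask; uses base64 -d and od from coreutils.
mask_to_prefix() {
  bits=0
  # od prints each decoded byte as an unsigned decimal (255 255 255 255 for /32)
  for byte in $(printf '%s' "$1" | base64 -d | od -An -tu1); do
    # Count the set bits in this byte
    while [ "$byte" -gt 0 ]; do
      bits=$((bits + byte % 2))
      byte=$((byte / 2))
    done
  done
  echo "$bits"
}

# Route table ID matching the pattern in the output above (an assumption, not
# an official rule): DeviceNumber 0 (primary ENI) -> 254, the main table;
# any other DeviceNumber -> DeviceNumber + 1.
route_table_for_device() {
  if [ "$1" -eq 0 ]; then
    echo 254
  else
    echo $(($1 + 1))
  fi
}

mask_to_prefix '/////w=='     # the /32 mask used by every pool entry above
route_table_for_device 1      # secondary ENIs such as eni-0dc14ba34e34f6c18
```

On a worker node, `ip rule show` and `ip route show table 2` let you inspect the policy-routing rules and routes that actually use table 2.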

Create the Network-Multitool Deployment - https://github.com/Praqma/Network-MultiTool

# [Terminals 1-3] Monitor the nodes (one SSH session per node)

ssh ec2-user@$N1

watch -d "ip link | grep -E 'ens|eni'; echo; echo '[ROUTE TABLE]'; route -n | grep eni"

ssh ec2-user@$N2

watch -d "ip link | grep -E 'ens|eni'; echo; echo '[ROUTE TABLE]'; route -n | grep eni"

ssh ec2-user@$N3

watch -d "ip link | grep -E 'ens|eni'; echo; echo '[ROUTE TABLE]'; route -n | grep eni"
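In the `watch` output, the `eni*` links are the host-side ends of the veth pairs the VPC CNI creates for pods, and each pod IP shows up in `route -n` as a /32 host route through its `eni*` interface. As a slightly more structured alternative to `grep eni`, the sketch below uses awk to print only the pod IP and interface name. The function name `pod_routes` is hypothetical, and it assumes the standard eight-column `route -n` layout (Destination, Gateway, Genmask, Flags, Metric, Ref, Use, Iface).

```shell
#!/bin/sh
# Print "pod-IP interface" pairs for the per-pod host routes (eni* interfaces)
# found in `route -n` output read on stdin.
pod_routes() {
  # route -n columns: Destination Gateway Genmask Flags Metric Ref Use Iface
  # Header lines never have an eni* 8th field, so they are skipped automatically.
  awk '$8 ~ /^eni/ { print $1, $8 }'
}

# Usage on a worker node:
#   route -n | pod_routes
```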

# Create the Network-Multitool Deployment

cat <<EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: netshoot-pod
spec:
  replicas: 3
  selector:
    matchLabels:
      app: netshoot-pod
  template:
    metadata:
      labels:
        app: netshoot-pod
    spec:
      containers:
      - name: netshoot-pod
        image: praqma/network-multitool
        ports:
        - containerPort: 80
        - containerPort: 443
        env:
        - name: HTTP_PORT
          value: "80"
        - name: HTTPS_PORT
          value: "443"
      terminationGracePeriodSeconds: 0
EOF
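The Deployment sets no pod anti-affinity or topology spread constraint, so the three replicas usually, but not necessarily, land on three different nodes. One way to check the placement is to list each pod's node with `kubectl get pod -l app=netshoot-pod -o custom-columns=NAME:.metadata.name,NODE:.spec.nodeName --no-headers` and count pods per node. A minimal sketch of the counting step follows; `pods_per_node` is a hypothetical helper that reads `NAME NODE` lines on stdin, and the pod and node names in the example are placeholders.

```shell
#!/bin/sh
# Count pods per node from "NAME NODE" lines read on stdin.
# Intended input (requires cluster access):
#   kubectl get pod -l app=netshoot-pod \
#     -o custom-columns=NAME:.metadata.name,NODE:.spec.nodeName --no-headers
pods_per_node() {
  # Tally column 2 (node name), then sort the "node count" lines by node name
  awk 'NF >= 2 { count[$2]++ } END { for (n in count) print n, count[n] }' | sort
}

# Example with placeholder names; a real run would pipe the kubectl output above
printf '%s\n' 'netshoot-pod-a node-a' 'netshoot-pod-b node-b' 'netshoot-pod-c node-c' | pods_per_node
```

Three lines with a count of 1 each means one replica per node.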

# Store the pod names in variables

2w git:(main*) $ PODNAME1=$(kubectl get pod -l app=netshoot-pod -o jsonpath='{.items[0].metadata.name}')

2w git:(main*) $ PODNAME2=$(kubectl get pod -l app=netshoot-pod -o jsonpath='{.items[1].metadata.name}')

2w git:(main*) $ PODNAME3=$(kubectl get pod -l app=netshoot-pod -o jsonpath='{.items[2].metadata.name}')

2w git:(main*) $ echo $PODNAME1 $PODNAME2 $PODNAME3

netshoot-pod-64fbf7fb5-9scs4 netshoot-pod-64fbf7fb5-kz8ff netshoot-pod-64fbf7fb5-zgqsp

# Check the pods

2w git:(main*) $ kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

netshoot-pod-64fbf7fb5-9scs4 1/1 Running 0 2m11s 192.168.1.234 ip-192-168-3-7.ap-northeast-2.compute.internal <none> <none>