Deploying and Verifying Amazon EKS

- Preparing the local PC for the lab (macOS)

1) Install the AWS CLI and configure IAM (principal) credentials

# Install aws cli

brew install awscli

aws --version

# Configure IAM (principal) credentials

aws configure

AWS Access Key ID : <enter access key>

AWS Secret Access Key : <enter secret key>

Default region name : ap-northeast-2

# Verify

aws sts get-caller-identity

=============================================

2) (Reference) Create an EC2 key pair with the AWS CLI

# Create a basic key pair (writes the .pem file)

aws ec2 create-key-pair \

--key-name my-keypair \

--query 'KeyMaterial' \

--output text > my-keypair.pem

# Set file permissions

chmod 400 my-keypair.pem

# Verify

aws ec2 describe-key-pairs --key-names my-keypair
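One pitfall with the redirect above: the shell creates (or truncates) `my-keypair.pem` before `aws ec2 create-key-pair` runs, so a failed call, for example one rejected because the key name already exists, can leave an empty file behind. The following is a minimal sketch of a sanity check. The function name `looks_like_private_key` is hypothetical, and it only inspects the first line of the file rather than validating the key itself.

```shell
# Return 0 if the file is non-empty and its first line is a PEM private-key header.
# Hypothetical helper for illustration; it does not verify the key contents.
looks_like_private_key() {
  [ -s "$1" ] || return 1
  head -n 1 "$1" | grep -Eq '^-----BEGIN ([A-Z0-9]+ )*PRIVATE KEY-----$'
}

# Check the file written by the create-key-pair step.
if looks_like_private_key my-keypair.pem; then
  echo "my-keypair.pem looks like a private key"
else
  echo "my-keypair.pem is missing, empty, or not a PEM private key" >&2
fi
```

If the check fails, it is probably safer to delete the file and rerun the create step than to continue with `chmod`.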

=============================================

3) Install the essential k8s management tools

# Install kubectl

brew install kubernetes-cli

kubectl version --client=true

# Install Helm

brew install helm

helm version

=============================================

4) (Recommended) Install useful k8s management tools

# Install krew

brew install krew

# Install k9s

brew install k9s

# Install kube-ps1

brew install kube-ps1

# Install kubectx

brew install kubectx

# Colorize kubectl output

brew install kubecolor

echo "alias k=kubectl" >> ~/.zshrc

echo "alias kubectl=kubecolor" >> ~/.zshrc

echo "compdef kubecolor=kubectl" >> ~/.zshrc

# krew path: add the line below to ~/.zshrc

export PATH="${KREW_ROOT:-$HOME/.krew}/bin:$PATH"
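Running the `echo ... >> ~/.zshrc` lines above more than once appends duplicate entries. Below is a minimal sketch of an idempotent alternative. The helper name `append_once` is hypothetical and not part of any tool installed here.

```shell
# Append a line to a file only if that exact line is not already present.
# Hypothetical helper for illustration.
append_once() {
  file="$1"
  line="$2"
  touch "$file"
  # -x: match the whole line, -F: treat the text literally, -q: no output
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}
```

For example, `append_once ~/.zshrc 'alias k=kubectl'` adds the alias on the first run and does nothing afterwards. Single quotes keep lines containing `$`, such as the krew `PATH` export, from being expanded before they are written.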

=============================================

Install Terraform: tfenv (recommended) - [link](https://learn.hashicorp.com/tutorials/terraform/install-cli?in=terraform/aws-get-started)

# Install tfenv

brew install tfenv

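# List installable versions

tfenv list-remote

# Install a specific Terraform version

tfenv install 1.14.6

# Select that Terraform version for use

tfenv use 1.14.6

# List the versions installed with tfenv

tfenv list

# Check the Terraform version

terraform version

# Autocomplete

terraform -install-autocomplete

## Note: the lines below are added to .zshrc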

cat ~/.zshrc

autoload -U +X bashcompinit && bashcompinit

complete -o nospace -C /usr/local/bin/terraform terraform

Checking the actual applied values

1) Install the AWS CLI and configure IAM (principal) credentials

# Install aws cli

brew install awscli

aws --version

aws-cli/2.13.32 Python/3.11.6 Darwin/22.2.0 exe/x86_64 prompt/off

# Configure IAM (principal) credentials

aws configure

AWS Access Key ID : <enter access key>

AWS Secret Access Key : <enter secret key>

Default region name : ap-northeast-2

# Verify

aws sts get-caller-identity

{

"UserId": "AIxxxxxxxxxxx",

"Account": "123123123123",

"Arn": "arn:aws:iam::123123123123:user/testuser"

}

=============================================

2) (Reference) Create an EC2 key pair with the AWS CLI

# Create a basic key pair (writes the .pem file)

aws ec2 create-key-pair \

--key-name my-keypair \

--query 'KeyMaterial' \

--output text > my-keypair.pem

# Set file permissions

chmod 400 my-keypair.pem

# Verify

aws ec2 describe-key-pairs --key-names my-keypair

{

"KeyPairs": [

{

"KeyPairId": "key-xxxxxxxxxx",

"KeyFingerprint": "3c:xxxxxxxxxx",

"KeyName": "test-key",

"KeyType": "rsa",

"Tags": [

{

"Key": "Name",

"Value": "test-key"

}

],

"CreateTime": "xxxxxxxxxx"

}

]

}

=============================================

3) Install the essential k8s management tools

# Install kubectl

brew install kubernetes-cli

kubectl version --client=true

Client Version: v1.34.1

Kustomize Version: v5.7.1

Kubecolor Version: v0.5.2

# Install Helm

brew install helm

helm version

version.BuildInfo{Version:"v3.19.0", GitCommit:"3d8990f0836691f0229297773f3524598f46bda6", GitTreeState:"clean", GoVersion:"go1.25.1"}

=============================================

4) (Recommended) Install useful k8s management tools

# Install krew

brew install krew

# Install k9s

brew install k9s

# Install kube-ps1

brew install kube-ps1

# Install kubectx

brew install kubectx

# Colorize kubectl output

brew install kubecolor

echo "alias k=kubectl" >> ~/.zshrc

echo "alias kubectl=kubecolor" >> ~/.zshrc

echo "compdef kubecolor=kubectl" >> ~/.zshrc

# krew path: add the line below to ~/.zshrc

export PATH="${KREW_ROOT:-$HOME/.krew}/bin:$PATH"

=============================================

Install Terraform: tfenv (recommended) - [link](https://learn.hashicorp.com/tutorials/terraform/install-cli?in=terraform/aws-get-started)

# Install tfenv

brew install tfenv

# List installable versions

tfenv list-remote

# Install a specific Terraform version

tfenv install 1.14.6

# Select that Terraform version for use

tfenv use 1.14.6

# List the versions installed with tfenv

tfenv list

* 1.14.6 (set by /opt/homebrew/Cellar/tfenv/3.0.0/version)

1.11.0

# Check the Terraform version

terraform version

Terraform v1.14.6

on darwin_arm64

# Autocomplete

terraform -install-autocomplete

## Note: the lines below are added to .zshrc

cat ~/.zshrc

autoload -U +X bashcompinit && bashcompinit

complete -o nospace -C /usr/local/bin/terraform terraform
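Before moving on to the lab code, it can help to confirm that the tools installed in the steps above are actually on `PATH`. The following is a minimal sketch. The function name `missing_tools` is hypothetical, and the tool list covers only the required tools from this section.

```shell
# Print the name of every listed command that is not found on PATH.
# Hypothetical helper for illustration.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Required tools from the steps above; prints nothing when all are present.
missing_tools aws kubectl helm terraform
```

Any name printed here points back to the corresponding `brew install` (or `tfenv install`) step.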

- Lab code - GitHub

# Download the code

$ git clone https://github.com/gasida/aews.git

Cloning into 'aews'...

remote: Enumerating objects: 15, done.

remote: Counting objects: 100% (15/15), done.

remote: Compressing objects: 100% (13/13), done.

remote: Total 15 (delta 1), reused 13 (delta 1), pack-reused 0 (from 0)

Receiving objects: 100% (15/15), 7.61 KiB | 3.80 MiB/s, done.

Resolving deltas: 100% (1/1), done.

s-aews $ cd aews

s-aews $ tree aews

aews
├── 1w
│   ├── eks.tf
│   ├── var.tf
│   └── vpc.tf
└── eks-private
    ├── ec2.tf
    ├── main.tf
    ├── outputs.tf
    └── versions.tf

# Move to the working directory

$ cd 1w

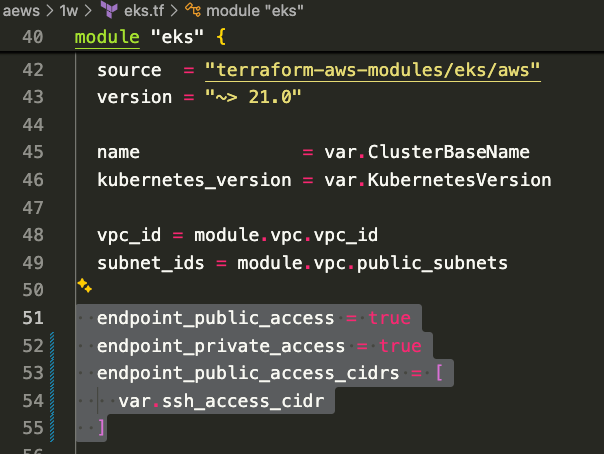

- Deploy the VPC and EKS (takes about 12 minutes) → configure k8s credentials

# Set variables

v:Documents:s-aews $ aws ec2 describe-key-pairs --query "KeyPairs[].KeyName" --output text

# Set the variable manually

# Original assignment: export TF_VAR_KeyName=$(aws ec2 describe-key-pairs --query "KeyPairs[].KeyName" --output text)

v:Documents:s-aews $ export TF_VAR_KeyName=test-key

v:Documents:s-aews $ export TF_VAR_ssh_access_cidr=$(curl -s ipinfo.io/ip)/32

v:Documents:s-aews $ echo $TF_VAR_KeyName $TF_VAR_ssh_access_cidr

test-key x.x.x.x/32
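If `curl -s ipinfo.io/ip` fails or returns something other than an address, `TF_VAR_ssh_access_cidr` ends up malformed (for example just `/32`), and the problem only surfaces later during `terraform plan` or `apply`. The following is a minimal sketch of a pre-check. The function name `is_ipv4_host_cidr` is hypothetical, and it accepts only the `a.b.c.d/32` form used in this lab.

```shell
# Return 0 only if the argument is an IPv4 address followed by /32,
# with each octet in the range 0-255. Hypothetical helper for illustration.
is_ipv4_host_cidr() {
  octet='(25[0-5]|2[0-4][0-9]|1[0-9][0-9]|[1-9]?[0-9])'
  printf '%s\n' "$1" | grep -Eq "^(${octet}\.){3}${octet}/32\$"
}

# Check the variable exported above before running terraform.
if is_ipv4_host_cidr "${TF_VAR_ssh_access_cidr:-}"; then
  echo "TF_VAR_ssh_access_cidr looks valid"
else
  echo "TF_VAR_ssh_access_cidr is missing or malformed" >&2
fi
```

A wider allow-list (for example an office `/24`) would need a looser pattern. The strict `/32` check here just mirrors how the variable is built in this walkthrough.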

# Deploy: takes about 12 minutes

v:Documents:s-aews:aews:1w $ terraform init

Initializing the backend...

Initializing modules...

Downloading registry.terraform.io/terraform-aws-modules/eks/aws 21.15.1 for eks...

- eks in .terraform/modules/eks

- eks.eks_managed_node_group in .terraform/modules/eks/modules/eks-managed-node-group

- eks.eks_managed_node_group.user_data in .terraform/modules/eks/modules/_user_data

- eks.fargate_profile in .terraform/modules/eks/modules/fargate-profile

Downloading registry.terraform.io/terraform-aws-modules/kms/aws 4.0.0 for eks.kms...

- eks.kms in .terraform/modules/eks.kms

- eks.self_managed_node_group in .terraform/modules/eks/modules/self-managed-node-group

- eks.self_managed_node_group.user_data in .terraform/modules/eks/modules/_user_data

Downloading registry.terraform.io/terraform-aws-modules/vpc/aws 6.6.0 for vpc...

- vpc in .terraform/modules/vpc

Initializing provider plugins...

- Finding hashicorp/aws versions matching ">= 6.0.0, >= 6.28.0"...

- Finding hashicorp/tls versions matching ">= 4.0.0"...

- Finding hashicorp/time versions matching ">= 0.9.0"...

- Finding hashicorp/cloudinit versions matching ">= 2.0.0"...

- Finding hashicorp/null versions matching ">= 3.0.0"...

- Installing hashicorp/aws v6.36.0...

- Installed hashicorp/aws v6.36.0 (signed by HashiCorp)

- Installing hashicorp/tls v4.2.1...

- Installed hashicorp/tls v4.2.1 (signed by HashiCorp)

- Installing hashicorp/time v0.13.1...

- Installed hashicorp/time v0.13.1 (signed by HashiCorp)

- Installing hashicorp/cloudinit v2.3.7...

- Installed hashicorp/cloudinit v2.3.7 (signed by HashiCorp)

- Installing hashicorp/null v3.2.4...

- Installed hashicorp/null v3.2.4 (signed by HashiCorp)

Terraform has created a lock file .terraform.lock.hcl to record the provider

selections it made above. Include this file in your version control repository

so that Terraform can guarantee to make the same selections by default when

you run "terraform init" in the future.

Terraform has been successfully initialized!

You may now begin working with Terraform. Try running "terraform plan" to see

any changes that are required for your infrastructure. All Terraform commands

should now work.

If you ever set or change modules or backend configuration for Terraform,

rerun this command to reinitialize your working directory. If you forget, other

commands will detect it and remind you to do so if necessary.

v:Documents:s-aews:aews:1w $ terraform plan

(earlier output omitted)

+ resource "aws_eks_addon" "before_compute" {

+ addon_name = "vpc-cni"

+ addon_version = (known after apply)

+ arn = (known after apply)

+ cluster_name = (known after apply)

+ configuration_values = (known after apply)

+ created_at = (known after apply)

+ id = (known after apply)

+ modified_at = (known after apply)

+ preserve = true

+ region = "ap-northeast-2"

+ resolve_conflicts_on_create = "NONE"

+ resolve_conflicts_on_update = "OVERWRITE"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ timeouts {}

}

# module.eks.aws_eks_addon.this["coredns"] will be created

+ resource "aws_eks_addon" "this" {

+ addon_name = "coredns"

+ addon_version = (known after apply)

+ arn = (known after apply)

+ cluster_name = (known after apply)

+ configuration_values = (known after apply)

+ created_at = (known after apply)

+ id = (known after apply)

+ modified_at = (known after apply)

+ preserve = true

+ region = "ap-northeast-2"

+ resolve_conflicts_on_create = "NONE"

+ resolve_conflicts_on_update = "OVERWRITE"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ timeouts {}

}

# module.eks.aws_eks_addon.this["kube-proxy"] will be created

+ resource "aws_eks_addon" "this" {

+ addon_name = "kube-proxy"

+ addon_version = (known after apply)

+ arn = (known after apply)

+ cluster_name = (known after apply)

+ configuration_values = (known after apply)

+ created_at = (known after apply)

+ id = (known after apply)

+ modified_at = (known after apply)

+ preserve = true

+ region = "ap-northeast-2"

+ resolve_conflicts_on_create = "NONE"

+ resolve_conflicts_on_update = "OVERWRITE"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ timeouts {}

}

# module.eks.aws_eks_cluster.this[0] will be created

+ resource "aws_eks_cluster" "this" {

+ arn = (known after apply)

+ bootstrap_self_managed_addons = false

+ certificate_authority = (known after apply)

+ cluster_id = (known after apply)

+ created_at = (known after apply)

+ deletion_protection = (known after apply)

+ endpoint = (known after apply)

+ id = (known after apply)

+ identity = (known after apply)

+ name = "myeks"

+ platform_version = (known after apply)

+ region = "ap-northeast-2"

+ role_arn = (known after apply)

+ status = (known after apply)

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ version = "1.34"

+ access_config {

+ authentication_mode = "API_AND_CONFIG_MAP"

+ bootstrap_cluster_creator_admin_permissions = false

}

+ compute_config (known after apply)

+ control_plane_scaling_config (known after apply)

+ encryption_config {

+ resources = [

+ "secrets",

]

+ provider {

+ key_arn = (known after apply)

}

}

+ kubernetes_network_config {

+ ip_family = "ipv4"

+ service_ipv4_cidr = (known after apply)

+ service_ipv6_cidr = (known after apply)

+ elastic_load_balancing (known after apply)

}

+ storage_config (known after apply)

+ upgrade_policy (known after apply)

+ vpc_config {

+ cluster_security_group_id = (known after apply)

+ endpoint_private_access = false

+ endpoint_public_access = true

+ public_access_cidrs = [

+ "0.0.0.0/0",

]

+ security_group_ids = (known after apply)

+ subnet_ids = (known after apply)

+ vpc_id = (known after apply)

}

}

# module.eks.aws_iam_openid_connect_provider.oidc_provider[0] will be created

+ resource "aws_iam_openid_connect_provider" "oidc_provider" {

+ arn = (known after apply)

+ client_id_list = [

+ "sts.amazonaws.com",

]

+ id = (known after apply)

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-eks-irsa"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-eks-irsa"

+ "Terraform" = "true"

}

+ thumbprint_list = (known after apply)

+ url = (known after apply)

}

# module.eks.aws_iam_policy.cluster_encryption[0] will be created

+ resource "aws_iam_policy" "cluster_encryption" {

+ arn = (known after apply)

+ attachment_count = (known after apply)

+ description = "Cluster encryption policy to allow cluster role to utilize CMK provided"

+ id = (known after apply)

+ name = (known after apply)

+ name_prefix = "myeks-cluster-ClusterEncryption"

+ path = "/"

+ policy = (known after apply)

+ policy_id = (known after apply)

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

}

# module.eks.aws_iam_role.this[0] will be created

+ resource "aws_iam_role" "this" {

+ arn = (known after apply)

+ assume_role_policy = jsonencode(

{

+ Statement = [

+ {

+ Action = [

+ "sts:TagSession",

+ "sts:AssumeRole",

]

+ Effect = "Allow"

+ Principal = {

+ Service = "eks.amazonaws.com"

}

+ Sid = "EKSClusterAssumeRole"

},

]

+ Version = "2012-10-17"

}

)

+ create_date = (known after apply)

+ force_detach_policies = true

+ id = (known after apply)

+ managed_policy_arns = (known after apply)

+ max_session_duration = 3600

+ name = (known after apply)

+ name_prefix = "myeks-cluster-"

+ path = "/"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ unique_id = (known after apply)

+ inline_policy (known after apply)

}

# module.eks.aws_iam_role_policy_attachment.cluster_encryption[0] will be created

+ resource "aws_iam_role_policy_attachment" "cluster_encryption" {

+ id = (known after apply)

+ policy_arn = (known after apply)

+ role = (known after apply)

}

# module.eks.aws_iam_role_policy_attachment.this["AmazonEKSClusterPolicy"] will be created

+ resource "aws_iam_role_policy_attachment" "this" {

+ id = (known after apply)

+ policy_arn = "arn:aws:iam::aws:policy/AmazonEKSClusterPolicy"

+ role = (known after apply)

}

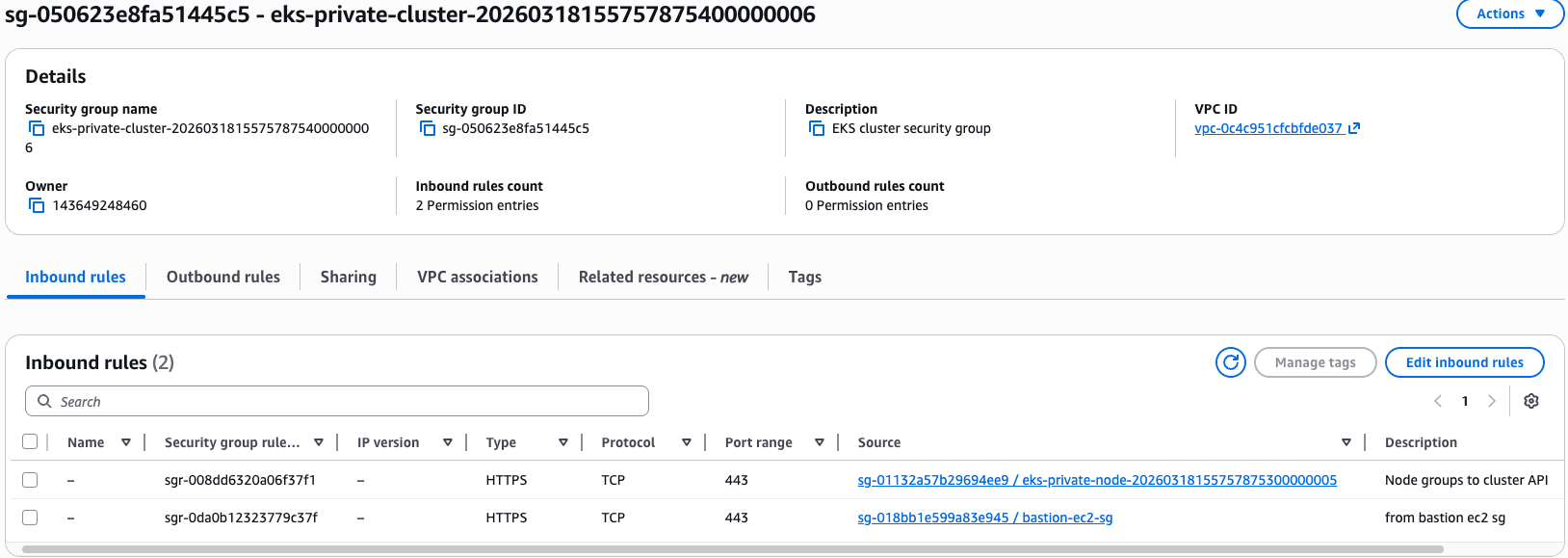

# module.eks.aws_security_group.cluster[0] will be created

+ resource "aws_security_group" "cluster" {

+ arn = (known after apply)

+ description = "EKS cluster security group"

+ egress = (known after apply)

+ id = (known after apply)

+ ingress = (known after apply)

+ name = (known after apply)

+ name_prefix = "myeks-cluster-"

+ owner_id = (known after apply)

+ region = "ap-northeast-2"

+ revoke_rules_on_delete = false

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-cluster"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-cluster"

+ "Terraform" = "true"

}

+ vpc_id = (known after apply)

}

# module.eks.aws_security_group.node[0] will be created

+ resource "aws_security_group" "node" {

+ arn = (known after apply)

+ description = "EKS node shared security group"

+ egress = (known after apply)

+ id = (known after apply)

+ ingress = (known after apply)

+ name = (known after apply)

+ name_prefix = "myeks-node-"

+ owner_id = (known after apply)

+ region = "ap-northeast-2"

+ revoke_rules_on_delete = false

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-node"

+ "Terraform" = "true"

+ "kubernetes.io/cluster/myeks" = "owned"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-node"

+ "Terraform" = "true"

+ "kubernetes.io/cluster/myeks" = "owned"

}

+ vpc_id = (known after apply)

}

# module.eks.aws_security_group_rule.cluster["ingress_nodes_443"] will be created

+ resource "aws_security_group_rule" "cluster" {

+ description = "Node groups to cluster API"

+ from_port = 443

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 443

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["egress_all"] will be created

+ resource "aws_security_group_rule" "node" {

+ cidr_blocks = [

+ "0.0.0.0/0",

]

+ description = "Allow all egress"

+ from_port = 0

+ id = (known after apply)

+ protocol = "-1"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 0

+ type = "egress"

}

# module.eks.aws_security_group_rule.node["ingress_cluster_10251_webhook"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Cluster API to node 10251/tcp webhook"

+ from_port = 10251

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 10251

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_cluster_443"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Cluster API to node groups"

+ from_port = 443

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 443

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_cluster_4443_webhook"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Cluster API to node 4443/tcp webhook"

+ from_port = 4443

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 4443

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_cluster_6443_webhook"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Cluster API to node 6443/tcp webhook"

+ from_port = 6443

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 6443

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_cluster_8443_webhook"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Cluster API to node 8443/tcp webhook"

+ from_port = 8443

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 8443

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_cluster_9443_webhook"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Cluster API to node 9443/tcp webhook"

+ from_port = 9443

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 9443

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_cluster_kubelet"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Cluster API to node kubelets"

+ from_port = 10250

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = false

+ source_security_group_id = (known after apply)

+ to_port = 10250

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_nodes_ephemeral"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Node to node ingress on ephemeral ports"

+ from_port = 1025

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = true

+ source_security_group_id = (known after apply)

+ to_port = 65535

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_self_coredns_tcp"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Node to node CoreDNS"

+ from_port = 53

+ id = (known after apply)

+ protocol = "tcp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = true

+ source_security_group_id = (known after apply)

+ to_port = 53

+ type = "ingress"

}

# module.eks.aws_security_group_rule.node["ingress_self_coredns_udp"] will be created

+ resource "aws_security_group_rule" "node" {

+ description = "Node to node CoreDNS UDP"

+ from_port = 53

+ id = (known after apply)

+ protocol = "udp"

+ region = "ap-northeast-2"

+ security_group_id = (known after apply)

+ security_group_rule_id = (known after apply)

+ self = true

+ source_security_group_id = (known after apply)

+ to_port = 53

+ type = "ingress"

}

# module.eks.time_sleep.this[0] will be created

+ resource "time_sleep" "this" {

+ create_duration = "30s"

+ id = (known after apply)

+ triggers = {

+ "certificate_authority_data" = (known after apply)

+ "endpoint" = (known after apply)

+ "kubernetes_version" = "1.34"

+ "name" = (known after apply)

+ "service_cidr" = (known after apply)

}

}

# module.vpc.aws_default_route_table.default[0] will be created

+ resource "aws_default_route_table" "default" {

+ arn = (known after apply)

+ default_route_table_id = (known after apply)

+ id = (known after apply)

+ owner_id = (known after apply)

+ region = "ap-northeast-2"

+ route = (known after apply)

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC-default"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC-default"

}

+ vpc_id = (known after apply)

+ timeouts {

+ create = "5m"

+ update = "5m"

}

}

# module.vpc.aws_default_security_group.this[0] will be created

+ resource "aws_default_security_group" "this" {

+ arn = (known after apply)

+ description = (known after apply)

+ egress = (known after apply)

+ id = (known after apply)

+ ingress = (known after apply)

+ name = (known after apply)

+ name_prefix = (known after apply)

+ owner_id = (known after apply)

+ region = "ap-northeast-2"

+ revoke_rules_on_delete = false

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC-default"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC-default"

}

+ vpc_id = (known after apply)

}

# module.vpc.aws_internet_gateway.this[0] will be created

+ resource "aws_internet_gateway" "this" {

+ arn = (known after apply)

+ id = (known after apply)

+ owner_id = (known after apply)

+ region = "ap-northeast-2"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-IGW"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-IGW"

}

+ vpc_id = (known after apply)

}

# module.vpc.aws_route.public_internet_gateway[0] will be created

+ resource "aws_route" "public_internet_gateway" {

+ destination_cidr_block = "0.0.0.0/0"

+ gateway_id = (known after apply)

+ id = (known after apply)

+ instance_id = (known after apply)

+ instance_owner_id = (known after apply)

+ network_interface_id = (known after apply)

+ origin = (known after apply)

+ region = "ap-northeast-2"

+ route_table_id = (known after apply)

+ state = (known after apply)

+ timeouts {

+ create = "5m"

}

}

# module.vpc.aws_route_table.public[0] will be created

+ resource "aws_route_table" "public" {

+ arn = (known after apply)

+ id = (known after apply)

+ owner_id = (known after apply)

+ propagating_vgws = (known after apply)

+ region = "ap-northeast-2"

+ route = (known after apply)

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC-public"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC-public"

}

+ vpc_id = (known after apply)

}

# module.vpc.aws_route_table_association.public[0] will be created

+ resource "aws_route_table_association" "public" {

+ id = (known after apply)

+ region = "ap-northeast-2"

+ route_table_id = (known after apply)

+ subnet_id = (known after apply)

}

# module.vpc.aws_route_table_association.public[1] will be created

+ resource "aws_route_table_association" "public" {

+ id = (known after apply)

+ region = "ap-northeast-2"

+ route_table_id = (known after apply)

+ subnet_id = (known after apply)

}

# module.vpc.aws_route_table_association.public[2] will be created

+ resource "aws_route_table_association" "public" {

+ id = (known after apply)

+ region = "ap-northeast-2"

+ route_table_id = (known after apply)

+ subnet_id = (known after apply)

}

# module.vpc.aws_subnet.public[0] will be created

+ resource "aws_subnet" "public" {

+ arn = (known after apply)

+ assign_ipv6_address_on_creation = false

+ availability_zone = "ap-northeast-2a"

+ availability_zone_id = (known after apply)

+ cidr_block = "192.168.1.0/24"

+ enable_dns64 = false

+ enable_resource_name_dns_a_record_on_launch = false

+ enable_resource_name_dns_aaaa_record_on_launch = false

+ id = (known after apply)

+ ipv6_cidr_block = (known after apply)

+ ipv6_cidr_block_association_id = (known after apply)

+ ipv6_native = false

+ map_public_ip_on_launch = true

+ owner_id = (known after apply)

+ private_dns_hostname_type_on_launch = (known after apply)

+ region = "ap-northeast-2"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-PublicSubnet"

+ "kubernetes.io/role/elb" = "1"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-PublicSubnet"

+ "kubernetes.io/role/elb" = "1"

}

+ vpc_id = (known after apply)

}

# module.vpc.aws_subnet.public[1] will be created

+ resource "aws_subnet" "public" {

+ arn = (known after apply)

+ assign_ipv6_address_on_creation = false

+ availability_zone = "ap-northeast-2b"

+ availability_zone_id = (known after apply)

+ cidr_block = "192.168.2.0/24"

+ enable_dns64 = false

+ enable_resource_name_dns_a_record_on_launch = false

+ enable_resource_name_dns_aaaa_record_on_launch = false

+ id = (known after apply)

+ ipv6_cidr_block = (known after apply)

+ ipv6_cidr_block_association_id = (known after apply)

+ ipv6_native = false

+ map_public_ip_on_launch = true

+ owner_id = (known after apply)

+ private_dns_hostname_type_on_launch = (known after apply)

+ region = "ap-northeast-2"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-PublicSubnet"

+ "kubernetes.io/role/elb" = "1"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-PublicSubnet"

+ "kubernetes.io/role/elb" = "1"

}

+ vpc_id = (known after apply)

}

# module.vpc.aws_subnet.public[2] will be created

+ resource "aws_subnet" "public" {

+ arn = (known after apply)

+ assign_ipv6_address_on_creation = false

+ availability_zone = "ap-northeast-2c"

+ availability_zone_id = (known after apply)

+ cidr_block = "192.168.3.0/24"

+ enable_dns64 = false

+ enable_resource_name_dns_a_record_on_launch = false

+ enable_resource_name_dns_aaaa_record_on_launch = false

+ id = (known after apply)

+ ipv6_cidr_block = (known after apply)

+ ipv6_cidr_block_association_id = (known after apply)

+ ipv6_native = false

+ map_public_ip_on_launch = true

+ owner_id = (known after apply)

+ private_dns_hostname_type_on_launch = (known after apply)

+ region = "ap-northeast-2"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-PublicSubnet"

+ "kubernetes.io/role/elb" = "1"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-PublicSubnet"

+ "kubernetes.io/role/elb" = "1"

}

+ vpc_id = (known after apply)

}

# module.vpc.aws_vpc.this[0] will be created

+ resource "aws_vpc" "this" {

+ arn = (known after apply)

+ cidr_block = "192.168.0.0/16"

+ default_network_acl_id = (known after apply)

+ default_route_table_id = (known after apply)

+ default_security_group_id = (known after apply)

+ dhcp_options_id = (known after apply)

+ enable_dns_hostnames = true

+ enable_dns_support = true

+ enable_network_address_usage_metrics = (known after apply)

+ id = (known after apply)

+ instance_tenancy = "default"

+ ipv6_association_id = (known after apply)

+ ipv6_cidr_block = (known after apply)

+ ipv6_cidr_block_network_border_group = (known after apply)

+ main_route_table_id = (known after apply)

+ owner_id = (known after apply)

+ region = "ap-northeast-2"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-VPC"

}

}

# module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0] will be created

+ resource "aws_eks_node_group" "this" {

+ ami_type = "AL2023_x86_64_STANDARD"

+ arn = (known after apply)

+ capacity_type = "ON_DEMAND"

+ cluster_name = (known after apply)

+ disk_size = (known after apply)

+ id = (known after apply)

+ instance_types = [

+ "t3.medium",

]

+ node_group_name = "myeks-node-group"

+ node_group_name_prefix = (known after apply)

+ node_role_arn = (known after apply)

+ region = "ap-northeast-2"

+ release_version = "1.34.4-20260311"

+ resources = (known after apply)

+ status = (known after apply)

+ subnet_ids = (known after apply)

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-node-group"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-node-group"

+ "Terraform" = "true"

}

+ version = "1.34"

+ launch_template {

+ id = (known after apply)

+ name = (known after apply)

+ version = (known after apply)

}

+ node_repair_config (known after apply)

+ scaling_config {

+ desired_size = 2

+ max_size = 4

+ min_size = 1

}

+ update_config {

+ max_unavailable_percentage = 33

}

}

# module.eks.module.eks_managed_node_group["default"].aws_iam_role.this[0] will be created

+ resource "aws_iam_role" "this" {

+ arn = (known after apply)

+ assume_role_policy = jsonencode(

{

+ Statement = [

+ {

+ Action = "sts:AssumeRole"

+ Effect = "Allow"

+ Principal = {

+ Service = "ec2.amazonaws.com"

}

+ Sid = "EKSNodeAssumeRole"

},

]

+ Version = "2012-10-17"

}

)

+ create_date = (known after apply)

+ description = "EKS managed node group IAM role"

+ force_detach_policies = true

+ id = (known after apply)

+ managed_policy_arns = (known after apply)

+ max_session_duration = 3600

+ name = (known after apply)

+ name_prefix = "myeks-node-group-eks-node-group-"

+ path = "/"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ unique_id = (known after apply)

+ inline_policy (known after apply)

}

# module.eks.module.eks_managed_node_group["default"].aws_iam_role_policy_attachment.this["AmazonEC2ContainerRegistryReadOnly"] will be created

+ resource "aws_iam_role_policy_attachment" "this" {

+ id = (known after apply)

+ policy_arn = "arn:aws:iam::aws:policy/AmazonEC2ContainerRegistryReadOnly"

+ role = (known after apply)

}

# module.eks.module.eks_managed_node_group["default"].aws_iam_role_policy_attachment.this["AmazonEKSWorkerNodePolicy"] will be created

+ resource "aws_iam_role_policy_attachment" "this" {

+ id = (known after apply)

+ policy_arn = "arn:aws:iam::aws:policy/AmazonEKSWorkerNodePolicy"

+ role = (known after apply)

}

# module.eks.module.eks_managed_node_group["default"].aws_iam_role_policy_attachment.this["AmazonEKS_CNI_Policy"] will be created

+ resource "aws_iam_role_policy_attachment" "this" {

+ id = (known after apply)

+ policy_arn = "arn:aws:iam::aws:policy/AmazonEKS_CNI_Policy"

+ role = (known after apply)

}

# module.eks.module.eks_managed_node_group["default"].aws_launch_template.this[0] will be created

+ resource "aws_launch_template" "this" {

+ arn = (known after apply)

+ default_version = (known after apply)

+ description = "Custom launch template for myeks-node-group EKS managed node group"

+ id = (known after apply)

+ key_name = "voieul-key"

+ latest_version = (known after apply)

+ name = (known after apply)

+ name_prefix = "default-"

+ region = "ap-northeast-2"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ update_default_version = true

+ user_data = "Q29udGVudC1UeXBlOiBtdWx0aXBhcnQvbWl4ZWQ7IGJvdW5kYXJ5PSJNSU1FQk9VTkRBUlkiCk1JTUUtVmVyc2lvbjogMS4wDQoNCi0tTUlNRUJPVU5EQVJZDQpDb250ZW50LVRyYW5zZmVyLUVuY29kaW5nOiA3Yml0DQpDb250ZW50LVR5cGU6IHRleHQveC1zaGVsbHNjcmlwdA0KTWltZS1WZXJzaW9uOiAxLjANCg0KIyEvYmluL2Jhc2gKZWNobyAiU3RhcnRpbmcgY3VzdG9tIGluaXRpYWxpemF0aW9uLi4uIgpkbmYgdXBkYXRlIC15CmRuZiBpbnN0YWxsIC15IHRyZWUgYmluZC11dGlscwplY2hvICJDdXN0b20gaW5pdGlhbGl6YXRpb24gY29tcGxldGVkLiIKDQotLU1JTUVCT1VOREFSWS0tDQo="

+ vpc_security_group_ids = (known after apply)

# (1 unchanged attribute hidden)

+ metadata_options {

+ http_endpoint = "enabled"

+ http_protocol_ipv6 = (known after apply)

+ http_put_response_hop_limit = 1

+ http_tokens = "required"

+ instance_metadata_tags = (known after apply)

}

+ tag_specifications {

+ resource_type = "instance"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-node-group"

+ "Terraform" = "true"

}

}

+ tag_specifications {

+ resource_type = "network-interface"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-node-group"

+ "Terraform" = "true"

}

}

+ tag_specifications {

+ resource_type = "volume"

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Name" = "myeks-node-group"

+ "Terraform" = "true"

}

}

}

# module.eks.module.kms.data.aws_iam_policy_document.this[0] will be read during apply

# (config refers to values not yet known)

<= data "aws_iam_policy_document" "this" {

+ id = (known after apply)

+ json = (known after apply)

+ minified_json = (known after apply)

+ override_policy_documents = []

+ source_policy_documents = []

+ statement {

+ actions = [

+ "kms:*",

]

+ resources = [

+ "*",

]

+ sid = "Default"

+ principals {

+ identifiers = [

+ "arn:aws:iam::143649248460:root",

]

+ type = "AWS"

}

}

+ statement {

+ actions = [

+ "kms:CancelKeyDeletion",

+ "kms:Create*",

+ "kms:Delete*",

+ "kms:Describe*",

+ "kms:Disable*",

+ "kms:Enable*",

+ "kms:Get*",

+ "kms:ImportKeyMaterial",

+ "kms:List*",

+ "kms:Put*",

+ "kms:ReplicateKey",

+ "kms:Revoke*",

+ "kms:ScheduleKeyDeletion",

+ "kms:TagResource",

+ "kms:UntagResource",

+ "kms:Update*",

]

+ resources = [

+ "*",

]

+ sid = "KeyAdministration"

+ principals {

+ identifiers = [

+ "arn:aws:iam::143649248460:user/voieul-user",

]

+ type = "AWS"

}

}

+ statement {

+ actions = [

+ "kms:Decrypt",

+ "kms:DescribeKey",

+ "kms:Encrypt",

+ "kms:GenerateDataKey*",

+ "kms:ReEncrypt*",

]

+ resources = [

+ "*",

]

+ sid = "KeyUsage"

+ principals {

+ identifiers = [

+ (known after apply),

]

+ type = "AWS"

}

}

}

# module.eks.module.kms.aws_kms_alias.this["cluster"] will be created

+ resource "aws_kms_alias" "this" {

+ arn = (known after apply)

+ id = (known after apply)

+ name = "alias/eks/myeks"

+ name_prefix = (known after apply)

+ region = "ap-northeast-2"

+ target_key_arn = (known after apply)

+ target_key_id = (known after apply)

}

# module.eks.module.kms.aws_kms_key.this[0] will be created

+ resource "aws_kms_key" "this" {

+ arn = (known after apply)

+ bypass_policy_lockout_safety_check = false

+ customer_master_key_spec = "SYMMETRIC_DEFAULT"

+ description = "myeks cluster encryption key"

+ enable_key_rotation = true

+ id = (known after apply)

+ is_enabled = true

+ key_id = (known after apply)

+ key_usage = "ENCRYPT_DECRYPT"

+ multi_region = false

+ policy = (known after apply)

+ region = "ap-northeast-2"

+ rotation_period_in_days = (known after apply)

+ tags = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

+ tags_all = {

+ "Environment" = "cloudneta-lab"

+ "Terraform" = "true"

}

}

# module.eks.module.eks_managed_node_group["default"].module.user_data.null_resource.validate_cluster_service_cidr will be created

+ resource "null_resource" "validate_cluster_service_cidr" {

+ id = (known after apply)

}

Plan: 52 to add, 0 to change, 0 to destroy.

────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────

Note: You didn't use the -out option to save this plan, so Terraform can't guarantee to take exactly these actions if you run "terraform apply" now.
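
The `user_data` attribute of the launch template in the plan above is shown base64-encoded, so the script the nodes run at boot is not readable at a glance. A minimal sketch of decoding such a value for review follows. The function name `decode_user_data` and the sample string (the base64 of `#!/bin/bash`, not the full plan value) are illustrative assumptions.

```shell
# Decode a base64-encoded user_data value so the embedded script can be reviewed.
# decode_user_data is a hypothetical helper, not part of Terraform or the AWS CLI.
decode_user_data() {
  printf '%s' "$1" | base64 --decode
}

# Sample input: base64 of "#!/bin/bash" (illustrative; paste the real plan value instead).
sample='IyEvYmluL2Jhc2g='
decode_user_data "$sample"
echo
```

Passing the full `user_data` string from the plan to the same function should print the MIME multipart document that wraps the custom shell script.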

v:Documents:s-aews:aews:1w $ nohup sh -c "terraform apply -auto-approve" > create.log 2>&1 &

[1] 81707

(earlier output omitted)

module.eks.time_sleep.this[0]: Still creating... [00m20s elapsed]

module.eks.aws_eks_addon.before_compute["vpc-cni"]: Still creating... [00m20s elapsed]

module.eks.time_sleep.this[0]: Still creating... [00m30s elapsed]

module.eks.time_sleep.this[0]: Creation complete after 30s [id=2026-03-18T12:37:02Z]

module.eks.module.eks_managed_node_group["default"].module.user_data.null_resource.validate_cluster_service_cidr: Creating...

module.eks.module.eks_managed_node_group["default"].module.user_data.null_resource.validate_cluster_service_cidr: Creation complete after 0s [id=5648872249672722725]

module.eks.module.eks_managed_node_group["default"].aws_launch_template.this[0]: Creating...

module.eks.aws_eks_addon.before_compute["vpc-cni"]: Still creating... [00m30s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_launch_template.this[0]: Creation complete after 6s [id=lt-0c9986db3ebbdd005]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Creating...

module.eks.aws_eks_addon.before_compute["vpc-cni"]: Still creating... [00m40s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [00m10s elapsed]

module.eks.aws_eks_addon.before_compute["vpc-cni"]: Still creating... [00m50s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [00m20s elapsed]

module.eks.aws_eks_addon.before_compute["vpc-cni"]: Still creating... [01m00s elapsed]

module.eks.aws_eks_addon.before_compute["vpc-cni"]: Creation complete after 1m5s [id=myeks:vpc-cni]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [00m30s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [00m40s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [00m50s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [01m00s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [01m10s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [01m20s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [01m30s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Still creating... [01m40s elapsed]

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Creation complete after 1m48s [id=myeks:myeks-node-group]

module.eks.aws_eks_addon.this["coredns"]: Creating...

module.eks.aws_eks_addon.this["kube-proxy"]: Creating...

module.eks.aws_eks_addon.this["coredns"]: Still creating... [00m10s elapsed]

module.eks.aws_eks_addon.this["kube-proxy"]: Still creating... [00m10s elapsed]

module.eks.aws_eks_addon.this["coredns"]: Creation complete after 14s [id=myeks:coredns]

module.eks.aws_eks_addon.this["kube-proxy"]: Still creating... [00m20s elapsed]

module.eks.aws_eks_addon.this["kube-proxy"]: Creation complete after 24s [id=myeks:kube-proxy]

Apply complete! Resources: 52 added, 0 changed, 0 destroyed.

[1] + 81707 done
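
Because the apply runs in the background with its output redirected to a log file, success has to be confirmed from the job's exit status and the log contents. The sketch below shows that pattern with a stand-in task. `long_task` and the temporary log file are assumptions for illustration; in the lab the real command is the `terraform apply` shown above, writing to `create.log`.

```shell
# Stand-in for the long-running command (hypothetical; replace with the real apply).
long_task() {
  sleep 1
  echo "Apply complete! Resources: 52 added, 0 changed, 0 destroyed."
}

log_file=$(mktemp)
long_task > "$log_file" 2>&1 &
job_pid=$!

# Block until the background job exits and capture its exit status.
wait "$job_pid"
job_status=$?

# Treat the run as successful only if the job exited 0 and the log has the summary line.
if [ "$job_status" -eq 0 ] && grep -q '^Apply complete!' "$log_file"; then
  result="succeeded"
else
  result="failed"
fi
echo "background job $result (exit status $job_status)"
rm -f "$log_file"
```

Checking both the exit status and the summary line guards against a log that merely stopped growing.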

v:Documents:s-aews:aews:1w $ tail -f create.log

module.eks.module.eks_managed_node_group["default"].aws_eks_node_group.this[0]: Creation complete after 1m48s [id=myeks:myeks-node-group]

module.eks.aws_eks_addon.this["coredns"]: Creating...

module.eks.aws_eks_addon.this["kube-proxy"]: Creating...

module.eks.aws_eks_addon.this["coredns"]: Still creating... [00m10s elapsed]

module.eks.aws_eks_addon.this["kube-proxy"]: Still creating... [00m10s elapsed]

module.eks.aws_eks_addon.this["coredns"]: Creation complete after 14s [id=myeks:coredns]

module.eks.aws_eks_addon.this["kube-proxy"]: Still creating... [00m20s elapsed]

module.eks.aws_eks_addon.this["kube-proxy"]: Creation complete after 24s [id=myeks:kube-proxy]

Apply complete! Resources: 52 added, 0 changed, 0 destroyed.

# Set up credentials (update kubeconfig)

v:Documents:s-aews:aews:1w $ aws eks update-kubeconfig --region ap-northeast-2 --name myeks

Added new context arn:aws:eks:ap-northeast-2:123123123123:cluster/myeks to /Users/test-user/.kube/config

# Check the k8s config and rename the context

v:Documents:s-aews:aews:1w $ cat ~/.kube/config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURCVENDQWUyZ0F3SUJBZ0lJV1F2NXVIS2d2aEF3RFFZSktvWklodmNOQVFFTEJRQXdGVEVUTUJFR0ExVUUKQXhNS2EzVmlaWEp1WlhSbGN6QWVGdzB5TlRFd01UZ3hPRE15TVRoYUZ3MHpOVEV3TVRZeE9ETTNNVGhhTUJVeApFekFSQmdOVkJBTVRDbXQxWW1WeWJtVjBaWE13Z2dFaU1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQkR3QXdnZ0VLCkFvSUJBUURRYWttb1NWSkZPeS9acFlCZFlZbDYvWnUxNHJjcVFwN2huSWVITlFRWmJwcTh4b2FTMkl6TmRuSEwKeUtpMmJPNTZJNGFzVk10RWpEQkZyK3h2M0VwOWYrTVRFNmJkUTRaMmZvbG1uWXpGT1lkZ2pMN2Y2cXM0bVBCYwp5ejVFZXpzQ1AvSlJHZCtINzg3TnpCekk4ZjYrV09wODl2QVVVYmQ2aTNJSDNtTncyekgwSkFFWjU1c3B2OEtlCjJ6Q05ocmU3Y1dEQlYrUS9jZCs2RVBtK1hieU1aRStPenF4ZlVxR1FxRmJ5dmNHK3VHbkpuaGdlVThHYXlRQXoKcG43WXNIditnWVFPQkZYajQxeEM2U0Rhc2xsS25EWGQrVUpBd3VqdnZZSFR3ZEdRYVlBVWhLV1pCTWxmM2NFNAphZUl5TUZ6dEJ4Zkh5dVRKQXRVN2tJU2xmbjM1QWdNQkFBR2pXVEJYTUE0R0ExVWREd0VCL3dRRUF3SUNwREFQCkJnTlZIUk1CQWY4RUJUQURBUUgvTUIwR0ExVWREZ1FXQkJSL2twbDFoSzB0bjQ4KzhIRjJPaFEwcFRiLzB6QVYKQmdOVkhSRUVEakFNZ2dwcmRXSmxjbTVsZEdWek1BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQ2p0YXJ2Y1NnUwpaeWtxMVZraUVCU0E4dTZOM1ZPazNmN1F0dTJrVG5tS1dGaGY3M0NWR0lxNXkxOWJoVzBNeUIxVHEzcE5ySVY1Cm0vRS96alp5RnRacEdNbHJDRCt0YjZvN1gxcnBLeitGVkR5TTEvR3plelI0Z2FVZ0ltbld4R2tlaStheEFkcFcKTmFjdGRTcWxGZExxcEpSQjJQRFM3MW5HUUF1cGlZMXpDVnRxM0FTdHBsWHk3TCtRd2NuNk83WUFQU1VvT2R0YQpJTWkyY0RKQi9VS09idEUwaldQczlLUG85cVY0WjVudWpwZ1RpbUttdDdSQUhRQUxvcHA3aGRDdG1na3liQVhqCkJWaFdJdVp4cmtrZUZnbEMvUXZaWmRidUZjSWZVYlZJSnVHcGo2Vmx6cTlMQ201UmpBYXMzM2NqUFV3emlrNWkKbjdlczBSdUdBWk9HCi0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

server: https://127.0.0.1:57280

name: kind-myk8s

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURCVENDQWUyZ0F3SUJBZ0lJQU5uL3ovTUFlaWd3RFFZSktvWklodmNOQVFFTEJRQXdGVEVUTUJFR0ExVUUKQXhNS2EzVmlaWEp1WlhSbGN6QWVGdzB5TmpBek1UZ3hNakk1TWpoYUZ3MHpOakF6TVRVeE1qTTBNamhhTUJVeApFekFSQmdOVkJBTVRDbXQxWW1WeWJtVjBaWE13Z2dFaU1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQkR3QXdnZ0VLCkFvSUJBUUMwUTYyK1ZudnV6aXdDTmx2SlIrRTNFUTY5OXpRNVB6V3JHb0dXNVN1L2VPczlsTklmYjVSVmU4M3EKRXNUWVpCUmY3YXluVFdVOHRVVGYzRFZWNzA5VTJPTW1mRWIzU0lCakhUb1lVS2xCN1RWaWVPY0NrdkVKRmRCUgpuTnBqYnpPVW5HMFNRcHFjMG5XSTMzcmxuTXhGM2xWMXRpV3FKSFovRlFkZE8zNmFoSldMQkRIbWUvL2tyTHZQClN1Z1NaeWVwWVQ3am9PRzhBWEVSeTFnMXBJdFVFSzkvd09QTk13R3J1S3hoU1RWUUorVFRyWTJpS2poVFllT24KTmJ0UGlnK0tHVjEvT2pzL0pWcFpoNHRxaCtObmVjd3ZjYmQ5U1lwbVhvVmJGSEVCb1FrbkMwOWxVOHJYYkM3Ngo4M1dyaFIxU2Rzdmpaai9HMWJjdy9qMXlwK2xMQWdNQkFBR2pXVEJYTUE0R0ExVWREd0VCL3dRRUF3SUNwREFQCkJnTlZIUk1CQWY4RUJUQURBUUgvTUIwR0ExVWREZ1FXQkJRS1hLY0h6Ym16cXIwR1dGVTk4Q1NVdU9uTHJ6QVYKQmdOVkhSRUVEakFNZ2dwcmRXSmxjbTVsZEdWek1BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQ2pocUpjMUdPcQp3U0NVNWF1OWJlVXliRG5ZWXZQa0xLNDJDd2lWY3p0TzhRZ1Z6amptUk5OSjQxYWJrbnUrV0l4blJ0Tkp3amljCkNrN3ZFN0dEeTJGU0pPLzRjdXBvYkhDcUlPRXlNdHZtVFRoWEFvVDJST2JNaG1DTGtBY2p1YUMxS2FuV1BrMkkKN1ZVQzcvY3pDanVXb1oyWWpSVTVlSG5tUk5oUk9VckZSMlRhamt4SEFxZ2N6VVMybEpoOTZmc1RhR09pdjE3WQpnRis2aTZqdkNlSnhUV0xyOHY2NUpMcFFmcHBWVzloYVhQTVNuOUlsUXc2NmUrOUdRdlRvalhkTTVEUFl3dm51CmxHTGJtWm5UOFo2c0h3UzZjb3NIaU1LSUV4WGk0RXZPZG13QUNIQTZLTE5Ba3BzdWg4b0R0dXBVUnFjdE9lK2wKSjRrWElpQjR5ekg1Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

server: https://CC5D719ACF5FB0EC4C92959793A4488F.yl4.ap-northeast-2.eks.amazonaws.com

name: arn:aws:eks:ap-northeast-2:123123123:cluster/myeks

contexts:

- context:

cluster: kind-myk8s

namespace: default

user: kind-myk8s

name: kind-myk8s

- context:

cluster: arn:aws:eks:ap-northeast-2:123123123:cluster/myeks

user: arn:aws:eks:ap-northeast-2:123123123:cluster/myeks

name: arn:aws:eks:ap-northeast-2:123123123:cluster/myeks

current-context: arn:aws:eks:ap-northeast-2:123123123:cluster/myeks

kind: Config

users:

- name: kind-myk8s

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURLVENDQWhHZ0F3SUJBZ0lJVjh5ZmJvWkxCYnN3RFFZSktvWklodmNOQVFFTEJRQXdGVEVUTUJFR0ExVUUKQXhNS2EzVmlaWEp1WlhSbGN6QWVGdzB5TlRFd01UZ3hPRE15TVRoYUZ3MHlOakV3TVRneE9ETTNNVGhhTUR3eApIekFkQmdOVkJBb1RGbXQxWW1WaFpHMDZZMngxYzNSbGNpMWhaRzFwYm5NeEdUQVhCZ05WQkFNVEVHdDFZbVZ5CmJtVjBaWE10WVdSdGFXNHdnZ0VpTUEwR0NTcUdTSWIzRFFFQkFRVUFBNElCRHdBd2dnRUtBb0lCQVFDc2NqRFEKUnI5UENxY1BpMnRxMGhIRHlYZ2hIRDdXOXh0R2tteHFuai92cVk2cGFqRlEraSs1TXpaUDRJbERxVXVSZVIwZgpSZ3Q5cTJENyszcVJYM3p2VlMyRldpK1VEK2VJQnN3Y3J3U0pycFJSUzZGYkQwNmxuVzBzNmtnQlZLMXNZbjdsCjdkYmZDM0Zhd3VzMmtwQTFCeTJUQ3dOeHlkaDM2Y0FLMmlmNzVlV0ZmbTErMjNsckE4NzZPZCtIcHkrRlEwSEYKcWlYMC9LWXluWXduQi9zUE9tTzVKdHRxcFNzY0V2SjZQRUQ3b1FFUS81eEFMaVY3QWMxbUZ1ZUVBckJyQS9qQgpFd2hEY2V3V1pJTmZxSkc0TVUyZUQxWS8zWHpEZitvbTRSNmlJTUpFdW8wUFlienlMcWJuMldTeDV0NDFoQ2dHCnhVVGxtMi9jaC9ESnAvdmZBZ01CQUFHalZqQlVNQTRHQTFVZER3RUIvd1FFQXdJRm9EQVRCZ05WSFNVRUREQUsKQmdnckJnRUZCUWNEQWpBTUJnTlZIUk1CQWY4RUFqQUFNQjhHQTFVZEl3UVlNQmFBRkgrU21YV0VyUzJmano3dwpjWFk2RkRTbE52L1RNQTBHQ1NxR1NJYjNEUUVCQ3dVQUE0SUJBUUJqT3RPUWxHU2FnZnV6NkErSDloZkM0NEtYClIzbXZzTkhSeHB0NW4rOHFqU1lWSlBJUTAvUU5CTElZK3NBN3hXLzc4STh2MmZudFRiZmhVNjA4aXRTZjZnblgKSkpiSFJ1TCtCeXY0ZnVjSlFEQ2gyc0dtbEZGM29UaDZkVTJkWmdXVXhvM2pHaDlmbmlHMjlaWDY5RDQyNXMrcQpTajRiNFhxa3FxaklETVkveFhqamorRW10bkQ3WXFOSjBjZnNnelg3eTBwbzJBQXZsUGF4eGJySkJkNEMzME1SClhvQ1hkSXhra3AzaERTU3VGb3hpS0lkejMrZ1c1UkZ5UzF5ME0zdnh4TkRJMWZSREFsVTZJNkt4bHo0Z21vZDYKWWU5NXBibk1wTmgwclRRY3piN2VDL0YzeHAvUWN5ejhDaWRyKzZCTUg3TytnV3dpUnFIV1pCZ1ZxR2I2Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcEFJQkFBS0NBUUVBckhJdzBFYS9Ud3FuRDR0cmF0SVJ3OGw0SVJ3KzF2Y2JScEpzYXA0Lzc2bU9xV294ClVQb3Z1VE0yVCtDSlE2bExrWGtkSDBZTGZhdGcrL3Q2a1Y5ODcxVXRoVm92bEEvbmlBYk1ISzhFaWE2VVVVdWgKV3c5T3BaMXRMT3BJQVZTdGJHSis1ZTNXM3d0eFdzTHJOcEtRTlFjdGt3c0RjY25ZZCtuQUN0b24rK1hsaFg1dApmdHQ1YXdQTytqbmZoNmN2aFVOQnhhb2w5UHltTXAyTUp3ZjdEenBqdVNiYmFxVXJIQkx5ZWp4QSs2RUJFUCtjClFDNGxld0hOWmhibmhBS3dhd1A0d1JNSVEzSHNGbVNEWDZpUnVERk5uZzlXUDkxOHczL3FKdUVlb2lEQ1JMcU4KRDJHODhpNm01OWxrc2ViZU5ZUW9Cc1ZFNVp0djNJZnd5YWY3M3dJREFRQUJBb0lCQVFDQjFITVZ5NzNxeDIxaAprYWpzd24ybmR2NWZoMEYwWEpTSGZHUHRuWGtyZWUremN3VHdIM3hncGNMbFBucDVtM01PY2kzUHhzK043TUpXCjFFM0NOeTc3alpoNUJwNDlqZi9WOUxBbGhFc1pVWHZPL083ZGZOZk1ib3FzdnpJNDlrU2ZEa1RWM1V2aG4xN1gKWTFydE9ra2g4MmFIaDBvdm1EVEdpeEVQMnBFeDN2WC9BQytEaDV4VkY5SmpuTkJTZXY0SWNhdHhBUVdHNVY1dQpmdzhBNEZIRUZQUGVZKy9FVnlzRVR5T1FnTDJLa2hybjg3Z2lZRzBJMjkyY0VuYWlBY1RjMVF2WS81bHJMaEtBCndzQ3ZpTSt6WWxpQ0FGdzNoQ3lLdmZIZGYxTVgyM0g3MUJ1enJXNS9ZSERlUUtEc3RJNVFFdEVqR0Vna29mb2EKVUNiMmpnZlpBb0dCQU4rK3ROUTR0TmljQXprTEE4bEZHa3kxUDZBaFNjOFpoZ2ZqbTFMeXNaaDRubGZRQ0dhWQpNcjRYazNaMmR4SHhSWlBsRUkvWFFaOWxyQlU3OHpIS1dHOWtjSjI4NEtQZGRzMTlHS0FXRTJVaW1XdW1BNnpTCjFRc2lHUE5DWVVkZTZ6ZlVRcUJSUkVtN3Y1cVJ4TjFSMnRFWksrWDluQTE3d0J4MVVGR0lmdHhOQW9HQkFNVk8KVFVyem9RY3I5NGplS1VSTTFsY25reXNxeE1OTGZ5UW1HeXFtdi84YXE4SFlQS3JmaWFCZXBUUml3ZkRYSzU1RAppRkZyaCtDYjJCeUV4UkNlTzJPcVY1cERObTZJVEs2N294T0lZQWlVeGZZV2NlblFlMXh1WWQ4WXF1YkJEUEtjCk9nK1piQTd1dkYva1BVTUszZTBvU3YxN2JZS0pXdE9VcCtNSzBwN2JBb0dCQUp6czk0MEUvS29UdWhydkE4Zk4KWktXNlZaYXM0a1NUcFRLeFMwWkJHNWhSdU5Uai9wQmVYUENBUHBmT2ZMS2o0dVhZdWVYNDFuakNhWkEzRE5tMgpEcEtLQW9aUGE4cmlVQ25OZkZFRFNyVWJNRG1WSld5NExsM3htMGc2SFZwZVUyRkR5VHNCNUlCR1l4czQ4N2M2CmF0dE82VUFVd0xlZ1BOeDQxMDFvQzNuZEFvR0FiVWFkeGxwQ29CODR2SVFXcE81TmMvM0dJNDFQWnI2RWp6ZlAKcWdLcXFaWlM5RXhYNVdkaTZRQWlUVzQ0N2JPdVE3d3hYcTdJbFp5YXg4aTlBQ1F5emxORXEzcDRSaVdWR3QxdgpSMTBybXZVUzR1V3hkNGJ4RzlOQ3YzWUJDVVo0YmxJYVVoTnQ1cU5RajJkd2lwWVZMY2s0SjBYWjlBY3cxNmdvCmg3V3h5eXNDZ1lCRkdYZXRzMGorSkRKVWZ
WRGlXeW1VOEp4OWZudk5rMTUzQW5ibm1RYkdvNUNrVTE2T2xWaEgKNm9sc29aM3M3bmZqajFVdkhwZWlFeWZzY0tVaUNSL3pkVUM0UWRQME00VW5maUdxU0JKQThtNUl6d1NZZk9XcgpZcVFVK1ROYU1FRXEzL1YraUlnb3htMlI3b2VYZGtCU3lBS3Nyb0VFRlZ4M1IvZTRDa3cxL3c9PQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

- name: arn:aws:eks:ap-northeast-2:123123123:cluster/myeks

user:

exec:

apiVersion: client.authentication.k8s.io/v1beta1

args:

- --region

- ap-northeast-2

- eks

- get-token

- --cluster-name

- myeks

- --output

- json

v:Documents:s-aews:aews:1w $ cat ~/.kube/config | grep current-context | awk '{print $2}'

arn:aws:eks:ap-northeast-2:123123123:cluster/myeks

v:Documents:s-aews:aews:1w $ kubectl config rename-context $(cat ~/.kube/config | grep current-context | awk '{print $2}') myeks

Context "arn:aws:eks:ap-northeast-2:123123123:cluster/myeks" renamed to "myeks".

v:Documents:s-aews:aews:1w $ cat ~/.kube/config | grep current-context

current-context: myeks
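
The `cat | grep | awk` pipeline above can be collapsed into a single `awk` call that matches the key exactly. A small sketch follows, run against a throwaway sample file rather than the real `~/.kube/config`; the file contents are an illustrative assumption. `kubectl config current-context` should print the same value directly.

```shell
# Build a small sample kubeconfig (illustrative contents only).
kubeconfig_sample=$(mktemp)
cat > "$kubeconfig_sample" <<'EOF'
apiVersion: v1
current-context: arn:aws:eks:ap-northeast-2:123123123:cluster/myeks
kind: Config
EOF

# Match the key as the first field so the same words elsewhere in the file are ignored.
current_context=$(awk '$1 == "current-context:" {print $2}' "$kubeconfig_sample")
echo "$current_context"
rm -f "$kubeconfig_sample"
```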

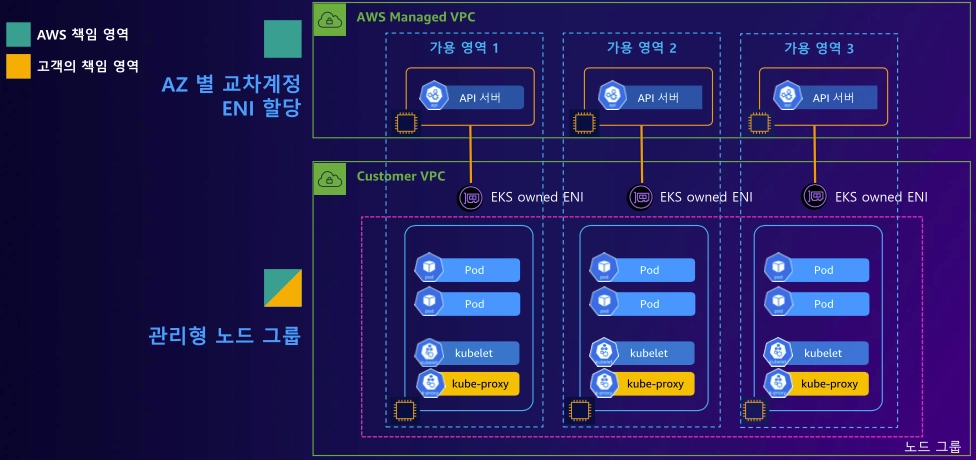

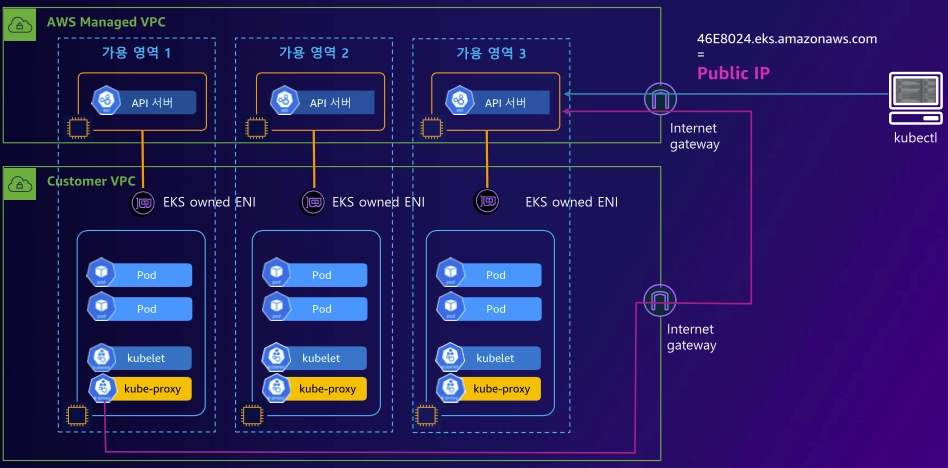

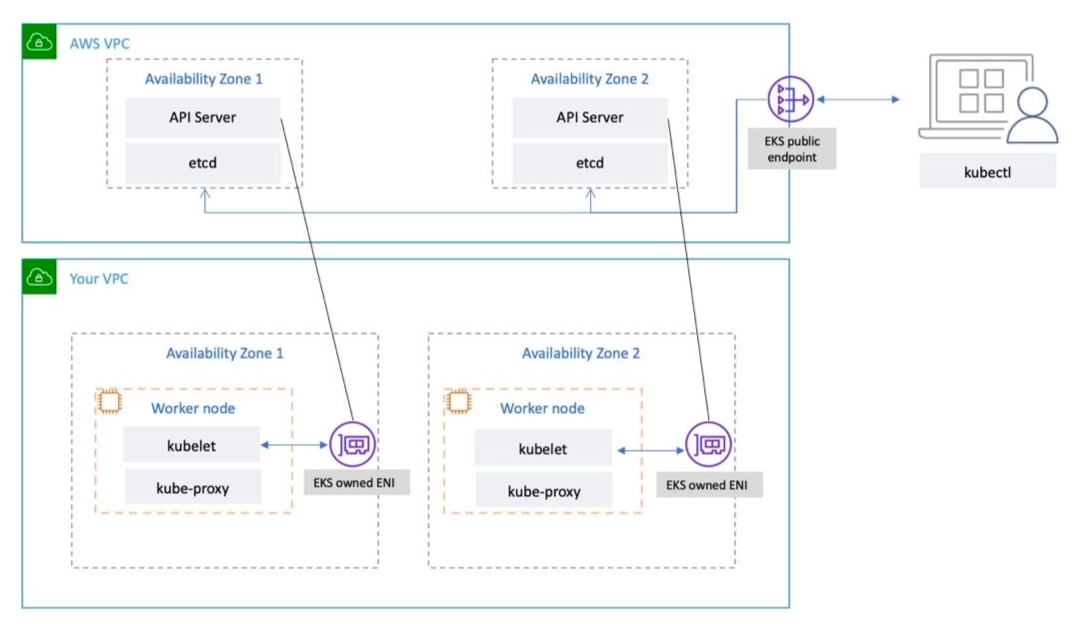

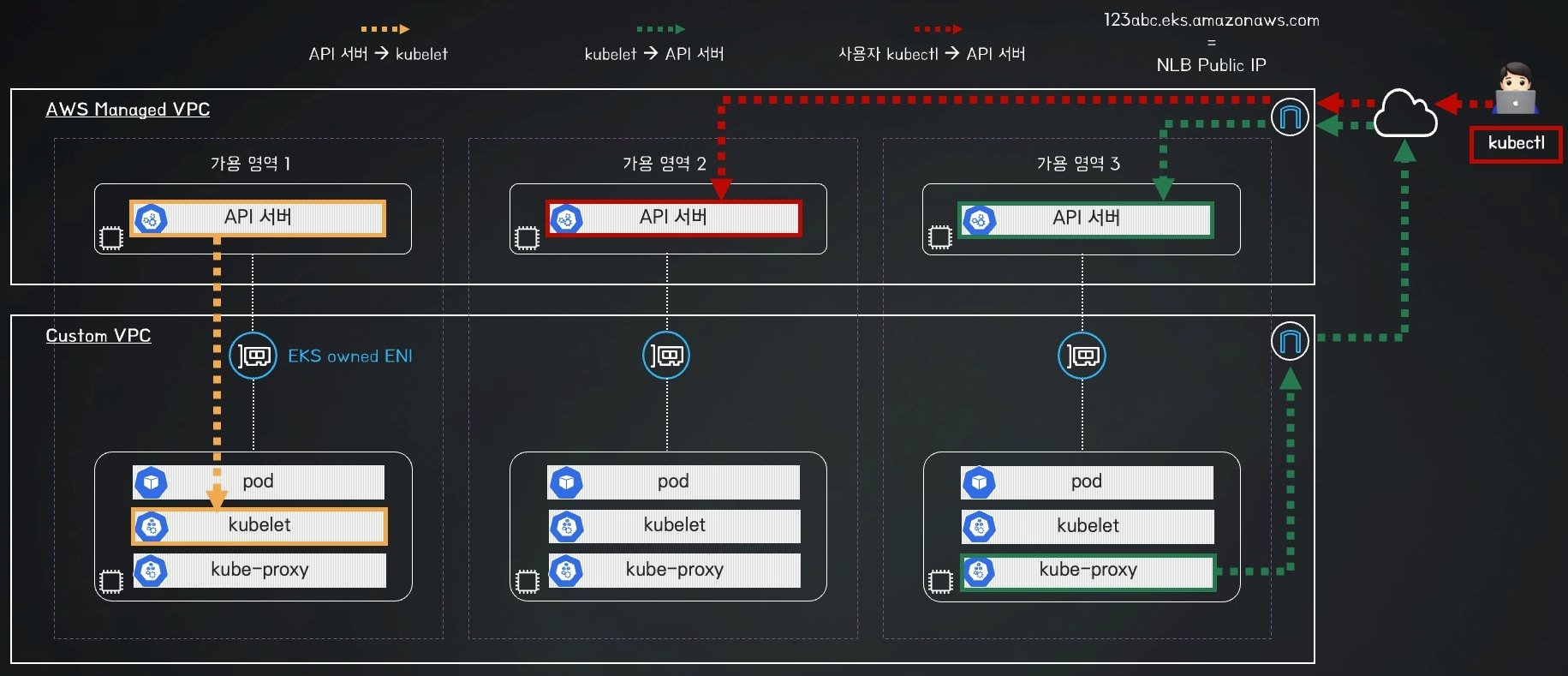

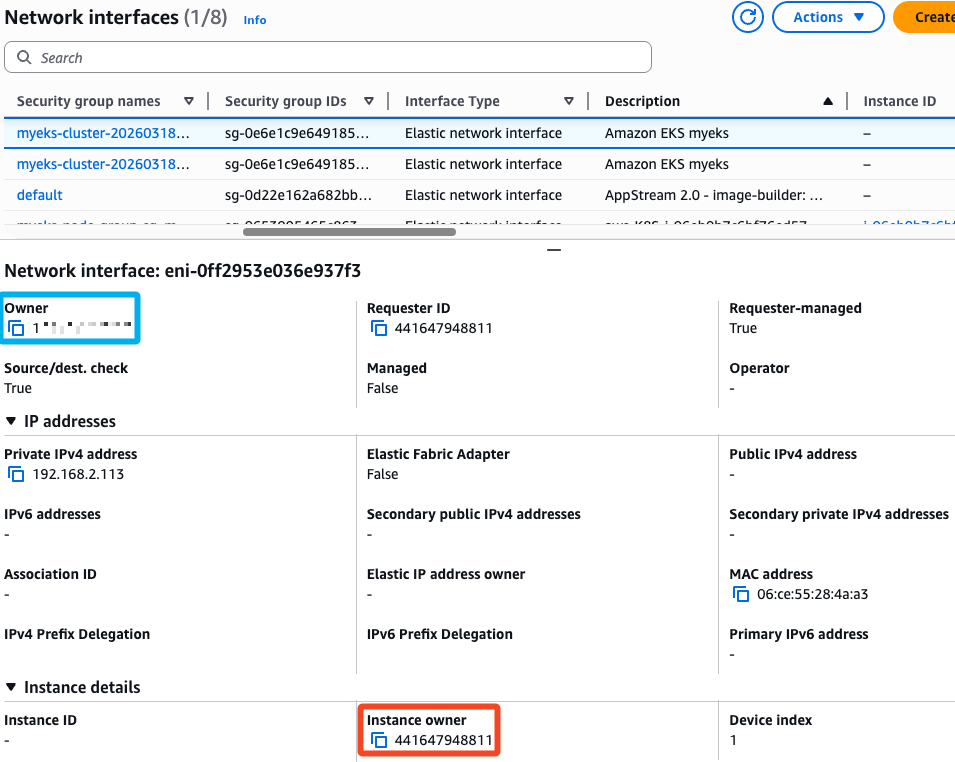

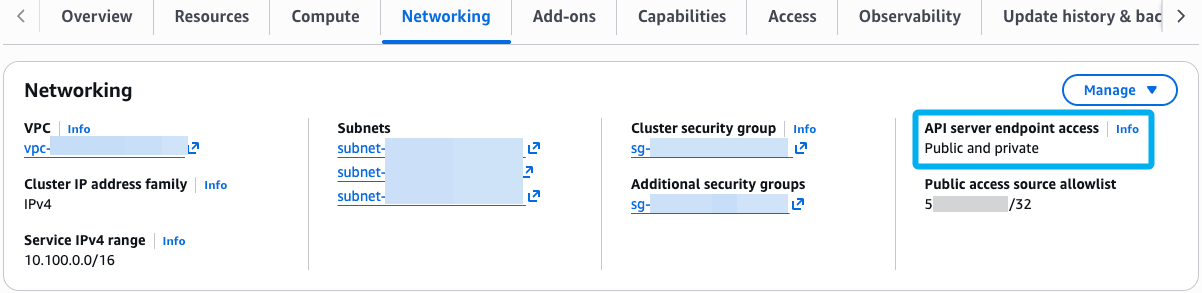

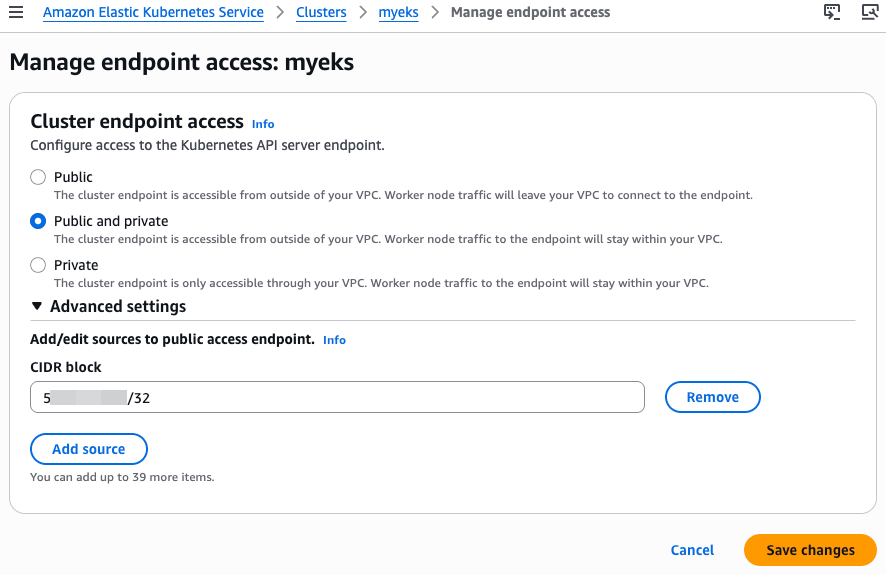

- Check EKS information and compare it with vanilla k8s

# Control plane

# Check EKS cluster information

v:Documents:s-aews:aews:1w $ kubectl cluster-info

Kubernetes control plane is running at https://CC5D719ACF5FB0EC4C92959793A4488F.yl4.ap-northeast-2.eks.amazonaws.com

CoreDNS is running at https://CC5D719ACF5FB0EC4C92959793A4488F.yl4.ap-northeast-2.eks.amazonaws.com/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

# Check the endpoint

v:Documents:s-aews:aews:1w $ CLUSTER_NAME=myeks

v:Documents:s-aews:aews:1w $ aws eks describe-cluster --name $CLUSTER_NAME | jq

{

"cluster": {

"name": "myeks",

"arn": "arn:aws:eks:ap-northeast-2:123123123:cluster/myeks",

"createdAt": "2026-03-18T21:30:05.697000+09:00",

"version": "1.34",

"endpoint": "https://CC5D719ACF5FB0EC4C92959793A4488F.yl4.ap-northeast-2.eks.amazonaws.com",

"roleArn": "arn:aws:iam::123123123:role/myeks-cluster-20260318122941951100000001",

"resourcesVpcConfig": {

"subnetIds": [

"subnet-06b33fd18193e693b",

"subnet-028f4b3ae2da413cf",

"subnet-077140ca4e1985111"

],

"securityGroupIds": [

"sg-0e6e1c9e649185332"

],

"clusterSecurityGroupId": "sg-0d6d36714729e81b1",

"vpcId": "vpc-045dd0f66fad655bf",

"endpointPublicAccess": true,

"endpointPrivateAccess": false,

"publicAccessCidrs": [

"0.0.0.0/0"

]

},

"kubernetesNetworkConfig": {

"serviceIpv4Cidr": "10.100.0.0/16",

"ipFamily": "ipv4"

},

"logging": {

"clusterLogging": [

{

"types": [

"api",

"audit",

"authenticator",

"controllerManager",

"scheduler"

],

"enabled": false

}

]

},

"identity": {

"oidc": {

"issuer": "https://oidc.eks.ap-northeast-2.amazonaws.com/id/CC5D719ACF5FB0EC4C92959793A4488F"

}

},

"status": "ACTIVE",

"certificateAuthority": {

"data": "LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURCVENDQWUyZ0F3SUJBZ0lJQU5uL3ovTUFlaWd3RFFZSktvWklodmNOQVFFTEJRQXdGVEVUTUJFR0ExVUUKQXhNS2EzVmlaWEp1WlhSbGN6QWVGdzB5TmpBek1UZ3hNakk1TWpoYUZ3MHpOakF6TVRVeE1qTTBNamhhTUJVeApFekFSQmdOVkJBTVRDbXQxWW1WeWJtVjBaWE13Z2dFaU1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQkR3QXdnZ0VLCkFvSUJBUUMwUTYyK1ZudnV6aXdDTmx2SlIrRTNFUTY5OXpRNVB6V3JHb0dXNVN1L2VPczlsTklmYjVSVmU4M3EKRXNUWVpCUmY3YXluVFdVOHRVVGYzRFZWNzA5VTJPTW1mRWIzU0lCakhUb1lVS2xCN1RWaWVPY0NrdkVKRmRCUgpuTnBqYnpPVW5HMFNRcHFjMG5XSTMzcmxuTXhGM2xWMXRpV3FKSFovRlFkZE8zNmFoSldMQkRIbWUvL2tyTHZQClN1Z1NaeWVwWVQ3am9PRzhBWEVSeTFnMXBJdFVFSzkvd09QTk13R3J1S3hoU1RWUUorVFRyWTJpS2poVFllT24KTmJ0UGlnK0tHVjEvT2pzL0pWcFpoNHRxaCtObmVjd3ZjYmQ5U1lwbVhvVmJGSEVCb1FrbkMwOWxVOHJYYkM3Ngo4M1dyaFIxU2Rzdmpaai9HMWJjdy9qMXlwK2xMQWdNQkFBR2pXVEJYTUE0R0ExVWREd0VCL3dRRUF3SUNwREFQCkJnTlZIUk1CQWY4RUJUQURBUUgvTUIwR0ExVWREZ1FXQkJRS1hLY0h6Ym16cXIwR1dGVTk4Q1NVdU9uTHJ6QVYKQmdOVkhSRUVEakFNZ2dwcmRXSmxjbTVsZEdWek1BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQ2pocUpjMUdPcQp3U0NVNWF1OWJlVXliRG5ZWXZQa0xLNDJDd2lWY3p0TzhRZ1Z6amptUk5OSjQxYWJrbnUrV0l4blJ0Tkp3amljCkNrN3ZFN0dEeTJGU0pPLzRjdXBvYkhDcUlPRXlNdHZtVFRoWEFvVDJST2JNaG1DTGtBY2p1YUMxS2FuV1BrMkkKN1ZVQzcvY3pDanVXb1oyWWpSVTVlSG5tUk5oUk9VckZSMlRhamt4SEFxZ2N6VVMybEpoOTZmc1RhR09pdjE3WQpnRis2aTZqdkNlSnhUV0xyOHY2NUpMcFFmcHBWVzloYVhQTVNuOUlsUXc2NmUrOUdRdlRvalhkTTVEUFl3dm51CmxHTGJtWm5UOFo2c0h3UzZjb3NIaU1LSUV4WGk0RXZPZG13QUNIQTZLTE5Ba3BzdWg4b0R0dXBVUnFjdE9lK2wKSjRrWElpQjR5ekg1Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K"

},

"platformVersion": "eks.18",

"tags": {

"Terraform": "true",

"Environment": "cloudneta-lab"

},

"encryptionConfig": [

{

"resources": [

"secrets"

],

"provider": {

"keyArn": "arn:aws:kms:ap-northeast-2:123123123:key/8c81b765-2233-44e4-84ee-2bbcce038fb3"

}

}

]

}

}

v:Documents:s-aews:aews:1w $ aws eks describe-cluster --name $CLUSTER_NAME | jq -r .cluster.endpoint

https://CC5D719ACF5FB0EC4C92959793A4488F.yl4.ap-northeast-2.eks.amazonaws.com

v:Documents:s-aews:aews:1w $ APIDNS=$(aws eks describe-cluster --name $CLUSTER_NAME | jq -r .cluster.endpoint | cut -d '/' -f 3)

dig +short $APIDNS

43.202.134.241

15.164.74.222
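
The `cut -d '/' -f 3` step above pulls the hostname out of the endpoint URL. The same result can be had with shell parameter expansion alone, sketched below. The endpoint value is copied from the output above, and `api_host` is an illustrative variable name.

```shell
endpoint="https://CC5D719ACF5FB0EC4C92959793A4488F.yl4.ap-northeast-2.eks.amazonaws.com"

# Drop the scheme ("https://"), then anything from the first "/" onward.
api_host=${endpoint#*://}
api_host=${api_host%%/*}
echo "$api_host"
```

The resulting value can be passed to `dig +short` just like `$APIDNS`.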

v:Documents:s-aews:aews:1w $ curl -s ipinfo.io/43.202.134.241

{

"ip": "43.202.134.241",

"hostname": "ec2-43-202-134-241.ap-northeast-2.compute.amazonaws.com",

"city": "Incheon",

"region": "Incheon",

"country": "KR",

"loc": "37.4565,126.7052",

"org": "AS16509 Amazon.com, Inc.",

"postal": "21505",

"timezone": "Asia/Seoul",

"readme": "https://ipinfo.io/missingauth"

}

# Check EKS node group information

v:Documents:s-aews:aews:1w $ aws eks describe-nodegroup --cluster-name $CLUSTER_NAME --nodegroup-name $CLUSTER_NAME-node-group | jq

{

"nodegroup": {

"nodegroupName": "myeks-node-group",

"nodegroupArn": "arn:aws:eks:ap-northeast-2:143649248460:nodegroup/myeks/myeks-node-group/0ace8079-9855-fe64-5622-36bda851f604",

"clusterName": "myeks",

"version": "1.34",

"releaseVersion": "1.34.4-20260311",

"createdAt": "2026-03-18T21:37:13.964000+09:00",

"modifiedAt": "2026-03-18T21:58:46.540000+09:00",

"status": "ACTIVE",

"capacityType": "ON_DEMAND",

"scalingConfig": {

"minSize": 1,

"maxSize": 4,

"desiredSize": 2

},

"instanceTypes": [

"t3.medium"

],

"subnets": [

"subnet-06b33fd18193e693b",

"subnet-028f4b3ae2da413cf",

"subnet-077140ca4e1985111"

],

"amiType": "AL2023_x86_64_STANDARD",

"nodeRole": "arn:aws:iam::143649248460:role/myeks-node-group-eks-node-group-20260318122955045200000005",

"labels": {},

"resources": {

"autoScalingGroups": [

{

"name": "eks-myeks-node-group-0ace8079-9855-fe64-5622-36bda851f604"

}

]

},

"health": {

"issues": []

},

"updateConfig": {

"maxUnavailablePercentage": 33

},

"launchTemplate": {

"name": "default-20260318123703010100000008",

"version": "1",

"id": "lt-0c9986db3ebbdd005"

},

"tags": {

"Terraform": "true",

"Environment": "cloudneta-lab",

"Name": "myeks-node-group"

}

}

}

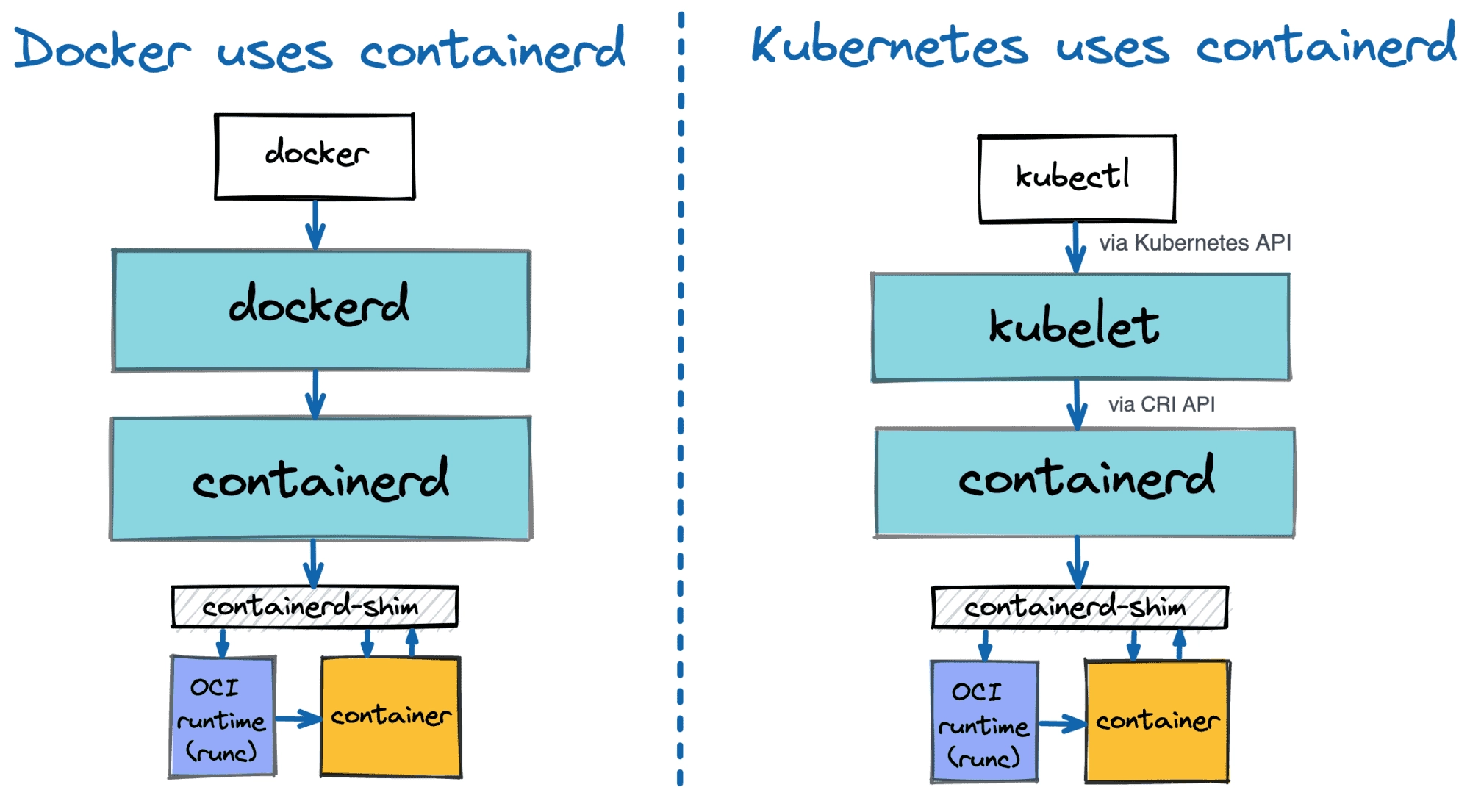

# Check node information: OS and container runtime

v:Documents:s-aews:aews:1w $ kubectl get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

ip-192-168-1-31.ap-northeast-2.compute.internal Ready <none> 25m v1.34.4-eks-f69f56f 192.168.1.31 43.202.52.69 Amazon Linux 2023.10.20260302 6.12.73-95.123.amzn2023.x86_64 containerd://2.1.5

ip-192-168-2-173.ap-northeast-2.compute.internal Ready <none> 25m v1.34.4-eks-f69f56f 192.168.2.173 13.125.148.230 Amazon Linux 2023.10.20260302 6.12.73-95.123.amzn2023.x86_64 containerd://2.1.5

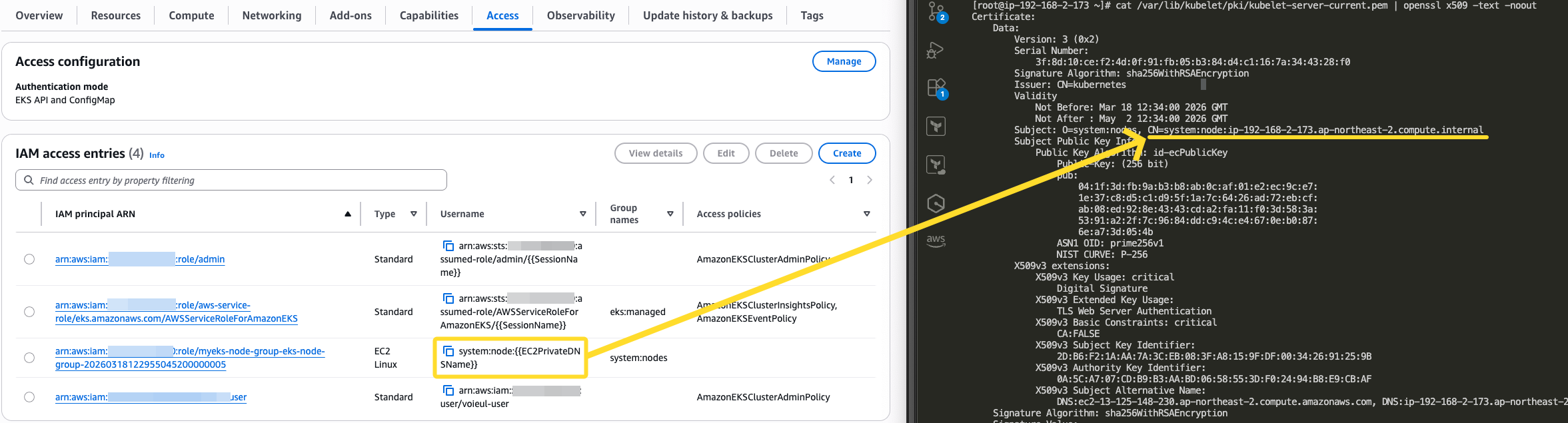

# Check authentication information: details are covered in the security session

v:Documents:s-aews:aews:1w $ kubectl get node -v=6

I0318 22:04:35.324760 64149 cmd.go:527] kubectl command headers turned on

I0318 22:04:35.335149 64149 loader.go:402] Config loaded from file: /Users/test-user/.kube/config

I0318 22:04:35.335881 64149 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0318 22:04:35.335898 64149 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0318 22:04:35.335900 64149 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0318 22:04:35.335903 64149 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0318 22:04:35.335905 64149 envvar.go:172] "Feature gate default state" feature="InOrderInformers" enabled=true

I0318 22:04:36.563534 64149 round_trippers.go:632] "Response" verb="GET" url="https://CC5D719ACF5FB0EC4C92959793A4488F.yl4.ap-northeast-2.eks.amazonaws.com/api/v1/nodes?limit=500" status="200 OK" milliseconds=1214

NAME STATUS ROLES AGE VERSION

ip-192-168-1-31.ap-northeast-2.compute.internal Ready <none> 26m v1.34.4-eks-f69f56f

ip-192-168-2-173.ap-northeast-2.compute.internal Ready <none> 26m v1.34.4-eks-f69f56f

## Get a token for authentication with an Amazon EKS cluster

v:Documents:s-aews:aews:1w $ AWS_DEFAULT_REGION=ap-northeast-2

v:Documents:s-aews:aews:1w $ aws eks get-token help

v:Documents:s-aews:aews:1w $ aws eks get-token --cluster-name $CLUSTER_NAME --region $AWS_DEFAULT_REGION | jq

{

"kind": "ExecCredential",

"apiVersion": "client.authentication.k8s.io/v1beta1",

"spec": {},

"status": {

"expirationTimestamp": "2026-03-18T13:21:08Z",

"token": "k8s-aws-v1.aHR0cHM6Ly9zdHMuYXAtbm9ydGhlYXN0LTIuYW1hem9uYXdzLmNvbS8_QWN0aW9uPUdldENhbGxlcklkZW50aXR5JlZlcnNpb249MjAxMS0wNi0xNSZYLUFtei1BbGdvcml0aG09QVdTNC1ITUFDLVNIQTI1NiZYLUFtei1DcmVkZW50aWFsPUFLSUFTQzRSSlNUR09RM1pJSEVZJTJGMjAyNjAzMTglMkZhcC1ub3J0aGVhc3QtMiUyRnN0cyUyRmF3czRfcmVxdWVzdCZYLUFtei1EYXRlPTIwMjYwMzE4VDEzMDcwOFomWC1BbXotRXhwaXJlcz02MCZYLUFtei1TaWduZWRIZWFkZXJzPWhvc3QlM0J4LWs4cy1hd3MtaWQmWC1BbXotU2lnbmF0dXJlPWZiZWY3ODE3NzFiMjM1MTEwYTllNDY1MmIyYzdmNzFjZDM0NWYyNDcxZjUyNjI2ZjBlOWIzZTVlMjI5ODY1NWU"

}

}
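
The `token` field above is the prefix `k8s-aws-v1.` followed by a base64url-encoded, presigned STS `GetCallerIdentity` URL. A rough sketch of decoding such a token to inspect that URL follows. The helper name `decode_eks_token` and the shortened sample token (which omits the signature parameters) are assumptions for illustration.

```shell
# decode_eks_token is a hypothetical helper that prints the URL embedded in an EKS token.
decode_eks_token() {
  # Strip the fixed prefix, then map base64url characters back to standard base64.
  payload=${1#k8s-aws-v1.}
  payload=$(printf '%s' "$payload" | tr '_-' '/+')
  # Restore the '=' padding that base64url encoding omits.
  while [ $(( ${#payload} % 4 )) -ne 0 ]; do
    payload="${payload}="
  done
  printf '%s' "$payload" | base64 --decode
}

# Shortened sample token (illustrative; a real token also carries X-Amz-* query parameters).
sample_token='k8s-aws-v1.aHR0cHM6Ly9zdHMuYXAtbm9ydGhlYXN0LTIuYW1hem9uYXdzLmNvbS8_QWN0aW9uPUdldENhbGxlcklkZW50aXR5'
decode_eks_token "$sample_token"
echo
```

A real token is short-lived, but it still embeds the access key ID and a signature, so avoid pasting decoded tokens into shared notes.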

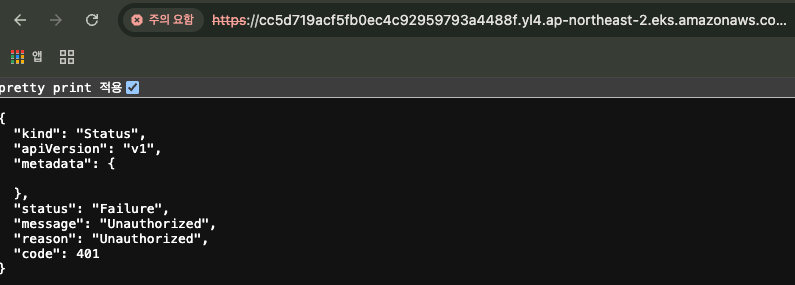

- Accessing "API server endpoint URL + /version" no longer exposes version information (it used to be exposed)

# Check system pod information

# Check pod information: how does pod placement differ from on-premises Kubernetes? What is notable about the pod IPs? Networking is covered in detail in week 2

v:Documents:s-aews:aews:1w $ kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

aws-node-b5tvm 2/2 Running 0 34m

aws-node-bln4t 2/2 Running 0 34m

coredns-d487b6fcb-77lxz 1/1 Running 0 34m

coredns-d487b6fcb-ng874 1/1 Running 0 34m

kube-proxy-fg2zs 1/1 Running 0 34m

kube-proxy-t4pvk 1/1 Running 0 34m

v:Documents:s-aews:aews:1w $ kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system aws-node-b5tvm 2/2 Running 0 35m

kube-system aws-node-bln4t 2/2 Running 0 35m

kube-system coredns-d487b6fcb-77lxz 1/1 Running 0 34m

kube-system coredns-d487b6fcb-ng874 1/1 Running 0 34m

kube-system kube-proxy-fg2zs 1/1 Running 0 34m

kube-system kube-proxy-t4pvk 1/1 Running 0 34m

v:Documents:s-aews:aews:1w $ kubectl get pod -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

aws-node-b5tvm 2/2 Running 0 35m 192.168.2.173 ip-192-168-2-173.ap-northeast-2.compute.internal <none> <none>

aws-node-bln4t 2/2 Running 0 35m 192.168.1.31 ip-192-168-1-31.ap-northeast-2.compute.internal <none> <none>

coredns-d487b6fcb-77lxz 1/1 Running 0 34m 192.168.1.100 ip-192-168-1-31.ap-northeast-2.compute.internal <none> <none>

coredns-d487b6fcb-ng874 1/1 Running 0 34m 192.168.2.230 ip-192-168-2-173.ap-northeast-2.compute.internal <none> <none>

kube-proxy-fg2zs 1/1 Running 0 34m 192.168.2.173 ip-192-168-2-173.ap-northeast-2.compute.internal <none> <none>

kube-proxy-t4pvk 1/1 Running 0 34m 192.168.1.31 ip-192-168-1-31.ap-northeast-2.compute.internal <none> <none>
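
One way to approach the pod IP question is to compare each pod's IP with its node's IP. In the output above, `aws-node` and `kube-proxy` report the node's own address (they typically run with host networking), while `coredns` has a separate address from the same 192.168.x.x range. The sketch below classifies rows of `kubectl get pod -o wide` output this way. It assumes the node IP can be derived from the `ip-A-B-C-D...` node name, and `classify_pod_ips` is a hypothetical helper.

```shell
# classify_pod_ips reads "kubectl get pod -o wide" rows (without the header) on stdin.
classify_pod_ips() {
  awk '{
    # Derive the node IP from a node name such as ip-192-168-1-31.<region>.compute.internal
    split($7, parts, ".")
    node_ip = parts[1]
    sub(/^ip-/, "", node_ip)
    gsub(/-/, ".", node_ip)
    # Field 6 is the pod IP; a match means the pod shares the node address.
    kind = ($6 == node_ip) ? "node-ip" : "own-ip"
    print $1, $6, kind
  }'
}

# Sample rows copied from the output above.
classify_pod_ips <<'EOF'
aws-node-b5tvm 2/2 Running 0 35m 192.168.2.173 ip-192-168-2-173.ap-northeast-2.compute.internal <none> <none>
coredns-d487b6fcb-77lxz 1/1 Running 0 34m 192.168.1.100 ip-192-168-1-31.ap-northeast-2.compute.internal <none> <none>
kube-proxy-t4pvk 1/1 Running 0 34m 192.168.1.31 ip-192-168-1-31.ap-northeast-2.compute.internal <none> <none>
EOF
```

With live data, `kubectl get pod -n kube-system -o wide --no-headers` could be piped into the same function.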

# Check all resources in the kube-system namespace

v:Documents:s-aews:aews:1w $ kubectl get deploy,ds,pod,cm,secret,svc,ep,endpointslice,pdb,sa,role,rolebinding -n kube-system

Warning: v1 Endpoints is deprecated in v1.33+; use discovery.k8s.io/v1 EndpointSlice

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/coredns 2/2 2 2 35m

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE

daemonset.apps/aws-node 2 2 2 2 2 <none> 36m

daemonset.apps/kube-proxy 2 2 2 2 2 <none> 35m

NAME READY STATUS RESTARTS AGE

pod/aws-node-b5tvm 2/2 Running 0 35m

pod/aws-node-bln4t 2/2 Running 0 35m

pod/coredns-d487b6fcb-77lxz 1/1 Running 0 35m

pod/coredns-d487b6fcb-ng874 1/1 Running 0 35m

pod/kube-proxy-fg2zs 1/1 Running 0 35m

pod/kube-proxy-t4pvk 1/1 Running 0 35m

NAME DATA AGE

configmap/amazon-vpc-cni 7 36m

configmap/aws-auth 1 36m

configmap/coredns 1 35m

configmap/extension-apiserver-authentication 6 39m

configmap/kube-apiserver-legacy-service-account-token-tracking 1 39m

configmap/kube-proxy 1 35m

configmap/kube-proxy-config 1 35m

configmap/kube-root-ca.crt 1 39m

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/eks-extension-metrics-api ClusterIP 10.100.83.92 <none> 443/TCP 39m

service/kube-dns ClusterIP 10.100.0.10 <none> 53/UDP,53/TCP,9153/TCP 35m

NAME ENDPOINTS AGE

endpoints/eks-extension-metrics-api 172.0.32.0:10443 39m

endpoints/kube-dns 192.168.1.100:53,192.168.2.230:53,192.168.1.100:53 + 3 more... 35m

NAME ADDRESSTYPE PORTS ENDPOINTS AGE

endpointslice.discovery.k8s.io/eks-extension-metrics-api-zpgqq IPv4 10443 172.0.32.0 39m

endpointslice.discovery.k8s.io/kube-dns-l2d4m IPv4 9153,53,53 192.168.2.230,192.168.1.100 35m

NAME MIN AVAILABLE MAX UNAVAILABLE ALLOWED DISRUPTIONS AGE

poddisruptionbudget.policy/coredns N/A 1 1 35m

NAME SECRETS AGE

serviceaccount/attachdetach-controller 0 39m

serviceaccount/aws-cloud-provider 0 39m

serviceaccount/aws-node 0 36m

serviceaccount/certificate-controller 0 39m

serviceaccount/clusterrole-aggregation-controller 0 39m

serviceaccount/coredns 0 35m

serviceaccount/cronjob-controller 0 39m

serviceaccount/daemon-set-controller 0 39m

serviceaccount/default 0 39m

serviceaccount/deployment-controller 0 39m

serviceaccount/disruption-controller 0 39m

serviceaccount/endpoint-controller 0 39m

serviceaccount/endpointslice-controller 0 39m

serviceaccount/endpointslicemirroring-controller 0 39m

serviceaccount/ephemeral-volume-controller 0 39m

serviceaccount/expand-controller 0 39m

serviceaccount/generic-garbage-collector 0 39m

serviceaccount/horizontal-pod-autoscaler 0 39m

serviceaccount/job-controller 0 39m

serviceaccount/kube-proxy 0 35m

serviceaccount/legacy-service-account-token-cleaner 0 39m

serviceaccount/namespace-controller 0 39m

serviceaccount/node-controller 0 39m

serviceaccount/persistent-volume-binder 0 39m

serviceaccount/pod-garbage-collector 0 39m

serviceaccount/pv-protection-controller 0 39m

serviceaccount/pvc-protection-controller 0 39m

serviceaccount/replicaset-controller 0 39m

serviceaccount/replication-controller 0 39m

serviceaccount/resource-claim-controller 0 39m

serviceaccount/resourcequota-controller 0 39m

serviceaccount/root-ca-cert-publisher 0 39m

serviceaccount/service-account-controller 0 39m

serviceaccount/service-cidrs-controller 0 39m

serviceaccount/service-controller 0 39m

serviceaccount/statefulset-controller 0 39m

serviceaccount/tagging-controller 0 39m

serviceaccount/ttl-after-finished-controller 0 39m

serviceaccount/ttl-controller 0 39m

serviceaccount/validatingadmissionpolicy-status-controller 0 39m

serviceaccount/volumeattributesclass-protection-controller 0 39m

NAME CREATED AT

role.rbac.authorization.k8s.io/eks-vpc-resource-controller-role 2026-03-18T12:35:17Z

role.rbac.authorization.k8s.io/eks:addon-manager 2026-03-18T12:35:16Z

role.rbac.authorization.k8s.io/eks:authenticator 2026-03-18T12:35:14Z

role.rbac.authorization.k8s.io/eks:az-poller 2026-03-18T12:35:14Z

role.rbac.authorization.k8s.io/eks:coredns-autoscaler 2026-03-18T12:35:14Z

role.rbac.authorization.k8s.io/eks:fargate-manager 2026-03-18T12:35:16Z

role.rbac.authorization.k8s.io/eks:network-policy-controller 2026-03-18T12:35:17Z

role.rbac.authorization.k8s.io/eks:node-manager 2026-03-18T12:35:16Z

role.rbac.authorization.k8s.io/eks:service-operations-configmaps 2026-03-18T12:35:15Z

role.rbac.authorization.k8s.io/extension-apiserver-authentication-reader 2026-03-18T12:35:13Z

role.rbac.authorization.k8s.io/system::leader-locking-kube-controller-manager 2026-03-18T12:35:13Z

role.rbac.authorization.k8s.io/system::leader-locking-kube-scheduler 2026-03-18T12:35:13Z

role.rbac.authorization.k8s.io/system:controller:bootstrap-signer 2026-03-18T12:35:13Z

role.rbac.authorization.k8s.io/system:controller:cloud-provider 2026-03-18T12:35:13Z

role.rbac.authorization.k8s.io/system:controller:token-cleaner 2026-03-18T12:35:13Z

NAME ROLE AGE

rolebinding.rbac.authorization.k8s.io/eks-vpc-resource-controller-rolebinding Role/eks-vpc-resource-controller-role 39m

rolebinding.rbac.authorization.k8s.io/eks:addon-manager Role/eks:addon-manager 39m

rolebinding.rbac.authorization.k8s.io/eks:authenticator Role/eks:authenticator 39m

rolebinding.rbac.authorization.k8s.io/eks:az-poller Role/eks:az-poller 39m

rolebinding.rbac.authorization.k8s.io/eks:coredns-autoscaler Role/eks:coredns-autoscaler 39m

rolebinding.rbac.authorization.k8s.io/eks:fargate-manager Role/eks:fargate-manager 39m

rolebinding.rbac.authorization.k8s.io/eks:network-policy-controller Role/eks:network-policy-controller 39m

rolebinding.rbac.authorization.k8s.io/eks:node-manager Role/eks:node-manager 39m

rolebinding.rbac.authorization.k8s.io/eks:service-operations Role/eks:service-operations-configmaps 39m

rolebinding.rbac.authorization.k8s.io/system::extension-apiserver-authentication-reader Role/extension-apiserver-authentication-reader 39m

rolebinding.rbac.authorization.k8s.io/system::leader-locking-kube-controller-manager Role/system::leader-locking-kube-controller-manager 39m

rolebinding.rbac.authorization.k8s.io/system::leader-locking-kube-scheduler Role/system::leader-locking-kube-scheduler 39m

rolebinding.rbac.authorization.k8s.io/system:controller:bootstrap-signer Role/system:controller:bootstrap-signer 39m

rolebinding.rbac.authorization.k8s.io/system:controller:cloud-provider Role/system:controller:cloud-provider 39m

rolebinding.rbac.authorization.k8s.io/system:controller:token-cleaner Role/system:controller:token-cleaner 39m

# Check the container image of every pod: note that they are pulled from the dkr.ecr registry!

v:Documents:s-aews:aews:1w $ kubectl get pods --all-namespaces -o jsonpath="{.items[*].spec.containers[*].image}" | tr -s '[[:space:]]' '\n' | sort | uniq -c

2 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon-k8s-cni:v1.21.1-eksbuild.5

2 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/amazon/aws-network-policy-agent:v1.3.1-eksbuild.1

2 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/eks/coredns:v1.13.2-eksbuild.3

2 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/eks/kube-proxy:v1.34.5-eksbuild.2
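# (Reference) To make the registry check above easier to read, the image list can be grouped by registry host. This is only a minimal sketch: count_registries is a hypothetical helper name that is not part of the original notes, and it assumes every image reference starts with a registry host followed by "/" (a bare reference such as "nginx:latest" would be counted under its own name).

```shell
# Hypothetical helper (assumption, not from the original notes):
# reads one image reference per line on stdin and prints "<count> <registry host>".
count_registries() {
  # Split on "/" and treat the first field as the registry host; skip empty lines.
  awk -F/ 'NF { count[$1]++ } END { for (h in count) print count[h], h }' | sort -k2
}

# Usage against a live cluster (requires kubectl access), reusing the command above:
# kubectl get pods --all-namespaces -o jsonpath="{.items[*].spec.containers[*].image}" \
#   | tr -s '[[:space:]]' '\n' | count_registries
```

# With the output above, every image should fall under the single host 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com.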

# kube-proxy: iptables mode, bind 0.0.0.0, conntrack, etc.

v:Documents:s-aews:aews:1w $ kubectl describe pod -n kube-system -l k8s-app=kube-proxy

Name: kube-proxy-fg2zs

Namespace: kube-system

Priority: 2000001000

Priority Class Name: system-node-critical

Service Account: kube-proxy

Node: ip-192-168-2-173.ap-northeast-2.compute.internal/192.168.2.173

Start Time: Wed, 18 Mar 2026 21:39:05 +0900

Labels: controller-revision-hash=f7bb99b97

k8s-app=kube-proxy

pod-template-generation=1

Annotations: <none>

Status: Running

IP: 192.168.2.173

IPs:

IP: 192.168.2.173

Controlled By: DaemonSet/kube-proxy

Containers:

kube-proxy:

Container ID: containerd://308970dfd717b2b93e5341ea872e92ef972ca66b2a38aa864afa119f4bcaebb4

Image: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/eks/kube-proxy:v1.34.5-eksbuild.2

Image ID: 602401143452.dkr.ecr.ap-northeast-2.amazonaws.com/eks/kube-proxy@sha256:839e8625a1b230e9cd90323a484ed2ec0c2dbb2dca63cc8cbe086e8b1252d8a5

Port: <none>

Host Port: <none>

Command:

kube-proxy

--v=2

--config=/var/lib/kube-proxy-config/config

--hostname-override=$(NODE_NAME)

State: Running

Started: Wed, 18 Mar 2026 21:39:08 +0900

Ready: True

Restart Count: 0

Requests:

cpu: 100m

Environment:

NODE_NAME: (v1:spec.nodeName)

Mounts:

/lib/modules from lib-modules (ro)

/run/xtables.lock from xtables-lock (rw)

/var/lib/kube-proxy-config/ from config (rw)

/var/lib/kube-proxy/ from kubeconfig (rw)

/var/log from varlog (rw)

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-rhthb (ro)

Conditions:

Type Status

PodReadyToStartContainers True

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

varlog:

Type: HostPath (bare host directory volume)

Path: /var/log

HostPathType:

xtables-lock:

Type: HostPath (bare host directory volume)

Path: /run/xtables.lock

HostPathType: FileOrCreate

lib-modules:

Type: HostPath (bare host directory volume)

Path: /lib/modules

HostPathType:

kubeconfig:

Type: ConfigMap (a volume populated by a ConfigMap)

Name: kube-proxy

Optional: false

config:

Type: ConfigMap (a volume populated by a ConfigMap)

Name: kube-proxy-config

Optional: false

kube-api-access-rhthb:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

Optional: false