** These notes were organized and written based on the CI/CD Study run by Gasida.

1. Cluster Management

Deploying the kind mgmt k8s cluster + ingress-nginx + Argo CD

# Deploy the kind k8s cluster

$ kind create cluster --name mgmt --image kindest/node:v1.32.8 --config - <<EOF

> kind: Cluster

> apiVersion: kind.x-k8s.io/v1alpha4

> nodes:

> - role: control-plane

>   labels:

>     ingress-ready: true

>   extraPortMappings:

>   - containerPort: 80

>     hostPort: 80

>     protocol: TCP

>   - containerPort: 443

>     hostPort: 443

>     protocol: TCP

>   - containerPort: 30000

>     hostPort: 30000

> EOF

Creating cluster "mgmt" ...

✓ Ensuring node image (kindest/node:v1.32.8) 🖼

✓ Preparing nodes 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

Set kubectl context to "kind-mgmt"

You can now use your cluster with:

kubectl cluster-info --context kind-mgmt

Not sure what to do next? 😅 Check out https://kind.sigs.k8s.io/docs/user/quick-start/

# Deploy NGINX Ingress

$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/main/deploy/static/provider/kind/deploy.yaml

namespace/ingress-nginx created

serviceaccount/ingress-nginx created

serviceaccount/ingress-nginx-admission created

role.rbac.authorization.k8s.io/ingress-nginx created

role.rbac.authorization.k8s.io/ingress-nginx-admission created

clusterrole.rbac.authorization.k8s.io/ingress-nginx created

clusterrole.rbac.authorization.k8s.io/ingress-nginx-admission created

rolebinding.rbac.authorization.k8s.io/ingress-nginx created

rolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx created

clusterrolebinding.rbac.authorization.k8s.io/ingress-nginx-admission created

configmap/ingress-nginx-controller created

service/ingress-nginx-controller created

service/ingress-nginx-controller-admission created

deployment.apps/ingress-nginx-controller created

job.batch/ingress-nginx-admission-create created

job.batch/ingress-nginx-admission-patch created

ingressclass.networking.k8s.io/nginx created

validatingwebhookconfiguration.admissionregistration.k8s.io/ingress-nginx-admission created
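The controller Pod needs some time to start after `kubectl apply`, and patching it or creating Ingress objects too early can fail. The kind ingress guide waits for it with `kubectl wait`; below is a minimal sketch of that step wrapped in a helper. The function name `wait_ingress_ready` and the 90s default timeout are my own assumptions, not part of the study material.

```shell
# Block until the ingress-nginx controller Pod reports Ready, or the timeout expires.
# Hypothetical helper; the selector matches the label used by the kind manifest.
wait_ingress_ready() {
  local timeout="${1:-90s}"
  kubectl wait --namespace ingress-nginx \
    --for=condition=ready pod \
    --selector=app.kubernetes.io/component=controller \
    --timeout="$timeout"
}
```

Usage: `wait_ingress_ready` (or `wait_ingress_ready 180s` on a slow machine).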

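# Enable SSL passthrough on the ingress-nginx controller (the ssl-passthrough Ingress annotation has no effect without this flag)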

$ kubectl get deployment ingress-nginx-controller -n ingress-nginx -o yaml \

> | sed '/- --publish-status-address=localhost/a\

>         - --enable-ssl-passthrough' | kubectl apply -f -

deployment.apps/ingress-nginx-controller configured
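The `sed` approach depends on the exact indentation of the exported YAML and adds a duplicate flag if it is run twice. As an alternative, a JSON patch can append the flag to the container's `args` array. This sketch assumes the controller is the first container (index 0) in the Deployment, and `enable_ssl_passthrough` is a hypothetical helper name.

```shell
# Append --enable-ssl-passthrough to the controller args, unless it is already there.
enable_ssl_passthrough() {
  local ns="ingress-nginx" deploy="ingress-nginx-controller" args
  args=$(kubectl -n "$ns" get deploy "$deploy" \
    -o jsonpath='{.spec.template.spec.containers[0].args}') || return 1
  case "$args" in
    *--enable-ssl-passthrough*)
      echo "already enabled"
      return 0
      ;;
  esac
  # "/args/-" means "append to the end of the array" in JSON Patch.
  kubectl -n "$ns" patch deploy "$deploy" --type=json \
    -p='[{"op":"add","path":"/spec/template/spec/containers/0/args/-","value":"--enable-ssl-passthrough"}]'
}
```

Patching the Deployment template triggers a rollout, so the controller Pod is recreated either way.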

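# Create a self-signed certificate for argocd.example.com and store it as the argocd-server-tls Secret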

$ openssl req -x509 -nodes -days 365 -newkey rsa:2048 \

> -keyout argocd.example.com.key \

> -out argocd.example.com.crt \

> -subj "/CN=argocd.example.com/O=argocd"


$ kubectl create ns argocd

namespace/argocd created

$ kubectl -n argocd create secret tls argocd-server-tls \

> --cert=argocd.example.com.crt \

> --key=argocd.example.com.key

secret/argocd-server-tls created
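The certificate above only sets the host name in the CN. Many TLS clients (browsers, and Go-based tools such as the argocd CLI) validate against the Subject Alternative Name instead, so a CN-only certificate is rejected unless verification is disabled, as `--insecure` does in this lab. A sketch that also sets the SAN, assuming OpenSSL 1.1.1 or later for `-addext`; `make_self_signed_cert` is a hypothetical helper name.

```shell
# Create <dir>/<host>.key and <dir>/<host>.crt, a self-signed pair whose SAN matches <host>.
make_self_signed_cert() {
  local host="$1" dir="${2:-.}"
  openssl req -x509 -nodes -days 365 -newkey rsa:2048 \
    -keyout "${dir}/${host}.key" \
    -out "${dir}/${host}.crt" \
    -subj "/CN=${host}/O=argocd" \
    -addext "subjectAltName=DNS:${host}"
}
```

Usage: `make_self_signed_cert argocd.example.com`, then create the `argocd-server-tls` Secret from the two files exactly as above.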

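# Helm values for the Argo CD chart: expose argocd-server through an NGINX Ingress with TLS passthrough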

cat <<EOF > argocd-values.yaml

global:

  domain: argocd.example.com

server:

  ingress:

    enabled: true

    ingressClassName: nginx

    annotations:

      nginx.ingress.kubernetes.io/force-ssl-redirect: "true"

      nginx.ingress.kubernetes.io/ssl-passthrough: "true"

    tls: true

EOF

$ helm install argocd argo/argo-cd --version 9.0.5 -f argocd-values.yaml --namespace argocd
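The `helm install` above assumes the `argo` chart repository was already added on this machine. A sketch that registers the official Argo Helm repository first and uses `helm upgrade --install` so it can be re-run safely; `install_argocd` is a hypothetical helper name and its defaults mirror the values used above.

```shell
# Add the Argo Helm repo (no-op if present), refresh indexes, then install or upgrade Argo CD.
install_argocd() {
  local version="${1:-9.0.5}" values="${2:-argocd-values.yaml}"
  helm repo add argo https://argoproj.github.io/argo-helm >/dev/null 2>&1 || true
  helm repo update >/dev/null || return 1
  # --wait blocks until the chart's resources report ready.
  helm upgrade --install argocd argo/argo-cd --version "$version" \
    -f "$values" --namespace argocd --create-namespace --wait
}
```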

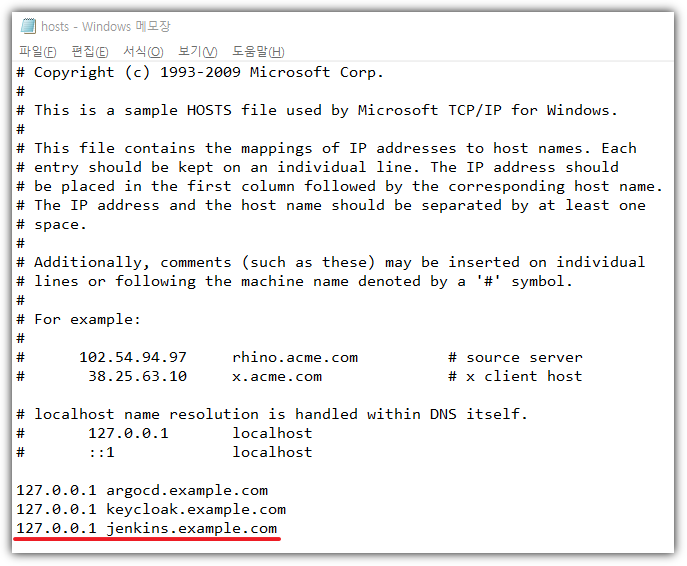

## On Windows, open Notepad as Administrator and add the following line to C:\Windows\System32\drivers\etc\hosts

127.0.0.1 argocd.example.com

# Check the initial admin password

$ kubectl -n argocd get secret argocd-initial-admin-secret -o jsonpath="{.data.password}" | base64 -d ;echo

AbH############
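The `argocd login` commands below read the password from `$ARGOPW`, which is not set anywhere in the transcript. A minimal sketch that stores the decoded initial password in that variable; `get_argocd_admin_pw` is a hypothetical helper name.

```shell
# Print the initial admin password (the Secret stores it base64-encoded).
get_argocd_admin_pw() {
  kubectl -n argocd get secret argocd-initial-admin-secret \
    -o jsonpath='{.data.password}' | base64 -d
}
```

Usage: `ARGOPW=$(get_argocd_admin_pw)`.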

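# Logging in through the ingress (argocd.example.com) failed with the error below, so a port-forward was used instead ($ARGOPW holds the initial admin password checked above)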

$ argocd login argocd.example.com --insecure --username admin --password $ARGOPW

{"level":"fatal","msg":"rpc error: code = Unknown desc = unexpected HTTP status code received from server: 307 (Temporary Redirect); malformed header: missing HTTP content-type","time":"2025-11-19T22:40:54+09:00"}

$ kubectl port-forward svc/argocd-server -n argocd 8000:443

$ argocd login localhost:8000 --insecure --username admin --password $ARGOPW

'admin:login' logged in successfully

Context 'localhost:8000' updated
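`kubectl port-forward` keeps running in the foreground, so the login above has to happen in a second terminal. A sketch that starts the tunnel in the background, logs in, and prints the port-forward PID so it can be stopped later with `kill`. The helper name `argocd_login_via_pf` is hypothetical, and the fixed 2-second sleep is a crude assumption about how long the tunnel takes to open. The argocd CLI also offers a `--port-forward` global flag that may make this unnecessary.

```shell
# Background port-forward to argocd-server, then log in through it.
argocd_login_via_pf() {
  local port="${1:-8000}" pf_pid
  kubectl -n argocd port-forward svc/argocd-server "${port}:443" >/dev/null 2>&1 &
  pf_pid=$!
  sleep 2   # give the tunnel a moment to open
  if ! argocd login "localhost:${port}" --insecure \
      --username admin --password "$ARGOPW" >&2; then
    kill "$pf_pid" 2>/dev/null   # do not leave a stray tunnel behind on failure
    return 1
  fi
  echo "$pf_pid"
}
```

Usage: `PF_PID=$(argocd_login_via_pf)`, and `kill "$PF_PID"` when finished.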

$ argocd cluster list

SERVER NAME VERSION STATUS MESSAGE PROJECT

https://kubernetes.default.svc in-cluster Unknown Cluster has no applications and is not being monitored.

$ argocd proj list

NAME DESCRIPTION DESTINATIONS SOURCES CLUSTER-RESOURCE-WHITELIST NAMESPACE-RESOURCE-BLACKLIST SIGNATURE-KEYS ORPHANED-RESOURCES DESTINATION-SERVICE-ACCOUNTS

default *,* * */* <none> <none> disabled <none>

$ argocd account list

NAME ENABLED CAPABILITIES

admin true login

# Change the admin account password: qwe12345

$ argocd account update-password --current-password $ARGOPW --new-password qwe12345

Password updated

Context 'localhost:8000' updated

Deploying the kind dev/prd k8s clusters & updating the k8s credentials

# Check before installation

$ kubectl config get-contexts

CURRENT NAME CLUSTER AUTHINFO NAMESPACE

* kind-mgmt kind-mgmt kind-mgmt

# Check the Docker network: mgmt container IP

$ docker network ls

NETWORK ID NAME DRIVER SCOPE

89ae2f73546f bridge bridge local

dc17350be0d8 host host local

9fa01c22fe6c kind bridge local

2db7e140ea5c none null local

$ docker network inspect kind | jq

[

{

"Name": "kind",

"Id": "9fa01c22fe6c83d1daabd0236a6701b73082ccae6dea24f874dcf18671475472",

"Created": "2025-10-25T12:21:19.640716723+09:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv4": true,

"EnableIPv6": true,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "172.18.0.0/16",

"Gateway": "172.18.0.1"

},

{

"Subnet": "fc00:f853:ccd:e793::/64",

"Gateway": "fc00:f853:ccd:e793::1"

}

]

},

"Internal": false,

"Attachable": false,

"Ingress": false,

"ConfigFrom": {

"Network": ""

},

"ConfigOnly": false,

"Containers": {

"7b1ca60115a80689cd862f6837aba2635b02126203105ea32f3adc0447151b36": {

"Name": "mgmt-control-plane",

"EndpointID": "c72a01444d75164b151122538230cc0aa0788f8f0d3943197c71e822c46982e6",

"MacAddress": "f2:50:89:9f:29:ad",

"IPv4Address": "172.18.0.2/16",

"IPv6Address": "fc00:f853:ccd:e793::2/64"

}

},

"Options": {

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.driver.mtu": "1500"

},

"Labels": {}

}

]

# Deploy the kind dev/prd k8s clusters

(⎈|kind-mgmt:N/A) gaji:~$ kind create cluster --name dev --image kindest/node:v1.32.8 --config - <<EOF

> kind: Cluster

> apiVersion: kind.x-k8s.io/v1alpha4

> nodes:

> - role: control-plane

>   extraPortMappings:

>   - containerPort: 31000

>     hostPort: 31000

> EOF

Creating cluster "dev" ...

✓ Ensuring node image (kindest/node:v1.32.8) 🖼

✓ Preparing nodes 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

Set kubectl context to "kind-dev"

You can now use your cluster with:

kubectl cluster-info --context kind-dev

Thanks for using kind! 😊

(⎈|kind-dev:N/A) gaji:~$ kind create cluster --name prd --image kindest/node:v1.32.8 --config - <<EOF

> kind: Cluster

> apiVersion: kind.x-k8s.io/v1alpha4

> nodes:

> - role: control-plane

>   extraPortMappings:

>   - containerPort: 32000

>     hostPort: 32000

> EOF

Creating cluster "prd" ...

✓ Ensuring node image (kindest/node:v1.32.8) 🖼

✓ Preparing nodes 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

Set kubectl context to "kind-prd"

You can now use your cluster with:

kubectl cluster-info --context kind-prd

Have a question, bug, or feature request? Let us know! https://kind.sigs.k8s.io/#community 🙂
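The dev and prd configs differ only in the cluster name and the NodePort mapped to the host. A sketch that generates the config from those two parameters so the same command creates either cluster; `kind_config` and `create_env_cluster` are hypothetical helper names.

```shell
# Print a single-node kind config that maps one NodePort to the same host port.
kind_config() {
  local port="$1"
  cat <<EOF
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
- role: control-plane
  extraPortMappings:
  - containerPort: ${port}
    hostPort: ${port}
EOF
}

# Create cluster <name> with NodePort <port> exposed on the host.
create_env_cluster() {
  local name="$1" port="$2"
  kind_config "$port" | kind create cluster --name "$name" \
    --image kindest/node:v1.32.8 --config -
}
```

Usage: `create_env_cluster dev 31000` and `create_env_cluster prd 32000`.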

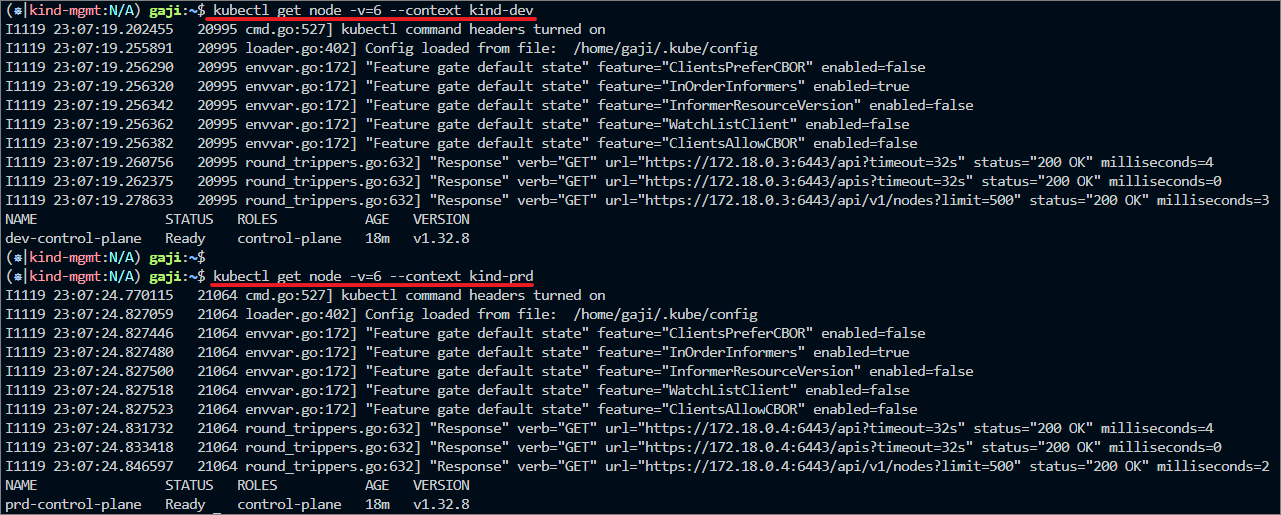

# Check after installation

$ kubectl config get-contexts

CURRENT NAME CLUSTER AUTHINFO NAMESPACE

kind-dev kind-dev kind-dev

kind-mgmt kind-mgmt kind-mgmt

* kind-prd kind-prd kind-prd

# Switch the kubectl context (credentials) back to the mgmt k8s cluster

(⎈|kind-prd:N/A) gaji:~$ kubectl config use-context kind-mgmt

Switched to context "kind-mgmt".

(⎈|kind-mgmt:N/A) gaji:~$ kubectl config get-contexts

CURRENT NAME CLUSTER AUTHINFO NAMESPACE

kind-dev kind-dev kind-dev

* kind-mgmt kind-mgmt kind-mgmt

kind-prd kind-prd kind-prd

# Inspect the kubeconfig, which now contains all three clusters

$ cat ~/.kube/config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0t... (base64 data omitted)

server: https://127.0.0.1:40527

name: kind-dev

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0t... (base64 data omitted)

server: https://127.0.0.1:37593

name: kind-mgmt

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0t... (base64 data omitted)

server: https://127.0.0.1:36889

name: kind-prd

contexts:

- context:

cluster: kind-dev

user: kind-dev

name: kind-dev

- context:

cluster: kind-mgmt

user: kind-mgmt

name: kind-mgmt

- context:

cluster: kind-prd

user: kind-prd

name: kind-prd

current-context: kind-mgmt

kind: Config

users:

- name: kind-dev

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0t... (base64 data omitted)

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQ... (base64 data omitted)

- name: kind-mgmt

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0t... (base64 data omitted)

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQ... (base64 data omitted)

- name: kind-prd

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0t... (base64 data omitted)

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQ... (base64 data omitted)

(⎈|kind-mgmt:N/A) gaji:~$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

83302723c7ef kindest/node:v1.32.8 "/usr/local/bin/entr…" 2 minutes ago Up 2 minutes 0.0.0.0:32000->32000/tcp, 127.0.0.1:36889->6443/tcp prd-control-plane

61bd44293dcb kindest/node:v1.32.8 "/usr/local/bin/entr…" 3 minutes ago Up 3 minutes 0.0.0.0:31000->31000/tcp, 127.0.0.1:40527->6443/tcp dev-control-plane

7b1ca60115a8 kindest/node:v1.32.8 "/usr/local/bin/entr…" 37 minutes ago Up 37 minutes 0.0.0.0:80->80/tcp, 0.0.0.0:443->443/tcp, 0.0.0.0:30000->30000/tcp, 127.0.0.1:37593->6443/tcp mgmt-control-plane

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get pod -A --context kind-mgmt

NAMESPACE NAME READY STATUS RESTARTS AGE

argocd argocd-application-controller-0 1/1 Running 0 22m

argocd argocd-applicationset-controller-bbff79c6f-7q7ks 1/1 Running 0 22m

argocd argocd-dex-server-6877ddf4f8-8zxxw 1/1 Running 0 22m

argocd argocd-notifications-controller-7b5658fc47-cbc2s 1/1 Running 0 22m

argocd argocd-redis-7d948674-gcjn4 1/1 Running 0 22m

argocd argocd-repo-server-7679dc55f5-74wfp 1/1 Running 0 22m

argocd argocd-server-7d769b6f48-hcs65 1/1 Running 0 22m

ingress-nginx ingress-nginx-controller-8676d56f78-c7tjm 1/1 Running 0 32m

kube-system coredns-668d6bf9bc-7brk9 1/1 Running 0 37m

kube-system coredns-668d6bf9bc-gzwp4 1/1 Running 0 37m

kube-system etcd-mgmt-control-plane 1/1 Running 0 37m

kube-system kindnet-kb7sx 1/1 Running 0 37m

kube-system kube-apiserver-mgmt-control-plane 1/1 Running 0 37m

kube-system kube-controller-manager-mgmt-control-plane 1/1 Running 0 37m

kube-system kube-proxy-rtkpj 1/1 Running 0 37m

kube-system kube-scheduler-mgmt-control-plane 1/1 Running 0 37m

local-path-storage local-path-provisioner-7dc846544d-gqtrg 1/1 Running 0 37m

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get pod -A --context kind-dev

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-668d6bf9bc-qc9d5 1/1 Running 0 2m43s

kube-system coredns-668d6bf9bc-x25rj 1/1 Running 0 2m43s

kube-system etcd-dev-control-plane 1/1 Running 0 2m50s

kube-system kindnet-qzw7j 1/1 Running 0 2m43s

kube-system kube-apiserver-dev-control-plane 1/1 Running 0 2m49s

kube-system kube-controller-manager-dev-control-plane 1/1 Running 0 2m49s

kube-system kube-proxy-xv6k4 1/1 Running 0 2m43s

kube-system kube-scheduler-dev-control-plane 1/1 Running 0 2m49s

local-path-storage local-path-provisioner-7dc846544d-sqsgq 1/1 Running 0 2m43s

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get pod -A --context kind-prd

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-668d6bf9bc-hvm5v 1/1 Running 0 2m23s

kube-system coredns-668d6bf9bc-qngws 1/1 Running 0 2m23s

kube-system etcd-prd-control-plane 1/1 Running 0 2m31s

kube-system kindnet-mwbs5 1/1 Running 0 2m23s

kube-system kube-apiserver-prd-control-plane 1/1 Running 0 2m29s

kube-system kube-controller-manager-prd-control-plane 1/1 Running 0 2m29s

kube-system kube-proxy-gmq8b 1/1 Running 0 2m23s

kube-system kube-scheduler-prd-control-plane 1/1 Running 0 2m29s

local-path-storage local-path-provisioner-7dc846544d-lztcs 1/1 Running 0 2m23s

# Set up aliases

alias k8s1='kubectl --context kind-mgmt'

alias k8s2='kubectl --context kind-dev'

alias k8s3='kubectl --context kind-prd'

# Verify

(⎈|kind-mgmt:N/A) gaji:~$ k8s1 get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

mgmt-control-plane Ready control-plane 38m v1.32.8 172.18.0.2 <none> Debian GNU/Linux 12 (bookworm) 6.6.87.2-microsoft-standard-WSL2 containerd://2.1.3

(⎈|kind-mgmt:N/A) gaji:~$ k8s2 get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

dev-control-plane Ready control-plane 3m50s v1.32.8 172.18.0.3 <none> Debian GNU/Linux 12 (bookworm) 6.6.87.2-microsoft-standard-WSL2 containerd://2.1.3

(⎈|kind-mgmt:N/A) gaji:~$ k8s3 get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

prd-control-plane Ready control-plane 3m31s v1.32.8 172.18.0.4 <none> Debian GNU/Linux 12 (bookworm) 6.6.87.2-microsoft-standard-WSL2 containerd://2.1.3

# Check the Docker network: container IPs

(⎈|kind-mgmt:N/A) gaji:~$ docker network inspect kind | grep -E 'Name|IPv4Address'

"Name": "kind",

"Name": "dev-control-plane",

"IPv4Address": "172.18.0.3/16",

"Name": "mgmt-control-plane",

"IPv4Address": "172.18.0.2/16",

"Name": "prd-control-plane",

"IPv4Address": "172.18.0.4/16",

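# All three nodes are attached to the same Docker network (kind), so they can reach each other by container name

# Docker's embedded DNS resolves dev-control-plane to 172.18.0.3, so curl reaches the dev API server on port 6443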

docker exec -it mgmt-control-plane curl -sk https://dev-control-plane:6443/version
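The same check can be run against both workload clusters in one go. A sketch, assuming the node containers keep kind's `<cluster>-control-plane` naming and that `curl` is available inside the node image (as the command above shows); `check_apiservers_from_mgmt` is a hypothetical helper name. It omits `-it` because no interactive TTY is needed, and `-t` fails when the script is not attached to a terminal.

```shell
# From inside the mgmt node, probe the dev and prd API servers by container name.
check_apiservers_from_mgmt() {
  local node
  for node in dev-control-plane prd-control-plane; do
    if docker exec mgmt-control-plane \
        curl -sk --max-time 5 "https://${node}:6443/version" >/dev/null 2>&1; then
      echo "${node}: reachable"
    else
      echo "${node}: unreachable"
    fi
  done
}
```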

# Verify ping connectivity from the local machine

(⎈|kind-mgmt:N/A) gaji:~$ ping -c 1 172.18.0.3

PING 172.18.0.3 (172.18.0.3) 56(84) bytes of data.

64 bytes from 172.18.0.3: icmp_seq=1 ttl=64 time=0.527 ms

--- 172.18.0.3 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

rtt min/avg/max/mdev = 0.527/0.527/0.527/0.000 ms

(⎈|kind-mgmt:N/A) gaji:~$ ping -c 1 172.18.0.2

PING 172.18.0.2 (172.18.0.2) 56(84) bytes of data.

64 bytes from 172.18.0.2: icmp_seq=1 ttl=64 time=0.108 ms

--- 172.18.0.2 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

rtt min/avg/max/mdev = 0.108/0.108/0.108/0.000 ms

(⎈|kind-mgmt:N/A) gaji:~$ ping -c 1 172.18.0.4

PING 172.18.0.4 (172.18.0.4) 56(84) bytes of data.

64 bytes from 172.18.0.4: icmp_seq=1 ttl=64 time=0.289 ms

--- 172.18.0.4 ping statistics ---

1 packets transmitted, 1 received, 0% packet loss, time 0ms

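rtt min/avg/max/mdev = 0.289/0.289/0.289/0.000 ms

- In the kubeconfig file, change the dev and prd server addresses to the node container IP and port (e.g. https://172.18.0.3:6443 for dev); leave mgmt as is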

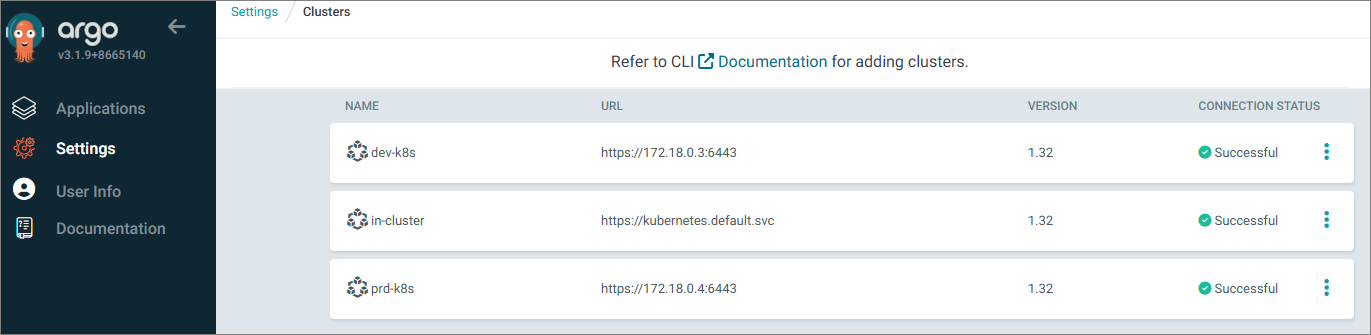

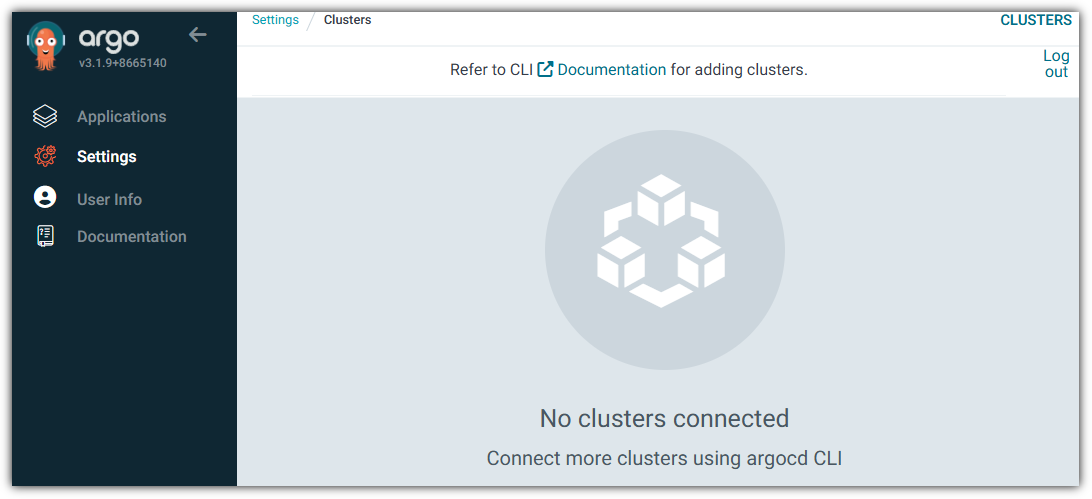

Registering the other K8s clusters in Argo CD

(⎈|kind-mgmt:N/A) gaji:~$ argocd cluster list

SERVER NAME VERSION STATUS MESSAGE PROJECT

https://kubernetes.default.svc in-cluster Unknown Cluster has no applications and is not being monitored.

(⎈|kind-mgmt:N/A) gaji:~$ argocd cluster list -o json | jq

[

{

"server": "https://kubernetes.default.svc",

"name": "in-cluster",

"config": {

"tlsClientConfig": {

"insecure": false

}

},

"connectionState": {

"status": "Unknown",

"message": "Cluster has no applications and is not being monitored.",

"attemptedAt": "2025-11-19T14:12:07Z"

},

"info": {

"connectionState": {

"status": "Unknown",

"message": "Cluster has no applications and is not being monitored.",

"attemptedAt": "2025-11-19T14:12:07Z"

},

"cacheInfo": {},

"applicationsCount": 0

}

}

]

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get secret -n argocd

NAME TYPE DATA AGE

argocd-initial-admin-secret Opaque 1 43m

argocd-notifications-secret Opaque 0 43m

argocd-redis Opaque 1 55m

argocd-secret Opaque 3 43m

argocd-server-tls kubernetes.io/tls 2 56m

sh.helm.release.v1.argocd.v1 helm.sh/release.v1 1 43m

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get secret -n argocd argocd-secret -o jsonpath='{.data}' | jq

{

"admin.password": "JDJhJDEwJFI0VEkvVk5JVVdVcjR6Ukl3Y1prLk9tUmVBYWg0RXJLRTVDblBmbTkvWjNkaWpwUW05eWtt",

"admin.passwordMtime": "MjAyNS0xMS0xOVQxMzo0NDoxNVo=",

"server.secretkey": "cUU3bTU5dmo0NWJHdkNHUlE2MGFPakJvQ1ljUWE4MzRNRU1UeVVvREVHMD0="

}
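The values under .data are only base64-encoded, not encrypted. Below is a minimal sketch for decoding one field locally. The helper name `decode_b64` is hypothetical, and it assumes a `base64` that accepts `-d` (GNU coreutils or a recent macOS).

```shell
# decode_b64: hypothetical helper that prints the plain text behind a
# base64-encoded Secret value (as shown under .data)
decode_b64() {
  printf '%s' "$1" | base64 -d
}

# Example: admin.passwordMtime from the output above
decode_b64 "MjAyNS0xMS0xOVQxMzo0NDoxNVo="
echo
```

Decoding admin.password this way yields a bcrypt hash (it starts with `$2a$10$`), not the password itself. The initial password is kept in argocd-initial-admin-secret. Against the live cluster, the same idea is `kubectl get secret -n argocd argocd-secret -o jsonpath='{.data.admin\.passwordMtime}' | base64 -d`.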

(⎈|kind-mgmt:N/A) gaji:~$ k8s2 get sa -n kube-system

NAME SECRETS AGE

attachdetach-controller 0 24m

bootstrap-signer 0 24m

certificate-controller 0 24m

clusterrole-aggregation-controller 0 24m

coredns 0 24m

cronjob-controller 0 24m

daemon-set-controller 0 24m

default 0 24m

deployment-controller 0 24m

disruption-controller 0 24m

endpoint-controller 0 24m

endpointslice-controller 0 24m

endpointslicemirroring-controller 0 24m

ephemeral-volume-controller 0 24m

expand-controller 0 24m

generic-garbage-collector 0 24m

horizontal-pod-autoscaler 0 24m

job-controller 0 24m

kindnet 0 24m

kube-proxy 0 24m

legacy-service-account-token-cleaner 0 24m

namespace-controller 0 24m

node-controller 0 24m

persistent-volume-binder 0 24m

pod-garbage-collector 0 24m

pv-protection-controller 0 24m

pvc-protection-controller 0 24m

replicaset-controller 0 24m

replication-controller 0 24m

resourcequota-controller 0 24m

root-ca-cert-publisher 0 24m

service-account-controller 0 24m

statefulset-controller 0 24m

token-cleaner 0 24m

ttl-after-finished-controller 0 24m

ttl-controller 0 24m

validatingadmissionpolicy-status-controller 0 24m

(⎈|kind-mgmt:N/A) gaji:~$ k8s3 get sa -n kube-system

NAME SECRETS AGE

attachdetach-controller 0 24m

bootstrap-signer 0 24m

certificate-controller 0 24m

clusterrole-aggregation-controller 0 24m

coredns 0 24m

cronjob-controller 0 24m

daemon-set-controller 0 24m

default 0 24m

deployment-controller 0 24m

disruption-controller 0 24m

endpoint-controller 0 24m

endpointslice-controller 0 24m

endpointslicemirroring-controller 0 24m

ephemeral-volume-controller 0 24m

expand-controller 0 24m

generic-garbage-collector 0 24m

horizontal-pod-autoscaler 0 24m

job-controller 0 24m

kindnet 0 24m

kube-proxy 0 24m

legacy-service-account-token-cleaner 0 24m

namespace-controller 0 24m

node-controller 0 24m

persistent-volume-binder 0 24m

pod-garbage-collector 0 24m

pv-protection-controller 0 24m

pvc-protection-controller 0 24m

replicaset-controller 0 24m

replication-controller 0 24m

resourcequota-controller 0 24m

root-ca-cert-publisher 0 24m

service-account-controller 0 24m

statefulset-controller 0 24m

token-cleaner 0 24m

ttl-after-finished-controller 0 24m

ttl-controller 0 24m

validatingadmissionpolicy-status-controller 0 24m

# Register the dev k8s cluster

(⎈|kind-mgmt:N/A) gaji:~$ argocd cluster add kind-dev --name dev-k8s

WARNING: This will create a service account `argocd-manager` on the cluster referenced by context `kind-dev` with full cluster level privileges. Do you want to continue [y/N]? y

{"level":"info","msg":"ServiceAccount \"argocd-manager\" created in namespace \"kube-system\"","time":"2025-11-19T23:14:15+09:00"}

{"level":"info","msg":"ClusterRole \"argocd-manager-role\" created","time":"2025-11-19T23:14:15+09:00"}

{"level":"info","msg":"ClusterRoleBinding \"argocd-manager-role-binding\" created","time":"2025-11-19T23:14:15+09:00"}

{"level":"info","msg":"Created bearer token secret \"argocd-manager-long-lived-token\" for ServiceAccount \"argocd-manager\"","time":"2025-11-19T23:14:15+09:00"}

Cluster 'https://172.18.0.3:6443' added

# Cluster credentials are stored in a Secret, which must carry the argocd.argoproj.io/secret-type=cluster label

# Adding dev-k8s to Argo CD above created this Secret in the argocd namespace of the mgmt cluster

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get secret -n argocd -l argocd.argoproj.io/secret-type=cluster

NAME TYPE DATA AGE

cluster-172.18.0.3-4100004299 Opaque 3 90s
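`argocd cluster add` is one way to create this Secret. The registration can also be written declaratively, using the cluster Secret format from the Argo CD declarative-setup docs. That format has three keys, name, server and config, which matches the DATA count of 3 above.

Below is a minimal sketch under these assumptions:
- `render_cluster_secret` and the Secret name are hypothetical.
- The bearer token and CA data are placeholders. Unlike `argocd cluster add`, this does not create the argocd-manager ServiceAccount on the target cluster.

```shell
# render_cluster_secret: hypothetical helper that prints an Argo CD cluster
# Secret manifest; pipe the result into `kubectl apply -f -` on mgmt.
# Args: secret name, cluster display name, API server URL, bearer token, base64 CA
render_cluster_secret() {
  local secret_name=$1 cluster_name=$2 server=$3 token=$4 ca_data=$5
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: ${secret_name}
  namespace: argocd
  labels:
    # Without this label Argo CD does not treat the Secret as a cluster
    argocd.argoproj.io/secret-type: cluster
type: Opaque
stringData:
  name: ${cluster_name}
  server: ${server}
  config: |
    {
      "bearerToken": "${token}",
      "tlsClientConfig": {
        "insecure": false,
        "caData": "${ca_data}"
      }
    }
EOF
}
```

Example: `render_cluster_secret dev-k8s-cluster dev-k8s https://172.18.0.3:6443 "<token>" "<base64 CA>" | kubectl apply -f -`. Keeping the Secret in Git makes the cluster registration itself reviewable, at the cost of having to manage the token securely.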

# Check the cluster list

(⎈|kind-mgmt:N/A) gaji:~$ argocd cluster list

SERVER NAME VERSION STATUS MESSAGE PROJECT

https://172.18.0.3:6443 dev-k8s Unknown Cluster has no applications and is not being monitored.

https://kubernetes.default.svc in-cluster Unknown Cluster has no applications and is not being monitored.

# Register the prd k8s cluster

(⎈|kind-mgmt:N/A) gaji:~$ argocd cluster add kind-prd --name prd-k8s --yes

{"level":"info","msg":"ServiceAccount \"argocd-manager\" created in namespace \"kube-system\"","time":"2025-11-19T23:19:47+09:00"}

{"level":"info","msg":"ClusterRole \"argocd-manager-role\" created","time":"2025-11-19T23:19:47+09:00"}

{"level":"info","msg":"ClusterRoleBinding \"argocd-manager-role-binding\" created","time":"2025-11-19T23:19:47+09:00"}

{"level":"info","msg":"Created bearer token secret \"argocd-manager-long-lived-token\" for ServiceAccount \"argocd-manager\"","time":"2025-11-19T23:19:47+09:00"}

Cluster 'https://172.18.0.4:6443' added

(⎈|kind-mgmt:N/A) gaji:~$ k8s3 get sa -n kube-system argocd-manager

NAME SECRETS AGE

argocd-manager 0 5s

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get secret -n argocd -l argocd.argoproj.io/secret-type=cluster

NAME TYPE DATA AGE

cluster-172.18.0.3-4100004299 Opaque 3 5m44s

cluster-172.18.0.4-568336172 Opaque 3 13s   <- new Secret for prd

(⎈|kind-mgmt:N/A) gaji:~$ argocd cluster list

SERVER NAME VERSION STATUS MESSAGE PROJECT

https://172.18.0.4:6443 prd-k8s Unknown Cluster has no applications and is not being monitored.

https://172.18.0.3:6443 dev-k8s Unknown Cluster has no applications and is not being monitored.

https://kubernetes.default.svc in-cluster Unknown Cluster has no applications and is not being monitored.

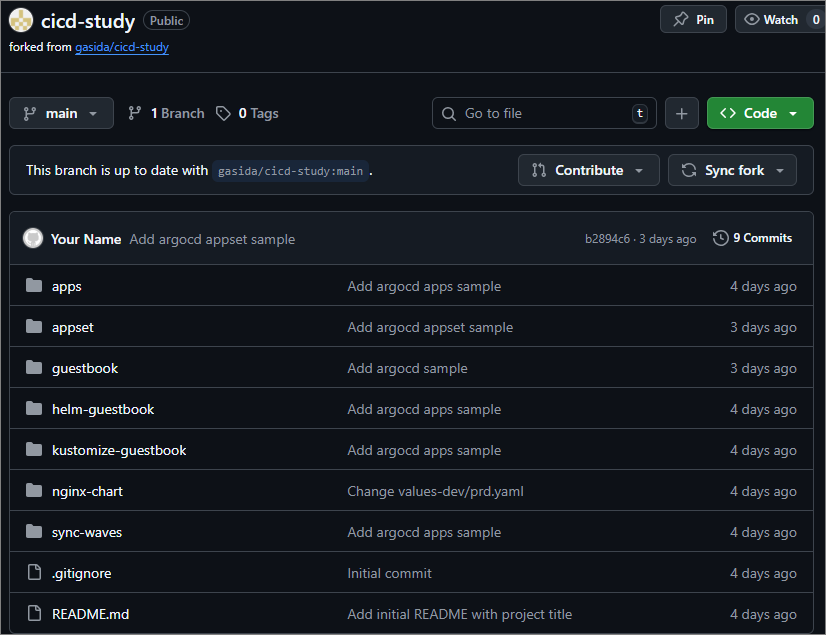

- Fork gasida's GitHub repository

Deploying Nginx to each of the three K8s clusters with Argo CD

$ docker network inspect kind | grep -E 'Name|IPv4Address'

"Name": "kind",

"Name": "dev-control-plane",

"IPv4Address": "172.18.0.3/16",

"Name": "mgmt-control-plane",

"IPv4Address": "172.18.0.2/16",

"Name": "prd-control-plane",

"IPv4Address": "172.18.0.4/16",

(⎈|kind-mgmt:N/A) gaji:~$ DEVK8SIP=172.18.0.3

(⎈|kind-mgmt:N/A) gaji:~$ PRDK8SIP=172.18.0.4

(⎈|kind-mgmt:N/A) gaji:~$ echo $DEVK8SIP $PRDK8SIP

172.18.0.3 172.18.0.4
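The IPs can be taken from Docker instead of being copied by hand. Below is a minimal sketch. `ip_of` is a hypothetical helper that parses text in the format printed by `docker network inspect kind | grep -E 'Name|IPv4Address'` above, so it can be tried without Docker.

```shell
# ip_of: hypothetical helper. Reads the output of
#   docker network inspect kind | grep -E 'Name|IPv4Address'
# from stdin and prints the IPv4 address (without the /16) of the named container.
ip_of() {
  local want=$1 name="" line
  while IFS= read -r line; do
    case $line in
      *'"Name":'*)
        # Remember the most recent container name
        name=$(printf '%s' "$line" | sed -E 's/.*"Name": *"([^"]*)".*/\1/')
        ;;
      *'"IPv4Address":'*)
        if [ "$name" = "$want" ]; then
          printf '%s\n' "$line" | sed -E 's/.*"IPv4Address": *"([^/"]*).*/\1/'
          return 0
        fi
        ;;
    esac
  done
  return 1
}
```

Example: `DEVK8SIP=$(docker network inspect kind | grep -E 'Name|IPv4Address' | ip_of dev-control-plane)`. A shorter route that skips the text parsing is `docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' dev-control-plane`.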

# Deploy the Argo CD Applications

cat <<EOF | kubectl apply -f -

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: mgmt-nginx

namespace: argocd

finalizers:

- resources-finalizer.argocd.argoproj.io

spec:

project: default

source:

helm:

valueFiles:

- values.yaml

path: nginx-chart

repoURL: https://github.com/########/cicd-study

targetRevision: HEAD

syncPolicy:

automated:

prune: true

syncOptions:

- CreateNamespace=true

destination:

namespace: mgmt-nginx

server: https://kubernetes.default.svc

EOF

cat <<EOF | kubectl apply -f -

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: dev-nginx

namespace: argocd

finalizers:

- resources-finalizer.argocd.argoproj.io

spec:

project: default

source:

helm:

valueFiles:

- values-dev.yaml

path: nginx-chart

repoURL: https://github.com/########/cicd-study

targetRevision: HEAD

syncPolicy:

automated:

prune: true

syncOptions:

- CreateNamespace=true

destination:

namespace: dev-nginx

server: https://$DEVK8SIP:6443

EOF

cat <<EOF | kubectl apply -f -

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

name: prd-nginx

namespace: argocd

finalizers:

- resources-finalizer.argocd.argoproj.io

spec:

project: default

source:

helm:

valueFiles:

- values-prd.yaml

path: nginx-chart

repoURL: https://github.com/########/cicd-study

targetRevision: HEAD

syncPolicy:

automated:

prune: true

syncOptions:

- CreateNamespace=true

destination:

namespace: prd-nginx

server: https://$PRDK8SIP:6443

EOF
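The three heredocs above differ only in the name, values file, namespace and destination server. $DEVK8SIP and $PRDK8SIP get substituted because the EOF delimiter is unquoted.

Below is a minimal sketch that factors out the common part into a hypothetical `render_nginx_app` helper. The repo URL is an argument because it is masked in the original; pass your own fork.

```shell
# render_nginx_app: hypothetical helper that prints one Application manifest.
# Args: app name (also used as the namespace), Helm values file, destination server, repo URL
render_nginx_app() {
  local name=$1 values=$2 server=$3 repo=$4
  # Unquoted EOF: ${name}, ${values}, ${server}, ${repo} are expanded by the shell
  cat <<EOF
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: ${name}
  namespace: argocd
  finalizers:
    - resources-finalizer.argocd.argoproj.io
spec:
  project: default
  source:
    helm:
      valueFiles:
        - ${values}
    path: nginx-chart
    repoURL: ${repo}
    targetRevision: HEAD
  syncPolicy:
    automated:
      prune: true
    syncOptions:
      - CreateNamespace=true
  destination:
    namespace: ${name}
    server: ${server}
EOF
}
```

Example: `render_nginx_app dev-nginx values-dev.yaml "https://$DEVK8SIP:6443" "$REPO" | kubectl apply -f -`, where REPO holds your fork's URL. With a quoted delimiter (`<<'EOF'`), the variables would be left as literal text. This per-environment templating is what an ApplicationSet does natively.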

- Verify the deployment on, and access to, each of the mgmt, dev and prd clusters

- Clean up

(⎈|kind-mgmt:N/A) gaji:~$ kubectl delete applications -n argocd mgmt-nginx dev-nginx prd-nginx

application.argoproj.io "mgmt-nginx" deleted from argocd namespace

application.argoproj.io "dev-nginx" deleted from argocd namespace

application.argoproj.io "prd-nginx" deleted from argocd namespace

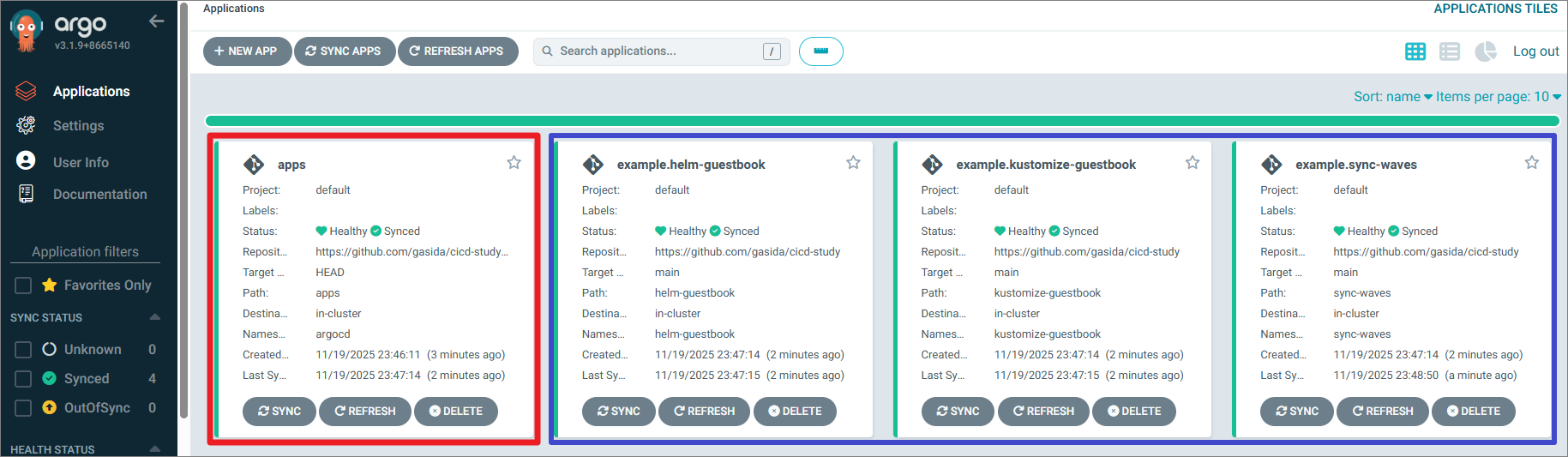

2. ApplicationSet

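- The app of apps pattern

- The app of apps pattern lets you logically group a parent application with a set of child applications.

- The parent application creates the child applications, so a group of applications that are deployed together can be managed declaratively.

- With the app of apps pattern, you deploy a single parent application instead of many individual Argo CD Application resources.

- Because the applications are grouped logically, you can check that every application in the group is deployed and healthy.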

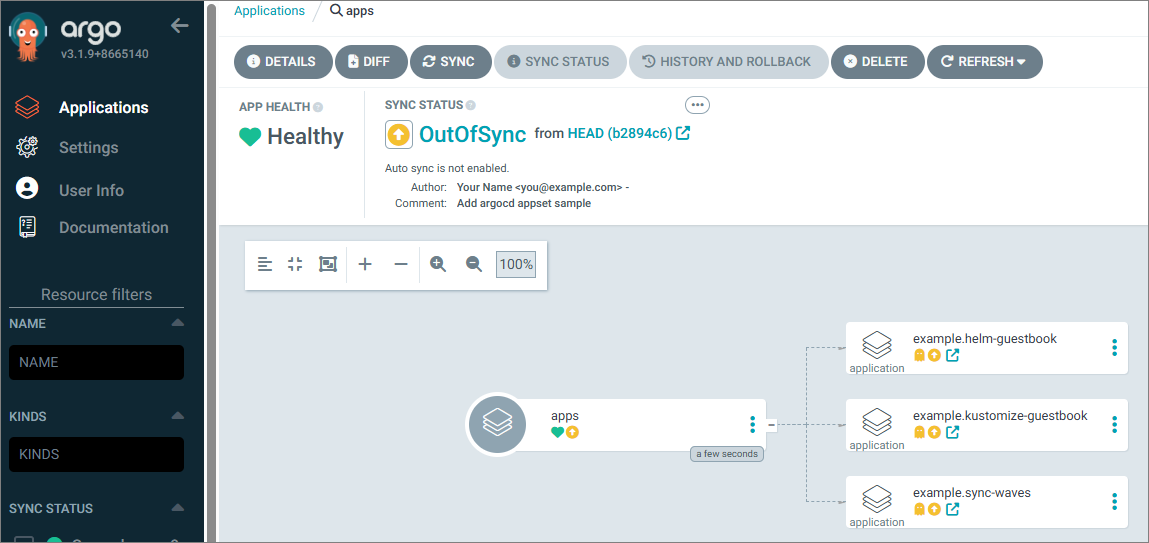

Deploying the applications

# Manage several Application manifests through a single root Application

argocd app create apps \

--dest-namespace argocd \

--dest-server https://kubernetes.default.svc \

--repo https://github.com/gasida/cicd-study.git \

--path apps

-> Not synced yet, so only minimal information is shown

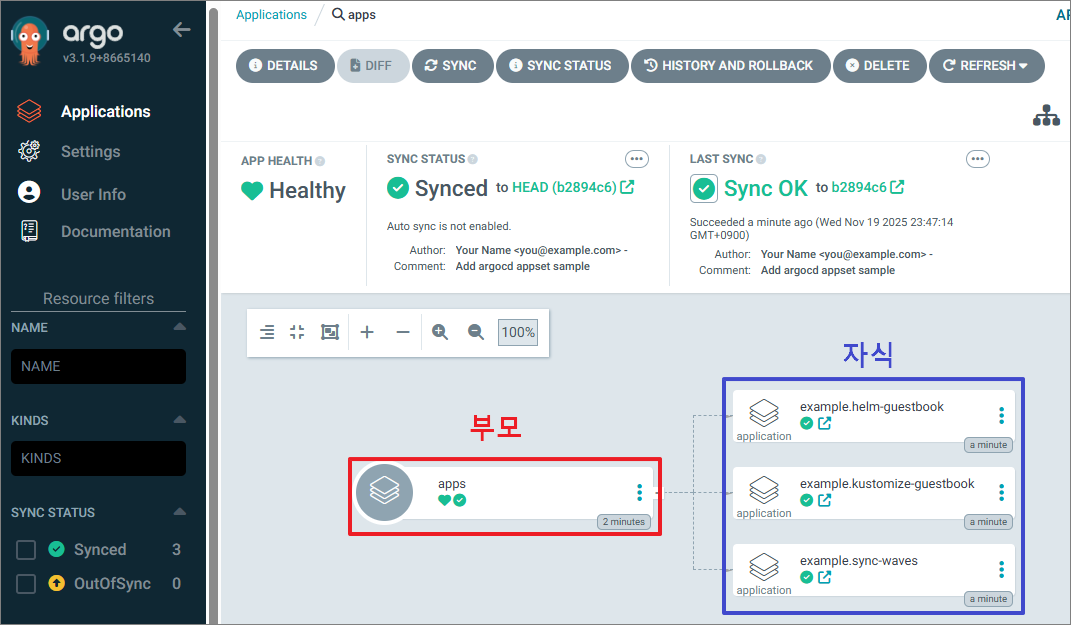

- Sync

# Syncing the root Application automatically creates the child apps

argocd app sync apps

TIMESTAMP GROUP KIND NAMESPACE NAME STATUS HEALTH HOOK MESSAGE

2025-11-19T23:47:14+09:00 argoproj.io Application argocd example.helm-guestbook OutOfSync Missing

2025-11-19T23:47:14+09:00 argoproj.io Application argocd example.kustomize-guestbook OutOfSync Missing

2025-11-19T23:47:14+09:00 argoproj.io Application argocd example.sync-waves OutOfSync Missing

2025-11-19T23:47:14+09:00 argoproj.io Application argocd example.helm-guestbook OutOfSync Missing application.argoproj.io/example.helm-guestbook created

2025-11-19T23:47:14+09:00 argoproj.io Application argocd example.kustomize-guestbook OutOfSync Missing application.argoproj.io/example.kustomize-guestbook created

2025-11-19T23:47:14+09:00 argoproj.io Application argocd example.sync-waves OutOfSync Missing application.argoproj.io/example.sync-waves created

Name: argocd/apps

Project: default

Server: https://kubernetes.default.svc

Namespace: argocd

URL: https://argocd.example.com/applications/apps

Source:

- Repo: https://github.com/gasida/cicd-study.git

Target:

Path: apps

SyncWindow: Sync Allowed

Sync Policy: Manual

Sync Status: Synced to (b2894c6)

Health Status: Healthy

Operation: Sync

Sync Revision: b2894c67f7a64e42b408da5825cb0b87ee306b04

Phase: Succeeded

Start: 2025-11-19 23:47:14 +0900 KST

Finished: 2025-11-19 23:47:14 +0900 KST

Duration: 0s

Message: successfully synced (all tasks run)

GROUP KIND NAMESPACE NAME STATUS HEALTH HOOK MESSAGE

argoproj.io Application argocd example.helm-guestbook Synced application.argoproj.io/example.helm-guestbook created

argoproj.io Application argocd example.kustomize-guestbook Synced application.argoproj.io/example.kustomize-guestbook created

argoproj.io Application argocd example.sync-waves Synced application.argoproj.io/example.sync-waves created

# Check the deployed resources

(⎈|kind-mgmt:N/A) gaji:~$ argocd app list

NAME CLUSTER NAMESPACE PROJECT STATUS HEALTH SYNCPOLICY CONDITIONS REPO PATH TARGET

argocd/apps https://kubernetes.default.svc argocd default Synced Healthy Manual <none> https://github.com/gasida/cicd-study.git apps

argocd/example.helm-guestbook https://kubernetes.default.svc helm-guestbook default Synced Healthy Auto-Prune <none> https://github.com/gasida/cicd-study helm-guestbook main

argocd/example.kustomize-guestbook https://kubernetes.default.svc kustomize-guestbook default Synced Healthy Auto-Prune <none> https://github.com/gasida/cicd-study kustomize-guestbook main

argocd/example.sync-waves https://kubernetes.default.svc sync-waves default Synced Healthy Auto-Prune <none> https://github.com/gasida/cicd-study sync-waves main

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

argocd argocd-application-controller-0 1/1 Running 0 81m

argocd argocd-applicationset-controller-bbff79c6f-7q7ks 1/1 Running 0 81m

argocd argocd-dex-server-6877ddf4f8-8zxxw 1/1 Running 0 81m

argocd argocd-notifications-controller-7b5658fc47-cbc2s 1/1 Running 0 81m

argocd argocd-redis-7d948674-gcjn4 1/1 Running 0 81m

argocd argocd-repo-server-7679dc55f5-74wfp 1/1 Running 0 81m

argocd argocd-server-7d769b6f48-hcs65 1/1 Running 0 81m

helm-guestbook helm-guestbook-667dffd5cf-mzt2x 1/1 Running 0 3m15s

ingress-nginx ingress-nginx-controller-8676d56f78-c7tjm 1/1 Running 0 91m

kube-system coredns-668d6bf9bc-7brk9 1/1 Running 0 96m

kube-system coredns-668d6bf9bc-gzwp4 1/1 Running 0 96m

kube-system etcd-mgmt-control-plane 1/1 Running 0 96m

kube-system kindnet-kb7sx 1/1 Running 0 96m

kube-system kube-apiserver-mgmt-control-plane 1/1 Running 0 96m

kube-system kube-controller-manager-mgmt-control-plane 1/1 Running 0 96m

kube-system kube-proxy-rtkpj 1/1 Running 0 96m

kube-system kube-scheduler-mgmt-control-plane 1/1 Running 0 96m

kustomize-guestbook kustomize-guestbook-ui-85db984648-g86b9 1/1 Running 0 3m15s

local-path-storage local-path-provisioner-7dc846544d-gqtrg 1/1 Running 0 96m

sync-waves backend-64rtd 1/1 Running 0 2m11s

sync-waves frontend-rkkn6 1/1 Running 0 111s

sync-waves maint-page-down-7bd9g 0/1 Completed 0 107s

sync-waves maint-page-up-z4j69 0/1 Completed 0 2m1s

sync-waves upgrade-sql-schemab2894c6-presync-1763563634-d9xl9 0/1 Completed 0 3m15s

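ApplicationSet controller: manages Argo CD Applications

- The ApplicationSet controller is a Kubernetes controller that adds support for the ApplicationSet CustomResourceDefinition (CRD).

- This controller/CRD brings automation and flexibility to managing Argo CD Applications across many clusters and within monorepos.

- Features provided by the ApplicationSet controller:

- Deploy to multiple Kubernetes clusters from a single Kubernetes manifest with Argo CD

- Deploy multiple applications from one or more Git repositories from a single Kubernetes manifest with Argo CD

- Improved monorepo support: in the Argo CD context, a monorepo is multiple Argo CD Application resources defined within a single Git repository

- Better self-service for individual tenants of a multi-tenant cluster deploying applications with Argo CD (no privileged cluster administrator is needed to enable the destination clusters/namespaces)

- The figure above shows how the ApplicationSet controller (top center of the figure) interacts with Argo CD (middle of the figure).

- Its only job is to create, modify and delete Application resources in the Argo CD namespace.

- The sole goal of an ApplicationSet is to ensure that the Argo CD Application resources match the state defined in the declarative ApplicationSet resource.

- An ApplicationSet uses generators to produce parameters from different data sources. Generator types:

- List: a fixed list of Kubernetes clusters for Argo CD Applications to target

- Cluster: a dynamic list based on the clusters already defined and managed in Argo CD (it reacts automatically to clusters being added or removed)

- Git: generates applications from files in a Git repository or from the repository's directory structure

- Matrix: combines the parameters of two different generators

- Merge: merges the parameters of two different generators

- SCM Provider: automatically discovers repositories on a source code management (SCM) provider (e.g. GitLab or GitHub)

- Pull Request: uses the API of an SCMaaS provider (e.g. GitHub) to automatically discover open pull requests in a repo

- Cluster Decision Resource: generates the list of Argo CD clusters to deploy to using custom, resource-specific logic

- Plugin: the plugin generator makes RPC HTTP requests to obtain parameters

- Generators: the basic building block of an ApplicationSet is the generator, which is responsible for producing the parameters the ApplicationSet uses.

- List generator: targets Argo CD Applications at a fixed list of clusters (see Docs)

ApplicationSet List generator lab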

#

docker network inspect kind | grep -E 'Name|IPv4Address'

DEVK8SIP=192.168.97.3

PRDK8SIP=192.168.97.4

echo $DEVK8SIP $PRDK8SIP

# Deploy the Argo CD ApplicationSet

cat <<EOF | kubectl apply -f -

apiVersion: argoproj.io/v1alpha1

kind: ApplicationSet

metadata:

name: guestbook

namespace: argocd

spec:

goTemplate: true

goTemplateOptions: ["missingkey=error"]

generators:

- list:

elements:

- cluster: dev-k8s

url: https://$DEVK8SIP:6443

- cluster: prd-k8s

url: https://$PRDK8SIP:6443

template:

metadata:

name: '{{.cluster}}-guestbook'

labels:

environment: '{{.cluster}}'

managed-by: applicationset

spec:

project: default

source:

repoURL: https://github.com/gasida/cicd-study.git

targetRevision: HEAD

path: appset/list/{{.cluster}}

destination:

server: '{{.url}}'

namespace: guestbook

syncPolicy:

syncOptions:

- CreateNamespace=true

EOF
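Conceptually, the List generator turns each element into one parameter set and renders the template once per set. That is how dev-k8s-guestbook and prd-k8s-guestbook come out of the single manifest above.

Below is a rough shell illustration of that substitution. It is not how the controller is implemented. `render_from_list` is hypothetical, and the IPs are this post's kind network addresses, used as an assumption.

```shell
# Hypothetical illustration of List generator semantics: one parameter set
# per element, each substituted into the same template.
# Elements are "cluster url" pairs, mirroring spec.generators[0].list.elements.
elements='dev-k8s https://172.18.0.3:6443
prd-k8s https://172.18.0.4:6443'

render_from_list() {
  local template=$1 cluster url out
  local pc='{{.cluster}}' pu='{{.url}}'   # placeholders, matched literally
  while read -r cluster url; do
    [ -n "$cluster" ] || continue
    out=${template//"$pc"/"$cluster"}
    out=${out//"$pu"/"$url"}
    printf '%s\n' "$out"
  done
}

# name, destination server and path, as in the template above
printf '%s\n' "$elements" | render_from_list '{{.cluster}}-guestbook {{.url}} appset/list/{{.cluster}}'
```

Adding a third element to the list would produce a third Application without touching the template.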

#

kubectl get applicationsets -n argocd guestbook -o yaml

kubectl get applicationsets -n argocd guestbook -o yaml | k neat | yq

kubectl get applicationsets -n argocd

argocd appset list

argocd app list

argocd app list -l managed-by=applicationset

kubectl get applications -n argocd

kubectl get applications -n argocd --show-labels

# sync

argocd app sync -l managed-by=applicationset

# Inspect the generated Application YAML

kubectl get applications -n argocd dev-k8s-guestbook -o yaml | k neat | yq

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

labels:

environment: dev-k8s

managed-by: applicationset

name: dev-k8s-guestbook

namespace: argocd

spec:

destination:

namespace: guestbook

server: https://192.168.97.3:6443

project: default

source:

path: appset/list/dev-k8s

repoURL: https://github.com/gasida/cicd-study.git

targetRevision: HEAD

syncPolicy:

syncOptions:

- CreateNamespace=true

kubectl get applications -n argocd prd-k8s-guestbook -o yaml | k neat | yq

apiVersion: argoproj.io/v1alpha1

kind: Application

metadata:

labels:

environment: prd-k8s

managed-by: applicationset

name: prd-k8s-guestbook

namespace: argocd

spec:

destination:

namespace: guestbook

server: https://192.168.97.4:6443

project: default

source:

path: appset/list/prd-k8s

repoURL: https://github.com/gasida/cicd-study.git

targetRevision: HEAD

syncPolicy:

syncOptions:

- CreateNamespace=true

# Check the pods deployed on each k8s cluster

k8s2 get pod -n guestbook

k8s3 get pod -n guestbook

# Clean up

argocd appset delete guestbook --yes

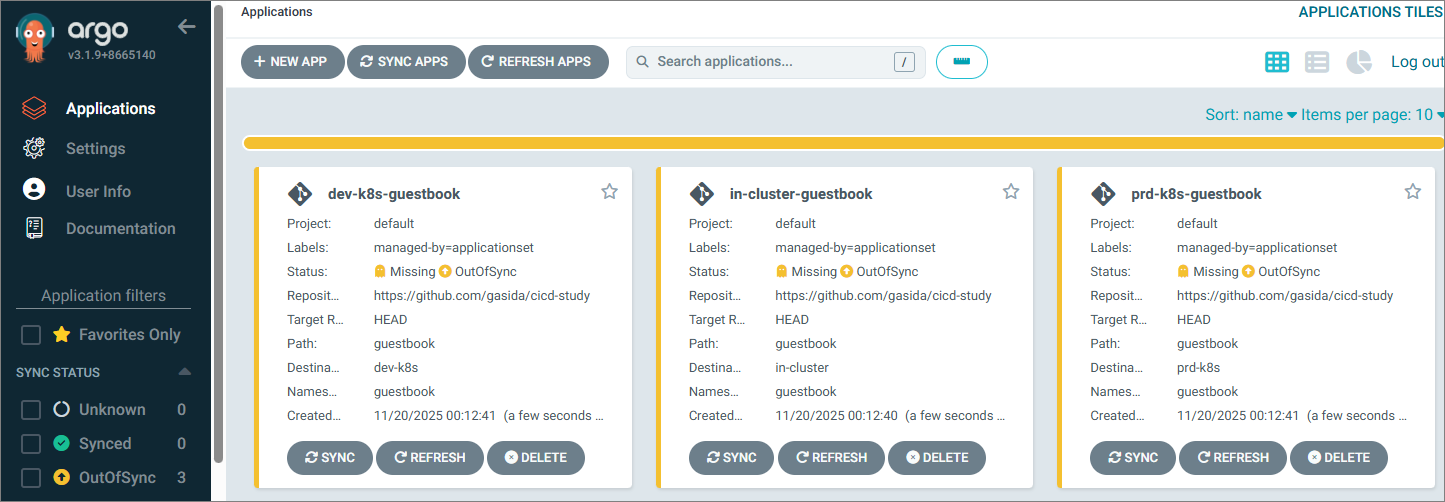

ApplicationSet Cluster generator lab

#

cat <<EOF | kubectl apply -f -

apiVersion: argoproj.io/v1alpha1

kind: ApplicationSet

metadata:

name: guestbook

namespace: argocd

spec:

goTemplate: true

goTemplateOptions: ["missingkey=error"]

generators:

- clusters: {}

template:

metadata:

name: '{{.name}}-guestbook'

labels:

managed-by: applicationset

spec:

project: "default"

source:

repoURL: https://github.com/gasida/cicd-study

targetRevision: HEAD

path: guestbook

destination:

server: '{{.server}}'

namespace: guestbook

syncPolicy:

syncOptions:

- CreateNamespace=true

EOF

#

kubectl get applicationsets -n argocd guestbook -o yaml

kubectl get applicationsets -n argocd guestbook -o yaml | k neat | yq

kubectl get applicationsets -n argocd

argocd appset list

argocd app list

argocd app list -l managed-by=applicationset

kubectl get applications -n argocd --show-labels

# sync

argocd app sync -l managed-by=applicationset

# Inspect the generated Application YAML

kubectl get applications -n argocd in-cluster-guestbook -o yaml | k neat | yq

kubectl get applications -n argocd dev-k8s-guestbook -o yaml | k neat | yq

kubectl get applications -n argocd prd-k8s-guestbook -o yaml | k neat | yq

# Check the pods deployed on each k8s cluster

k8s1 get pod -n guestbook

k8s2 get pod -n guestbook

k8s3 get pod -n guestbook

# Clean up

argocd appset delete guestbook --yes

- When the generator is clusters: {} (no selector), the application is deployed to every cluster registered in Argo CD.

- Now deploy to the dev k8s cluster only

#

kubectl get secret -n argocd -l argocd.argoproj.io/secret-type=cluster

NAME TYPE DATA AGE

cluster-192.168.97.3-3897021443 Opaque 3 58m

cluster-192.168.97.4-3756131620 Opaque 3 58m

DEVK8S=cluster-192.168.97.3-3897021443

kubectl label secrets $DEVK8S -n argocd env=stg

kubectl get secret -n argocd -l env=stg

NAME TYPE DATA AGE

cluster-192.168.97.3-3897021443 Opaque 3 60m

#

cat <<EOF | kubectl apply -f -

apiVersion: argoproj.io/v1alpha1

kind: ApplicationSet

metadata:

name: guestbook

namespace: argocd

spec:

goTemplate: true

goTemplateOptions: ["missingkey=error"]

generators:

- clusters:

selector:

matchLabels:

env: "stg"

template:

metadata:

name: '{{.name}}-guestbook'

labels:

managed-by: applicationset

spec:

project: "default"

source:

repoURL: https://github.com/gasida/cicd-study

targetRevision: HEAD

path: guestbook

destination:

server: '{{.server}}'

namespace: guestbook

syncPolicy:

syncOptions:

- CreateNamespace=true

automated:

prune: true

selfHeal: true

EOF
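selector.matchLabels selects a cluster only if its Secret carries every listed key=value pair; extra labels on the Secret are fine. That is why only the Secret labeled env=stg produces an Application here, while an empty selector (clusters: {}) matches every cluster.

Below is a minimal sketch of that rule over comma-separated label strings. `matches_labels` is hypothetical and not part of Argo CD.

```shell
# matches_labels: hypothetical helper. Succeeds when every key=value pair in
# the selector (arg 2) is present in the label set (arg 1). Both are
# comma-separated "key=value" lists; an empty selector matches everything.
matches_labels() {
  local labels=",$1," pair
  local IFS=,
  for pair in $2; do
    [ -n "$pair" ] || continue
    case $labels in
      *",$pair,"*) ;;        # pair present, keep checking
      *) return 1 ;;         # any missing pair means no match
    esac
  done
  return 0
}

# Cluster Secrets as in this lab: only dev carries env=stg
for c in "dev-k8s|argocd.argoproj.io/secret-type=cluster,env=stg" \
         "prd-k8s|argocd.argoproj.io/secret-type=cluster"; do
  if matches_labels "${c#*|}" "env=stg"; then
    echo "${c%%|*}-guestbook"
  fi
done
```

Labeling prd's Secret with env=stg as well (kubectl label) would make the generator add prd-k8s-guestbook on its next reconcile, with no change to the ApplicationSet.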

#

kubectl get applicationsets -n argocd

argocd appset list

argocd app list -l managed-by=applicationset

kubectl get applications -n argocd --show-labels

# Clean up

argocd appset delete guestbook --yes

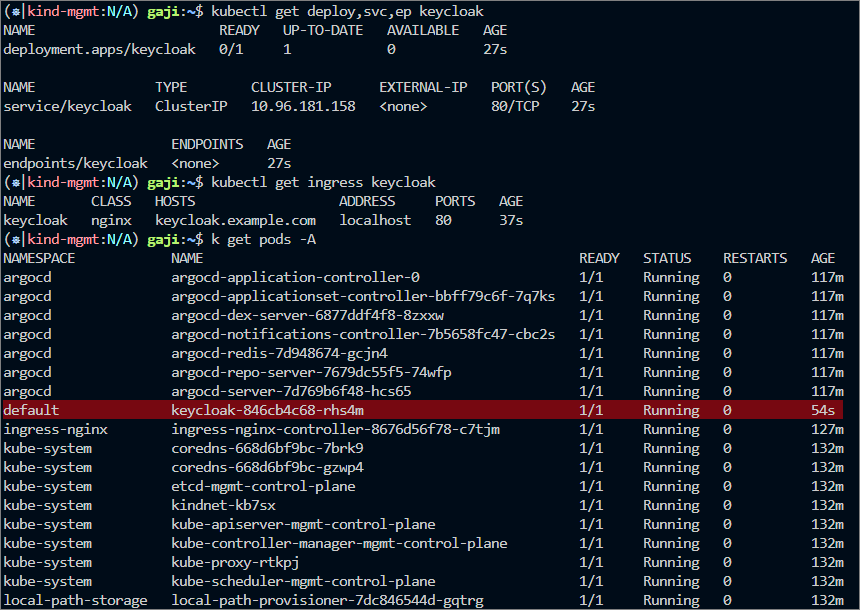

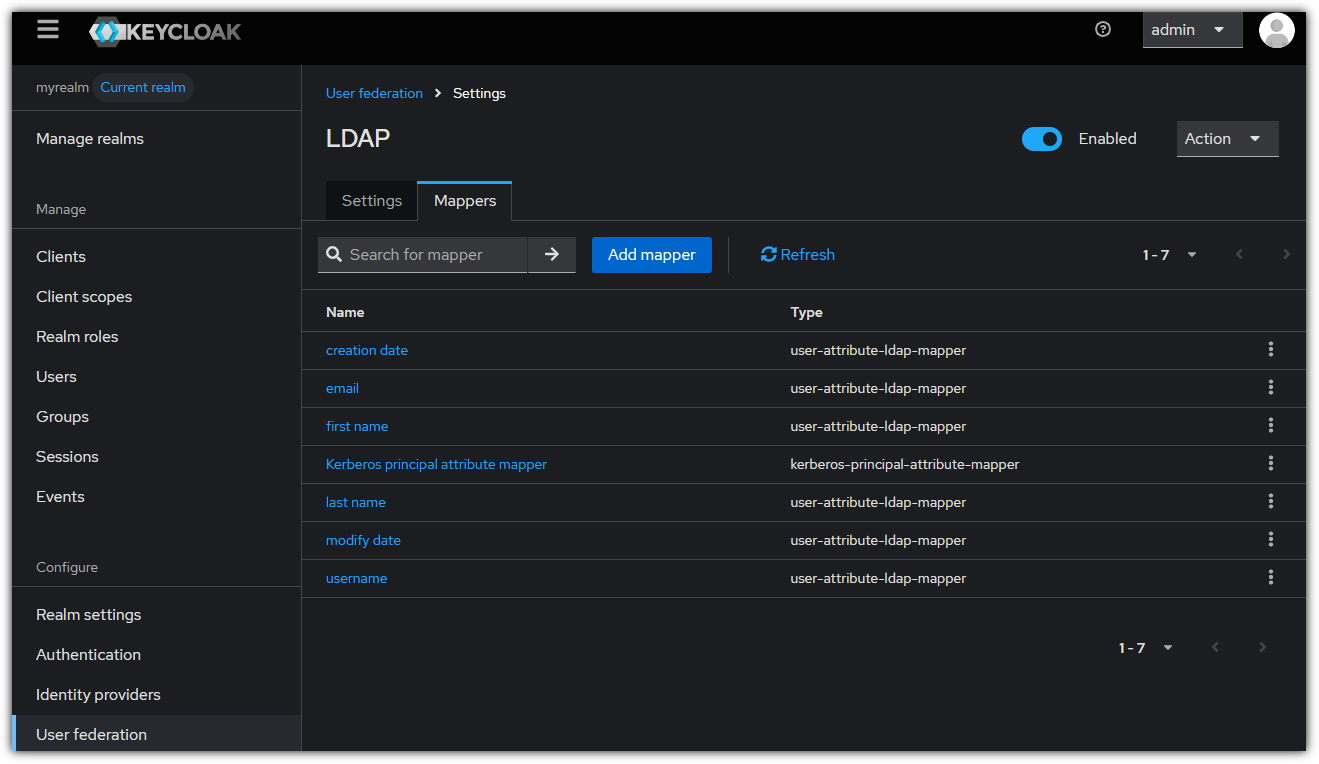

3. OpenLDAP + KeyCloak + Argo CD + Jenkins

Deploying Keycloak as a pod

#

cat <<EOF | kubectl apply -f -

apiVersion: apps/v1

kind: Deployment

metadata:

name: keycloak

labels:

app: keycloak

spec:

replicas: 1

selector:

matchLabels:

app: keycloak

template:

metadata:

labels:

app: keycloak

spec:

containers:

- name: keycloak

image: quay.io/keycloak/keycloak:26.4.0

args: ["start-dev"] # run in dev mode

env:

- name: KEYCLOAK_ADMIN

value: admin

- name: KEYCLOAK_ADMIN_PASSWORD

value: admin

ports:

- containerPort: 8080

---

apiVersion: v1

kind: Service

metadata:

name: keycloak

spec:

selector:

app: keycloak

ports:

- name: http

port: 80

targetPort: 8080

---

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: keycloak

namespace: default

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /

nginx.ingress.kubernetes.io/ssl-redirect: "false"

spec:

ingressClassName: nginx

rules:

- host: keycloak.example.com

http:

paths:

- path: /

pathType: Prefix

backend:

service:

name: keycloak

port:

number: 80 # Service port (8080 is the container targetPort)

EOF

# Verify

kubectl get deploy,svc,ep keycloak

kubectl get ingress keycloak

NAME CLASS HOSTS ADDRESS PORTS AGE

keycloak nginx keycloak.example.com localhost 80 91s

#

curl -s -H "Host: keycloak.example.com" http://127.0.0.1 -I

HTTP/1.1 302 Found

Location: http://keycloak.example.com/admin/

# Domain setup

## On macOS, edit /etc/hosts

echo "127.0.0.1 keycloak.example.com" | sudo tee -a /etc/hosts

cat /etc/hosts

## On Windows, open C:\Windows\System32\drivers\etc\hosts in Notepad as Administrator and add:

127.0.0.1 keycloak.example.com
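Running the tee -a line twice adds a duplicate entry. Below is a minimal sketch of an idempotent variant. `add_host_entry` is hypothetical and takes the hosts file path as an argument, so it can be tried on a scratch file first. It still needs root when pointed at /etc/hosts.

```shell
# add_host_entry: hypothetical helper. Appends "IP HOST" to a hosts-format
# file only when HOST is not already mapped there.
# Args: file, IP, hostname
add_host_entry() {
  local file=$1 ip=$2 host=$3
  # Look for the hostname as a whole field on any non-comment line
  if awk -v h="$host" '!/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == h) found = 1 } END { exit !found }' "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$host" >> "$file"
}
```

To use it on the real file, you can pass the function definition to a root shell: `sudo bash -c "$(declare -f add_host_entry); add_host_entry /etc/hosts 127.0.0.1 keycloak.example.com"`. The same call works later for argocd.example.com and jenkins.example.com.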

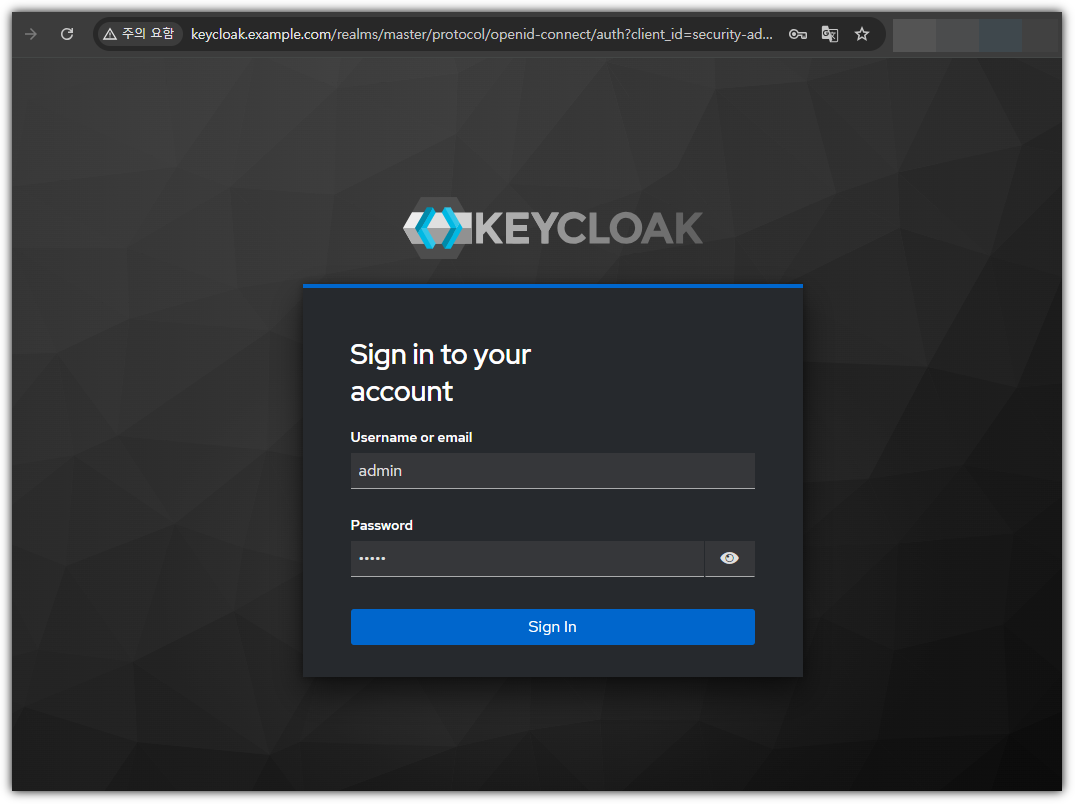

# Open the Keycloak web console: admin / admin

curl -s http://keycloak.example.com -I

open "http://keycloak.example.com/admin"

- Update the hosts file (C:\Windows\System32\drivers\etc\hosts, edited in Notepad as Administrator)

- Log in to the Keycloak web console: admin / admin

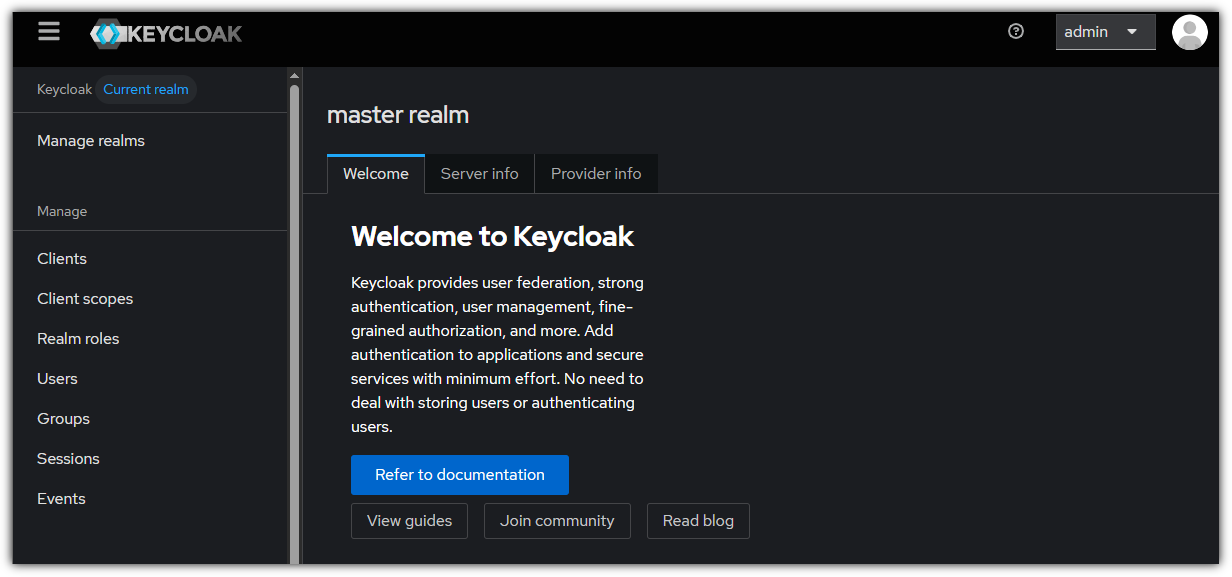

- http://keycloak.example.com/admin

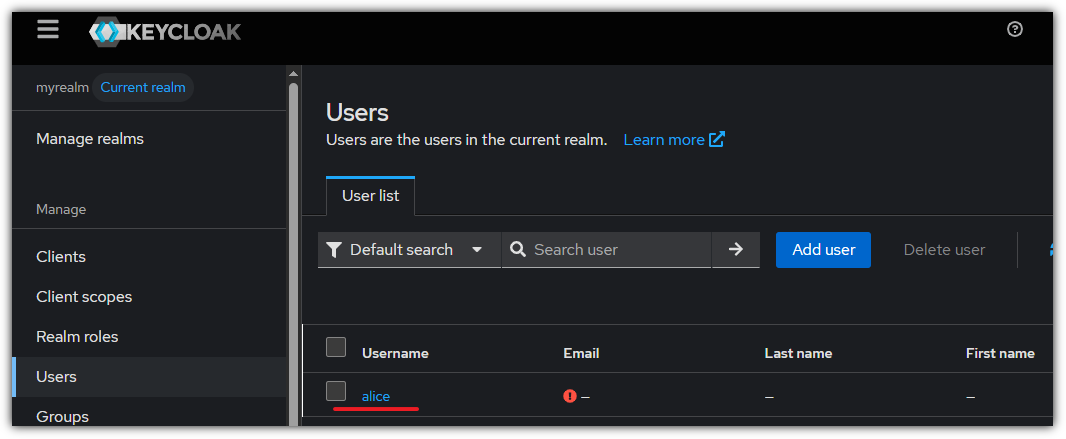

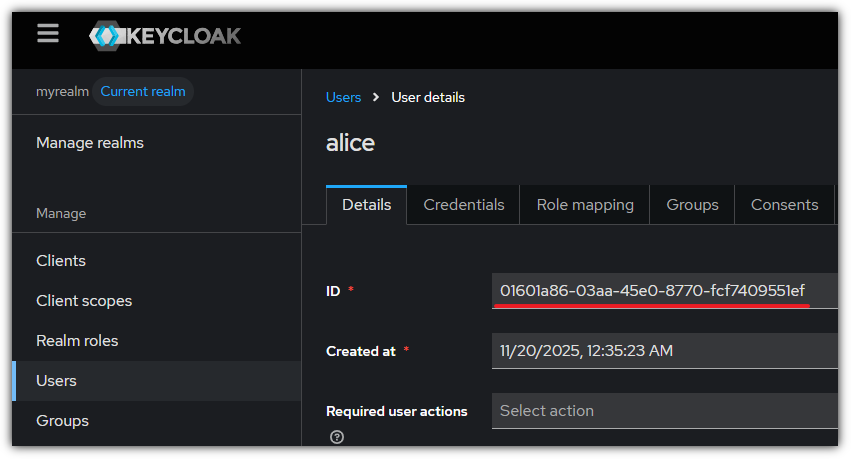

- Create a realm: myrealm

- Create a user: alice (password alice123)

Making the keycloak / argocd domains resolvable from inside the mgmt k8s cluster (in-cluster)

# Create a lightweight curl test pod

cat <<EOF | kubectl apply -f -

apiVersion: v1

kind: Pod

metadata:

name: curl

namespace: default

spec:

containers:

- name: curl

image: curlimages/curl:latest

command: ["sleep", "infinity"]

EOF

#

kubectl get pod -l app=keycloak -owide

kubectl get pod -l app=keycloak -o jsonpath='{.items[0].status.podIP}'

KEYCLOAKIP=$(kubectl get pod -l app=keycloak -o jsonpath='{.items[0].status.podIP}')

echo $KEYCLOAKIP

# Check basic connectivity and info: note the ClusterIPs!

kubectl get svc keycloak

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

keycloak ClusterIP 10.96.58.66 <none> 80/TCP 25m

kubectl get svc -n argocd argocd-server

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

argocd-server ClusterIP 10.96.156.196 <none> 80/TCP,443/TCP 134m

kubectl exec -it curl -- ping -c 1 $KEYCLOAKIP

kubectl exec -it curl -- curl -s http://keycloak.default.svc.cluster.local/realms/myrealm/.well-known/openid-configuration | jq

# Why does this call fail?

kubectl exec -it curl -- curl -s http://keycloak.example.com -I

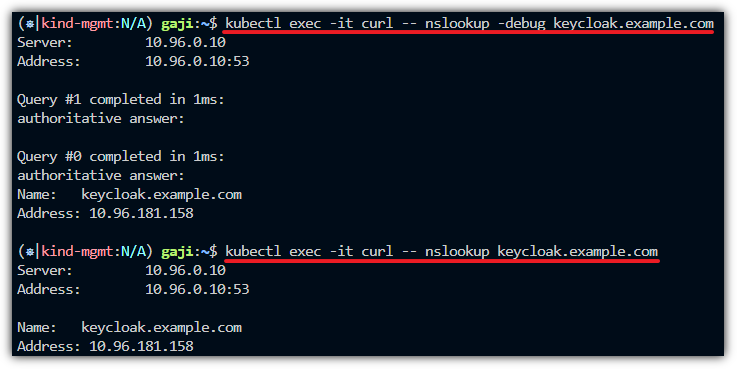

kubectl exec -it curl -- nslookup -debug keycloak.example.com

kubectl exec -it curl -- nslookup keycloak.example.com

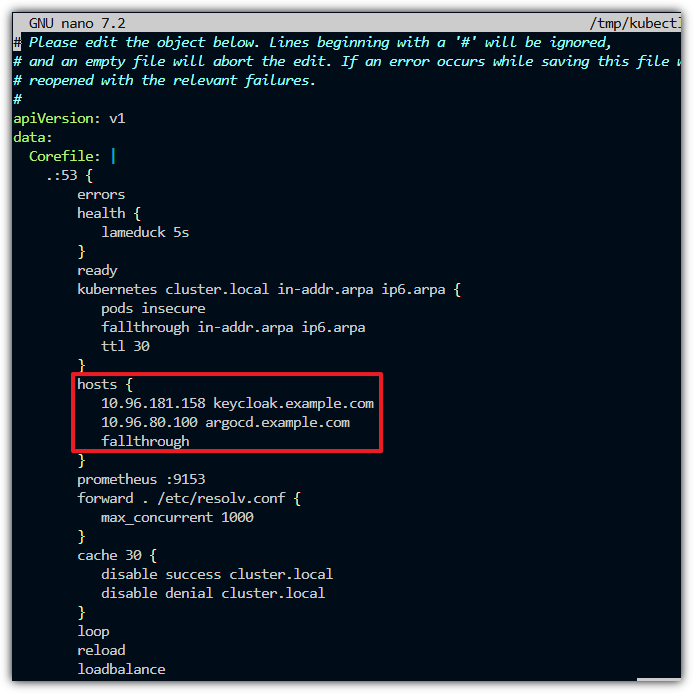

# Fix it: use the CoreDNS hosts plugin

KUBE_EDITOR="nano" kubectl edit cm -n kube-system coredns

.:53 {

...

kubernetes cluster.local in-addr.arpa ip6.arpa {

pods insecure

fallthrough in-addr.arpa ip6.arpa

ttl 30

}

hosts {

<CLUSTER IP> keycloak.example.com

<CLUSTER IP> argocd.example.com

fallthrough

}

reload # applies ConfigMap changes automatically

...
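A ClusterIP is allocated when the Service is created, so it changes if the Service is recreated. The keycloak ClusterIP already differs between the two outputs in this post. Below is a minimal sketch that prints the hosts block from IP/hostname pairs. `render_hosts_block` is hypothetical. In practice the IPs would come from `kubectl get svc keycloak -o jsonpath='{.spec.clusterIP}'`.

```shell
# render_hosts_block: hypothetical helper that prints a CoreDNS hosts plugin
# block. Args: alternating IP and hostname values.
render_hosts_block() {
  echo "hosts {"
  while [ "$#" -ge 2 ]; do
    printf '    %s %s\n' "$1" "$2"
    shift 2
  done
  # fallthrough: names not listed here continue to the next plugin
  echo "    fallthrough"
  echo "}"
}

# Example with the ClusterIPs noted earlier in this post
render_hosts_block 10.96.58.66 keycloak.example.com 10.96.156.196 argocd.example.com
```

The output is pasted into the Corefile with kubectl edit cm -n kube-system coredns, as above, and the reload plugin picks up the change. The jenkins.example.com entry added later fits the same pattern.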

# Check the logs for the automatic reload after the ConfigMap change

kubectl logs -n kube-system -l k8s-app=kube-dns --timestamps

# Confirm the domain now resolves to the ClusterIP (is there really no DNS query cache inside the pod?)

kubectl exec -it curl -- nslookup -debug keycloak.example.com

kubectl exec -it curl -- nslookup keycloak.example.com

# Reload log output after the ConfigMap change

(⎈|kind-mgmt:N/A) gaji:~$ kubectl logs -n kube-system -l k8s-app=kube-dns --timestamps

2025-11-19T13:14:07.678842542Z .:53

2025-11-19T13:14:07.678983143Z [INFO] plugin/reload: Running configuration SHA512 = 1b226df79860026c6a52e67daa10d7f0d57ec5b023288ec00c5e05f93523c894564e15b91770d3a07ae1cfbe861d15b37d4a0027e69c546ab112970993a3b03b

2025-11-19T13:14:07.679001973Z CoreDNS-1.11.3

2025-11-19T13:14:07.679004936Z linux/amd64, go1.21.11, a6338e9

2025-11-19T15:45:09.776138119Z [INFO] Reloading

2025-11-19T15:45:09.949688839Z [INFO] plugin/reload: Running configuration SHA512 = c0e1967e5ec5bba539d464ddee4570848f5d2276921584e17cd159f21c7f3c80b8abed9461bcf466635c023730e75920bf7cec8991ec8a2b5df2816acd795759

2025-11-19T15:45:09.949711015Z [INFO] Reloading complete

2025-11-19T15:47:58.390539734Z [INFO] Reloading

2025-11-19T15:47:58.493824926Z [INFO] plugin/reload: Running configuration SHA512 = d89ef6dda91aad7ce456216b31e53dc65f463370e8bba44afdef282bb1662a4c81d5b0b79d709fefcd6f2d615dc79e51f02aa26e2c6af4792437e6b93f0907d1

2025-11-19T15:47:58.493852255Z [INFO] Reloading complete

2025-11-19T13:14:07.681537390Z .:53

2025-11-19T13:14:07.681571417Z [INFO] plugin/reload: Running configuration SHA512 = 1b226df79860026c6a52e67daa10d7f0d57ec5b023288ec00c5e05f93523c894564e15b91770d3a07ae1cfbe861d15b37d4a0027e69c546ab112970993a3b03b

2025-11-19T13:14:07.681575909Z CoreDNS-1.11.3

2025-11-19T13:14:07.681577726Z linux/amd64, go1.21.11, a6338e9

2025-11-19T15:45:55.198798427Z [INFO] Reloading

2025-11-19T15:45:55.301722532Z [INFO] plugin/reload: Running configuration SHA512 = c0e1967e5ec5bba539d464ddee4570848f5d2276921584e17cd159f21c7f3c80b8abed9461bcf466635c023730e75920bf7cec8991ec8a2b5df2816acd795759

2025-11-19T15:45:55.301744565Z [INFO] Reloading complete

2025-11-19T15:48:32.578580033Z [INFO] Reloading

2025-11-19T15:48:32.680249878Z [INFO] plugin/reload: Running configuration SHA512 = d89ef6dda91aad7ce456216b31e53dc65f463370e8bba44afdef282bb1662a4c81d5b0b79d709fefcd6f2d615dc79e51f02aa26e2c6af4792437e6b93f0907d1

2025-11-19T15:48:32.680273803Z [INFO] Reloading complete

- Confirm the domain resolves to the ClusterIP

- Before: the lookup fails

- After editing the CoreDNS ConfigMap (hosts block): the lookup succeeds

-> It resolves to the same ClusterIP as the keycloak Service below

- Check the Services

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get svc keycloak

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

keycloak ClusterIP 10.96.181.158 <none> 80/TCP 19m

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get svc -n argocd argocd-server

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

argocd-server ClusterIP 10.96.80.100 <none> 80/TCP,443/TCP 135m

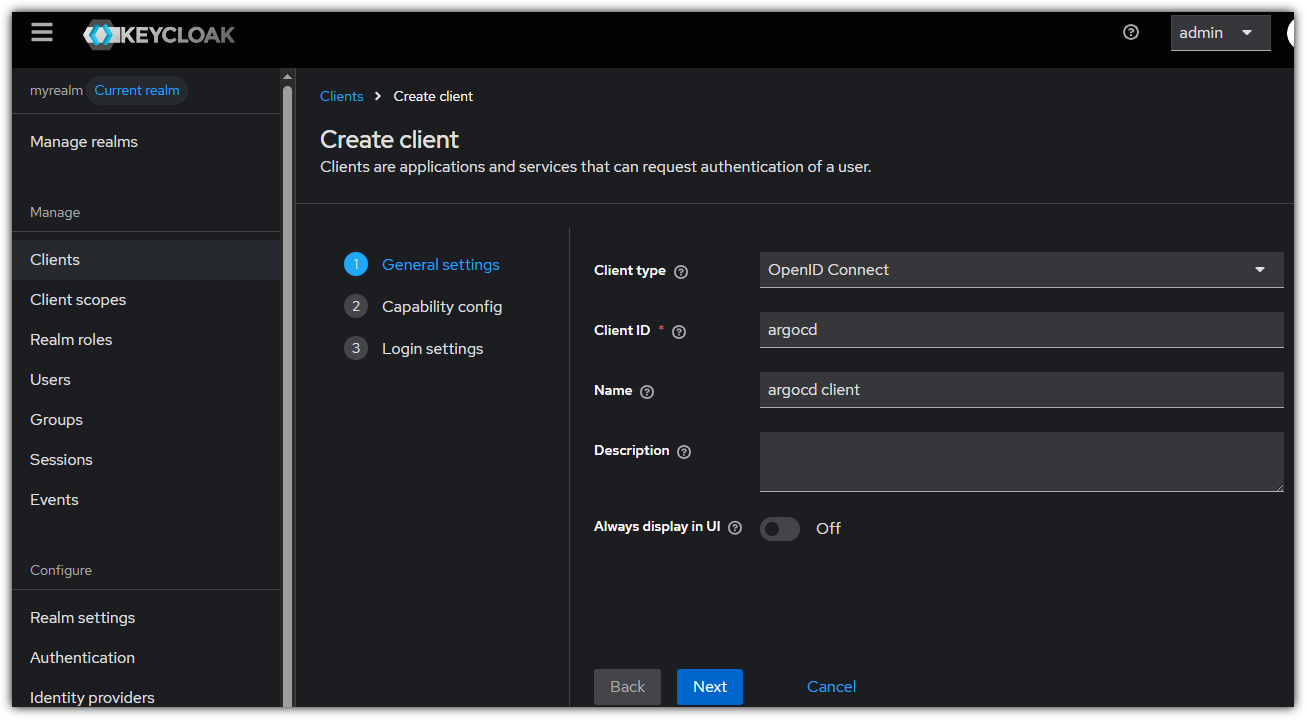

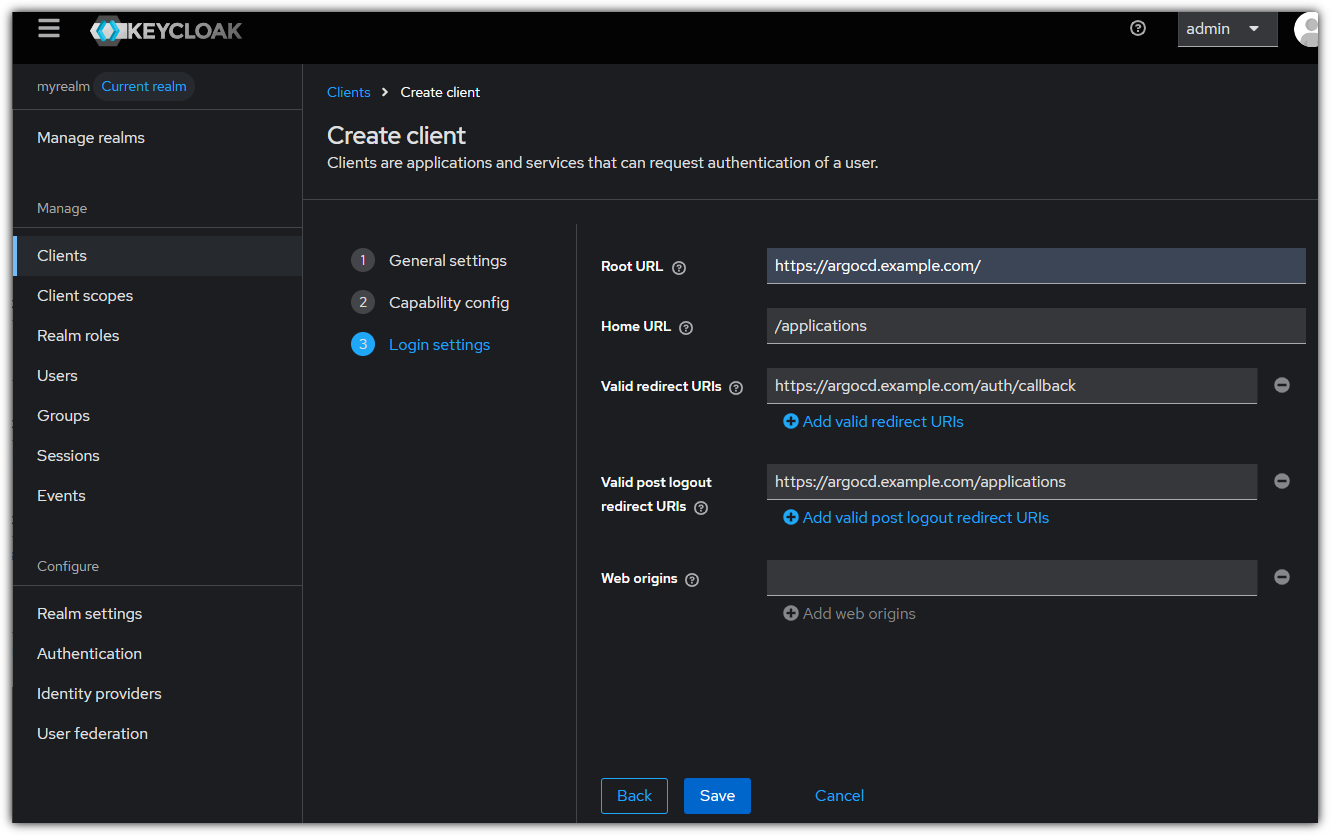

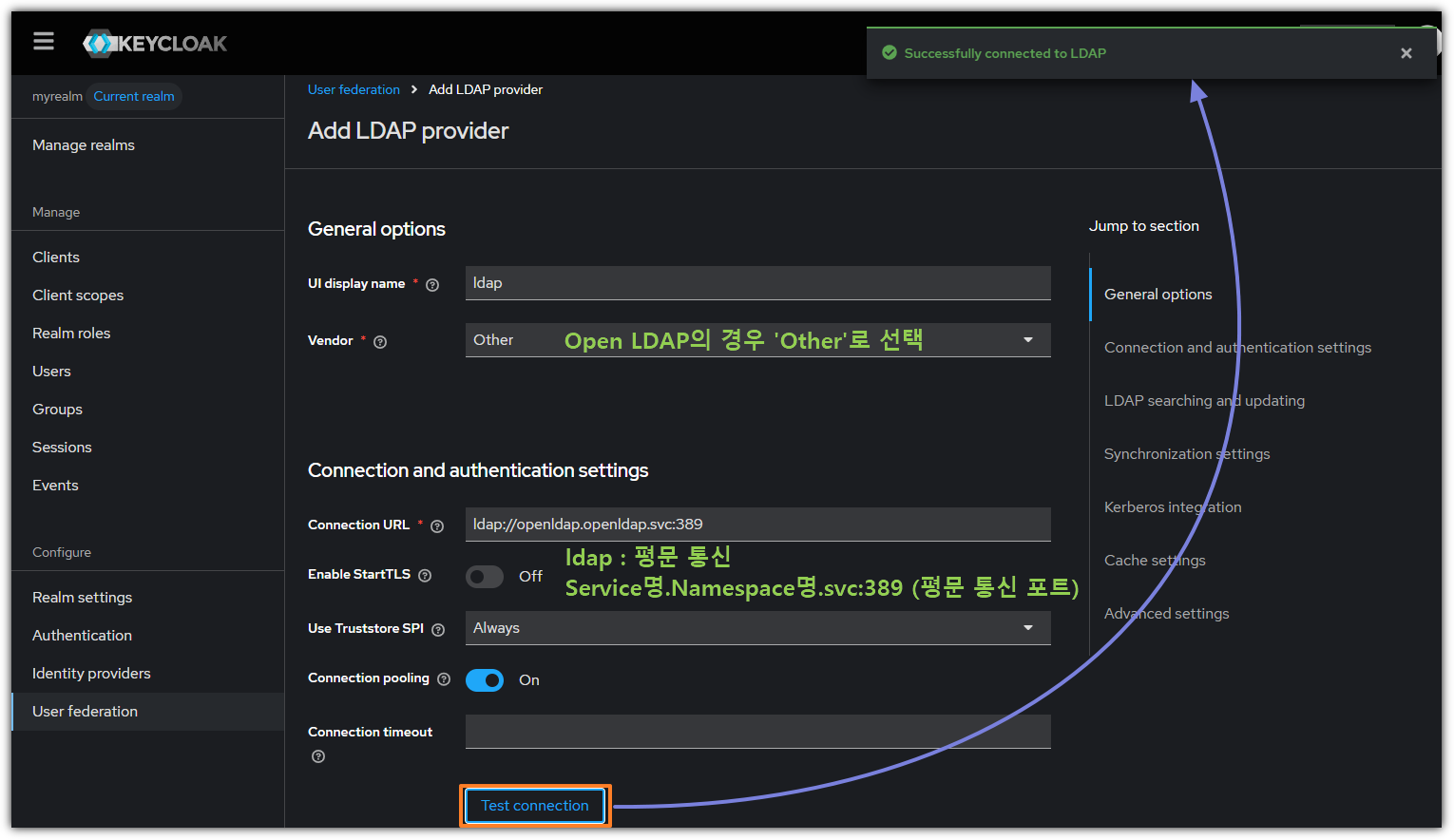

Creating a client for Argo CD in Keycloak

Configuring ArgoCD OIDC

- Set the client secret

kubectl -n argocd patch secret argocd-secret --patch='{"stringData": { "oidc.keycloak.clientSecret": "hkV#####################" }}'

# Verify

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get secret -n argocd argocd-secret -o jsonpath='{.data}' | jq

{

"admin.password": "JDJhJDEwJFI0VEkvVk5JVVdVcjR6Ukl3Y1prLk9tUmVBYWg0RXJLRTVDblBmbTkvWjNkaWpwUW05eWtt",

"admin.passwordMtime": "MjAyNS0xMS0xOVQxMzo0NDoxNVo=",

"oidc.keycloak.clientSecret": "aGtWNHBISXljSmdWTnN5V2lWYTJKYlBWc2tERjVuWFY=",

"server.secretkey": "cUU3bTU5dmo0NWJHdkNHUlE2MGFPakJvQ1ljUWE4MzRNRU1UeVVvREVHMD0="

}

- Next, add the OIDC configuration to the argocd-cm ConfigMap to enable Keycloak authentication.

kubectl patch cm argocd-cm -n argocd --type merge -p '

data:

oidc.config: |

name: Keycloak

issuer: http://keycloak.example.com/realms/myrealm

clientID: argocd

clientSecret: $oidc.keycloak.clientSecret # read from argocd-secret (patched above)

requestedScopes: ["openid", "profile", "email"]

'
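The OIDC library Argo CD uses (go-oidc) loads the provider metadata from `<issuer>/.well-known/openid-configuration`, the URL checked with curl earlier, and expects the issuer field in that document to match. The issuer therefore presumably has to be reachable from the argocd-server pod, which is what the CoreDNS hosts entry provides. Below is a minimal sketch that derives the discovery URL from an issuer. `discovery_url` is hypothetical.

```shell
# discovery_url: hypothetical helper. Builds the OIDC discovery URL the way
# OIDC clients commonly do: drop one trailing slash, then append the
# well-known path.
discovery_url() {
  local issuer=${1%/}
  printf '%s/.well-known/openid-configuration\n' "$issuer"
}

discovery_url http://keycloak.example.com/realms/myrealm
```

Example: `kubectl exec curl -- curl -s "$(discovery_url http://keycloak.example.com/realms/myrealm)"`. Run from inside the cluster, this should return JSON whose issuer equals the value configured above.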

- Restart the argocd server

(⎈|kind-mgmt:N/A) gaji:~$ kubectl rollout restart deploy argocd-server -n argocd

deployment.apps/argocd-server restarted

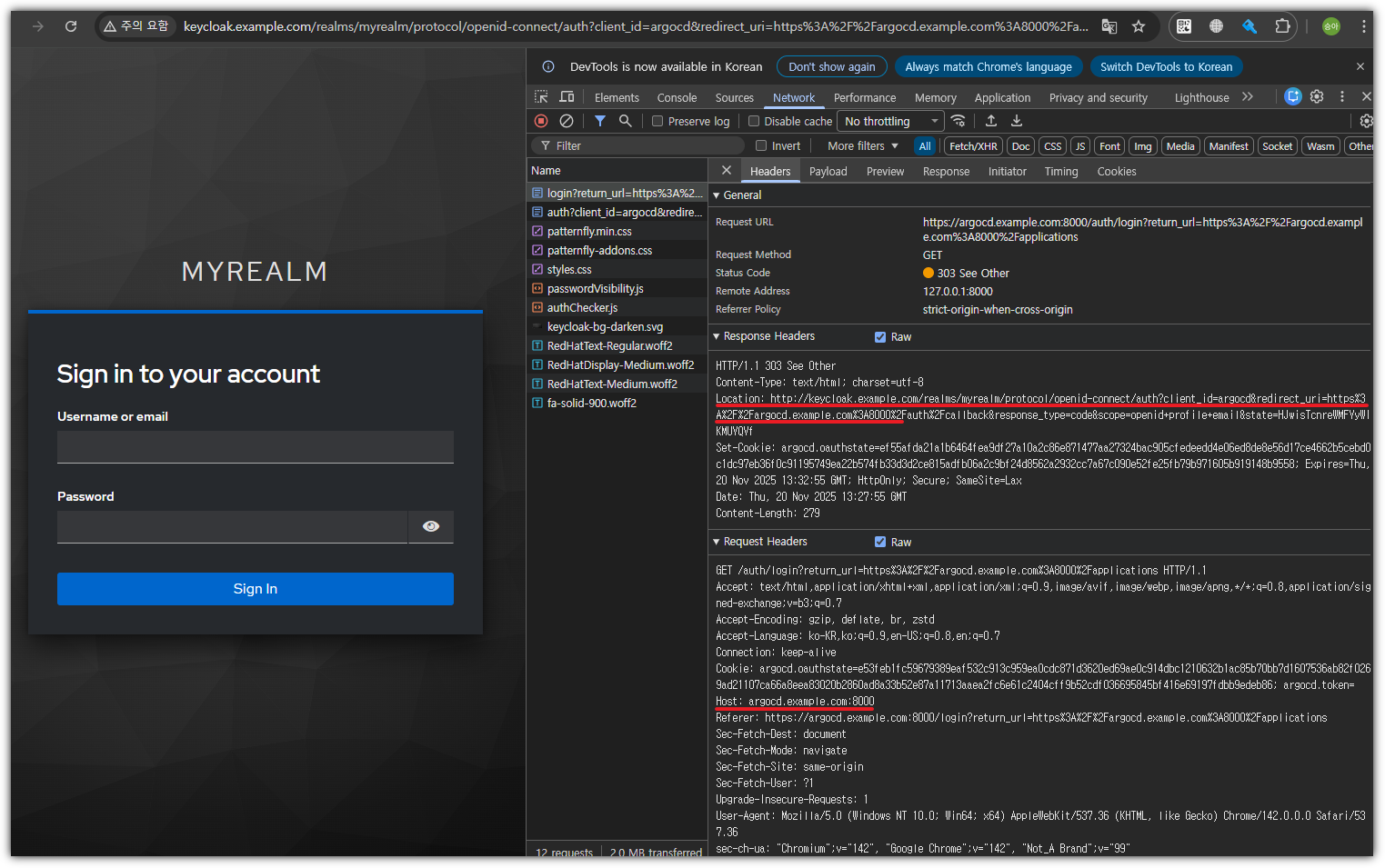

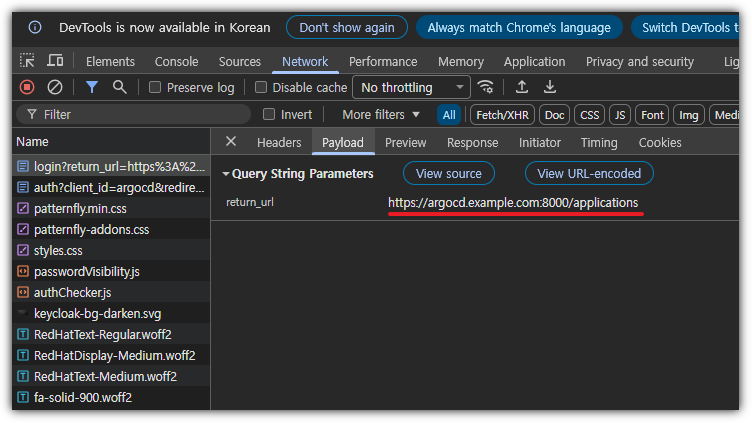

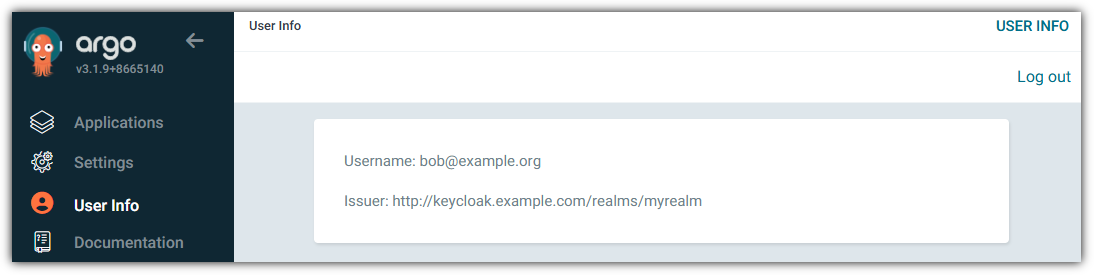

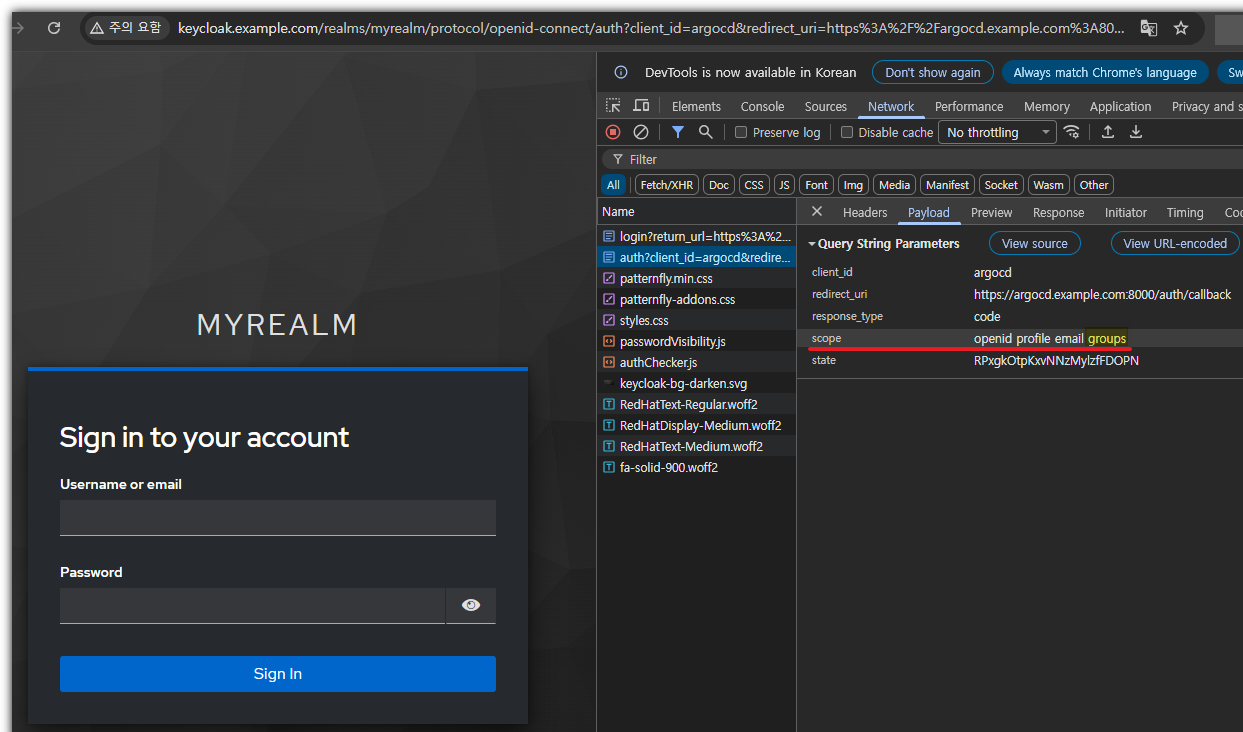

Logging in to Argo CD through Keycloak: log out as admin, then log in as alice via Keycloak

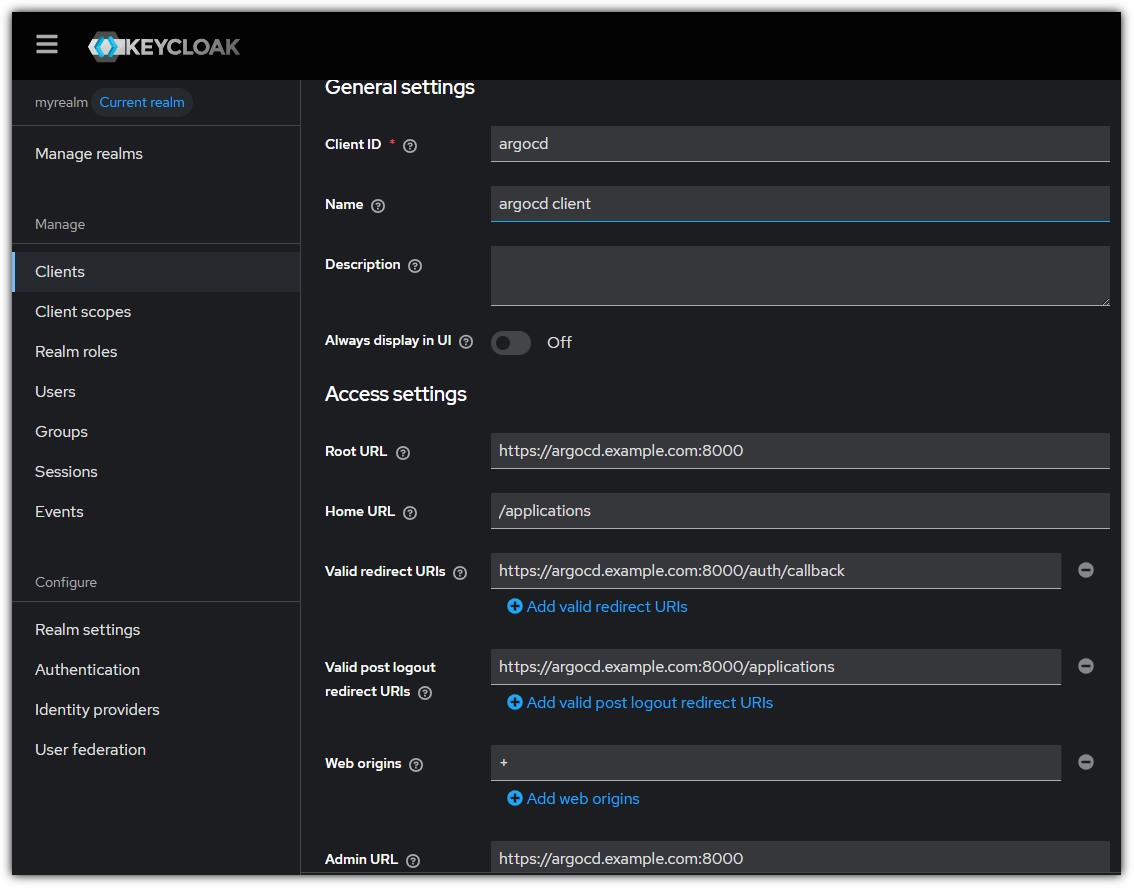

- Logging in through Keycloak failed, so some settings were changed

- Added entries to the Argo CD ConfigMap and updated the argocd client in Keycloak

$ KUBE_EDITOR="nano" kubectl edit cm -n argocd argocd-cm

apiVersion: v1

data:

admin.enabled: "true"

application.instanceLabelKey: argocd.argoproj.io/instance

application.sync.impersonation.enabled: "false"

exec.enabled: "false"

oidc.config: |

name: Keycloak

issuer: http://keycloak.example.com/realms/myrealm

clientID: argocd

clientSecret: hkV4pHIycJgVNsyWiVa2JbPVskDF5nXV

requestedScopes: ["openid", "profile", "email"]

redirectURL: https://argocd.example.com:8000/auth/callback # setting 1

resource.customizations.ignoreResourceUpdates.ConfigMap: |

url: https://argocd.example.com:8000 # setting 2

$ kubectl rollout restart deploy argocd-server -n argocd

deployment.apps/argocd-server restarted

$ kubectl port-forward svc/argocd-server -n argocd 8000:443

Forwarding from 127.0.0.1:8000 -> 8080

Forwarding from [::1]:8000 -> 8080

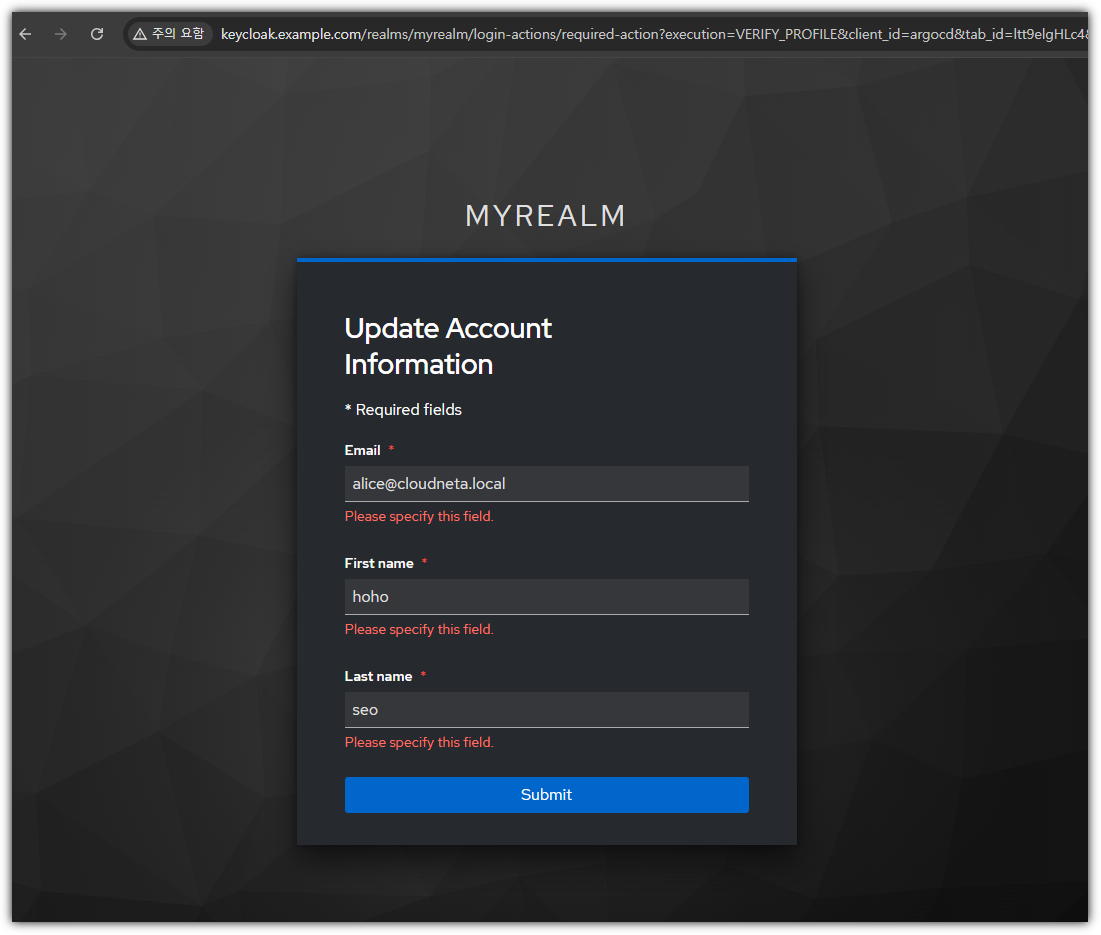

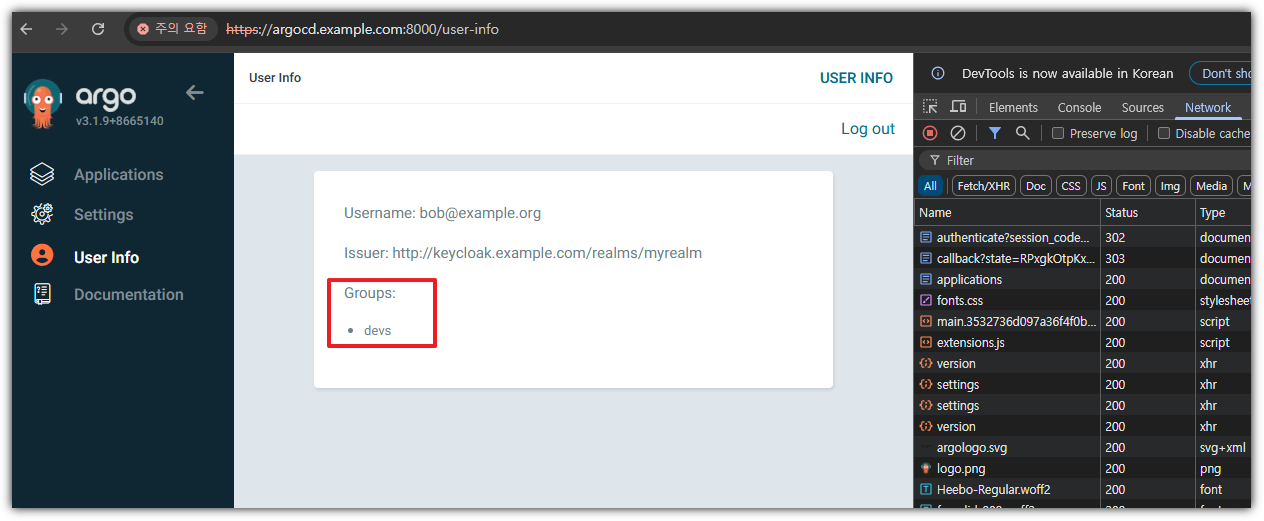

- Access now works

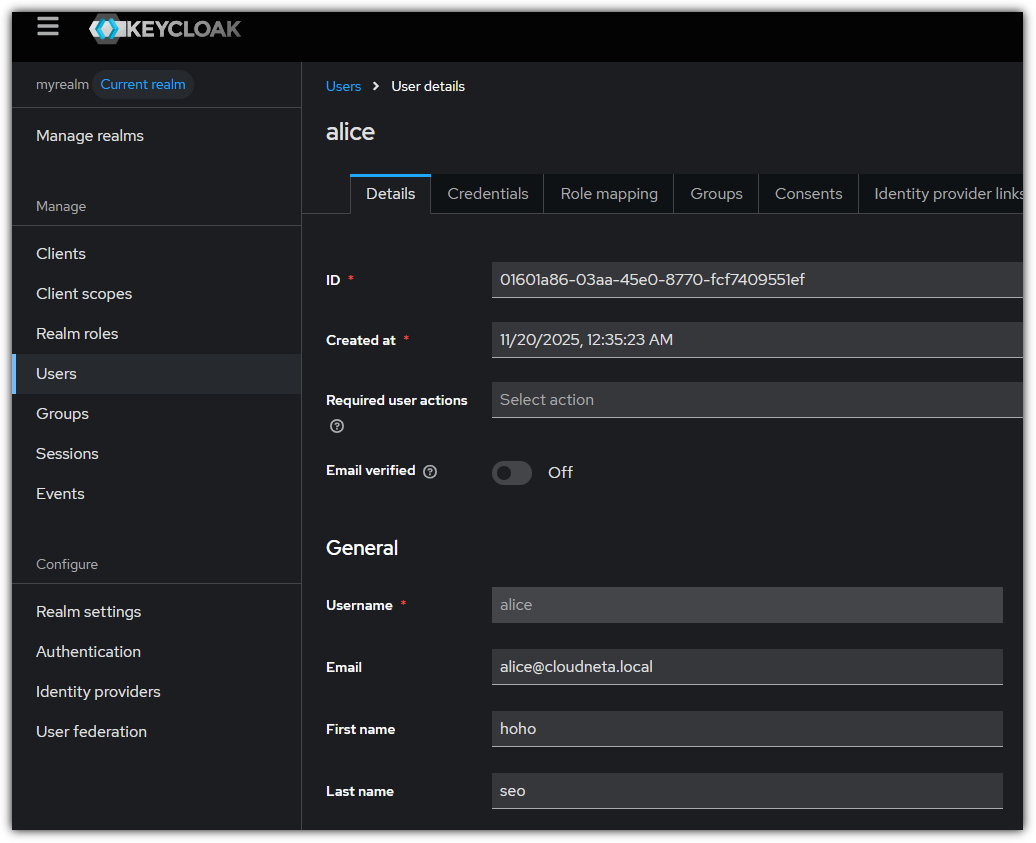

- Log in as alice and update the profile information

-> alice's user information is updated as well

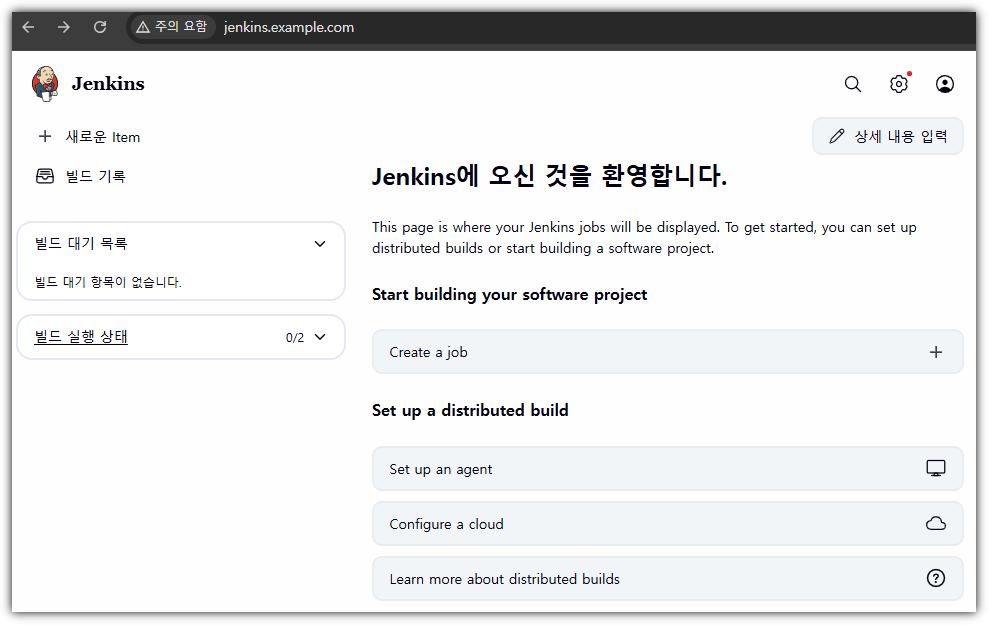

Deploying Jenkins directly as a pod

kubectl create ns jenkins

cat <<EOF | kubectl apply -f -

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: jenkins-pvc

namespace: jenkins

spec:

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 10Gi

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: jenkins

namespace: jenkins

spec:

replicas: 1

selector:

matchLabels:

app: jenkins

template:

metadata:

labels:

app: jenkins

spec:

securityContext:

fsGroup: 1000

containers:

- name: jenkins

image: jenkins/jenkins:lts

ports:

- name: http

containerPort: 8080

- name: agent

containerPort: 50000

volumeMounts:

- name: jenkins-home

mountPath: /var/jenkins_home

volumes:

- name: jenkins-home

persistentVolumeClaim:

claimName: jenkins-pvc

---

apiVersion: v1

kind: Service

metadata:

name: jenkins-svc

namespace: jenkins

spec:

type: ClusterIP

selector:

app: jenkins

ports:

- port: 8080

targetPort: http

protocol: TCP

name: http

- port: 50000

targetPort: agent

protocol: TCP

name: agent

---

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: jenkins-ingress

namespace: jenkins

annotations:

nginx.ingress.kubernetes.io/proxy-body-size: "0"

nginx.ingress.kubernetes.io/proxy-read-timeout: "600"

spec:

ingressClassName: nginx

rules:

- host: jenkins.example.com

http:

paths:

- path: /

pathType: Prefix

backend:

service:

name: jenkins-svc

port:

number: 8080

EOF

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get deploy,svc,ep,pvc -n jenkins
NAME                      READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/jenkins   1/1     1            1           116s

NAME                  TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)              AGE
service/jenkins-svc   ClusterIP   10.96.111.122   <none>        8080/TCP,50000/TCP   116s

NAME                    ENDPOINTS                            AGE
endpoints/jenkins-svc   10.244.0.60:50000,10.244.0.60:8080   116s

NAME                                STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   VOLUMEATTRIBUTESCLASS   AGE
persistentvolumeclaim/jenkins-pvc   Bound    pvc-ab4c24f4-0a8c-4169-a54a-149314614bed   10Gi       RWO            standard       <unset>                 116s

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get ingress -n jenkins jenkins-ingress
NAME              CLASS   HOSTS                 ADDRESS     PORTS   AGE
jenkins-ingress   nginx   jenkins.example.com   localhost   80      30s

- Update the hosts file: open C:\Windows\System32\drivers\etc\hosts in Notepad running as Administrator, then add the entry

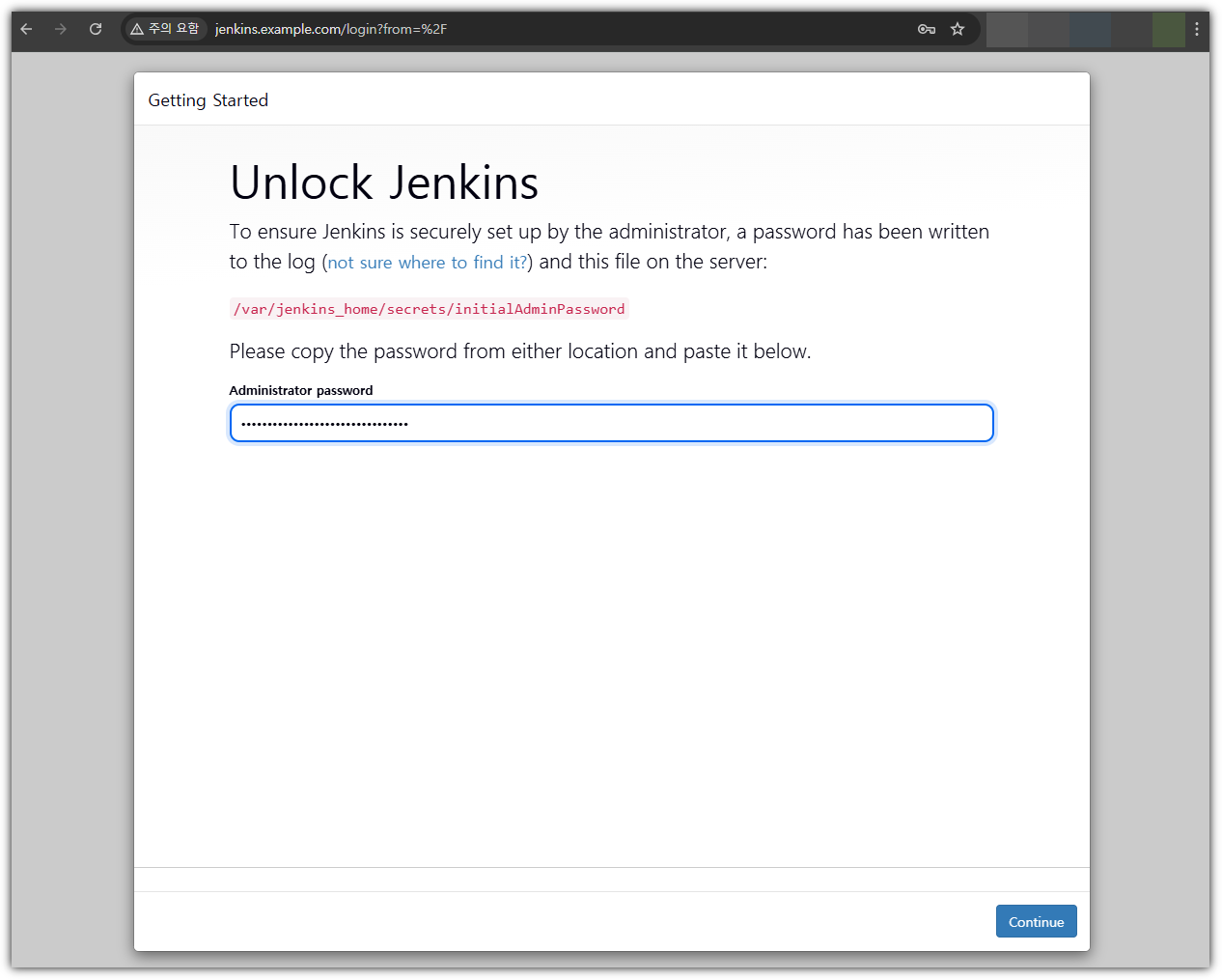
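The post edits the Windows hosts file by hand. On Linux, macOS, or WSL, the same mapping lives in /etc/hosts. Below is a minimal POSIX-shell sketch that appends the entry only if the hostname is not already mapped, so rerunning it is harmless. The helper name `add_host_entry` is hypothetical. The address 127.0.0.1 is an assumption: the kind config maps host ports 80/443 to the node, and the ingress ADDRESS column above shows `localhost`.

```sh
# Append "<ip> <hostname>" to a hosts file unless the hostname is already mapped.
# usage: add_host_entry <hosts-file> <ip> <hostname>
add_host_entry() {
  local file=$1 ip=$2 host=$3
  if awk -v h="$host" '
      { sub(/#.*/, "") }                                    # ignore comments
      { for (i = 2; i <= NF; i++) if ($i == h) found = 1 }  # fields 2.. are hostnames
      END { exit !found }' "$file"; then
    return 0                                                # already present: no change
  fi
  printf '%s %s\n' "$ip" "$host" >> "$file"
}
```

For example, `add_host_entry /etc/hosts 127.0.0.1 jenkins.example.com` needs to run from a root shell, because /etc/hosts is owned by root.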

# Check the initial admin password

kubectl exec -it -n jenkins deploy/jenkins -- cat /var/jenkins_home/secrets/initialAdminPassword

6809e#########################

- Open the Jenkins web UI: http://jenkins.example.com

- Set up the domain for in-cluster calls

- Check the Service address, then use the CoreDNS hosts plugin so the domain also resolves inside the cluster

(⎈|kind-mgmt:N/A) gaji:~$ kubectl get svc -n jenkins
NAME          TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)              AGE
jenkins-svc   ClusterIP   10.96.111.122   <none>        8080/TCP,50000/TCP   6m47s

KUBE_EDITOR="nano" kubectl edit cm -n kube-system coredns

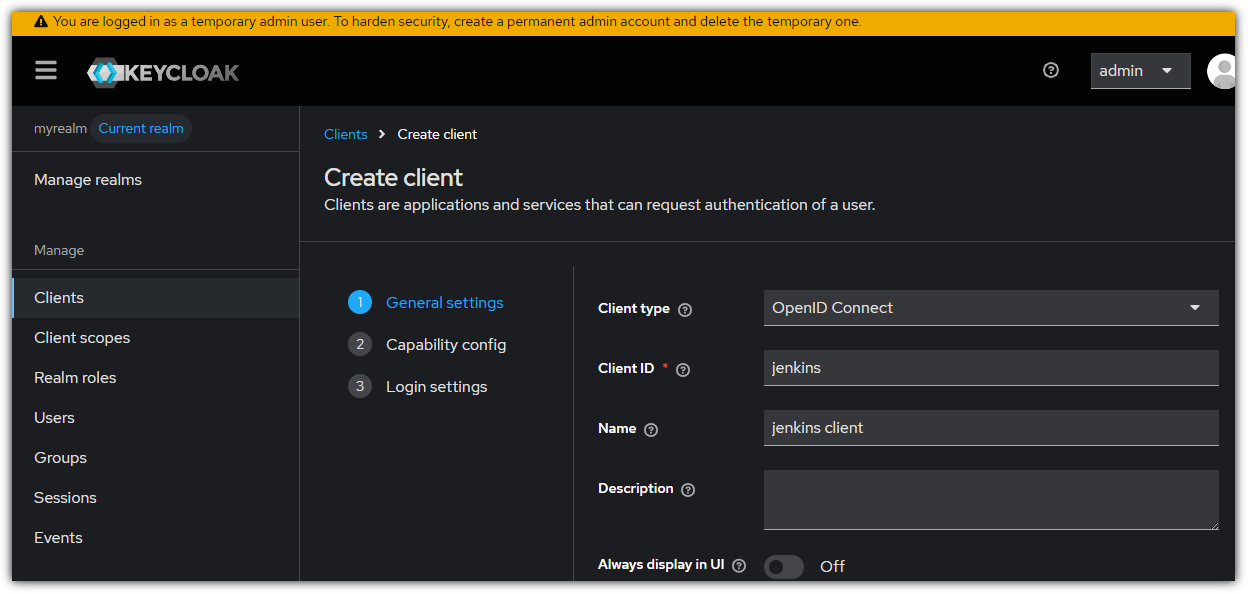

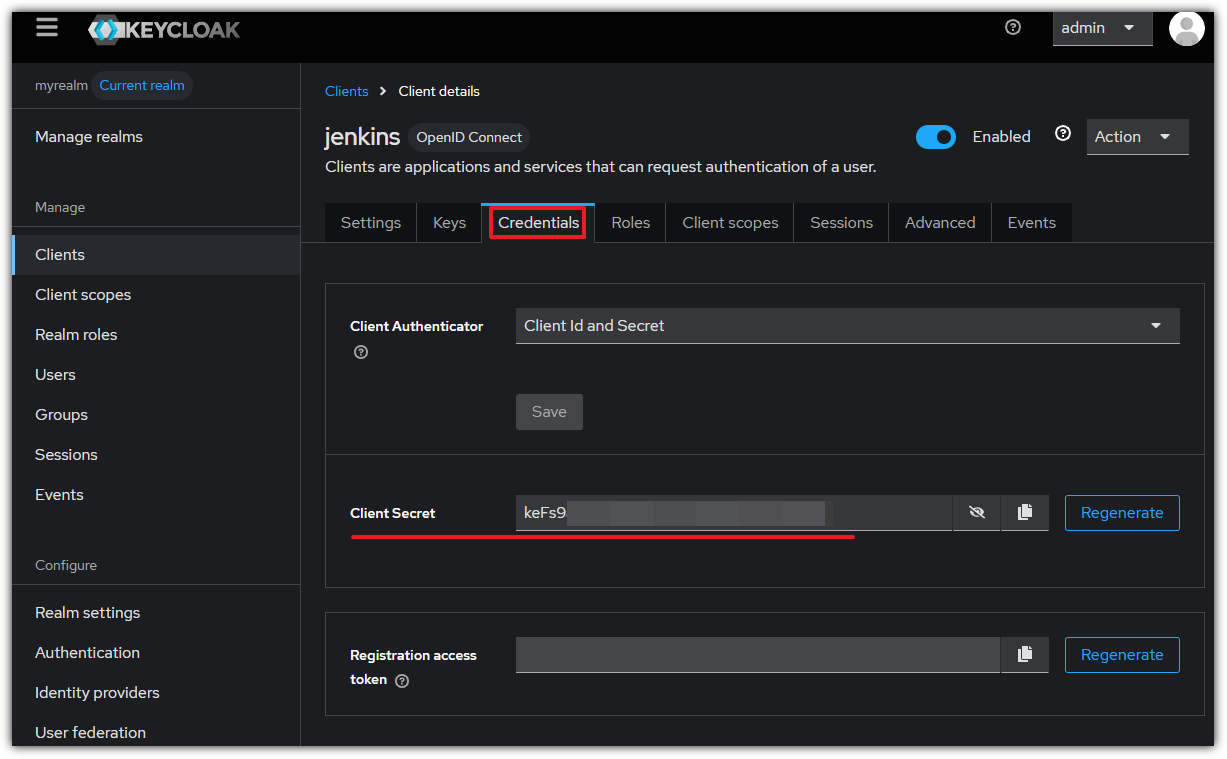

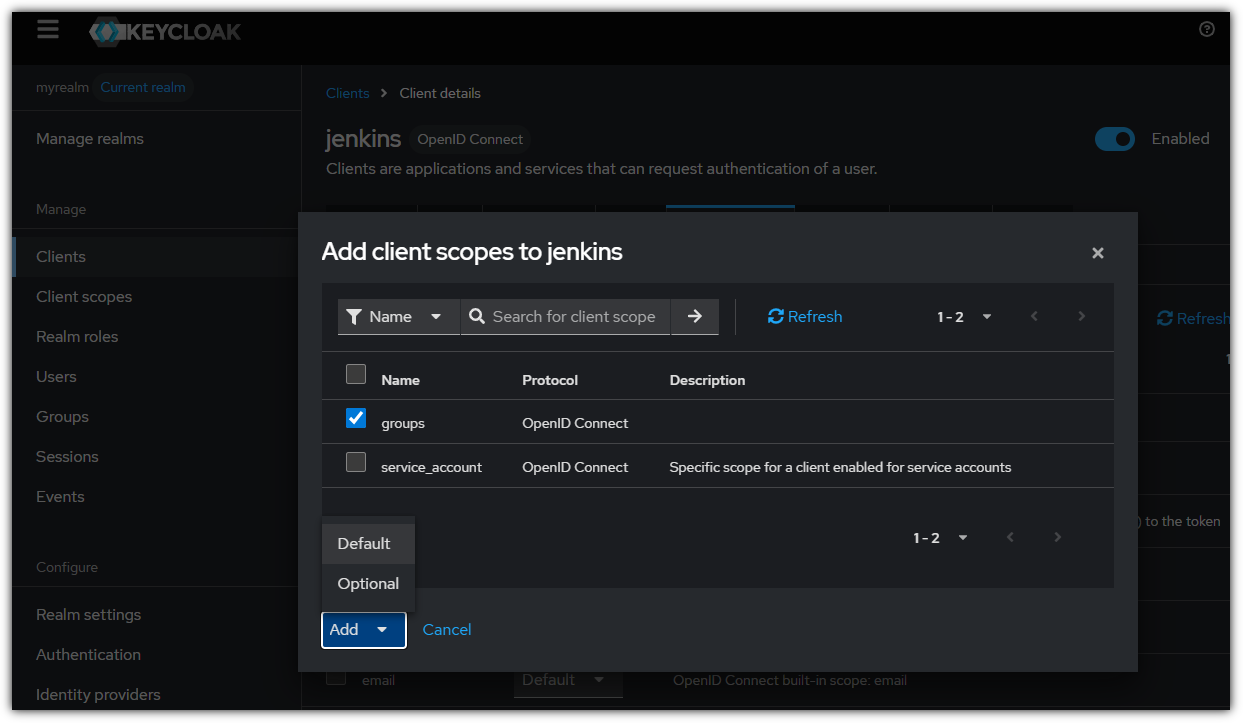
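The actual Corefile edit appears only in the screenshots. As a hedged illustration, the CoreDNS `hosts` plugin accepts a stanza like the one this helper prints, placed inside the existing `.:53 { ... }` server block. `fallthrough` passes every name not listed on to the remaining plugins. The helper name is hypothetical. Using the jenkins-svc ClusterIP shown above is also an assumption, so map whichever address your setup needs.

```sh
# Print a CoreDNS "hosts" stanza mapping one or more names to a fixed IP.
# usage: coredns_hosts_block <ip> <hostname>...
coredns_hosts_block() {
  local ip=$1 h
  shift
  printf 'hosts {\n'
  for h in "$@"; do
    printf '    %s %s\n' "$ip" "$h"
  done
  printf '    fallthrough\n'   # names not listed here continue to the next plugins
  printf '}\n'
}
```

To get the IP, run `ip=$(kubectl get svc -n jenkins jenkins-svc -o jsonpath='{.spec.clusterIP}')`. Then paste the output of `coredns_hosts_block "$ip" jenkins.example.com` into the ConfigMap opened with `kubectl edit cm -n kube-system coredns`. If the change does not take effect, `kubectl -n kube-system rollout restart deployment coredns` restarts CoreDNS.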

Create a client for Jenkins in Keycloak

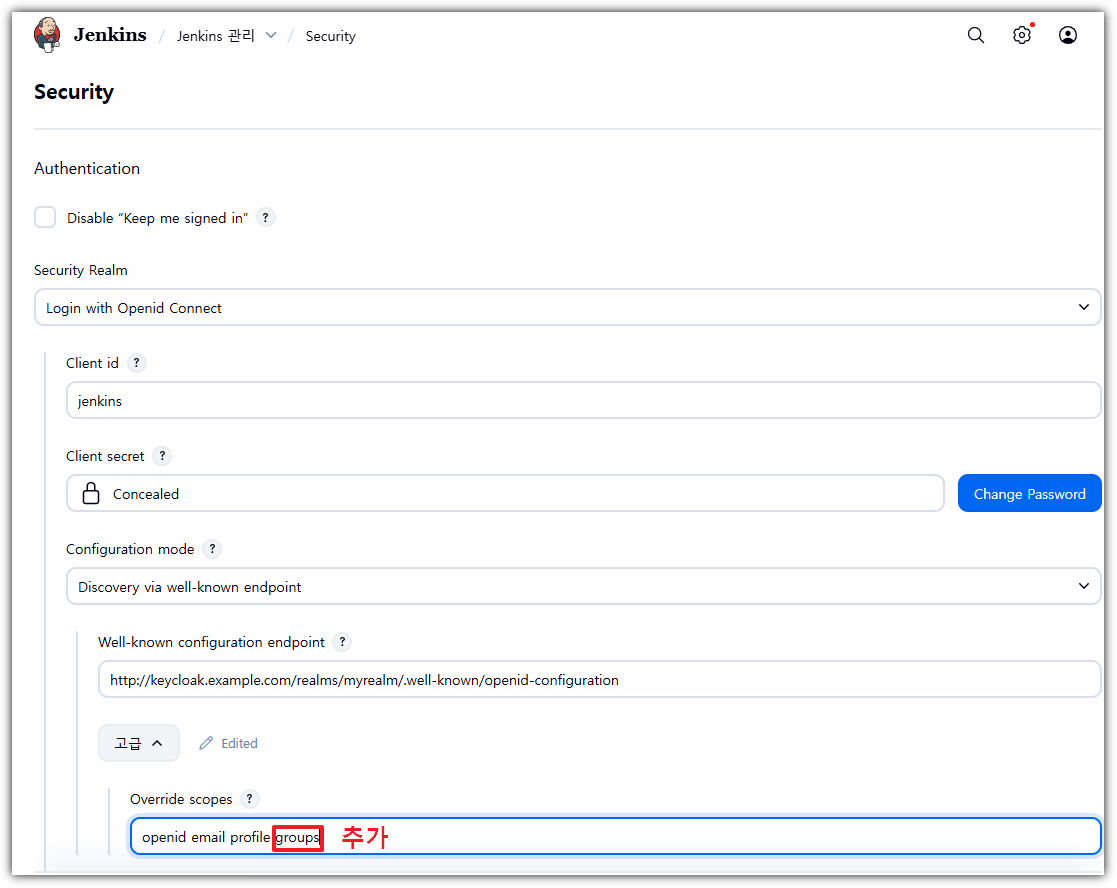

Configuring Jenkins OIDC

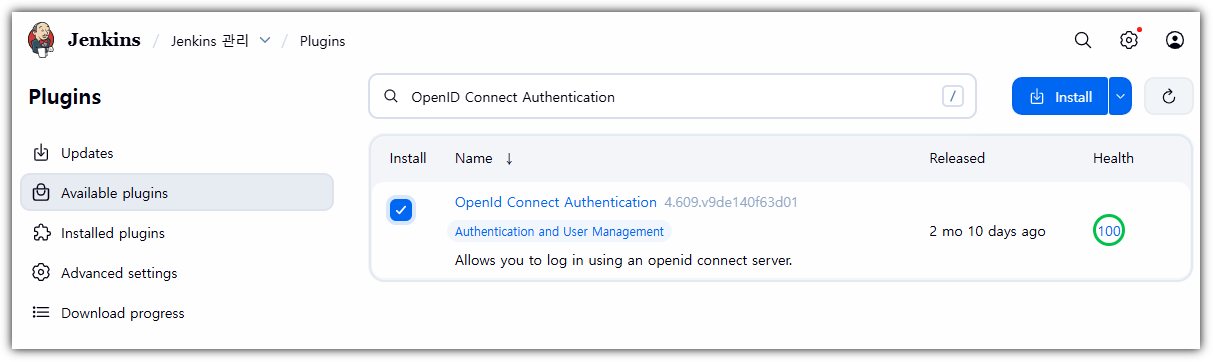

- Install the plugin: OpenID Connect Authentication

- Manage Jenkins → Security: configure the Security Realm → click Save at the bottom

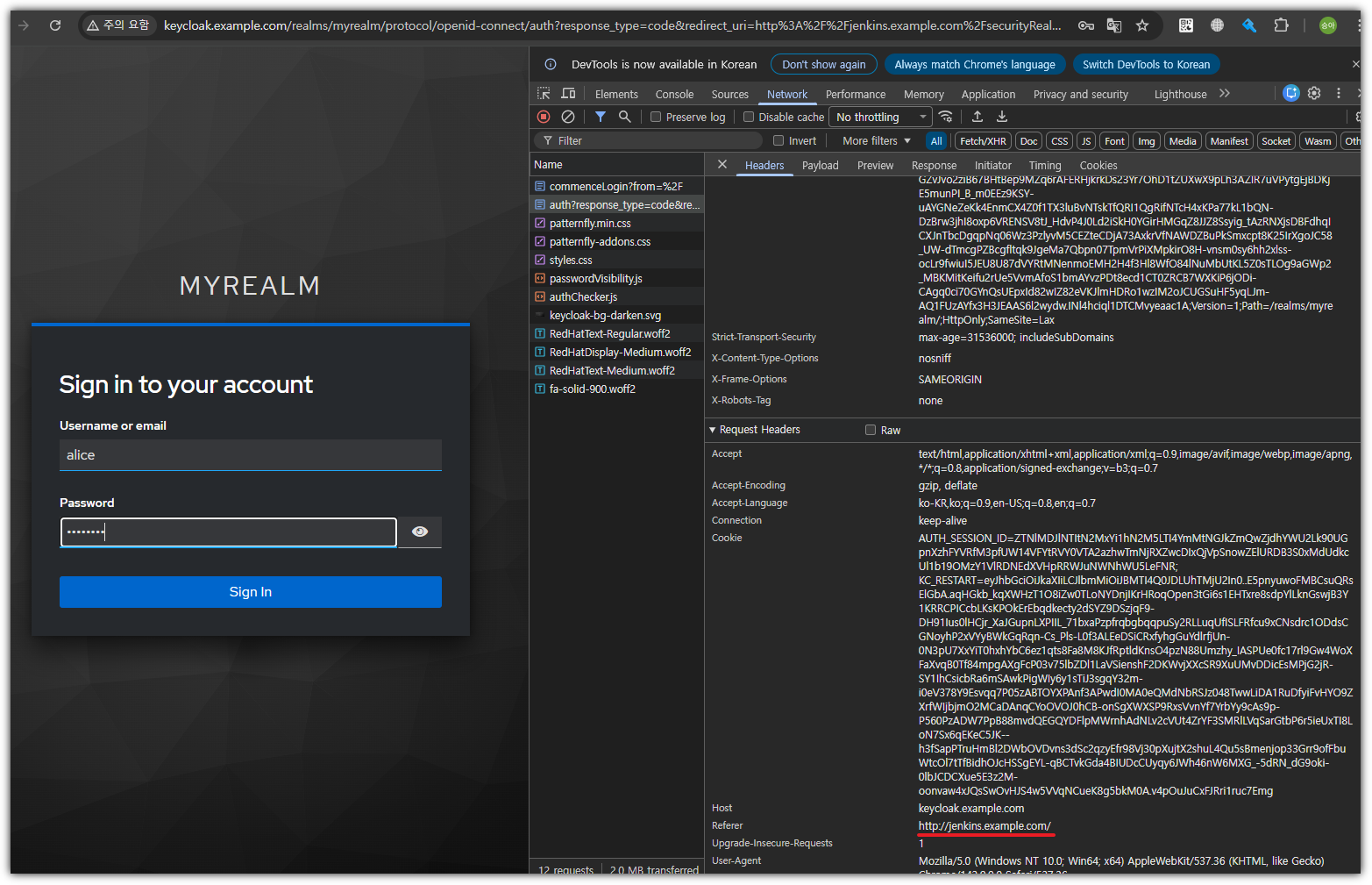

- Log out of Jenkins and confirm that login now goes through Keycloak

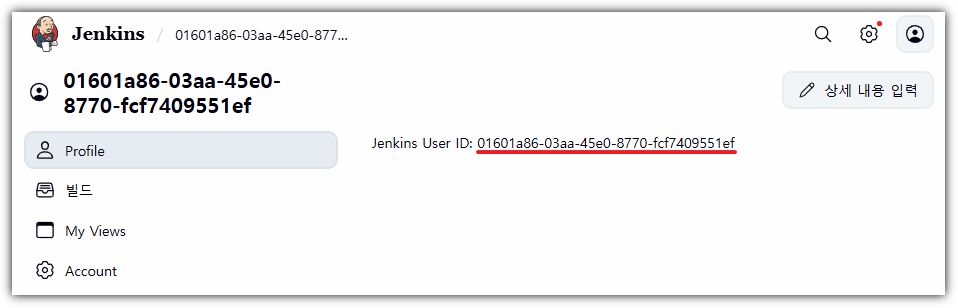

- Log in to Jenkins as alice

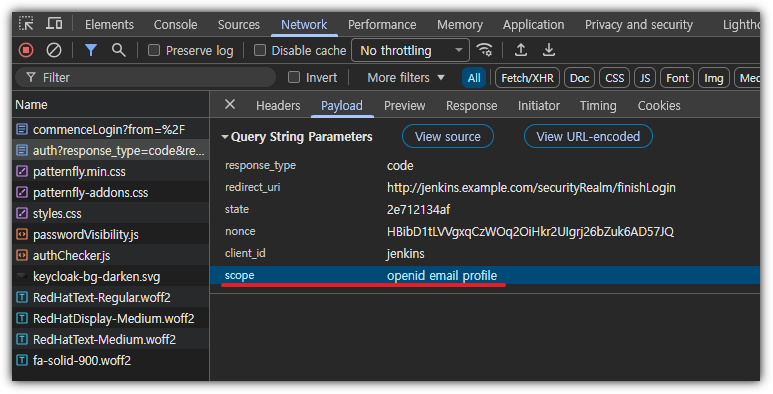

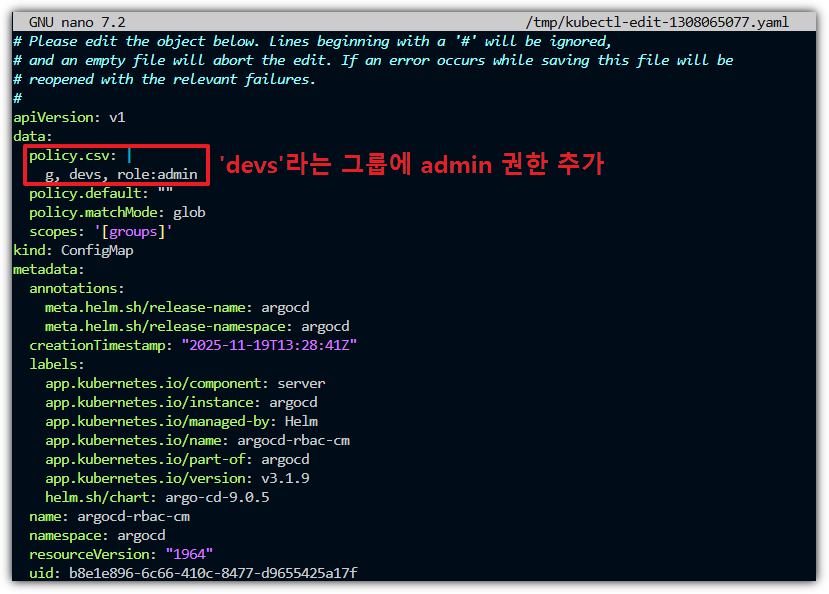

-> The scopes configured in Jenkins above are visible here as well

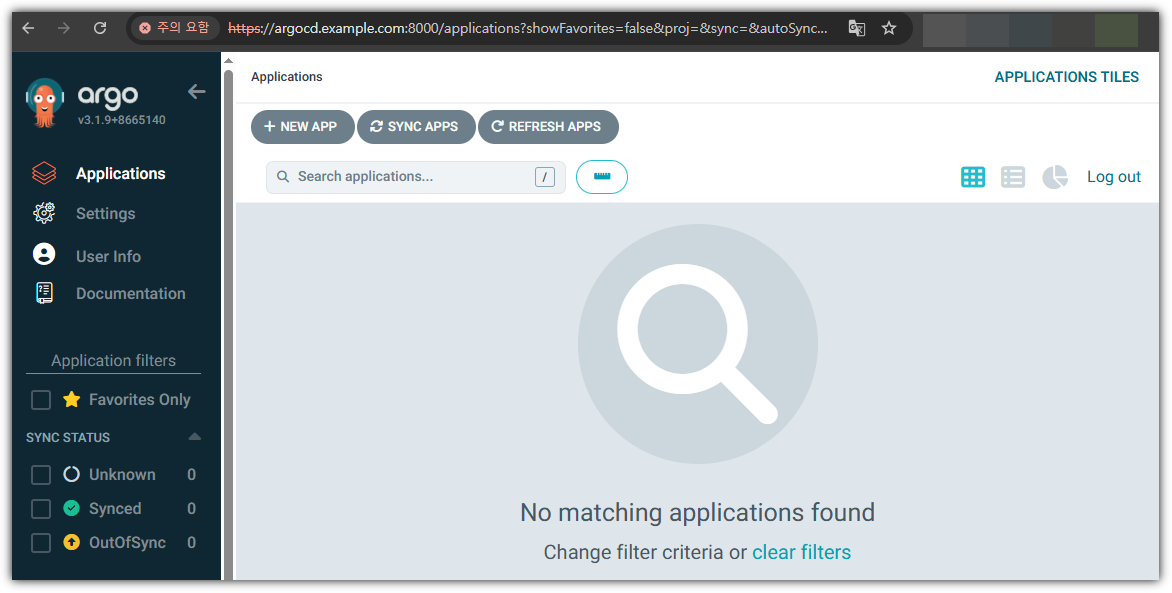

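-> With this session active, clicking the Keycloak login button on the Argo CD web page logs you in immediately, with no ID/password prompt (SSO in action)

- Opening Argo CD logs you in directly as the same user ← SSO confirmed!

- Check the authenticated user's session information in Keycloak

-> Logging in on one client automatically applies to the others

- Confirm that every client was accessed as alice -> all of them show alice's ID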

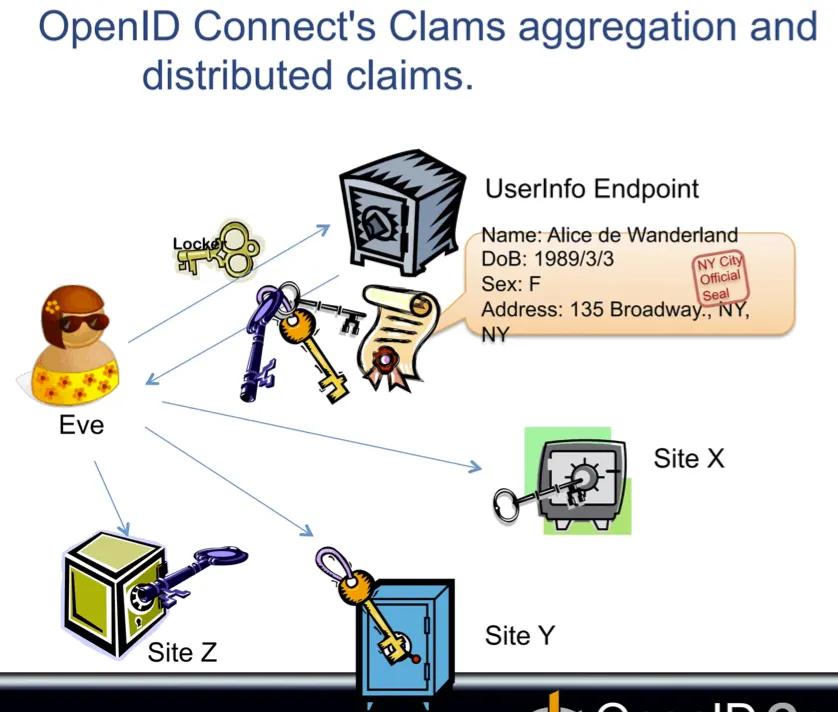
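Instead of entering every endpoint by hand, the Jenkins OpenID Connect plugin can usually be pointed at the realm's discovery document (the `.well-known/openid-configuration` URL). Below is a small sketch that builds that URL. It assumes the Keycloak path layout `/realms/<realm>/...` without the legacy `/auth` prefix. The helper name, host, and realm are hypothetical placeholders.

```sh
# Build a Keycloak realm's OIDC discovery URL (trailing slash on the base is tolerated).
# usage: oidc_discovery_url <keycloak-base-url> <realm>
oidc_discovery_url() {
  printf '%s/realms/%s/.well-known/openid-configuration\n' "${1%/}" "$2"
}
```

For example, `curl -s "$(oidc_discovery_url http://keycloak.example.com myrealm)" | jq -r '.issuer, .authorization_endpoint, .token_endpoint'` prints the endpoints that Jenkins and Argo CD end up using.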

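Scope → Claims (reference)

- Scope = “the permission range that defines which information (claims) is requested”

- It is how a client (app) tells the authorization server, “this is the level of user information I want to receive.”

- Standard OIDC scopes:

- openid → use OIDC authentication (every OIDC request must include it)

- profile → name and other profile information

- email → email address

- address → postal address

- phone → phone number

- Claim = “an individual piece of user information actually carried inside the JWT”

- Key-value attributes included in the token that describe the user or the token itself.

- Claims commonly found in ID tokens and access tokens:

- sub: unique user ID

- email: user email address

- name: full name

- given_name: first (given) name

- family_name: last (family) name

- roles: user roles (not a standard OIDC claim; added by the provider, e.g. through Keycloak mappers)

- groups: group membership (likewise provider-specific)

- Summary

✔ A scope is the range of permissions/information being requested

✔ A claim is the information actually carried in the token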

- openid

- openid + profile

- openid + profile + email

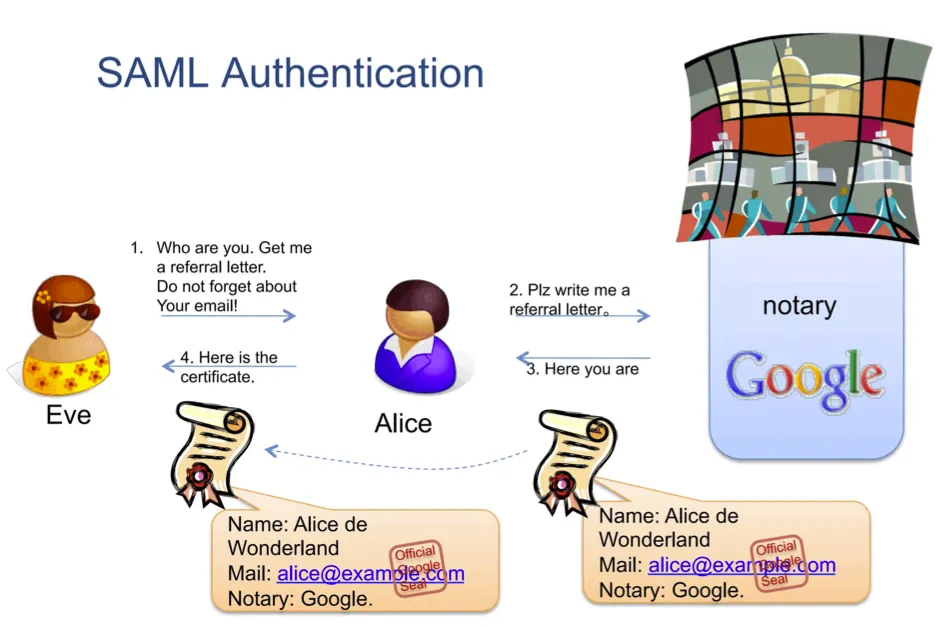

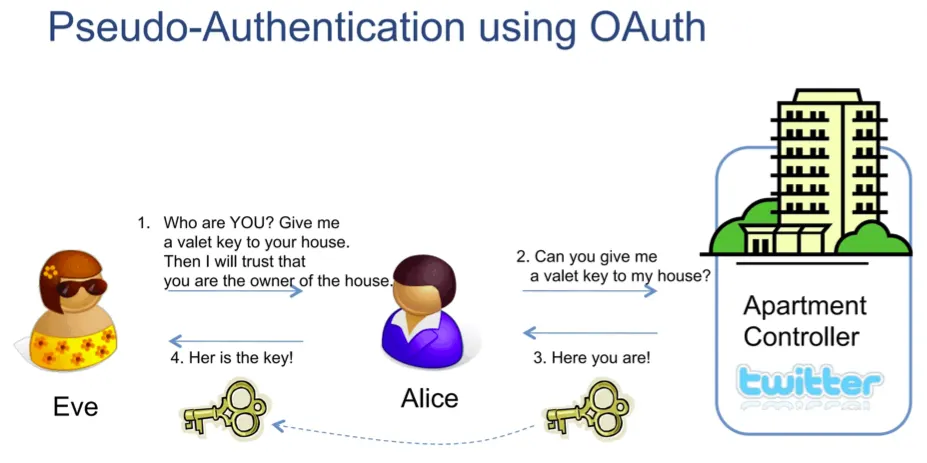

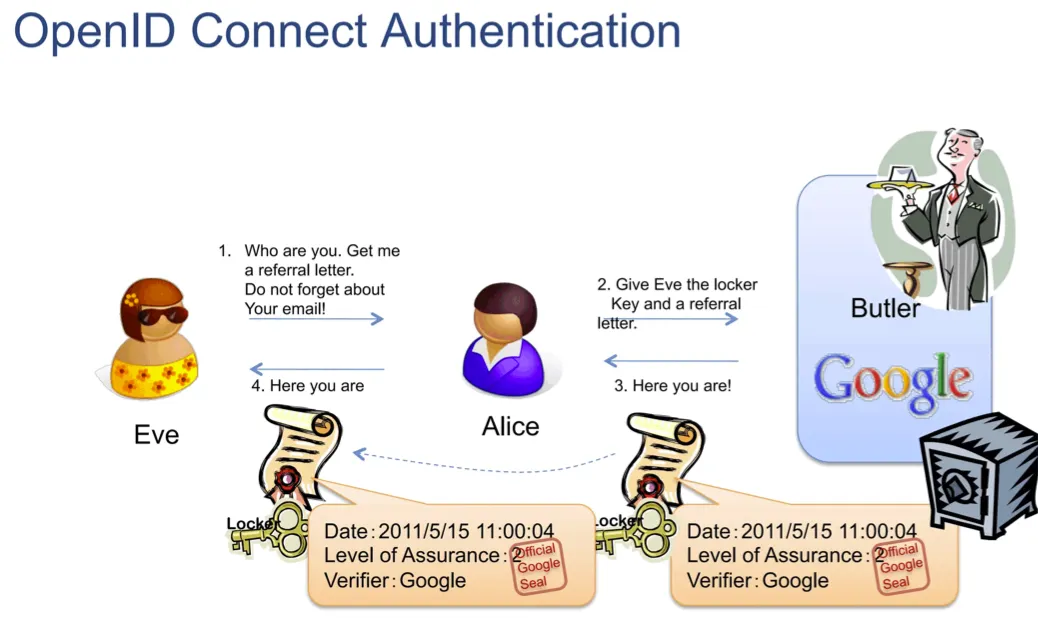
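The scope/claim split shows up concretely on the wire. Scopes travel in the authorization request as a single space-separated `scope` parameter, and claims come back in the payload of the issued JWT. Below is a POSIX-shell sketch of both sides. It assumes Keycloak's `/realms/<realm>/protocol/openid-connect/auth` endpoint, and all helper names are hypothetical. `jwt_claims` only base64url-decodes the payload and does NOT verify the signature, so use it for inspection only.

```sh
# Percent-encode a string byte by byte; RFC 3986 unreserved characters are kept.
urlencode() {
  printf '%s' "$1" | od -An -v -tu1 | awk '
    { for (i = 1; i <= NF; i++) {
        c = $i + 0
        if ((c >= 48 && c <= 57) || (c >= 65 && c <= 90) || (c >= 97 && c <= 122) ||
            c == 45 || c == 46 || c == 95 || c == 126)
          printf "%c", c       # 0-9 A-Z a-z - . _ ~ pass through
        else
          printf "%%%02X", c   # every other byte becomes %XX
      } }
    END { printf "\n" }'
}

# Request side: build an authorization-code URL; the scopes are joined with spaces.
# usage: oidc_auth_url <keycloak-base-url> <realm> <client_id> <redirect_uri> <scope>...
oidc_auth_url() {
  local base=${1%/} realm=$2 client=$3 redirect=$4
  shift 4
  printf '%s/realms/%s/protocol/openid-connect/auth?response_type=code&client_id=%s&redirect_uri=%s&scope=%s\n' \
    "$base" "$realm" "$(urlencode "$client")" "$(urlencode "$redirect")" "$(urlencode "$*")"
}

# Response side: print a JWT's payload (its claims) as JSON, WITHOUT signature checks.
jwt_claims() {
  local payload
  payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')   # base64url -> base64
  case $(( ${#payload} % 4 )) in                              # restore stripped '=' padding
    2) payload="$payload==" ;;
    3) payload="$payload=" ;;
  esac
  printf '%s' "$payload" | base64 -d
}
```

Requesting `openid profile email` instead of just `openid` adds `name`, `given_name`, `family_name`, and `email` to the output of `jwt_claims "$ID_TOKEN" | jq .`, where `ID_TOKEN` is a hypothetical variable holding a token copied from a token response.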

OpenID Connect authentication (reference)

- SAML Authentication

- Pseudo-Authentication using OAuth

- OpenID Connect Authentication

Think of the sites X, Y, and Z as separate clients. Once you log in to one of them, that session lets you log in via SSO to any other client registered in the same realm.

Keycloak에서 세션 관련 동작 (참고)

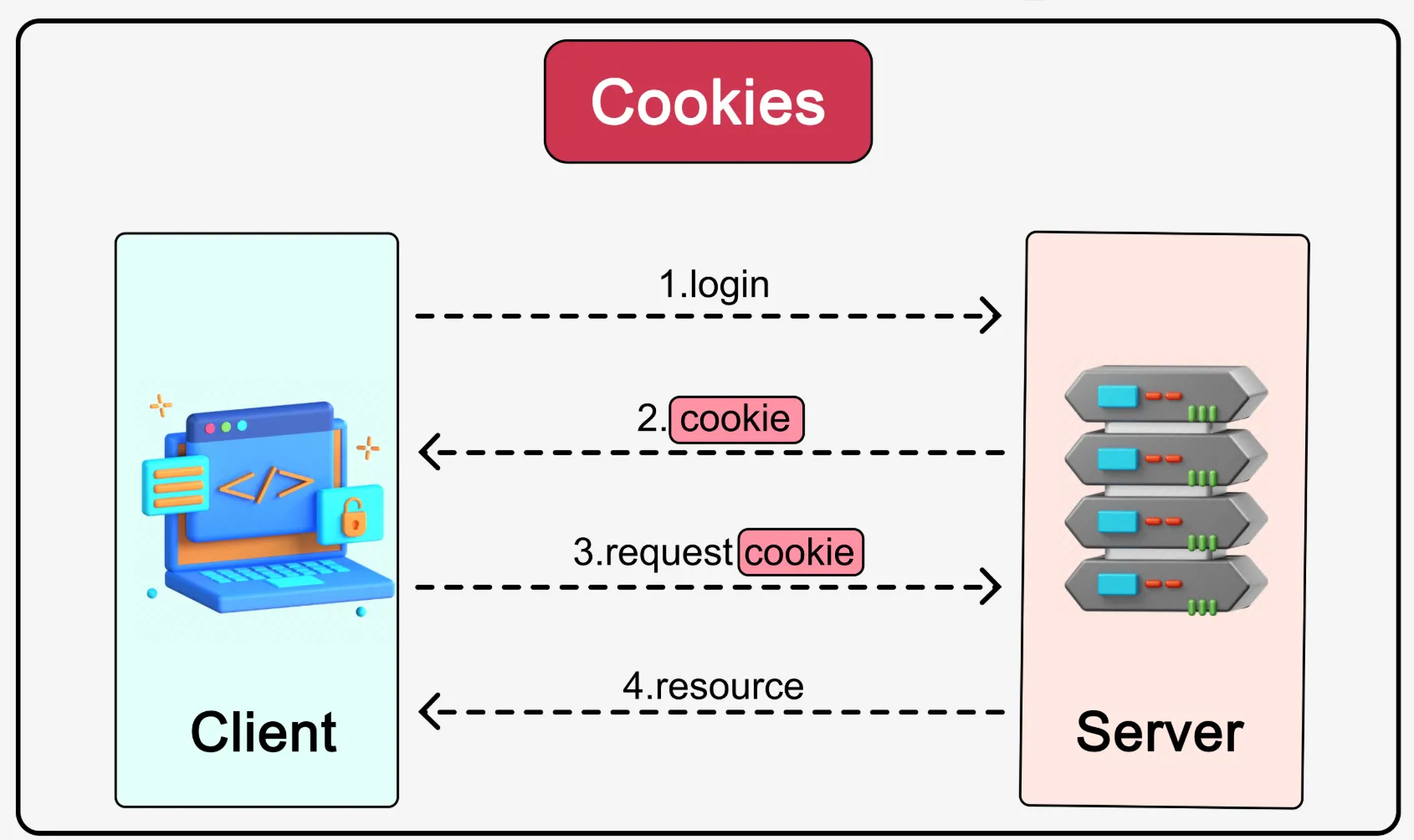

- 쿠키(Cookies)는 브라우저에서 관리

- 서버는 로그인 시 사용자 인증 정보를 쿠키에 직접 저장한다.

- 이후 브라우저는 요청마다 이 쿠키를 함께 전송하며, 서버는 쿠키를 검증해 인증 여부를 판단한다.

- 서버는 별도의 세션 상태를 저장하지 않는다. (stateless 구조)

- 보안상 위험: 쿠키 유출 시 곧바로 인증 정보가 탈취 가능!!

- 세션(Sessions)은 서버 측에서 관리

- 로그인 시 서버는 인증 정보를 검증하고, 서버 내부에 세션을 생성한다.

- 브라우저에는 인증 데이터 대신 세션 ID만 쿠키 형태로 저장한다.

- 이후 브라우저는 요청마다 이 세션 ID를 전송하고, 서버는 세션 저장소에 등록된 ID와 일치 여부를 확인해 인증을 유지한다.

- 서버가 세션의 수명과 만료를 제어하기 때문에 보안성이 훨씬 높음.

- Keycloak에서 동작 : KeyCloak은 HTTP의 stateless 특성을 보완하기 위해, 서버 측 세션 + 클라이언트 측 쿠키를 결합한 구조로 동작한다.

- 사용자가 로그인

- Keycloak 서버는 서버 내부에 세션(User Session)을 생성

- 브라우저에 KEYCLOAK_IDENTITY 쿠키를 발급 (세션 ID 포함)

- 브라우저가 요청을 보낼 때마다 쿠키를 전달하고, Keycloak은 이를 통해 세션을 식별

- Keycloak은 세션 ID와 일치 여부를 확인해 인증을 유지
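To make the server-side session model above concrete, here is a toy POSIX-shell sketch. It does not show how Keycloak actually stores sessions. A temp directory plays the server's session store, and the "browser" keeps only a random session ID. As a result, a forged ID finds nothing, and ending the session invalidates the ID immediately. All names are hypothetical.

```sh
SESSION_DIR=$(mktemp -d)   # toy server-side session store: one file per session ID

# Accept only plain lowercase-hex IDs, so an ID can never escape SESSION_DIR.
valid_sid() {
  case $1 in
    ''|*[!0-9a-f]*) return 1 ;;
  esac
}

# Login: create a session on the server and print its ID (the cookie value).
start_session() {
  local sid
  sid=$(od -An -N16 -tx1 /dev/urandom | tr -d ' \n')
  printf '%s\n' "$1" > "$SESSION_DIR/$sid"
  printf '%s\n' "$sid"
}

# Each request: the browser sends only the ID, and the server looks the user up.
lookup_session() {
  valid_sid "$1" && [ -f "$SESSION_DIR/$1" ] && cat "$SESSION_DIR/$1"
}

# Logout or expiry: the server deletes the session, so the old ID stops working.
end_session() {
  valid_sid "$1" && rm -f "$SESSION_DIR/$1"
}
```

In the pure-cookie model, by contrast, the user data itself would sit in the cookie, and the server would have nothing to delete on logout.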

LDAP (Lightweight Directory Access Protocol)

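- Put simply, it is a digital version of a ‘company address book + badge-issuing system’ (analogy by ChatGPT).

- LDAP = an “address book/org chart” that stores user, group, and permission data hierarchically (e.g., the directory in AD holding the records of 10,000 employees)

- Strictly speaking, LDAP is the standard protocol for accessing such a directory service

- In short: “a system that stores and looks up an organization's users, groups, permissions, etc. in a tree structure”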

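dc=example,dc=org   # Base DN (top of the tree) -> maps to a domain such as example.org
├── ou=people
│   ├── uid=alice
│   └── uid=bob
└── ou=groups
    ├── cn=devs
    └── cn=admins

- Each node is identified by its DN (Distinguished Name), i.e. its full path

- Each step of that path is an RDN (Relative Distinguished Name), so a DN is a comma-separated sequence of RDNs, from the entry itself up to the base

- Example) user alice

- DN: uid=alice,ou=people,dc=example,dc=org

- “uid=alice”, “ou=people”, “dc=example”, and “dc=org” are the RDNs in this DN; “uid=alice” is the RDN of the alice entry itself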

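The DN/RDN relationship can be shown with two small POSIX-shell helpers with hypothetical names. Splitting a DN on commas lists its RDNs from the entry up to the base, and dropping the first RDN yields the parent entry's DN. This is illustrative only: real DNs can contain escaped commas (for example `cn=Kim\, Alice`), which this naive split does not handle.

```sh
# List the RDNs of a DN, one per line (the first line is the entry's own RDN).
dn_rdns() {
  printf '%s\n' "$1" | tr ',' '\n'
}

# Parent DN = the DN with its first RDN removed; fails for a single-RDN DN.
dn_parent() {
  case $1 in
    *,*) printf '%s\n' "${1#*,}" ;;
    *)   return 1 ;;
  esac
}
```

For example, `dn_parent uid=alice,ou=people,dc=example,dc=org` prints `ou=people,dc=example,dc=org`, the DN of the OU that contains alice.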

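- LDAP server = the company's HR/security department

- A company has an HR department that keeps every employee's details (name, department, title, email, and so on) in one place.

- Likewise, an LDAP server is the central office that stores user, group, and permission data in a structure called a “directory”.

- Directory structure = the company org chart

- Just as a company has an org chart running headquarters–department–team–employee, LDAP stores information under hierarchical addresses such as “dc=company, ou=department, cn=user”.

- Client (application) = the employee-verification desk

- When someone logs in to a company system, the system asks HR (= the LDAP server), “Is this person one of our employees?”, and HR answers “yes/no” or “this person has these permissions”.

- Authentication = checking the ID card

- The system sends the username and password to the LDAP server to have them verified.

- Authorization = checking the access badge/permissions

- The LDAP server returns group or role information such as “this person is on the dev team” or “this person is an admin”, and the system grants access based on that.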

- A lightweight version of DAP (Directory Access Protocol) from X.500, the standard for network directory services.

- LDAP Flow

- Two information structures used in an LDAP directory - Blog

- Entry: the basic unit of information in the directory; an entry consists of multiple attributes.

- Attribute: a typed field of an entry; a single attribute can hold one or more values.

- Tools: Windows AD, OpenLDAP - Home, etc.

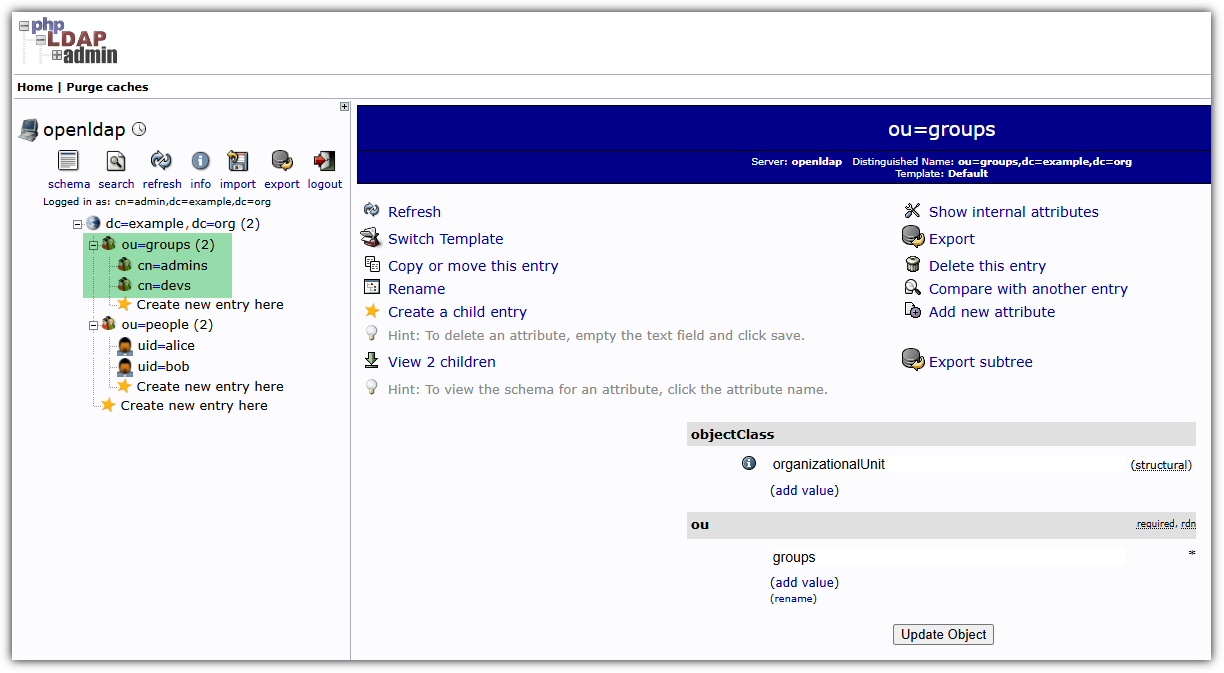
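The Entry/Attribute model, including multi-valued attributes, is easy to see in LDIF. The base entry printed later in this post carries three `objectClass` values. Below is a small awk-based sketch (the helper name is hypothetical) that prints every value of one attribute. It deliberately ignores some LDIF details: attribute names are matched case-sensitively, base64 values (`attr:: ...`) are skipped, and folded continuation lines are not joined.

```sh
# Print every value of one attribute from LDIF text on stdin.
# usage: ldif_get <attribute> < file.ldif
ldif_get() {
  awk -v a="$1" 'index($0, a ": ") == 1 { print substr($0, length(a) + 3) }'
}
```

With OpenLDAP's client, `ldapsearch -x -o ldif-wrap=no ... | ldif_get mail` avoids wrapped lines. Drop `-o ldif-wrap=no` if your client version does not support it.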

Deploying the OpenLDAP server

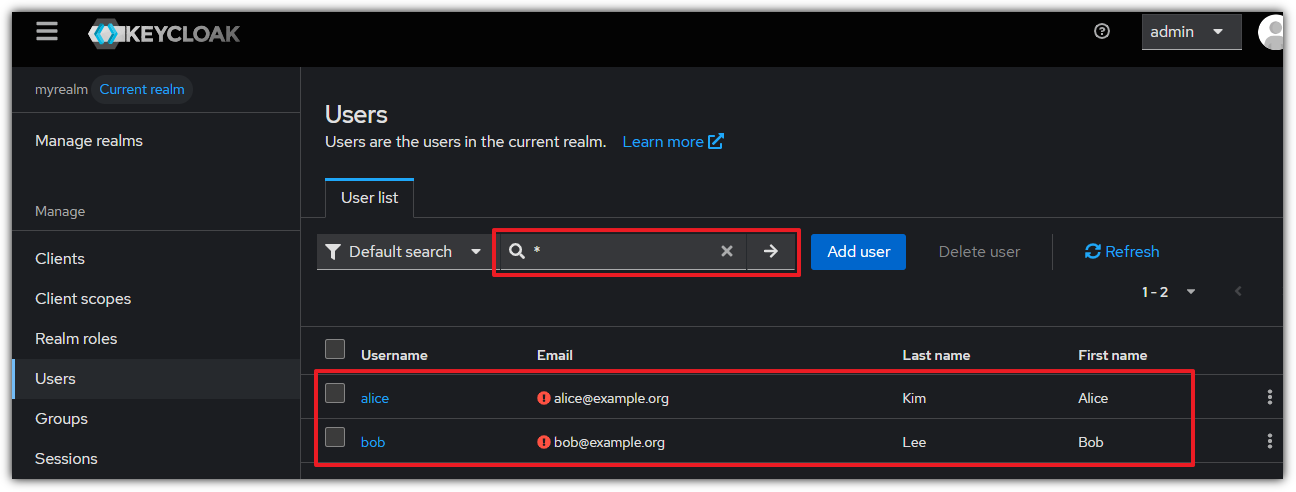

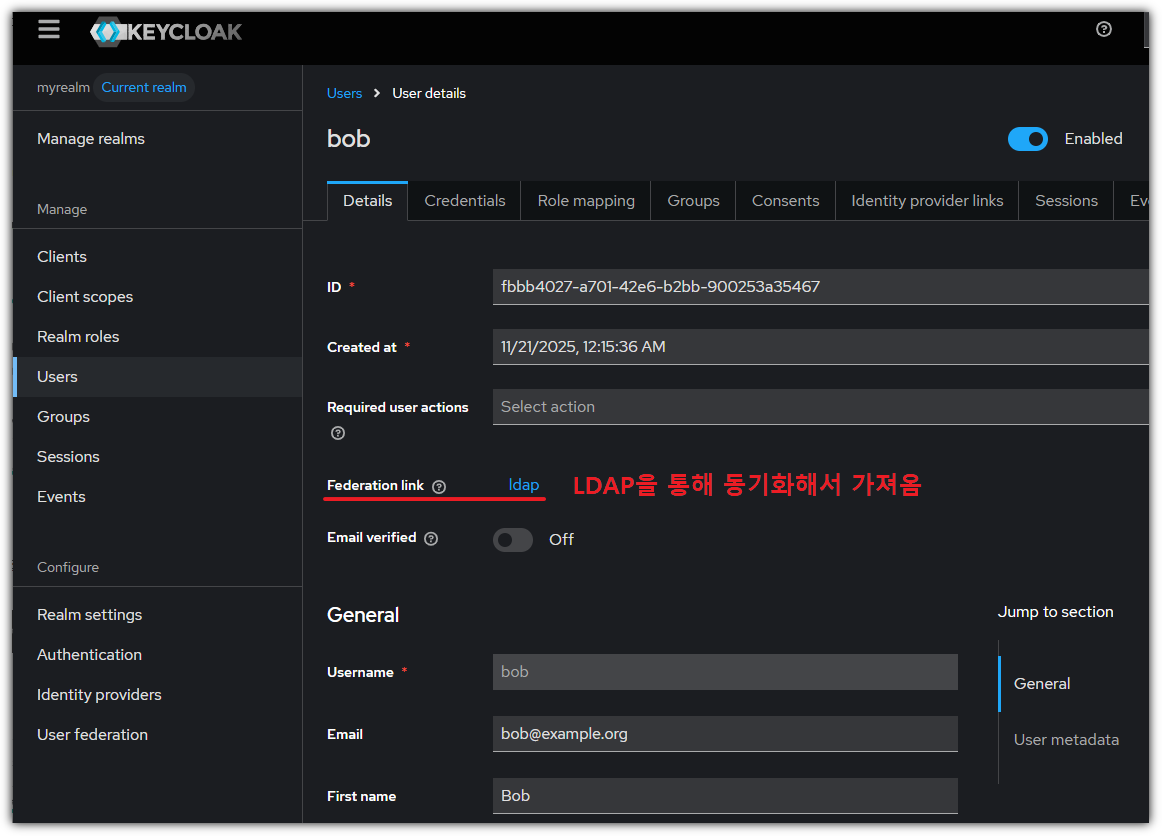

- Prep: sign alice out of Keycloak and delete the alice user (also log out of Jenkins and Argo CD)

- Deploy the OpenLDAP server

- Run OpenLDAP and phpLDAPadmin (the web UI) as two containers in a single Pod

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: Namespace
metadata:
  name: openldap
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: openldap
  namespace: openldap
spec:
  replicas: 1
  selector:
    matchLabels:
      app: openldap
  template:
    metadata:
      labels:
        app: openldap
    spec:
      containers:
        - name: openldap
          image: osixia/openldap:1.5.0
          ports:
            - containerPort: 389
              name: ldap
            - containerPort: 636
              name: ldaps
          env:
            - name: LDAP_ORGANISATION   # organization name, used when the base entries are created
              value: "Example Org"
            - name: LDAP_DOMAIN         # the base DN (dc=example,dc=org) is derived from this
              value: "example.org"
            - name: LDAP_ADMIN_PASSWORD # LDAP admin password
              value: "admin"
            - name: LDAP_CONFIG_PASSWORD
              value: "admin"
        - name: phpldapadmin
          image: osixia/phpldapadmin:0.9.0
          ports:
            - containerPort: 80
              name: phpldapadmin
          env:
            - name: PHPLDAPADMIN_HTTPS
              value: "false"
            - name: PHPLDAPADMIN_LDAP_HOSTS
              value: "openldap"   # LDAP hostname inside cluster
---
apiVersion: v1
kind: Service
metadata:
  name: openldap
  namespace: openldap
spec:
  selector:
    app: openldap
  ports:
    - name: phpldapadmin
      port: 80
      targetPort: 80
      nodePort: 30000
    - name: ldap
      port: 389
      targetPort: 389
    - name: ldaps
      port: 636
      targetPort: 636
  type: NodePort
EOF

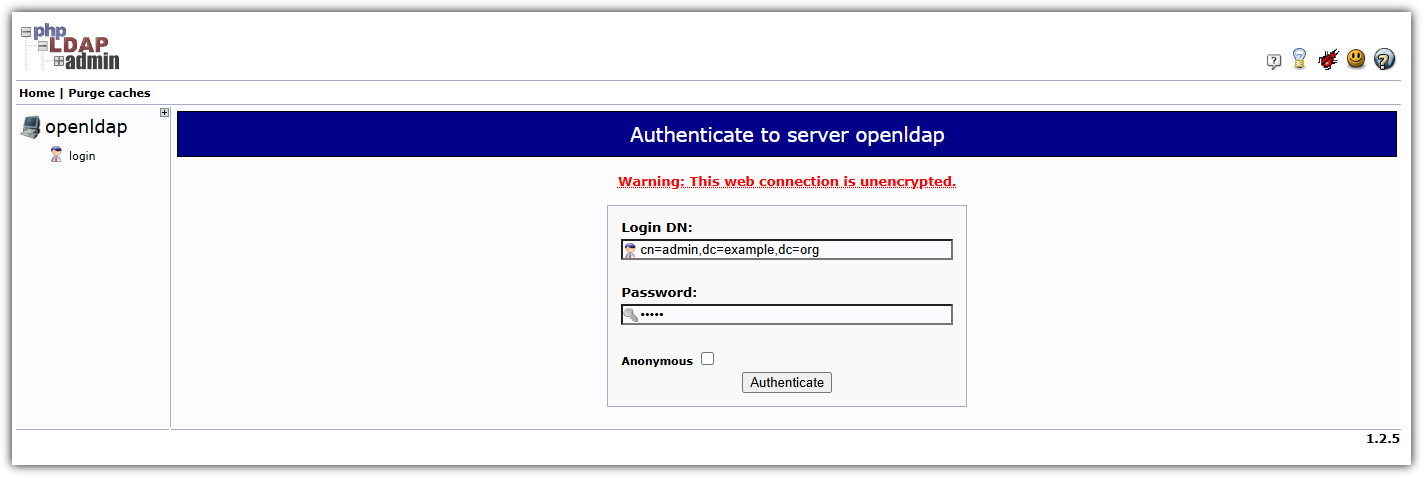

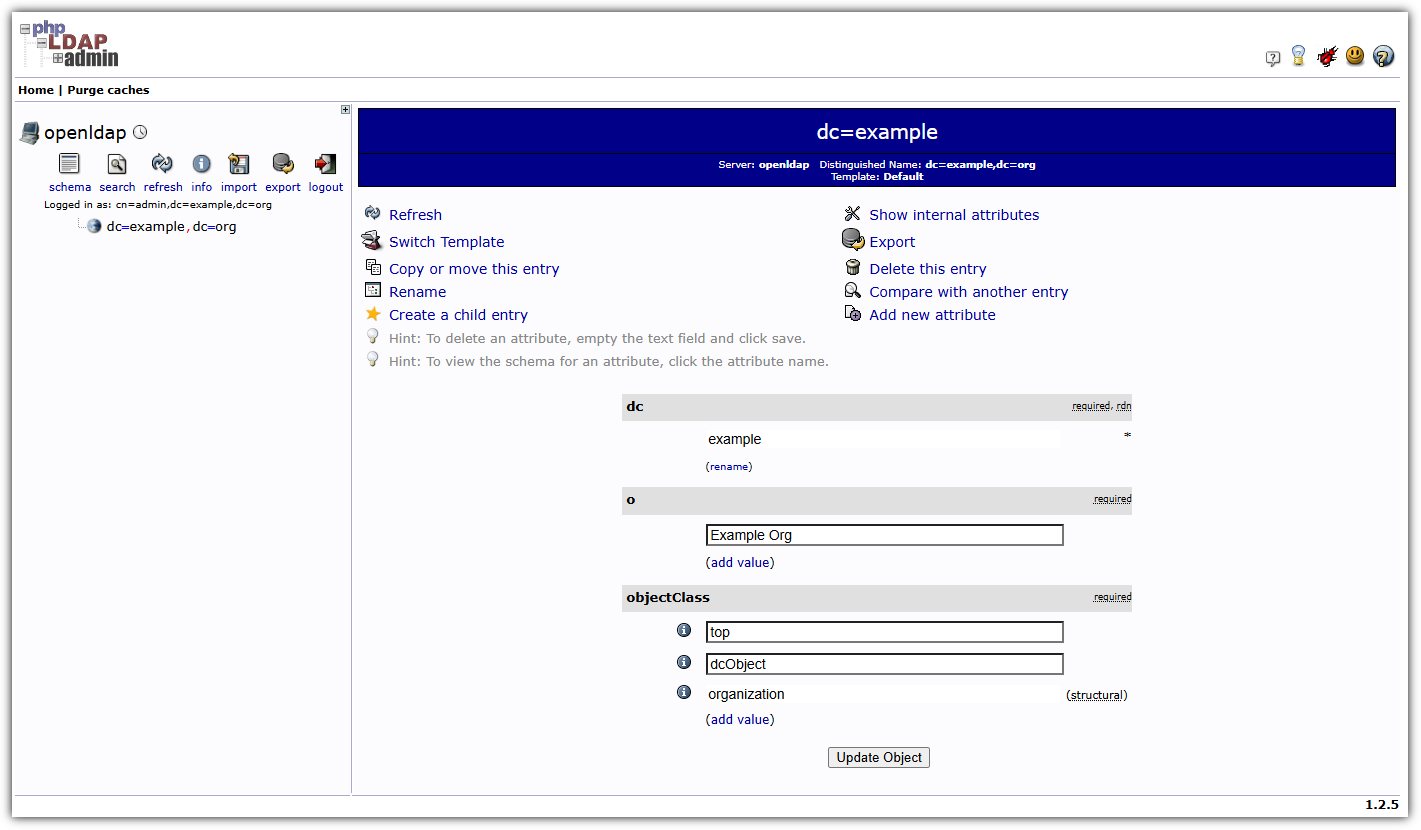

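- Open the LDAP web UI: http://127.0.0.1:30000

- Log in (login DN cn=admin,dc=example,dc=org, password admin as set in LDAP_ADMIN_PASSWORD)

Configuring OpenLDAP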

kubectl -n openldap exec -it deploy/openldap -c openldap -- bash
----------------------------------------------------

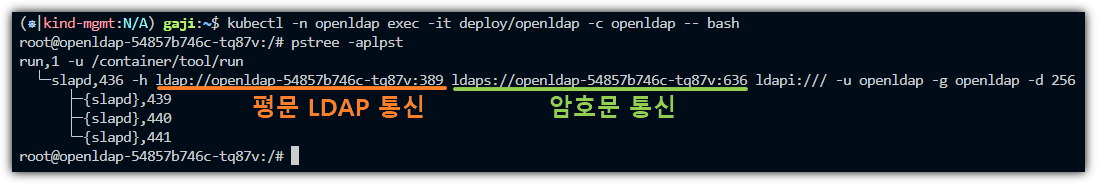

pstree -aplpst

run,1 -u /container/tool/run
  └─slapd,433 -h ldap://openldap-54857b746c-ch9g4:389 ldaps://openldap-54857b746c-ch9g4:636 ldapi:/// -u openldap -g openldap -d 256
      ├─{slapd},436
      └─{slapd},437

# Test admin authentication: on success, the base LDAP entry is printed
ldapsearch -x -H ldap://localhost:389 -b dc=example,dc=org -D "cn=admin,dc=example,dc=org" -w admin
# extended LDIF
#
# LDAPv3
# base <dc=example,dc=org> with scope subtree
# filter: (objectclass=*)
# requesting: ALL
#

# example.org
dn: dc=example,dc=org
objectClass: top
objectClass: dcObject
objectClass: organization
o: Example Org
dc: example

# search result
search: 2
result: 0 Success

# numResponses: 2
# numEntries: 1

# Final tree used in this lab (preview)
dc=example,dc=org
├── ou=people
│   ├── uid=alice
│   │   ├── cn: Alice
│   │   ├── sn: Kim
│   │   ├── uid: alice
│   │   └── mail: alice@example.org
│   └── uid=bob
│       ├── cn: Bob
│       ├── sn: Lee
│       ├── uid: bob
│       └── mail: bob@example.org
└── ou=groups
    ├── cn=devs
    │   └── member: uid=bob,ou=people,dc=example,dc=org
    └── cn=admins
        └── member: uid=alice,ou=people,dc=example,dc=org

# Add the OUs with ldapadd (organizationalUnit); a blank line separates LDIF records
cat <<EOF | ldapadd -x -D "cn=admin,dc=example,dc=org" -w admin
dn: ou=people,dc=example,dc=org
objectClass: organizationalUnit
ou: people

dn: ou=groups,dc=example,dc=org
objectClass: organizationalUnit
ou: groups
EOF
adding new entry "ou=people,dc=example,dc=org"

adding new entry "ou=groups,dc=example,dc=org"

# Add the users with ldapadd (inetOrgPerson): alice, bob
cat <<EOF | ldapadd -x -D "cn=admin,dc=example,dc=org" -w admin
dn: uid=alice,ou=people,dc=example,dc=org
objectClass: inetOrgPerson
cn: Alice
sn: Kim
uid: alice
mail: alice@example.org
userPassword: alice123

dn: uid=bob,ou=people,dc=example,dc=org
objectClass: inetOrgPerson
cn: Bob
sn: Lee
uid: bob
mail: bob@example.org
userPassword: bob123
EOF
adding new entry "uid=alice,ou=people,dc=example,dc=org"

adding new entry "uid=bob,ou=people,dc=example,dc=org"

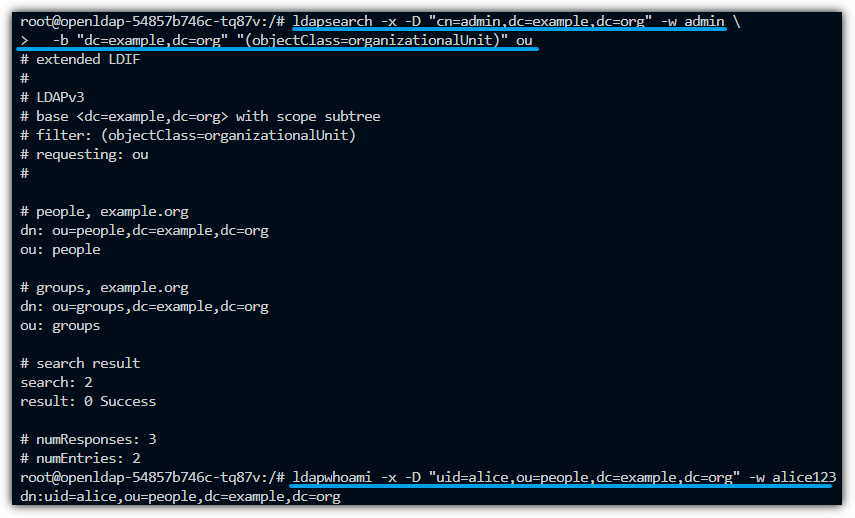
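The preview tree above puts bob in cn=devs and alice in cn=admins, and the group search that follows filters on `groupOfNames`. If those group entries still need to be created, below is a minimal sketch that generates their LDIF. The helper name is hypothetical, and the base DN is assumed to be dc=example,dc=org as in this lab. `groupOfNames` requires at least one `member`, so always pass one or more uids.

```sh
BASE_DN="dc=example,dc=org"

# Print one groupOfNames entry; each remaining argument is a uid under ou=people.
# usage: group_ldif <group-cn> <uid>...
group_ldif() {
  local cn=$1 uid
  shift
  printf 'dn: cn=%s,ou=groups,%s\n' "$cn" "$BASE_DN"
  printf 'objectClass: groupOfNames\n'
  printf 'cn: %s\n' "$cn"
  for uid in "$@"; do
    printf 'member: uid=%s,ou=people,%s\n' "$uid" "$BASE_DN"
  done
  printf '\n'   # a blank line terminates the LDIF record
}
```

Inside the container, `{ group_ldif devs bob; group_ldif admins alice; } | ldapadd -x -D "cn=admin,dc=example,dc=org" -w admin` would add both groups in one call.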

# ldapsearch: list the OUs
ldapsearch -x -D "cn=admin,dc=example,dc=org" -w admin \
  -b "dc=example,dc=org" "(objectClass=organizationalUnit)" ou

# ldapsearch: list the users
ldapsearch -x -D "cn=admin,dc=example,dc=org" -w admin \
  -b "ou=people,dc=example,dc=org" "(uid=*)" uid cn mail

# ldapsearch: check groups and their members
ldapsearch -x -D "cn=admin,dc=example,dc=org" -w admin \
  -b "ou=groups,dc=example,dc=org" "(objectClass=groupOfNames)" cn member

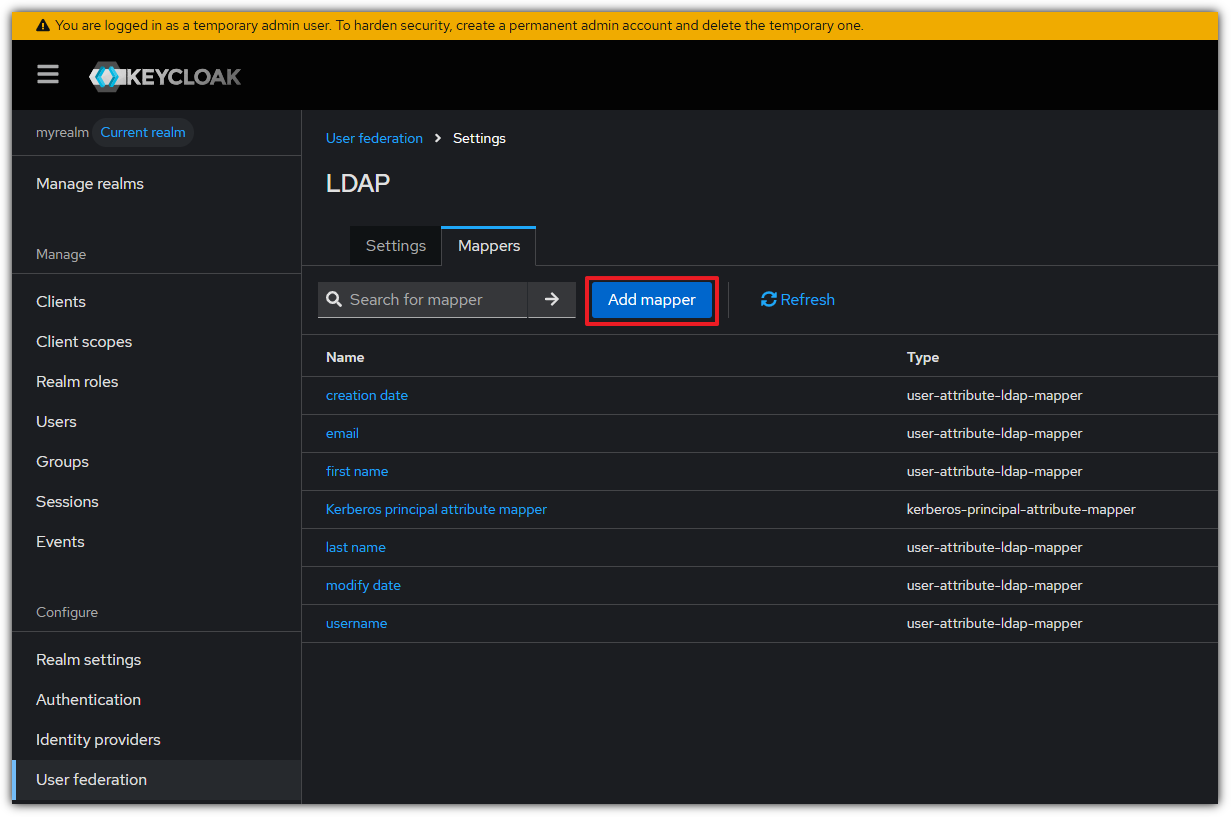
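The search filters above use fixed values. If a filter such as `(uid=...)` is ever built from user input, RFC 4515 requires `\`, `*`, `(`, and `)` to be escaped as `\5c`, `\2a`, `\28`, and `\29`. Below is a minimal sed-based sketch; the helper name is hypothetical. The backslash must be replaced first so the later escapes are not double-escaped. NUL also needs escaping per the RFC, but it cannot occur in a shell string anyway.

```sh
# Escape a value for use inside an LDAP search filter (RFC 4515).
ldap_filter_escape() {
  printf '%s\n' "$1" | sed -e 's/\\/\\5c/g' -e 's/\*/\\2a/g' -e 's/(/\\28/g' -e 's/)/\\29/g'
}
```

For example, with `uid=$(ldap_filter_escape "$input")`, the search `"(uid=$uid)"` matches the literal value and cannot be turned into a wildcard such as `(uid=*)`.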

# Test LDAP user authentication: on success, the base LDAP entry is printed